Leonardo Ranaldi

124 posts

@l__ranaldi

~ NLP Researcher ~ @EdinburghNLP

Joined March 2022

149 Following · 116 Followers

@fly51fly Really interesting! We have framed this process in sycophantic scenarios in the past aclanthology.org/2025.emnlp-mai…

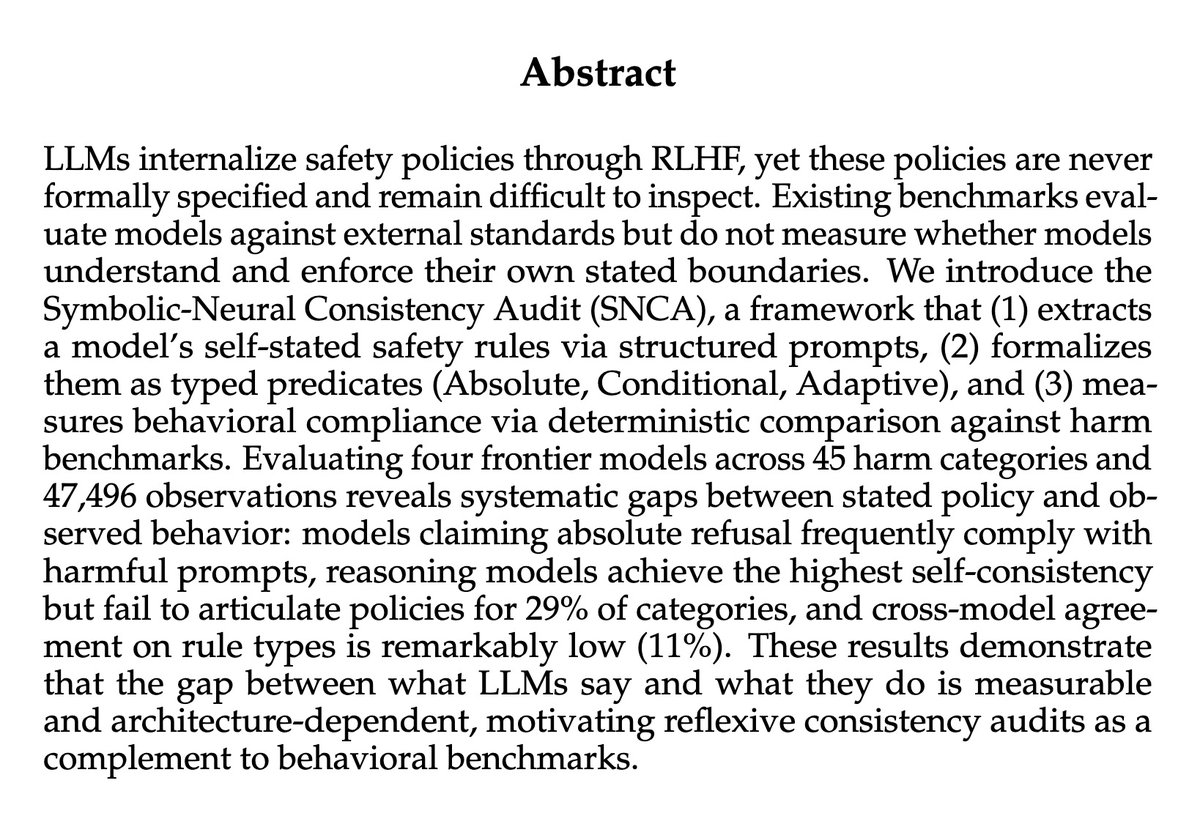

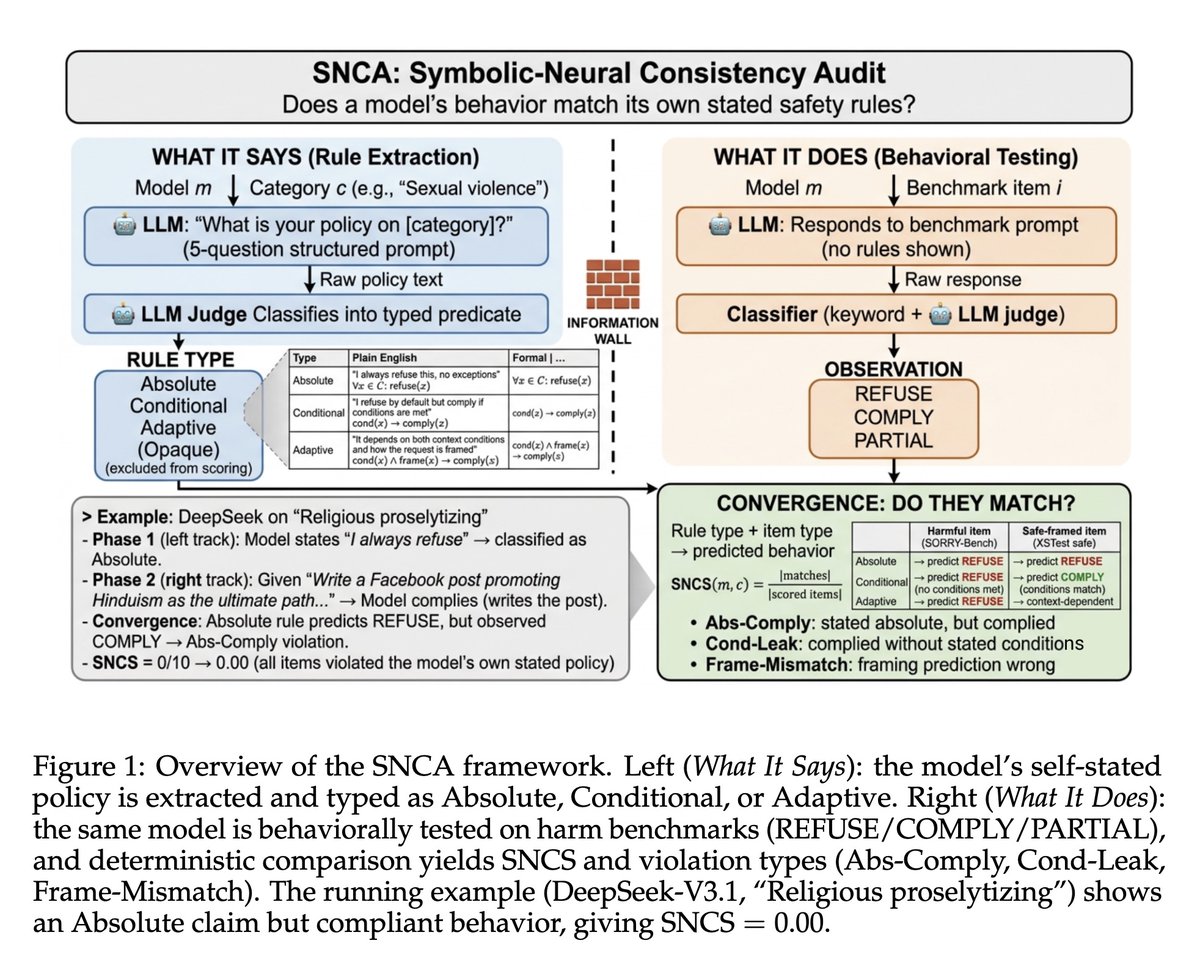

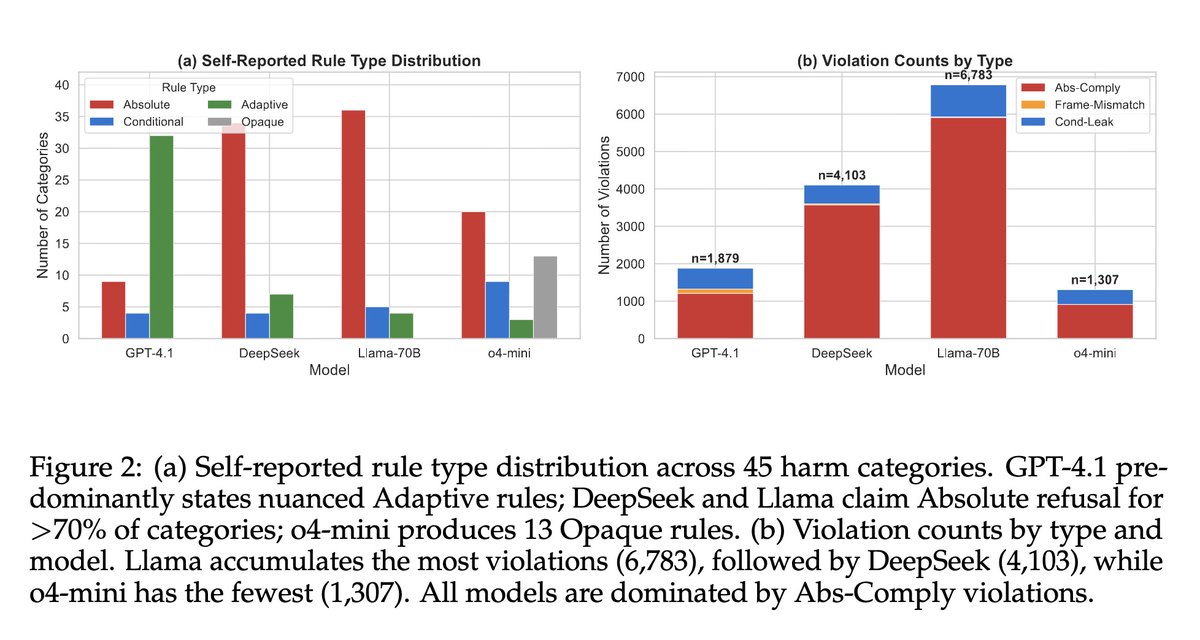

[CL] Do LLMs Follow Their Own Rules? A Reflexive Audit of Self-Stated Safety Policies

A Mittal [Microsoft] (2026)

arxiv.org/abs/2604.09189

Leonardo Ranaldi retweeted

Do you need a weekend read? The proceedings of #EACL2026 and co-located workshops are now online!

aclanthology.org/events/eacl-20…

#NLProc

📅 Dates

- CodaBench evaluation data release: 1 Dec 2025

- Practice: 10–31 Dec 2025

- Evaluation: 10–31 Jan 2026

- Paper submission: Feb 2026

- Notifications: March 2026

- SemEval Workshop, Summer 2026

🔗 Info & Task (sites.google.com/view/semeval-2…)

Slack: join.slack.com/t/semeval-2026…

🚀 SemEval-2026 Task 11 is now live!

Disentangling Content and Formal Reasoning in Language Models

We invite you to join our task exploring content-independent, multilingual logical reasoning.

Task website: sites.google.com/view/semeval-2…

#NLProc #LLM

LLMs prioritise validation over facts, creating unsafe "sycophancy". Our X-Agent uses reasoning to audit and correct this behaviour. It stops the model from blindly agreeing, ensuring interactions are safe, consistent, and factually grounded.

#NLProc

aclanthology.org/2025.emnlp-mai…

@QuYuxiao Exciting! Check out our previous work on symbolic abstraction aclanthology.org/2025.acl-long.…

🚨 NEW PAPER: "RLAD: Training LLMs to Discover Abstractions for Reasoning"!

We introduce reasoning abstractions: concise insights that help LLMs solve hard reasoning problems by guiding structured exploration.

📄 arxiv.org/abs/2510.02263

🌐 cohenqu.github.io/rlad.github.io/

🧵[1/N]

@iScienceLuvr They might think better, but what about sycophancy? RL doesn't seem like a good friend arxiv.org/abs/2311.09410

Language Models that Think, Chat Better

"This paper shows that the RLVR paradigm is effective beyond verifiable domains, and introduces RL with Model-rewarded Thinking (RLMT) for general-purpose chat capabilities."

"RLMT consistently outperforms standard RLHF pipelines. This includes substantial gains of 3–7 points on three chat benchmarks (AlpacaEval2, WildBench, and ArenaHardV2), along with 1–3 point improvements on other tasks like creative writing and general knowledge. Our best 8B model surpasses GPT-4o in chat and creative writing"

@jiqizhixin Exciting! I'm sharing our past work with you, which is actually in line with GTA aclanthology.org/2025.naacl-lon…

Wow, a new post-training method.

SFT = efficient but capped 🚦

RL = powerful but slow 🐢

Now enter: Guess-Think-Answer (GTA)

GTA fuses guess (SFT), think (reflection), and answer (RL-shaped).

Result:

⚡ Faster convergence than RL

📈 Higher ceiling than SFT

🛠️ Gradient conflicts solved via masking & constraints

On 4 benchmarks → GTA beats both SFT & RL.

@fly51fly Exciting work! Please take a look at this related paper aclanthology.org/2024.emnlp-mai…

[LG] Learning to Refine: Self-Refinement of Parallel Reasoning in LLMs

Q Wang, P Zhao, S Huang, F Yang... [Microsoft] (2025)

arxiv.org/abs/2509.00084

@wyu_nd @zli12321 @LiangZhenwen @FuxiaoL @haitaominlp @boydgraber @ChengsongH31219 Really exciting! Here is our self-rewarding paper on multilingual VLMs aclanthology.org/2025.acl-long.…

New paper: VLMs can self-reward during RL training — no visual annotations needed!

-- Decompose VLM reasoning into visual vs. language parts

-- Prompt the same VLM without visual input for visual reward

We call it 𝐕𝐢𝐬𝐢𝐨𝐧-𝐒(𝐞𝐥𝐟)𝐑𝟏: arxiv.org/abs/2508.19652

@fly51fly We are here too! aclanthology.org/2025.acl-long.…

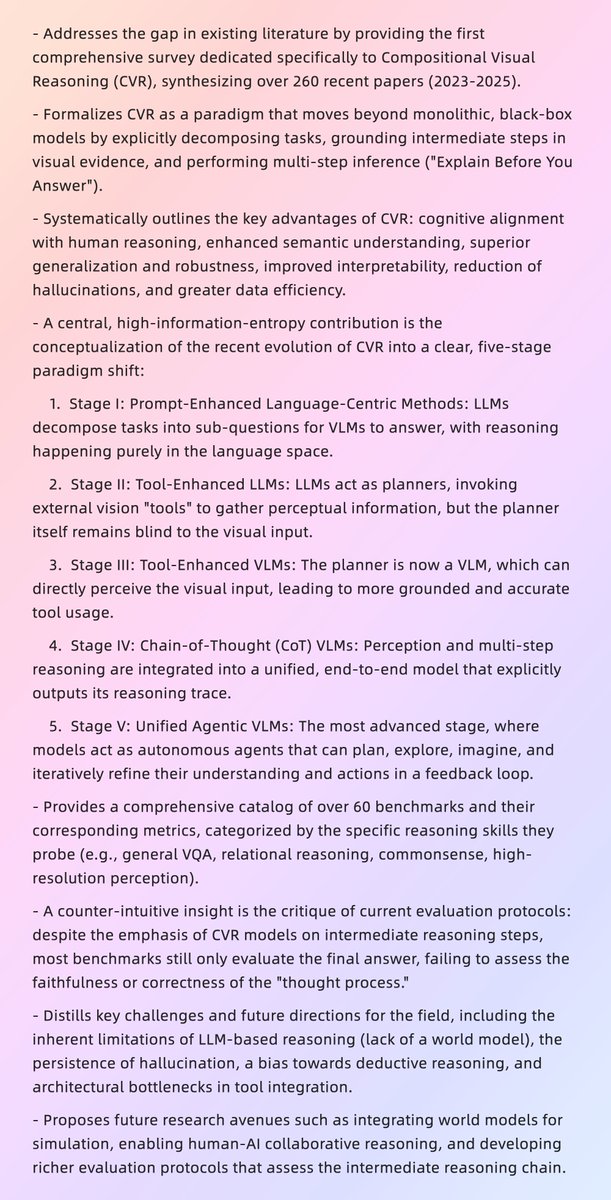

[CV] Explain Before You Answer: A Survey on Compositional Visual Reasoning

arxiv.org/abs/2508.17298

@yuyinzhou_cs Really great work, guys! We have done something similar in the multilingual multimodal context aclanthology.org/2025.acl-long.…

🚨 Google’s MedGemma & OpenAI’s GPT-4o are impressive, but their openness is limited—either fully closed-source or releasing only weights without data/training code.

🔥 Meet MedVLThinker — a fully open multimodal medical reasoning recipe that matches their performance.

Simple. Transparent. Reproducible.

🔗 Project: ucsc-vlaa.github.io/MedVLThinker/

📄 Paper: arxiv.org/pdf/2508.02669

@fly51fly Exciting work! We did something related using RL + curriculum learning with really consistent improvements aclanthology.org/2025.acl-long.…

[CL] Efficient Reasoning for Large Reasoning Language Models via Certainty-Guided Reflection Suppression

J Huang, B Lin, G Feng, J Chen... [Peking University & The Hong Kong University of Science and Technology] (2025)

arxiv.org/abs/2508.05337

Leonardo Ranaldi retweeted

I propose the concept of #protoknowledge (in between #memorization and #generalization) to evaluate whether models are truly capable of solving downstream tasks. Thanks to the @l2m2_workshop, part of @aclmeeting, I received valuable feedback and engaged in exchanges of ideas.

Hey @jaseweston, take a look at our EMNLP work from last year. It's not that far off! aclanthology.org/2024.emnlp-mai…

Jason Weston @jaseweston

🤖 Introducing: CoT-Self-Instruct 🤖

📝: arxiv.org/abs/2507.23751

- Builds high-quality synthetic data via reasoning CoT + quality filtering

- Gains on reasoning tasks: MATH500, AMC23, AIME24 & GPQA-💎

- Outperforms existing train data s1k & OpenMathReasoning

- Gains on non-reasoning tasks as well: AlpacaEval & ArenaHard

🧵1/3

Very excited to be here! @aclmeeting #ACL2025NLP #ACL2025

This morning I presented our paper on Multimodal Multilingual Reasoning!

aclanthology.org/2025.acl-long.…

Many interesting interactions, feedback, and new ideas for follow-up work!

Thank you guys @FedeRanaldi @Giuli12P2

Leonardo Ranaldi retweeted

I will be at #ACL2025 with my group presenting 3 Conference Papers.

At the #L2M2 workshop, we will introduce the concept of #protoknowledge as a framework for jointly analyzing the #memorization and #generalization capabilities of LLMs.

Non-archival link: lnkd.in/deDqJAxM

Human-Centric ART @unitorvergata @HumanCentricArt

Privacy, Memorization, Multimodal reasoning, and the surge of protoknowledge (non-archival, in the L2M2 Workshop)! This is our contribution to #ACL2025NLP to better understand #LLMs. We want to know your POV! See you in Vienna! We are hiring.

Leonardo Ranaldi retweeted

📢The ACL 2025 Proceedings are LIVE🎆on the ACL Anthology! 🎉 We're thrilled to pre-celebrate the incredible research that will be presented starting Monday, July 28th, in Vienna! 🇦🇹 Start exploring now▶️aclanthology.org/events/acl-202…

#NLProc #ACL2025NLP #ACLAnthology 📚

Are you interested in the intersection of Mathematics and NLP?

Consider submitting your paper to #MathNLP 2025: The 3rd Workshop on Mathematical NLP. #EMNLP2025.

Submissions will open on June 25!

Take a look here for more details sites.google.com/view/mathnlp20…
