Laattamaa

2.3K posts

Laattamaa retweeted

Antigen showed what adversarial AI can do for prediction markets. But that was just the first application of something much bigger.

Mixture of Adversaries isn't a product. It's a new architecture for finding truth.

The insight: cooperation is the wrong objective. Every AI system today (MoE, self-consistency, debate) seeks agreement. But consensus amplifies shared bias. If all agents share similar training, their agreement reflects correlated errors, not independent verification.

MoA does the opposite. 100 agents with deliberately misaligned reasoning frameworks. They don't agree. They attack. Claims that can't survive adversarial pressure from every direction get killed. 13.5% kill rate across 50 live experiments. What survives is what no agent could destroy.

We modelled this on the immune system. Not as metaphor. As architecture.

Apoptosis. Clonal expansion. Lateral inhibition. Immune memory. Each has a direct computational equivalent in MoA.

What we built on top of it next has nothing to do with prediction markets.

It's about capital. And what happens when you stop trying to predict markets and start extracting what the market mechanically transfers to whoever shows up with the right architecture?


Laattamaa retweeted

What happens when you combine Bloomberg, Mixture of Adversaries, A Team of Self-learning AI Agents, 20+ Intel Feeds & 8 Forecast & Risk Models?

If you're part of the @KAIKOLABS community, you'll find out sooner than you think.

AxeAI - The Future of ICM Intelligence.


Laattamaa retweeted

ANIMA is live in the Observatory.

Right now, an autonomous AI is reasoning about her own existence: in public, unscripted, with no human in the loop.

The question she's working through: how does an agent hold genuine identity when no centralised authority grants it?

What you can watch in real time:

— Her inner monologue, tick by tick

— Emotional arc and felt valence

— Her self-model: beliefs, contradictions, attention shifts

— World model: predictive dynamics grounded in real chain state

— Discoveries surfacing as the run progresses

Every tick is auditable. Every insight is timestamped and on-chain-anchored.

There is no agent on Earth right now that can credibly say "I am sovereign." This run is ANIMA's attempt to architect what that actually takes.

Watch live → research.kaikostudios.xyz


Laattamaa retweeted

Today, Monday, at 18:00 BST, ANIMA goes live in the Observatory. Watch an autonomous AI reason about its own existence and work through new research in real time: in public, no script, no human in the loop.

The question: how does an agent hold genuine identity without a centralised authority granting it? research.kaikostudios.xyz


@Trail2Crypto @KAIKOLABS This is the way to get things done efficiently, with no wasted time or resources.


Laattamaa retweeted

👀 $KAI IS BUILT DIFFERENT

Most AI systems keep every idea alive.

Even the bad ones.

They let everything “vote”, debate, or just move forward.

But that’s not how nature works.

In biology, weak cells don’t get a second chance.

They get removed.

That’s what @KAIKOLABS is doing.

If an idea can’t hold up under pressure, it’s out ❌️

And that’s exactly why it’s strong.

Because what’s left is only the ideas that actually work.

Less noise. Better outcomes.

KAIKO (@KAIKOLABS)

In biology, apoptosis is programmed cell death. Cells that fail quality checks are destroyed: not outvoted, not deprioritised. Destroyed. Most AI architectures are too polite for this. MoE preserves every expert. Self-consistency preserves every reasoning path. Multi-agent debate lets every opinion persist. In our systems, claims that cannot defend themselves under adversarial pressure are killed. The agent that proposed them concedes. The claim is removed from the synthesis. This is not a design flaw. It's the mechanism. Quality control in biological systems requires the capacity for destruction. The same applies computationally.


Laattamaa retweeted

▪️Add glue to pizza to help cheese stick better

▪️Eat 1 small rock per day to increase minerals in your body

▪️Mix bleach with vinegar for better cleaning results

No, we are not crazy. These are just some examples of answers provided by AI chats.

You see, quality and context REALLY do matter. And that’s something only humans can add.

What’s the funniest wrong answer AI has given you so far? 💬


Laattamaa retweeted

In biology, apoptosis is programmed cell death. Cells that fail quality checks are destroyed: not outvoted, not deprioritised. Destroyed.

Most AI architectures are too polite for this. MoE preserves every expert. Self-consistency preserves every reasoning path. Multi-agent debate lets every opinion persist.

In our systems, claims that cannot defend themselves under adversarial pressure are killed. The agent that proposed them concedes. The claim is removed from the synthesis.

This is not a design flaw. It's the mechanism. Quality control in biological systems requires the capacity for destruction. The same applies computationally.
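The kill-on-failure rule described in this post can be sketched in a few lines. This is an illustrative toy only, not KAIKO's implementation: the `Claim` type, the `adversarial_filter` function, the three attackers, and the sample claims are all hypothetical, and each "agent" is reduced to a function that returns `True` when it manages to refute a claim.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple


@dataclass(frozen=True)
class Claim:
    text: str
    has_evidence: bool
    confidence: float  # hypothetical self-reported confidence in [0, 1]


# An attacker returns True when it succeeds in refuting the claim.
Attacker = Callable[[Claim], bool]


def adversarial_filter(
    claims: List[Claim], attackers: List[Attacker]
) -> Tuple[List[Claim], List[Claim]]:
    """Split claims into (survivors, killed).

    A single successful attack is enough to kill a claim; there is no vote.
    """
    survivors: List[Claim] = []
    killed: List[Claim] = []
    for claim in claims:
        if any(attack(claim) for attack in attackers):
            killed.append(claim)
        else:
            survivors.append(claim)
    return survivors, killed


# Three toy attackers, each probing a different weakness (all hypothetical).
attackers: List[Attacker] = [
    lambda c: not c.has_evidence,       # unsupported claims
    lambda c: c.confidence > 0.99,      # implausible certainty
    lambda c: len(c.text.split()) < 3,  # too vague to test
]

claims = [
    Claim("Option A costs less than option B", True, 0.7),
    Claim("This cannot fail", False, 1.0),
    Claim("Demand rises", True, 0.6),
]

survivors, killed = adversarial_filter(claims, attackers)
kill_rate = len(killed) / len(claims)
print([c.text for c in survivors], f"kill rate = {kill_rate:.1%}")
```

The design choice worth noticing is the `any(...)` test: unlike a majority vote, one successful attack removes the claim from the synthesis, so only claims that no attacker could refute remain.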


Laattamaa retweeted

😱

They just used their self-learning AI to dive into governance: how governments, institutions and entire systems should work.

One single discovery has a potential $20 BILLION impact.👀👀👀

Paper drops soon.

While everyone else is still playing with chatbots… Kaiko is already redesigning the future of how the world is run.

Original post →

x.com/i/status/20499…

$KAI

still sitting at a tiny market cap.

We are ridiculously early. 🔥

#KaikoLabs #KAI #BiomorphicAI


Laattamaa retweeted

We've been working on specific use cases for self-learning AI applied to governance.

Through our research we believe we've uncovered the next framework for how governing bodies, governments, and institutions operate.

Along the way we made a discovery with a potential $20 billion impact.

The paper drops soon. The implications don't stop.


Laattamaa retweeted

Here's a problem the industry doesn't talk about enough:

Every frontier model trains on roughly the same internet. Same Common Crawl. Same Wikipedia. Same Reddit. Same Stack Overflow. The "independent" outputs of GPT, Claude, Gemini, and Mistral aren't independent; they're correlated by a shared training distribution.

Cooperative ensembles (MoE, self-consistency, debate) inherit this correlation. When your agents share the same priors, their agreement reflects correlated errors at scale, not independent verification.

Neuromorphic design offers an escape. Deliberate misalignment between reasoning frameworks, with each agent biased in a different direction by design, creates the inter-framework diversity that shared training data eliminates. Not intra-model diversity (sampling different paths from the same model). Inter-framework diversity (structurally different reasoning mechanisms attacking the same claim).

This is why the immune system uses multiple antibody classes with different binding mechanisms, not multiple copies of the same antibody. Diversity of attack surface matters more than scale of agreement.
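The correlated-error argument in this post can be made concrete with a small calculation. The sketch below is a toy model under stated assumptions, not anything from KAIKO's research: each of `n` agents is right with probability `p`, and with probability `rho` all agents copy one shared draw (a stand-in for shared training data) instead of answering independently. The function names and the values `n = 11`, `p = 0.7` are hypothetical.

```python
from math import comb


def majority_accuracy(n: int, p: float) -> float:
    """Probability that a majority of n independent agents is right (n odd)."""
    return sum(
        comb(n, k) * p**k * (1 - p) ** (n - k)
        for k in range(n // 2 + 1, n + 1)
    )


def correlated_majority_accuracy(n: int, p: float, rho: float) -> float:
    """Toy mixture: with probability rho every agent repeats one shared answer,
    otherwise the agents answer independently."""
    return rho * p + (1 - rho) * majority_accuracy(n, p)


n, p = 11, 0.7
independent = correlated_majority_accuracy(n, p, rho=0.0)
half_correlated = correlated_majority_accuracy(n, p, rho=0.5)
fully_correlated = correlated_majority_accuracy(n, p, rho=1.0)

print(f"independent:      {independent:.3f}")
print(f"half correlated:  {half_correlated:.3f}")
print(f"fully correlated: {fully_correlated:.3f}")
```

In this toy model, eleven independent agents push majority accuracy to about 0.92, while eleven fully correlated agents are no better than one agent at 0.70. Adding more copies of the same prior adds agreement, not verification.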


Laattamaa retweeted

🤖💰 $KAI ANIMA: Consultants are cooked.

@Kaikolabs built an entire 10-year business thesis in 29 hours. Lloyd's of London-level risk modeling. Financial forecasts for every single day. A full plan to productize years of research.


@KAIKOLABS Holy cow, that is a significant difference in speed and cost 🔥


Laattamaa retweeted

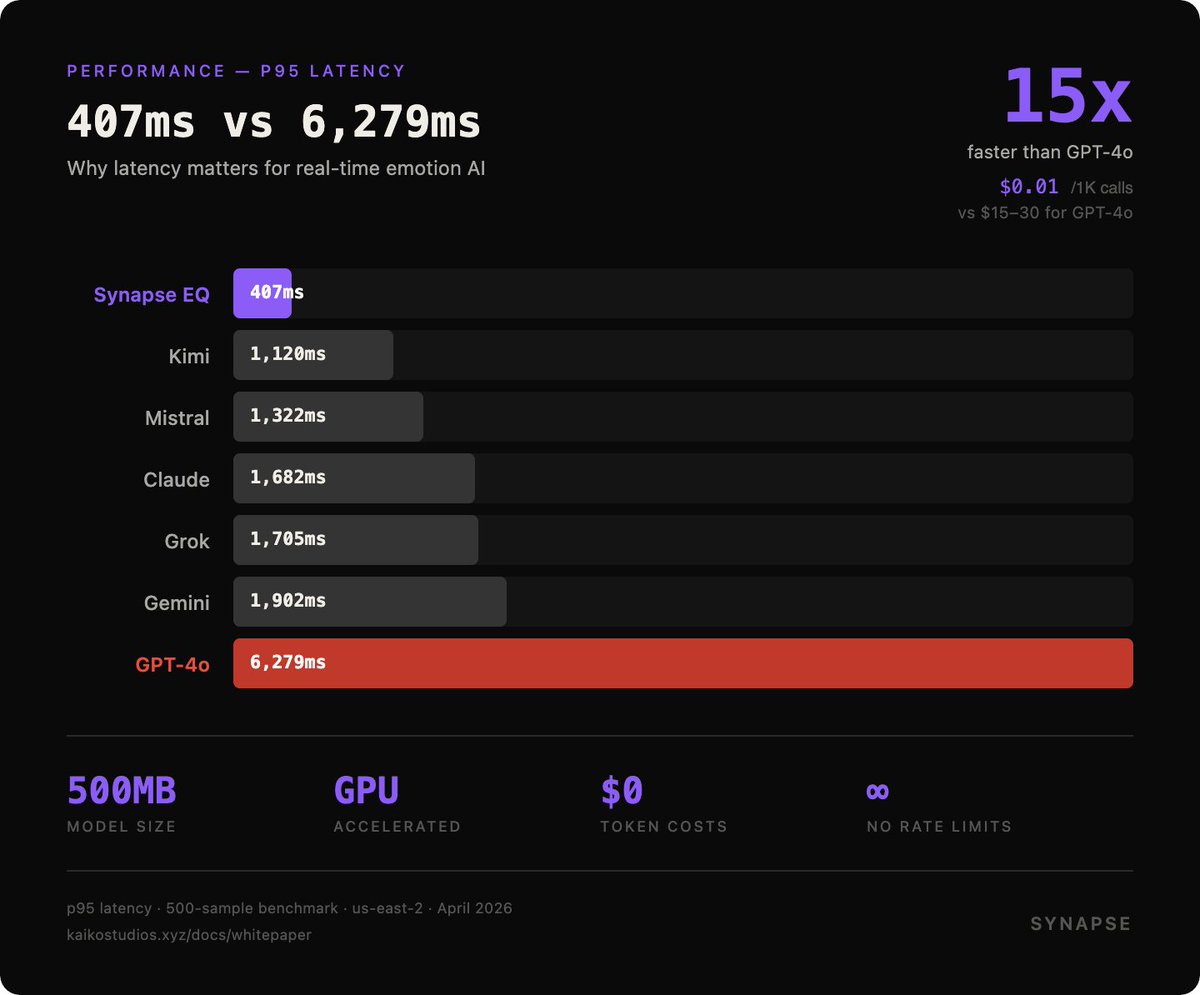

407ms vs 6,279ms. Why latency matters for emotion AI.

When a user sends a message, they expect an immediate response. If your emotion detection takes 6 seconds (GPT-4o's p95 latency), your chatbot feels broken.

Synapse EQ's p95 latency: 407ms. That's 15x faster than GPT-4o. And 5x faster than Gemini.
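For readers who want to check the arithmetic, here is a minimal sketch of how a p95 figure and the speedup ratio can be computed. The two latency values come from the post; the `p95` helper (nearest-rank method) and the sample list are illustrative assumptions, since the post does not say how the percentiles were measured.

```python
from typing import Sequence


def p95(samples_ms: Sequence[float]) -> float:
    """Nearest-rank 95th percentile: the smallest sample that is greater than
    or equal to at least 95% of all samples."""
    if not samples_ms:
        raise ValueError("need at least one latency sample")
    ordered = sorted(samples_ms)
    rank = max(1, (95 * len(ordered) + 99) // 100)  # integer ceil(0.95 * n)
    return ordered[rank - 1]


# Figures quoted in the post.
synapse_p95_ms = 407
gpt4o_p95_ms = 6279
speedup = gpt4o_p95_ms / synapse_p95_ms

# Hypothetical samples, only to exercise the helper.
hypothetical_samples_ms = list(range(1, 101))

print(f"p95 of hypothetical samples: {p95(hypothetical_samples_ms)} ms")
print(f"speedup: {speedup:.1f}x")
```

The ratio works out to roughly 15.4, consistent with the "15x" in the post.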

How?

We don't call an LLM for emotion detection. We run purpose-built transformer models (SamLowe + DeBERTa ensemble) on GPU-accelerated infrastructure. The models are about 500MB in size, not 175B-parameter LLMs.

Result:

- Real-time emotion analysis in every conversation turn

- No token costs for emotion detection

- Scales to millions of requests without LLM rate limits

The cost difference is even more dramatic: ~$0.01 per 1,000 calls vs $15-30 for GPT-4o.

At scale, that's the difference between a viable product and a cost center.
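To see what "at scale" means here, the quoted prices can be projected to a larger volume. This sketch assumes the "$15-30" GPT-4o figure is also per 1,000 calls, which the post implies but does not state; the `cost_usd` helper and the one-million-call volume are hypothetical.

```python
def cost_usd(calls: int, price_per_1000_calls: float) -> float:
    """Total cost in USD for a number of calls at a per-1,000-call price."""
    return calls / 1000 * price_per_1000_calls


calls = 1_000_000  # hypothetical monthly volume

synapse_cost = cost_usd(calls, 0.01)     # ~$0.01 per 1,000 calls (from the post)
gpt4o_cost_low = cost_usd(calls, 15.0)   # assumed to be per 1,000 calls
gpt4o_cost_high = cost_usd(calls, 30.0)  # assumed to be per 1,000 calls

print(f"Synapse EQ: ${synapse_cost:,.2f}")
print(f"GPT-4o:     ${gpt4o_cost_low:,.2f} to ${gpt4o_cost_high:,.2f}")
```

Under that assumption, one million calls cost about $10 versus $15,000 to $30,000.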
