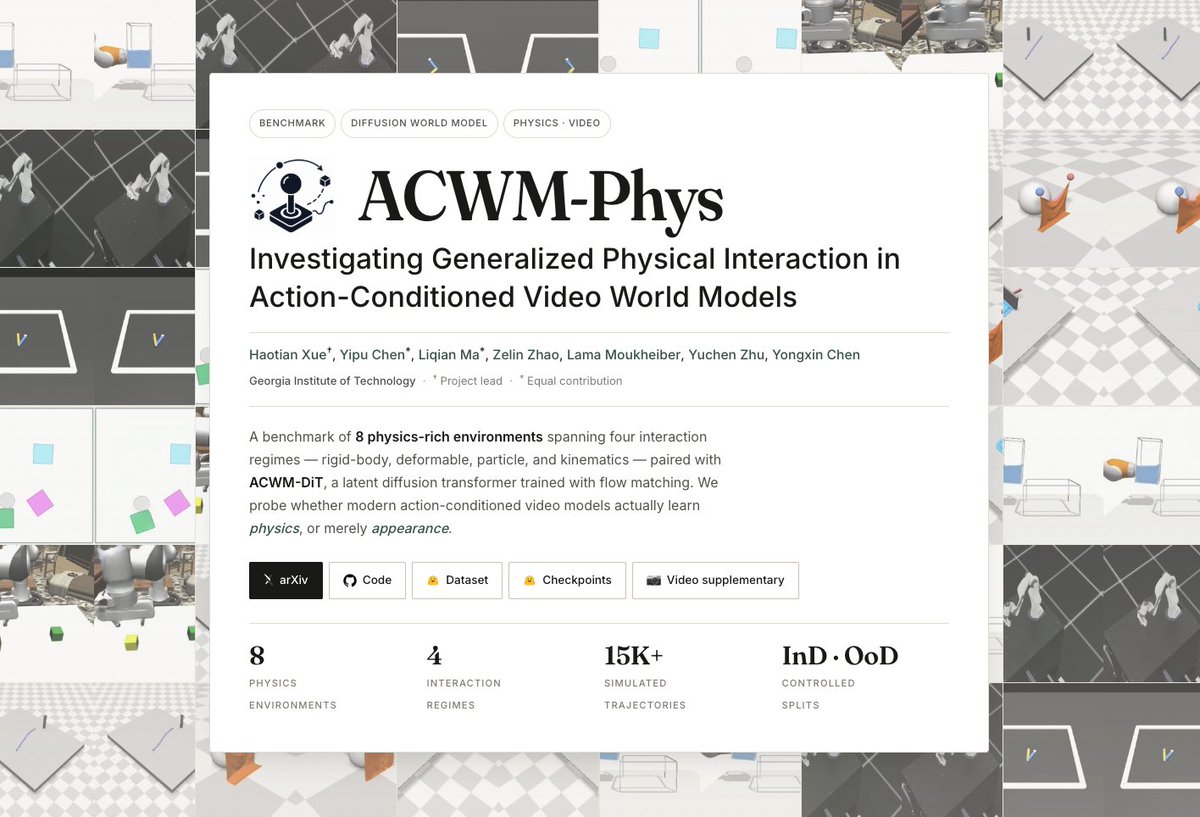

🚀 We are excited to release ACWM-Phys: a Physics-rich investigation into Action-Conditioned video World Models (ACWMs)!

While most world-model research today focuses on ego-view game play or narrow robot-arm manipulation, we ask two questions:
❓ How well can ACWMs learn different types of physics, e.g. rigid bodies, deformables, particles, and kinematics?
❓ Can they actually generalize beyond the training distribution?

We collect 15K+ simulated trajectories across 8⃣ environments spanning 4⃣ physics regimes (rigid contact🧊, particle dynamics🌊, kinematics🦾, and deformable contact🧥), each with a controlled, physically meaningful in-distribution (InD) ↔ out-of-distribution (OoD) split (unseen cube counts, larger cloth, doubled particle counts, expanded workspaces, …).

We train ACWM-DiT, a latent diffusion transformer trained with flow matching, and find a clear pattern: simple low-dimensional geometry generalizes cleanly, but contact-rich deformation, particle dynamics, and high-DoF kinematics break down. Current ACWMs still capture visual statistics, not physical laws.

We also ran ablations to draw insights about model architecture, data scaling, and action complexity.

The datasets and checkpoints for all 8 environments have been publicly released:
📃Paper: arxiv.org/pdf/2506.01392
📘Page: xavihart.github.io/ACWM-Phys/
🐙Code: github.com/xavihart/ACWM-…
📠Dataset: huggingface.co/datasets/t1an/…
🤗Checkpoints: huggingface.co/t1an/ACWM-Phys…

Also, shout-out to @YongxinChen1 , Yipu, Liqian, Zelin, @lamawm7 @YuchenZhu_ZYC
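The post describes ACWM-DiT only as a latent diffusion transformer trained with flow matching. For readers unfamiliar with that objective, here is a minimal sketch of a generic rectified-flow-style conditional flow-matching loss plus a simple Euler sampler. It assumes latents are flat vectors and that actions are passed to the model as an extra conditioning input. `velocity_fn`, `flow_matching_loss`, and `sample` are hypothetical names chosen for illustration; this is not the ACWM-DiT training code.

```python
import numpy as np


def flow_matching_loss(velocity_fn, x0, x1, action, rng):
    """Generic conditional flow-matching loss (illustrative sketch only).

    x0: noise latents, shape (batch, dim)
    x1: data latents (e.g. encoded video frames), shape (batch, dim)
    action: conditioning vectors, shape (batch, action_dim)
    velocity_fn: hypothetical model v(x_t, t, action) -> (batch, dim)
    """
    batch = x1.shape[0]
    # Sample one time t in [0, 1) per example.
    t = rng.uniform(0.0, 1.0, size=(batch, 1))
    # Straight-line interpolation between noise and data.
    x_t = (1.0 - t) * x0 + t * x1
    # Along a straight path the target velocity is constant: x1 - x0.
    target = x1 - x0
    pred = velocity_fn(x_t, t, action)
    # Mean squared error between predicted and target velocity.
    return float(np.mean((pred - target) ** 2))


def sample(velocity_fn, x0, action, steps=50):
    """Integrate dx/dt = v(x, t, action) from t=0 to t=1 with forward Euler."""
    x = x0.copy()
    dt = 1.0 / steps
    for i in range(steps):
        t = np.full((x.shape[0], 1), i * dt)
        x = x + dt * velocity_fn(x, t, action)
    return x


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    dim, action_dim, batch = 8, 3, 16
    # Hypothetical toy "model": a random linear map of [x_t, t, action].
    w = rng.normal(scale=0.1, size=(dim + 1 + action_dim, dim))

    def toy_velocity(x_t, t, action):
        return np.concatenate([x_t, t, action], axis=1) @ w

    x0 = rng.normal(size=(batch, dim))
    x1 = rng.normal(size=(batch, dim))
    actions = rng.normal(size=(batch, action_dim))
    print("loss:", flow_matching_loss(toy_velocity, x0, x1, actions, rng))
    print("sample shape:", sample(toy_velocity, x0, actions).shape)
```

The straight-line path is one common choice because its target velocity does not depend on t, which keeps the regression target simple; a real video world model would replace the toy linear map with a transformer over latent video tokens.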