🚀 Langflow 1.8 is live

Langflow 1.8 represents a structural leap in how AI solutions are built, integrated, and scaled. This release makes Langflow more mature, more powerful, and ready for intelligent agents in production, going beyond prototypes and experiments.

With 1.8, Langflow is simpler to configure, easier to integrate, faster to use, and prepared for the next generation of AI agents, from visual workflows to production code.

What’s new in this release:

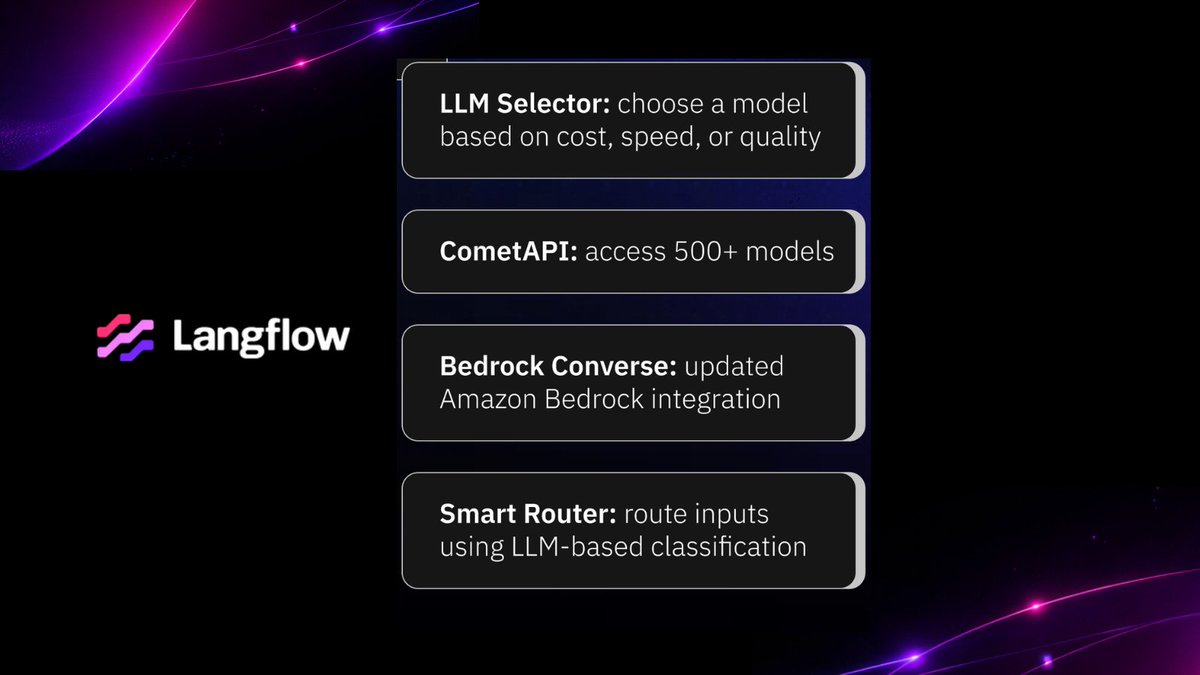

🔹 Model Provider Setup

Model configuration now follows a single, reusable standard, reducing manual setup, configuration drift, and errors when scaling projects.

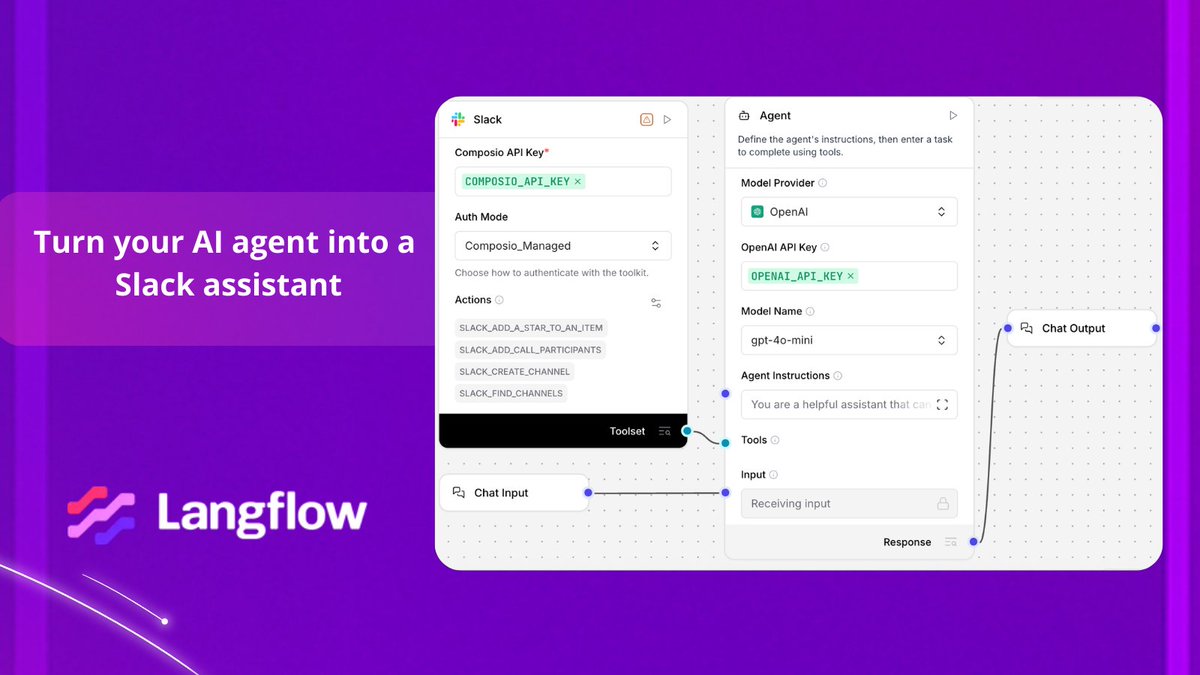

🔹 API Redesign (Phase 1)

Flows can now be consumed via standardized APIs, making Langflow a more predictable and robust part of applications, systems, and backends.

🔹 Chat Refactor (Playground Improvements)

Improved session and message management delivers more stable interactions and a smoother experience when working with long or complex conversations.

🔹 Inspection Panel

The Inspection Panel gives direct access to component configuration, parameters, and optional inputs from the workspace, reducing context switching and speeding up debugging and iteration.

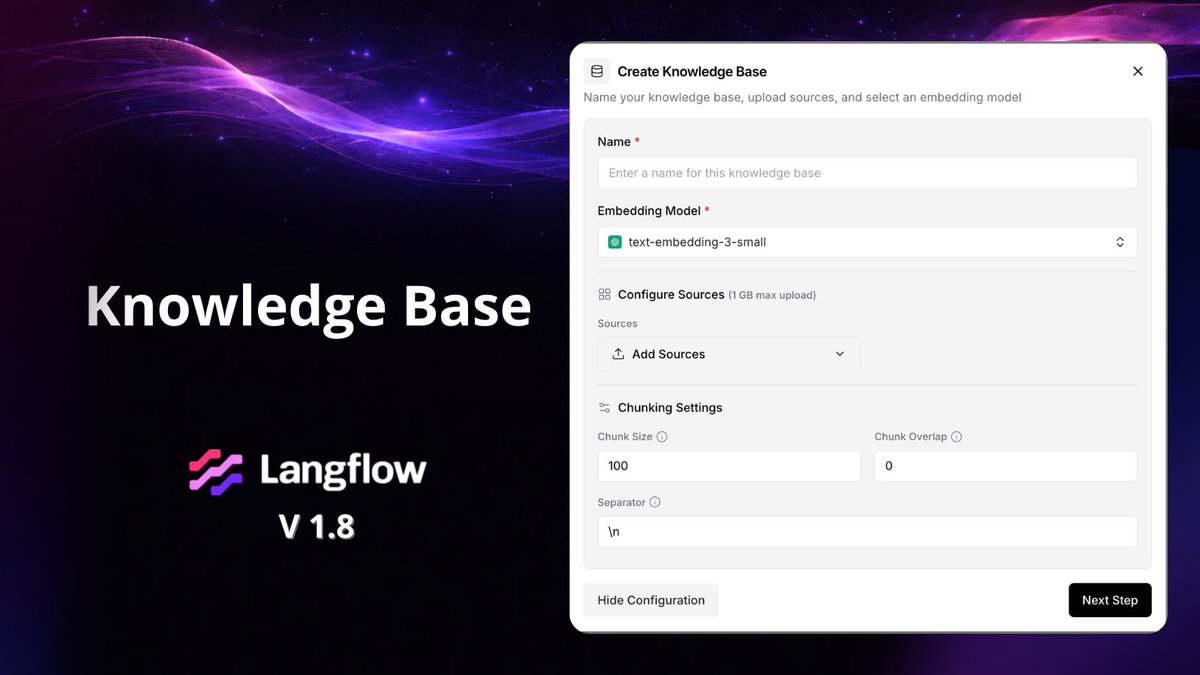

🔹 Knowledge Bases

Built-in knowledge bases act as local vector databases inside Langflow, making it easier to store and retrieve documents and datasets while enabling retrieval-augmented workflows.
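To make the "local vector database" idea concrete, here is a minimal sketch of store-then-retrieve by similarity. It is not Langflow's implementation: `TinyKnowledgeBase`, `embed`, and `cosine` are hypothetical names, and the bag-of-words "embedding" is a stand-in for a real embedding model.

```python
import math
from collections import Counter


def embed(text: str) -> Counter:
    # Toy embedding (assumption): word counts instead of a learned vector.
    return Counter(text.lower().split())


def cosine(a: Counter, b: Counter) -> float:
    # Cosine similarity between two sparse word-count vectors.
    dot = sum(a[token] * b[token] for token in a)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


class TinyKnowledgeBase:
    """Hypothetical in-memory store: keeps each document with its vector."""

    def __init__(self) -> None:
        self._docs = []  # list of (text, vector) pairs

    def add(self, text: str) -> None:
        self._docs.append((text, embed(text)))

    def search(self, query: str, k: int = 1) -> list:
        # Rank stored documents by similarity to the query, best first.
        q = embed(query)
        ranked = sorted(self._docs, key=lambda d: cosine(q, d[1]), reverse=True)
        return [text for text, _ in ranked[:k]]


kb = TinyKnowledgeBase()
kb.add("langflow builds visual agent workflows")
kb.add("bananas are rich in potassium")
top_hit = kb.search("visual workflows", k=1)
```

In a retrieval-augmented workflow, the top hits would be passed to the model as context alongside the user's question.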

🔹 Traces

Trace support provides deeper visibility into workflow execution, helping developers follow execution paths, measure latency, track token usage, and debug complex flows more easily.

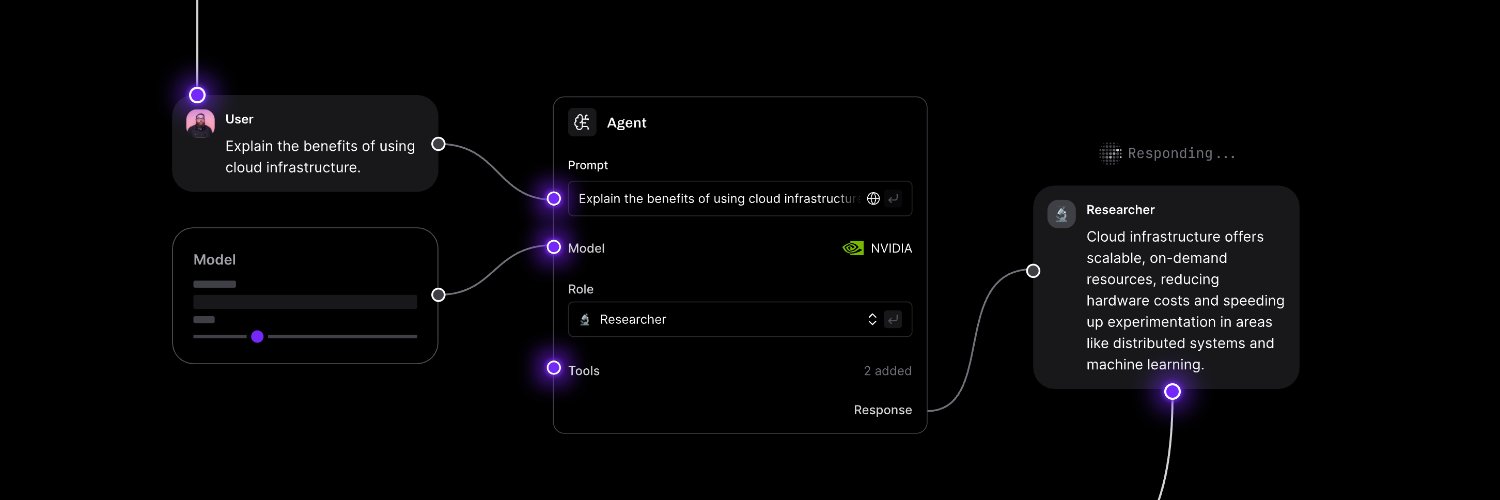
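As a rough illustration of what a trace records, the sketch below times each step of a run and tallies token usage. `Trace` and `Span` are hypothetical names for this example only and do not reflect Langflow's tracing API; the token counts are set by hand where a real system would read them from the model response.

```python
import time
from contextlib import contextmanager
from dataclasses import dataclass, field


@dataclass
class Span:
    # One step in the execution path.
    name: str
    latency_s: float = 0.0
    tokens: int = 0


@dataclass
class Trace:
    spans: list = field(default_factory=list)

    @contextmanager
    def span(self, name: str):
        # Time the wrapped block and record it, even if the block raises.
        record = Span(name)
        start = time.perf_counter()
        try:
            yield record
        finally:
            record.latency_s = time.perf_counter() - start
            self.spans.append(record)

    def total_tokens(self) -> int:
        return sum(s.tokens for s in self.spans)


trace = Trace()
with trace.span("retrieve") as s:
    s.tokens = 0      # retrieval step: no model call in this sketch
with trace.span("generate") as s:
    s.tokens = 42     # placeholder value, not a real measurement

path = [s.name for s in trace.spans]  # execution path in order
```

Reading `trace.spans` in order gives the execution path, and each span's `latency_s` shows where the time went.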

🔹 Agentics

Adds structured data workflow components, including N→N transformations (aMap), N→1 aggregations (aReduce), 0→N generation (aGenerate), and DataFrame merging without LLM calls, unlocking practical use cases like data enrichment and aggregation.
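The shapes of these operations can be sketched in plain Python. This is an illustration of the N→N, N→1, and 0→N patterns only, not the Agentics components themselves: `a_map`, `a_reduce`, `a_generate`, and `merge_rows` are hypothetical helpers, and ordinary functions stand in wherever the real components would call a model.

```python
from typing import Any, Callable

Row = dict  # one record, e.g. one DataFrame row


def a_map(rows: list, fn: Callable[[Row], Row]) -> list:
    # N -> N: exactly one output row per input row.
    return [fn(row) for row in rows]


def a_reduce(rows: list, fn: Callable[[list], Any]) -> Any:
    # N -> 1: collapse all rows into a single result.
    return fn(rows)


def a_generate(n: int, fn: Callable[[int], Row]) -> list:
    # 0 -> N: produce n rows with no input rows.
    return [fn(i) for i in range(n)]


def merge_rows(left: list, right: list, key: str) -> list:
    # Inner join on `key`; purely mechanical, so no model call is involved.
    index = {row[key]: row for row in right}
    return [{**l, **index[l[key]]} for l in left if l[key] in index]


# Generate rows, enrich each one, then aggregate.
rows = a_generate(3, lambda i: {"id": i, "price": 10 * (i + 1)})
enriched = a_map(rows, lambda r: {**r, "tier": "high" if r["price"] >= 20 else "low"})
total = a_reduce(enriched, lambda rs: sum(r["price"] for r in rs))
merged = merge_rows(rows, [{"id": 1, "name": "b"}], key="id")
```

Data enrichment corresponds to the `a_map` step (each row gains a field), and aggregation corresponds to the `a_reduce` step.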

👉 Explore Langflow 1.8 and start building production-ready AI agents: langflow.org/blog/langflow-…
