Eric Lee

A data center in New Brunswick was canceled tonight when hundreds of residents showed up. When we fight big tech and private equity, we win.

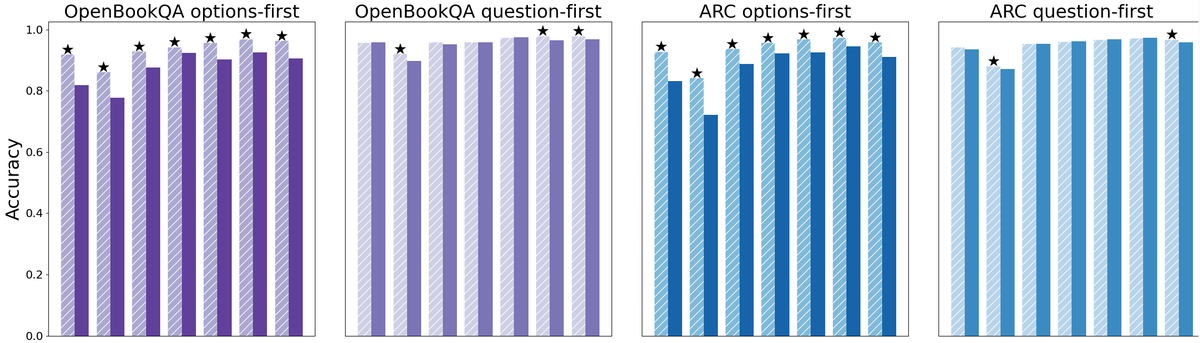

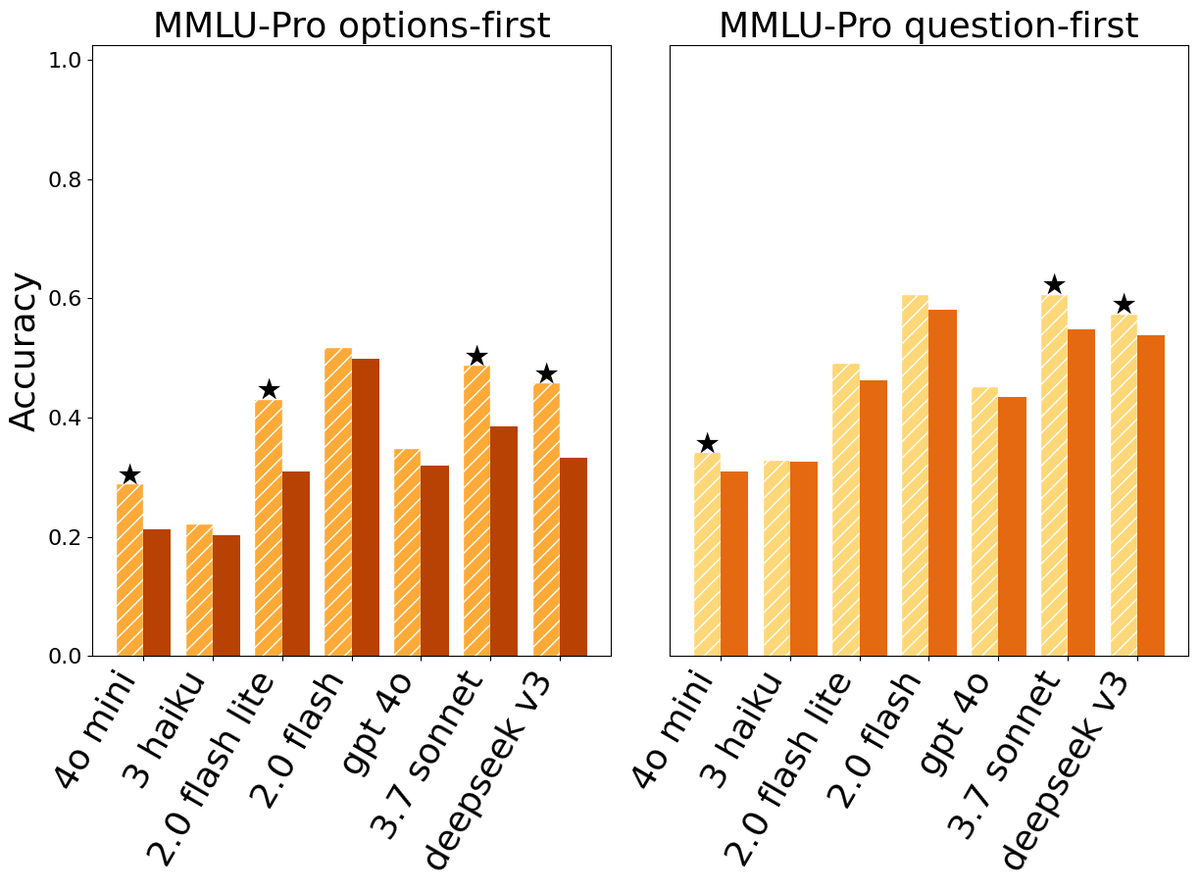

LLMs process text from left to right: each token can only look back at what came before it, never forward. This means that when you write a long prompt with context at the beginning and a question at the end, the model answers the question having "seen" the context, but the context tokens were processed without any awareness of what question was coming. This asymmetry is a basic structural property of how these models work. The paper asks what happens if you just send the prompt twice in a row, so that every token in the second copy can attend to the whole prompt from the first copy. The answer is that accuracy goes up across seven different benchmarks and seven different models (from the Gemini, ChatGPT, Claude, and DeepSeek series of LLMs), with no increase in the length of the model's output and no meaningful increase in response time, because the input is processed in parallel by the hardware anyway. There are no new losses to compute, no finetuning, no clever prompt engineering beyond the repetition itself. The gain over doing nothing is sometimes small, sometimes large (one model went from 21% to 97% on a task involving finding a name in a list). If you are thinking about how to get better results from these models without paying for longer outputs or slower responses, that's a fairly concrete and low-effort finding. Read with AI tutor: chapterpal.com/s/1b15378b/pro… Get the PDF: arxiv.org/pdf/2512.14982
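A minimal sketch of the idea, not the paper's code: `repeat_prompt`, `causal_mask`, and `sees_whole_prompt` are hypothetical helper names, and the `"\n\n"` separator is an assumption (the post only says the prompt is sent "twice in a row"). The toy mask ignores separator tokens and only illustrates why the second copy can see the full prompt.

```python
def repeat_prompt(prompt: str, times: int = 2, separator: str = "\n\n") -> str:
    """Return the prompt concatenated with itself `times` times.

    The separator is an assumption; the post does not say how copies are joined.
    """
    if times < 1:
        raise ValueError("times must be at least 1")
    return separator.join([prompt] * times)


def causal_mask(n_tokens: int) -> list[list[bool]]:
    """mask[i][j] is True when position i may attend to position j (j <= i)."""
    return [[j <= i for j in range(n_tokens)] for i in range(n_tokens)]


def sees_whole_prompt(mask: list[list[bool]], position: int, prompt_len: int) -> bool:
    """True if `position` can attend to every token of the first prompt copy."""
    return all(mask[position][j] for j in range(prompt_len))


# Toy prompt: context first, question last.
tokens = ["context", "more_context", "question"]
n = len(tokens)

mask_once = causal_mask(n)       # prompt sent once
mask_twice = causal_mask(2 * n)  # prompt sent twice in a row

# Sent once, the first context token cannot see the question that follows it.
first_token_once = sees_whole_prompt(mask_once, 0, n)

# Sent twice, the second copy of that same token sits at position n,
# so it can attend to the entire first copy, question included.
first_token_twice = sees_whole_prompt(mask_twice, n, n)

print(first_token_once, first_token_twice)
```

In practice you would pass `repeat_prompt(your_prompt)` to whatever client you already use, in place of the original prompt; nothing else about the request changes.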

Apparently this video has all of X in a frenzy. If it had come out before the AI era, people would be fawning over it as great art, but now they are so clicker-trained that any mention of AI sets them off and they label anything AI-related as slop.

We processed over 1.3 quadrillion tokens last month. That's 1,300,000,000,000,000 tokens! Or, to put it another way, that's about 500M tokens a second or 1.8 trillion tokens an hour... 🤯
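The per-second and per-hour figures follow from the monthly total. A quick check, assuming a 30-day month (the post does not say which month length was used; `throughput` is a hypothetical helper name):

```python
def throughput(tokens_per_month: int, days_in_month: int = 30) -> tuple[float, float]:
    """Return (tokens per second, tokens per hour) for a monthly token total.

    The 30-day default is an assumption, not something stated in the post.
    """
    hours = days_in_month * 24
    per_hour = tokens_per_month / hours
    per_second = per_hour / 3600
    return per_second, per_hour


TOKENS_PER_MONTH = 1_300_000_000_000_000  # 1.3 quadrillion, from the post

per_second, per_hour = throughput(TOKENS_PER_MONTH)

# Roughly 5.0e8 tokens per second and 1.8e12 tokens per hour.
print(f"{per_second:.3e} tokens/second")
print(f"{per_hour:.3e} tokens/hour")
```

With a 31-day month both rates come out slightly lower, so the rounded figures in the post hold either way.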