Lei Ma


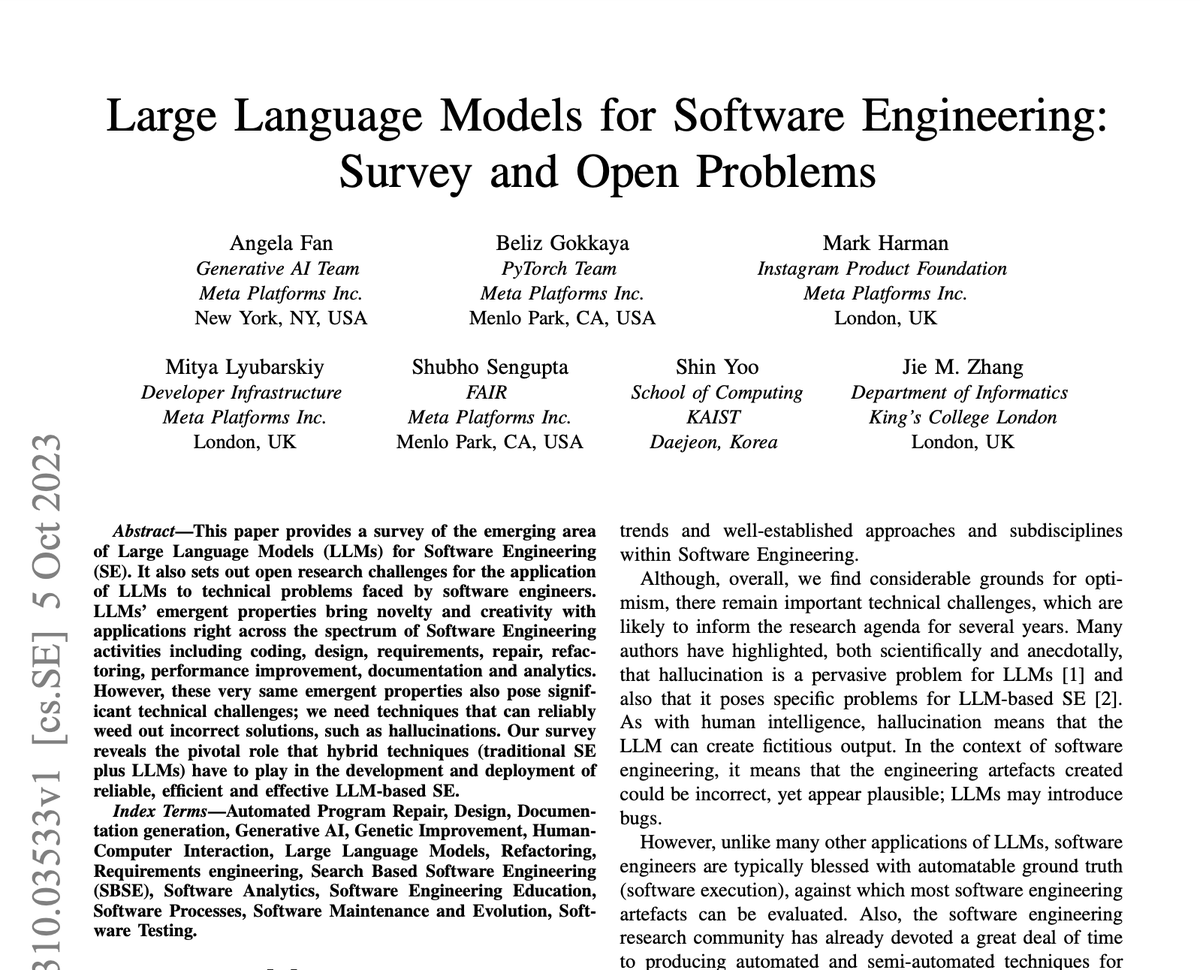

🔥 When you think about training LLMs, do you think of updating model parameters? In fact, you can use LLMs to learn a rule library. This not only improves multi-step reasoning, but also brings several advantages: interpretability, transferability, and applicability to black-box LLMs. 🧵1/6

HyperHuman: Hyper-Realistic Human Generation with Latent Structural Diffusion paper page: huggingface.co/papers/2310.08… Despite significant advances in large-scale text-to-image models, achieving hyper-realistic human image generation remains a desirable yet unsolved task. Existing models like Stable Diffusion and DALL-E 2 tend to generate human images with incoherent parts or unnatural poses. To tackle these challenges, our key insight is that human images are inherently structural over multiple granularities, from the coarse-level body skeleton to fine-grained spatial geometry. Therefore, capturing such correlations between the explicit appearance and latent structure in one model is essential to generate coherent and natural human images. To this end, we propose a unified framework, HyperHuman, that generates in-the-wild human images of high realism and diverse layouts. Specifically, 1) we first build a large-scale human-centric dataset, named HumanVerse, which consists of 340M images with comprehensive annotations like human pose, depth, and surface normal. 2) Next, we propose a Latent Structural Diffusion Model that simultaneously denoises the depth and surface normal along with the synthesized RGB image. Our model enforces the joint learning of image appearance, spatial relationship, and geometry in a unified network, where the branches complement each other with both structural awareness and textural richness. 3) Finally, to further boost the visual quality, we propose a Structure-Guided Refiner to compose the predicted conditions for more detailed generation at higher resolution. Extensive experiments demonstrate that our framework yields state-of-the-art performance, generating hyper-realistic human images under diverse scenarios.

#2 Great Keynote speakers at the 7th International @ICSEconf Workshop on Games and Software Engineering (GAS 2023): @Rauschii from @HS_EmdenLeer and @leima_2005 from @ualberta #games #software #engineering - you still have time to register! @MODIS_Unit @FBK_research

Thrilled to announce our presentation about interactive ML model debugging at 2:30 PM on Tuesday in Hall A #CHI2023. I will present the paper “DeepSeer: Interactive RNN Explanation and Debugging via State Abstraction” Paper: arxiv.org/abs/2303.01576 Code: github.com/momentum-lab-w… (1/6)

Ever wondered why your NLP model performs worse after deployment? Come see our presentation on “DeepLens: Interactive Out-of-distribution Data Detection in NLP Models” at 2:30 PM on Tuesday in Hall A #CHI2023 Paper: arxiv.org/abs/2303.01577 Code: github.com/momentum-lab-w… (1/6)