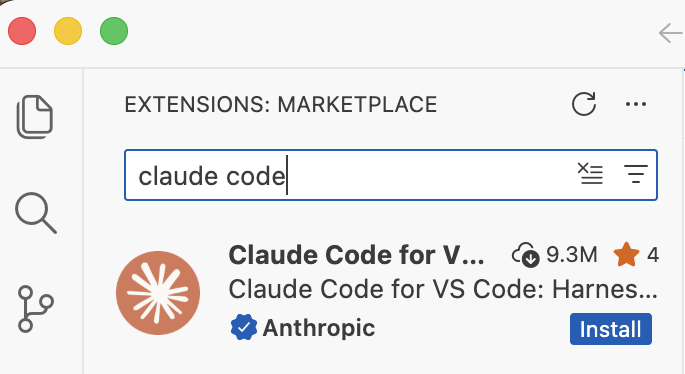

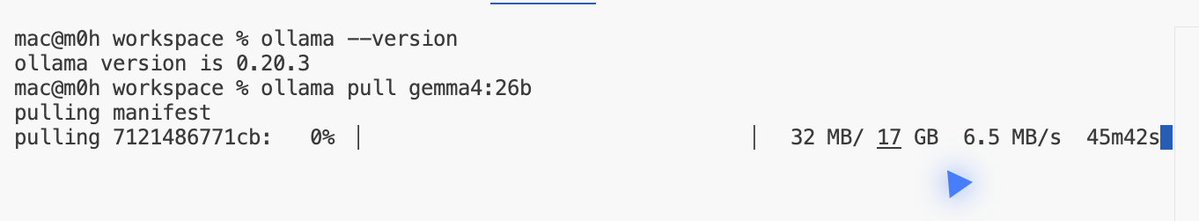

how to run claude code with gemma 4 completely free (beginner's guide)

this guide shows you how to use claude code completely free with gemma 4: no subscriptions and no api keys. just your laptop + a 15-minute setup.

this lets you run open-source models (like google’s gemma) locally, meaning:
- no costs
- full privacy

what you need

before starting, make sure you have:
- vs code installed
- node.js (version 18+)
- stable internet (for the one-time model download)

_____________

step 1: install ollama (the engine)

ollama is what runs ai models locally on your machine.

→ mac: go to ollama.com/download, click download for mac, open the file and install it like any normal app. no terminal needed.
→ windows: go to ollama.com/download, click download and install.
→ linux: curl -fsSL https://ollama.com/install.sh | sh

check it worked:
ollama --version

_____________

step 2: download gemma 4

this is the ai model you’ll run locally. pick based on your system:

→ low-end (8gb ram): ollama pull gemma4:e2b
→ recommended (16gb ram): ollama pull gemma4:e4b
→ high-end (32gb ram): ollama pull gemma4:26b

⚠️ it’s a big download (7gb–18gb), so give it time.

after the download completes, verify with:
ollama list

_____________

step 3: install claude code in vs code or any other ide

this is your interface.

- open vs code
- press ctrl + shift + x (cmd + shift + x on mac)
- search: claude code
- install the one by anthropic

after install → you’ll see a ⚡ icon in the sidebar

_____________

step 4: connect claude code to ollama

by default, claude code connects to the cloud. we’re redirecting it to your local machine. so do this:

- press ctrl + shift + p (cmd + shift + p on mac)
- search: open user settings (json)
- then paste this inside the outer { }:

"claude-code.env": {
  "ANTHROPIC_BASE_URL": "http://localhost:11434",
  "ANTHROPIC_API_KEY": "",
  "ANTHROPIC_AUTH_TOKEN": "ollama"
}

what this does:
- it routes claude code’s requests to your local ollama server instead of the cloud.
- your prompts and code are processed on your device.

_____________

step 5: run everything

1. start ollama with this command:
ollama serve
leave this running.
2. open claude code in vs code: click the ⚡ icon
3. select your model: type gemma4:e4b (or whichever you downloaded)

you’re done

_____________

you now have:
- claude code running
- powered by gemma 4
- fully local
- completely free

try:
“explain this file”
“write a function”
“refactor this code”

_____________

common issues (quick fixes)

“unable to connect” → run: ollama serve
asked to sign in → your json config is wrong. check for missing commas/brackets
very slow responses → your model is too big. switch to: gemma4:e2b
model not found → run: ollama list, then copy the exact name

quick recap

you just built:
- a free claude code setup
- powered by local ai
- no api costs

Follow for more AI content like this!
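
The two most common quick fixes in the guide above ("unable to connect" and "model not found") can be checked from a script before opening VS Code. The following is a minimal sketch, not part of the original guide. It assumes Ollama is listening on the default http://localhost:11434 from step 4 and uses Ollama's `GET /api/tags` endpoint, which lists locally installed models. The function names (`installed_models`, `diagnose`, `fetch_tags`) are made up for illustration.

```python
from __future__ import annotations

import json
import urllib.request

# Base URL taken from the step 4 config; adjust if your server runs elsewhere.
OLLAMA_URL = "http://localhost:11434"


def installed_models(tags_payload: dict) -> list[str]:
    """Extract model names from the JSON body returned by /api/tags."""
    return [m["name"] for m in tags_payload.get("models", [])]


def diagnose(model: str, tags_payload: dict | None) -> str:
    """Map the local server state to one of the guide's quick fixes."""
    if tags_payload is None:
        return "unable to connect: run `ollama serve`"
    names = installed_models(tags_payload)
    if model not in names:
        return (
            "model not found: run `ollama list` and copy the exact name "
            f"(installed: {names})"
        )
    return "ok"


def fetch_tags(base_url: str = OLLAMA_URL, timeout: float = 3.0) -> dict | None:
    """Return the parsed /api/tags response, or None if the server is unreachable."""
    try:
        with urllib.request.urlopen(f"{base_url}/api/tags", timeout=timeout) as resp:
            return json.load(resp)
    except (OSError, ValueError):
        # OSError covers refused connections and timeouts; ValueError covers bad JSON.
        return None
```

Calling `print(diagnose("gemma4:e4b", fetch_tags()))` with the model tag you pulled in step 2 prints `ok` when the server is up and the model is installed, and otherwise prints the matching fix. The parsing and the network call are kept separate so the logic can be tested without a running server.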

Meet Gemma 4: our new family of open models you can run on your own hardware. Built for advanced reasoning and agentic workflows, we’re releasing them under an Apache 2.0 license. Here’s what’s new 🧵

LLM Knowledge Bases

Something I'm finding very useful recently: using LLMs to build personal knowledge bases for various topics of research interest. In this way, a large fraction of my recent token throughput is going less into manipulating code, and more into manipulating knowledge (stored as markdown and images). The latest LLMs are quite good at it. So:

Data ingest: I index source documents (articles, papers, repos, datasets, images, etc.) into a raw/ directory, then I use an LLM to incrementally "compile" a wiki, which is just a collection of .md files in a directory structure. The wiki includes summaries of all the data in raw/, backlinks, and then it categorizes data into concepts, writes articles for them, and links them all. To convert web articles into .md files I like to use the Obsidian Web Clipper extension, and then I also use a hotkey to download all the related images locally so that my LLM can easily reference them.

IDE: I use Obsidian as the IDE "frontend" where I can view the raw data, the compiled wiki, and the derived visualizations. Important to note that the LLM writes and maintains all of the data of the wiki, I rarely touch it directly. I've played with a few Obsidian plugins to render and view data in other ways (e.g. Marp for slides).

Q&A: Where things get interesting is that once your wiki is big enough (e.g. mine on some recent research is ~100 articles and ~400K words), you can ask your LLM agent all kinds of complex questions against the wiki, and it will go off, research the answers, etc. I thought I had to reach for fancy RAG, but the LLM has been pretty good about auto-maintaining index files and brief summaries of all the documents and it reads all the important related data fairly easily at this ~small scale.

Output: Instead of getting answers in text/terminal, I like to have it render markdown files for me, or slide shows (Marp format), or matplotlib images, all of which I then view again in Obsidian. You can imagine many other visual output formats depending on the query. Often, I end up "filing" the outputs back into the wiki to enhance it for further queries. So my own explorations and queries always "add up" in the knowledge base.

Linting: I've run some LLM "health checks" over the wiki to e.g. find inconsistent data, impute missing data (with web searches), find interesting connections for new article candidates, etc., to incrementally clean up the wiki and enhance its overall data integrity. The LLMs are quite good at suggesting further questions to ask and look into.

Extra tools: I find myself developing additional tools to process the data, e.g. I vibe coded a small and naive search engine over the wiki, which I both use directly (in a web ui), but more often I want to hand it off to an LLM via CLI as a tool for larger queries.

Further explorations: As the repo grows, the natural desire is to also think about synthetic data generation + finetuning to have your LLM "know" the data in its weights instead of just context windows.

TLDR: raw data from a given number of sources is collected, then compiled by an LLM into a .md wiki, then operated on by various CLIs by the LLM to do Q&A and to incrementally enhance the wiki, and all of it viewable in Obsidian. You rarely ever write or edit the wiki manually, it's the domain of the LLM. I think there is room here for an incredible new product instead of a hacky collection of scripts.
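
To make the "small and naive search engine over the wiki" idea concrete, here is one way such a CLI tool could look. This is a hypothetical sketch, not the author's actual tool: it assumes the wiki is a directory tree of .md files as described in the post, and it ranks files by raw counts of the query terms. The names (`tokenize`, `search`) and the scoring rule are illustrative choices.

```python
from __future__ import annotations

import re
import sys
from collections import Counter
from pathlib import Path


def tokenize(text: str) -> list[str]:
    """Lowercase the text and split it into alphanumeric tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


def search(wiki_dir: Path, query: str, top_k: int = 5) -> list[tuple[str, int]]:
    """Rank .md files under wiki_dir by how often the query terms occur in them."""
    terms = set(tokenize(query))
    scores: list[tuple[str, int]] = []
    for path in sorted(wiki_dir.rglob("*.md")):
        text = path.read_text(encoding="utf-8", errors="ignore")
        counts = Counter(tokenize(text))
        score = sum(counts[t] for t in terms)
        if score > 0:
            scores.append((path.relative_to(wiki_dir).as_posix(), score))
    # Highest score first; ties broken alphabetically by path.
    scores.sort(key=lambda item: (-item[1], item[0]))
    return scores[:top_k]


if __name__ == "__main__" and len(sys.argv) >= 3:
    # Usage: python wiki_search.py <wiki_dir> <query words...>
    for rel_path, score in search(Path(sys.argv[1]), " ".join(sys.argv[2:])):
        print(f"{score}\t{rel_path}")
```

Printing one tab-separated `score path` pair per line keeps the output easy for an LLM agent to consume when it calls the script as a tool. A real version would likely want better ranking (e.g. TF-IDF or BM25), but plain term counts may be enough at the ~100-article scale the post mentions.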