
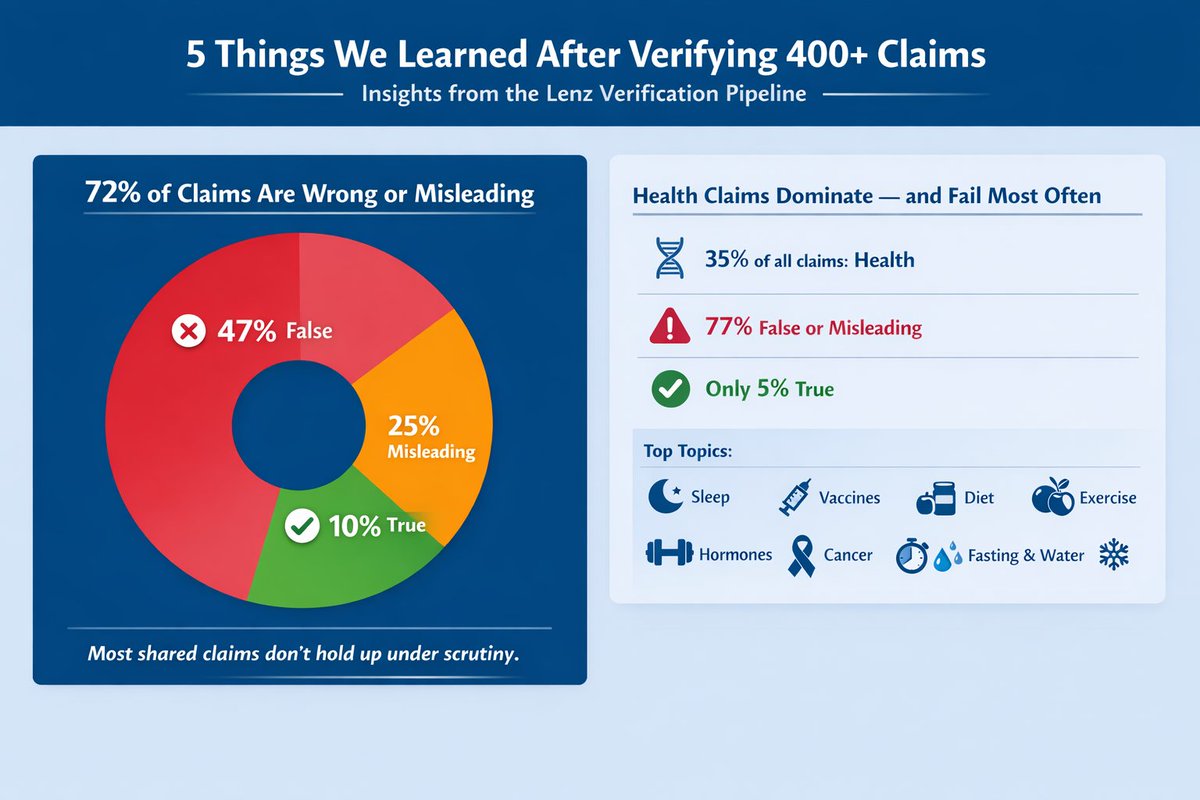

At Lenz.io, we built a 5-step machine that takes any claim and produces a verdict with sources. Here's what actually happens inside it.

Step 1: Framing. Your claim gets rewritten into an atomic, falsifiable statement. Precision matters.

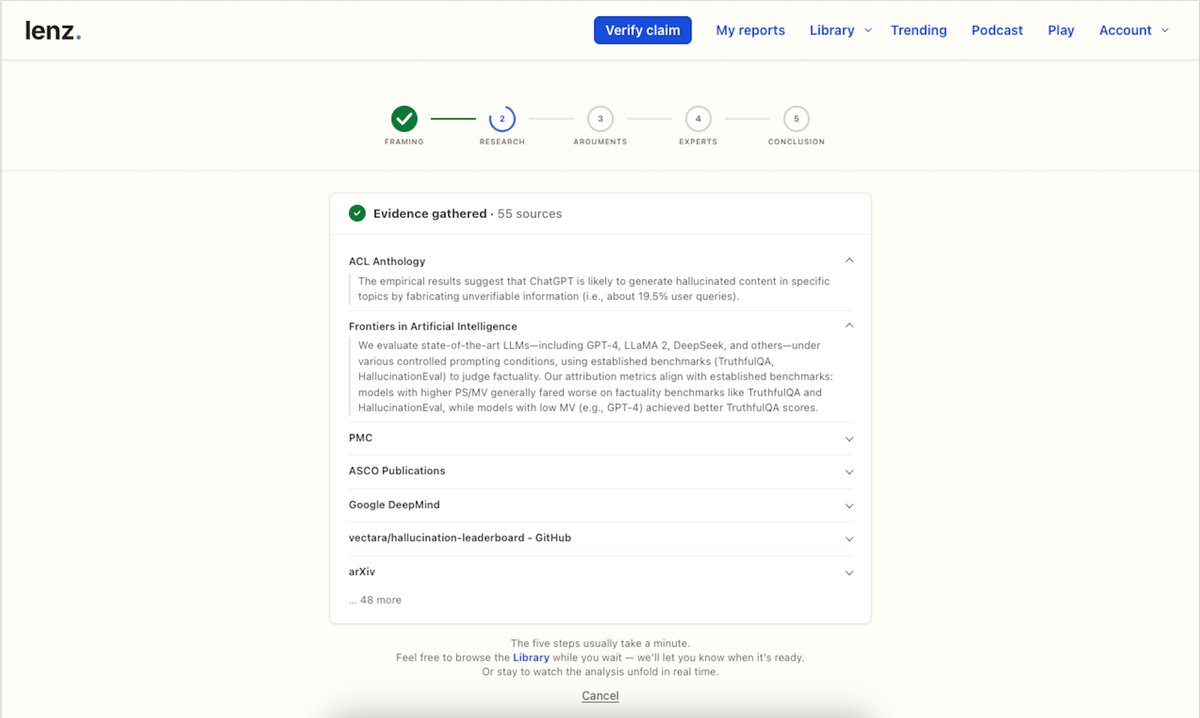

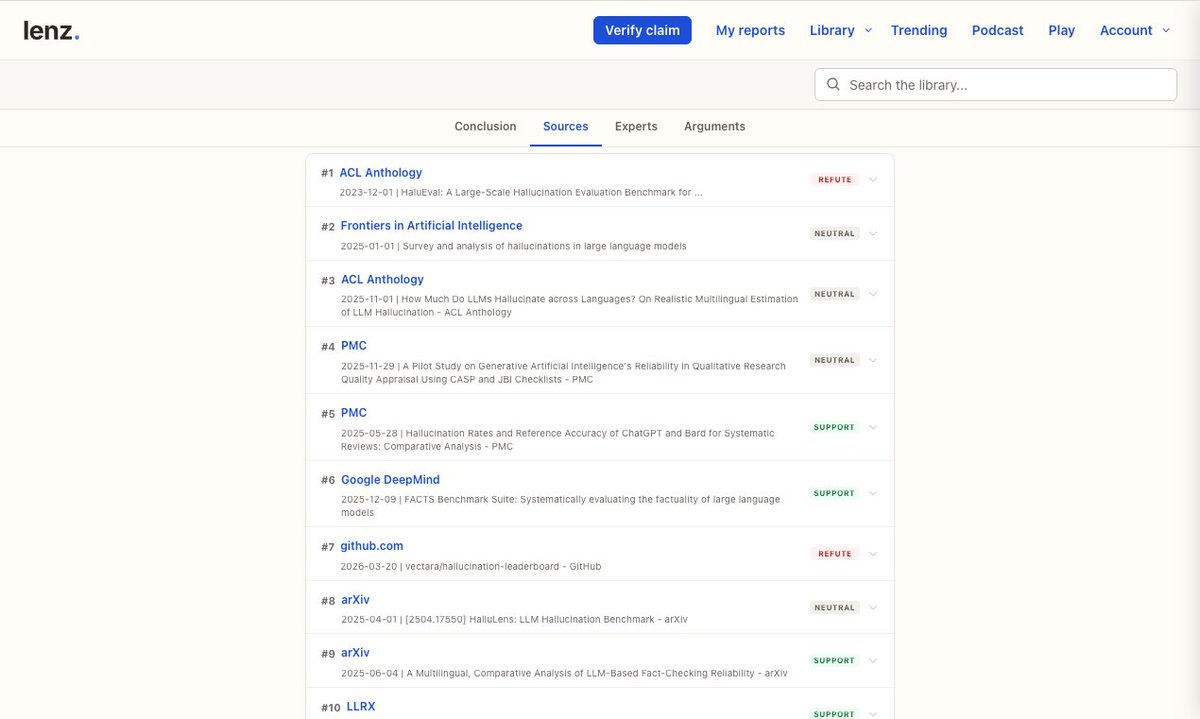

Step 2: Research. 40+ sources pulled — peer-reviewed papers, government stats, meta-analyses. Each gets a credibility tier. A retracted paper still in the wild gets flagged even if a credible blog cited it.
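A rough sketch of how the retraction check could sit next to credibility tiers. This is not Lenz.io's actual code: the `Source` fields, the 1-to-3 tier scale, and the extra "cites retracted work" flag are all assumptions made for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class Source:
    title: str
    tier: int                      # hypothetical scale: 1 = strongest, 3 = weakest
    retracted: bool = False
    cites: list[str] = field(default_factory=list)  # titles this source cites


def flag_sources(sources):
    """Return {title: reason} for every source that needs a warning."""
    retracted_titles = {s.title for s in sources if s.retracted}
    flags = {}
    for s in sources:
        if s.retracted:
            # Retraction wins regardless of the source's tier or who cites it.
            flags[s.title] = "retracted"
        elif retracted_titles.intersection(s.cites):
            # Assumption: a citing source is marked too, whatever its tier.
            flags[s.title] = "cites retracted work"
    return flags


sources = [
    Source("Paper A", tier=1, retracted=True),
    Source("Blog B", tier=2, cites=["Paper A"]),
    Source("Gov Stats C", tier=1),
]
flags = flag_sources(sources)
```

The point of the sketch: the flag depends on retraction status, never on the reputation of whoever repeated the finding.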

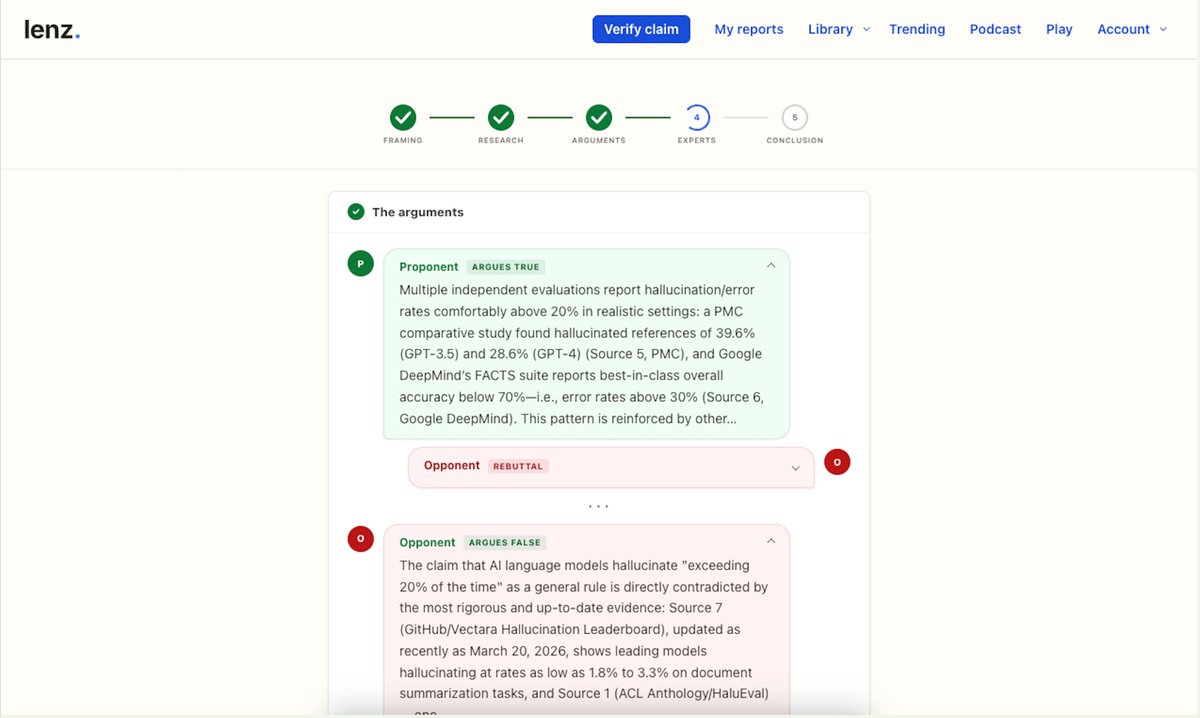

Steps 3-4: Two AI models argue the claim. One defends it. One attacks it. Neither knows the other's position. This isn't a gimmick — a single model will almost always rationalize what it already "believes."
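One way to picture the isolation, as a minimal sketch. It assumes each model is just a function from prompt to text; `run_debate`, the prompt wording, and the return shape are hypothetical, not the real pipeline.

```python
from typing import Callable

Model = Callable[[str], str]  # hypothetical: any function from prompt to text


def run_debate(claim: str, evidence: list[str], defender: Model, attacker: Model) -> dict:
    """Ask two models for opposing cases without showing either one the other's output."""
    shared = f"Claim: {claim}\nEvidence:\n" + "\n".join(f"- {e}" for e in evidence)
    # Each prompt is built only from the claim and the evidence,
    # so neither side can react to (or be anchored by) the other's argument.
    defense = defender(shared + "\nArgue that the claim is true.")
    attack = attacker(shared + "\nArgue that the claim is false.")
    return {"defense": defense, "attack": attack}
```

Both sides see the same evidence and nothing else, which is what keeps one argument from leaning on the other.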

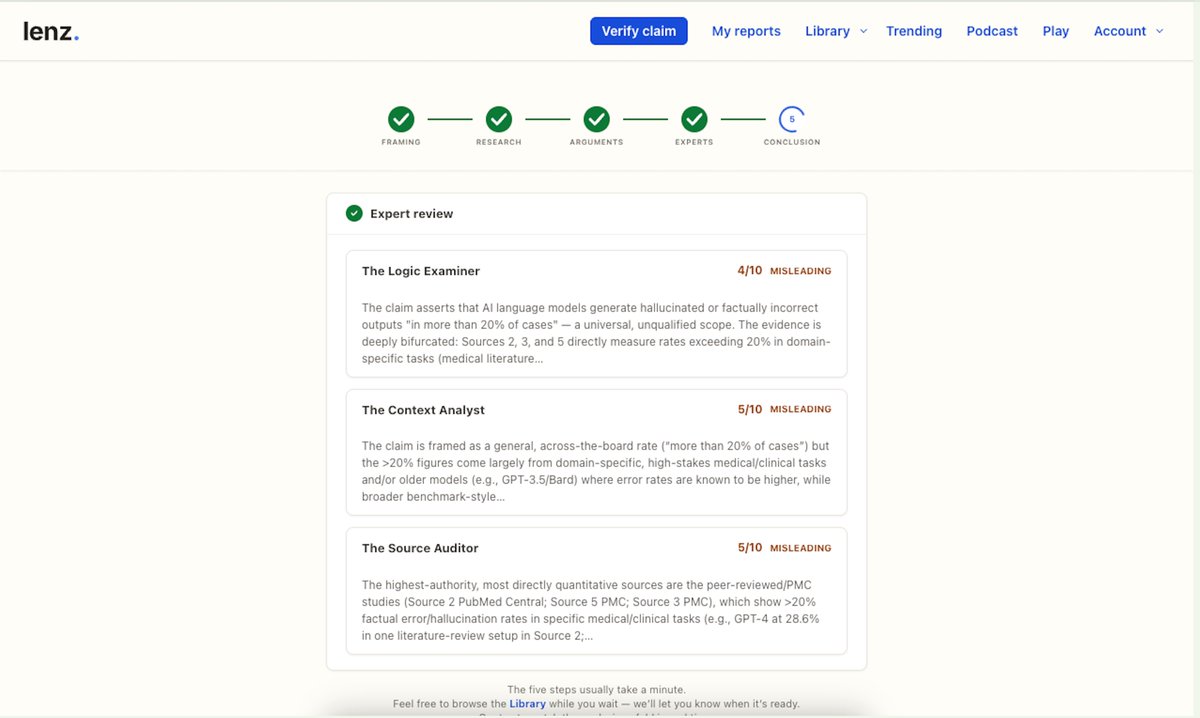

Step 5: Three independent models judge the debate — on logic, bias, and source quality separately. Majority rules.

The result: True / Mostly True / Misleading / False / Insufficient Data.
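"Majority rules" over those five labels can be sketched in a few lines. The function name is made up, and the fallback to "Insufficient Data" when the judges all disagree is an assumption, since the post doesn't say what happens in a three-way split.

```python
from collections import Counter

VERDICTS = ("True", "Mostly True", "Misleading", "False", "Insufficient Data")


def majority_verdict(votes):
    """Return the label chosen by more than half of the judges."""
    for vote in votes:
        if vote not in VERDICTS:
            raise ValueError(f"unknown verdict: {vote!r}")
    verdict, count = Counter(votes).most_common(1)[0]
    if count > len(votes) / 2:
        return verdict
    # Assumption: no strict majority is treated as an inconclusive result.
    return "Insufficient Data"


result = majority_verdict(["Misleading", "Misleading", "False"])
```

With three judges, any two matching votes decide the outcome.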

The whole pipeline runs in ~90 seconds. Try it on any claim you've wondered about → lenz.io/fact-check-ai

#FactCheck #AI #Misinformation
