Leo

3.6K posts

i've no idea why no one is trying their best to make a better github

stop doing pr review bots, do something more ambitious

Beka@bekacru

We REALLY deserve better GitHub

Brother from another mother. I thought I was the only one doing that. Safari is simply the best "consumption" browser, while Chrome is the best "creation" browser.

shadcn@shadcn

@wongmjane Same here. Safari is default. Chrome for dev work.

Leo retweeted

We are alarmed by reports that Germany is on the verge of a catastrophic about-face, reversing its longstanding and principled opposition to the EU’s Chat Control proposal which, if passed, could spell the end of the right to privacy in Europe.

signal.org/blog/pdfs/germ…

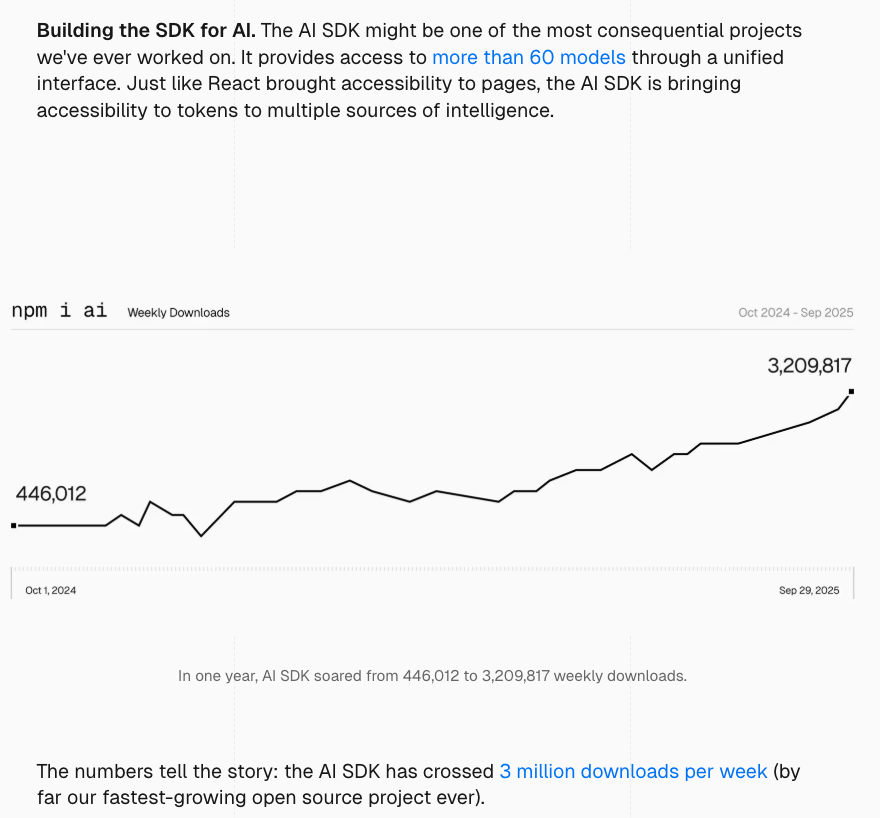

When I joined Vercel to work on @aisdk, I wanted to create the best library for building AI applications.

The growth since then was beyond what I imagined, and I am proud that AI SDK was a key part of the story behind the recent Vercel fundraise.

Announcing @vercel's Series F to build the AI Cloud

vercel.com/blog/series-f

@lachlanjc @vercel I wonder if they could instead delete most old deployments, but retain snapshots (e.g. every couple months or so), to still provide an overview of the deployment history forever. cc @tomocchino
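
A minimal sketch of the retention idea suggested above, assuming a hypothetical `Deployment` shape and a two-month snapshot window; none of these names come from Vercel's actual API. It keeps everything newer than a cutoff and, among older deployments, only the earliest one per window.

```typescript
// Hypothetical shape for illustration only, not Vercel's data model.
interface Deployment {
  id: string;
  createdAt: Date;
}

// Keep every deployment at or after `cutoff`, plus the earliest deployment
// of each `bucketMonths`-month window among the older ones.
function selectRetained(
  deployments: Deployment[],
  cutoff: Date,
  bucketMonths: number = 2,
): Deployment[] {
  const sorted = [...deployments].sort(
    (a, b) => a.createdAt.getTime() - b.createdAt.getTime(),
  );
  const seenBuckets = new Set<number>();
  const kept: Deployment[] = [];

  for (const d of sorted) {
    if (d.createdAt.getTime() >= cutoff.getTime()) {
      kept.push(d); // recent deployments are never pruned
      continue;
    }
    // Number the months consecutively, then group them into windows.
    const monthIndex =
      d.createdAt.getUTCFullYear() * 12 + d.createdAt.getUTCMonth();
    const bucket = Math.floor(monthIndex / bucketMonths);
    if (!seenBuckets.has(bucket)) {
      seenBuckets.add(bucket); // first (earliest) deployment in this window
      kept.push(d);
    }
  }
  return kept;
}
```

Everything not returned would be eligible for deletion, so the history stays browsable at a coarse granularity while most storage is freed.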

It totally makes sense business-wise, but tragic to lose more generations of internet history on @vercel. They already deleted all my projects from the early React era, now many of my portfolio links will go dark. Would love to pin specific deploys to keep vercel.com/changelog/upda…

@roguesherlock @jitl I definitely agree! We do plan to embed data into the client eventually, as an additional optimization, but not as a functionality blocker. The APIs that Blade has won't change, and the performance will only change marginally, due to our edge replicas.

I think there are tradeoffs. With the sync engine approach you only pay that cost once, during the initial render, but with the RSC paradigm you pay it for every navigation/action.

ngl beam has a simple mental model and nice dx and would work well for lots of apps. But I think it currently has a ceiling on the types of apps you can build. I think client data would definitely raise that ceiling!

Suspense in React is a solution to a problem that should have never existed in the first place. It exists to avoid blocking the UI on slow data and data waterfalls, but it is, in my opinion, fundamentally anti dynamism, anti personalization, and anti performance.

That is because the effort required to craft a clean UI with it is much greater than without it. A proper skeleton requires meticulous planning of all the potential states that a UI could be in, and then having those represented as well as possible by the skeleton. It is always an approximation, and the worse the approximation is, the worse the user experience is.

Whereas, if your data is fast, the final UI is not constrained to what the skeleton looks like, which enables the best user experience (no loading states), maximum dynamism, and thereby also maximum personalization. Of course this doesn't immediately work for any app of any size, but there is a wide range of small to medium apps for which it, in my opinion, makes the most sense.

I created blade.im to make it easy to quickly build apps that offer maximum dynamism without any loading states whatsoever. No skeleton, no SPA spinner, no slow TTFB. Every page render is a single database transaction, even if your layouts and page contain many dozens of queries. If you perform a write, the whole UI is updated for you, in the same transaction that performs the write.

I agree with the common abstractions. I don't perceive them as limiting factors, however. The way I see it is that the term is also a result of how I use a given tool.

E.g. I might use S3, which is a disk, as my database for a particular use case. Similarly, I might use my database to store small chunks of binary objects for another use case, which, if you call them files, would then make it my disk. I can also place my files exclusively in memory and have a second faster memory on top, which would make the first memory the new disk.

Technically, if you use S3 over a network stream, you also can't call it a disk anymore, since it's not a part of your own compute stack. It might be a "network storage" for your application, but would use disks internally.

I think that no technology is a silver bullet. As mentioned in the main tweet, the approach definitely breaks down at some point, especially at scale (meaning suspense is needed for those cases).

There are also many other cases, such as not having any control whatsoever over the data source, having a page that is so insanely long (like the old Vercel Usage page) that data has to be loaded based on the scroll position, and so on.

Unless those cases are met, however, it is IMO unpleasant and unnecessary for users to see loading animations.

Memory, disk, database, a variable in code, CPU cache, or whatever else you like to call it are all just words for the same thing in different formats.

They can all have the same performance as each other depending on what product you pick and what you do with it. Unless you know the details of each layer, you can't make a sound argument that they are per se slower or faster than each other.

A simple Bun server that runs 10 or 20 queries with a bunch of nested joins on bun:sqlite has the same perf as any CDN. Try it. Adding React and page code on top doesn't change that. Unless you do things like offset-based pagination, no pagination at all, heavy counts, or other things that you need to avoid anyways, it will be fast.
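
To illustrate why offset-based pagination is on the list of things to avoid, here is a rough sketch that contrasts it with keyset (cursor) pagination over an in-memory sorted array standing in for an indexed table. The `Row` shape, the function names, and the `rowsTouched` cost model are assumptions made for illustration; a real database engine will differ in the details.

```typescript
// Hypothetical row shape; the array is assumed to be sorted by `id`,
// standing in for a table with an index on `id`.
interface Row {
  id: number;
  title: string;
}

interface Page {
  rows: Row[];
  rowsTouched: number; // simplified cost model, not a real engine metric
}

// Offset pagination: the engine walks past `offset` rows before it can
// return `limit` rows, so deep pages get progressively more expensive.
function offsetPage(sorted: Row[], offset: number, limit: number): Page {
  const rows = sorted.slice(offset, offset + limit);
  return { rows, rowsTouched: Math.min(sorted.length, offset + limit) };
}

// Keyset pagination: binary-search for the first row with id > afterId
// (what an index seek does), then read `limit` rows from there.
function keysetPage(sorted: Row[], afterId: number, limit: number): Page {
  let lo = 0;
  let hi = sorted.length;
  let steps = 0;
  while (lo < hi) {
    const mid = (lo + hi) >>> 1;
    steps++;
    if (sorted[mid].id <= afterId) lo = mid + 1;
    else hi = mid;
  }
  const rows = sorted.slice(lo, lo + limit);
  return { rows, rowsTouched: steps + rows.length };
}
```

Both functions return the same page when `afterId` is the last id of the previous page, but under this model the keyset variant's cost stays roughly logarithmic in the table size instead of growing with the page depth.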

To "populate the static shell" you have to wait for all 20 queries to complete before sending ANY HTML. That's literally blocking the entire page on the slowest query.

"Blocking for 20 queries is fine" assumes an unrealistically reliable world where nothing is ever slow. What happens with a cold cache, complex join, or external API call?

Also Node executing queries and rendering HTML isn't the same as a CDN serving static files from memory. Unless you're serving static shells and filling gaps later, which brings us back to Suspense.

I would say it always depends on how fast the thing is that you need to load. I of course agree that you can't make something faster that you have no control over (like the OG image of a different website), but for most apps, there are many vectors, such as the main data source, that devs are very much in control of.

So yes, suspending something you absolutely cannot control of course makes sense, but my point is that we control a huge part of our stack, and suspense is a sledgehammer that is being used for things that it should've never been used for (first load and page transitions).

I agree. But ultimately, the server remains the source of truth in any model. There is not just data to consider, there is also code. For example, teams deploy several times per hour, and that code has to hit the client asap, so without server components you'd frequently download tons of unnecessary code, which is especially slow on slow connections. We do plan to offer data on the client, but we can't neglect the other requirements on the way there.

tbh I think we've tried this approach before and it led to poor UX, as the app UI becomes very sensitive to the network. And it's not always about a slow connection; there's latency, jitter, unreliability, etc. So no matter how fast the backend is, how nearby the edge is, or how fast the replication is for the db, you'll always be sensitive to the network.

This is why I like the sync engine approach, because it smooths over the network boundary in the background.

Anyways, happy to be proven wrong and wish you all the best!

Whether or not you use suspense doesn't change how much HTML you need to download. Even if you use suspense, you still have to serve the static shell. If the static shell is already populated instead of empty, that's better for users, especially for users on slow connections.

Blocking a page render on 10, 20, or many more queries is absolutely fine. Any database executes that number of queries in microseconds from memory, given that you don't write poorly optimized queries. Executing 1 or 20 queries won't change the perf. The main penalty is the network, and there is only a single request to the DB.

What you said about code vs static isn't valid. The perf of a Node server responding is the same as any CDN responding. They both need to run code and both have that code already evaluated in memory. Native code or not might change the request throughput, but not the perf of a single request.

I wrote Vercel's first prod static file server, which has been used for years, and `serve`, which has 2M+ weekly npm downloads and is Create-React-App's suggested prod server (CRA is being killed ofc).

You're measuring server-side query speed, not what users actually experience. Your server accessing edge SQLite in 1ms doesn't help a user on slow 3G who still needs to download all that HTML.

Also if you're streaming HTML while queries are still running, you need placeholders for missing data. That's just Suspense reinvented. If you block until all 10 queries complete before streaming, your TTFB includes the slowest query. Either way, you haven't solved the problem Suspense addresses.

Also "10 queries not slower than static CDN" is just false. Static files serve from memory cache. You're executing code, running queries, rendering HTML. And your "single transaction" only works for reads from potentially stale replicas.
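
The TTFB disagreement in this exchange can be put into numbers with a small model. This is only a sketch: the function names are hypothetical, the query latencies are made up, and the 20 ms round trip is borrowed from the figure quoted elsewhere in the thread.

```typescript
// Time to first byte when the server waits for every query before sending
// any HTML. Parallel queries are bounded by the slowest one; sequential
// queries add up.
function blockingTtfbMs(
  networkRttMs: number,
  queryMs: number[],
  parallel: boolean = true,
): number {
  if (queryMs.length === 0) return networkRttMs;
  const dbMs = parallel
    ? Math.max(...queryMs)
    : queryMs.reduce((sum, q) => sum + q, 0);
  return networkRttMs + dbMs;
}

// Time to first byte when a shell is streamed immediately and the data is
// filled in later (the Suspense-style approach). Rendering cost is ignored.
function streamingTtfbMs(networkRttMs: number): number {
  return networkRttMs;
}

// Illustrative inputs: all-fast queries vs. one slow outlier.
const allFast = blockingTtfbMs(20, [1, 1, 1]);
const oneSlow = blockingTtfbMs(20, [1, 1, 1, 250]);
const streamed = streamingTtfbMs(20);
console.log({ allFast, oneSlow, streamed });
```

Under this model both sides have a point: when every query is fast, blocking costs about as much as streaming, and a single slow query moves the whole first byte by its full duration.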

@jitl You are correct that the network conditions dominate the speed of every page render in Blade. We rolled our own DB replicas in the same 18 AWS regions where Vercel is, which is sufficient for serving almost any user anywhere in around 20ms. Replication happens ahead of time.

@leo are you using Cloudflare DO? DOs are not available in every POP, plus once >1 user wants the same data, the DO may be across the planet from user2.

network condition dominates ttfb and transition even for cdn. i have fiber, desk to cf is 10ms, living room to cf is 200ms. airplane is worse

@roguesherlock @jitl Correct. Data sits at the edge, just like your application code. That's essential for a fast TTFB with slow connections, since there's no bandwidth to download lots of code or data. We will make data available in the browser too, but the edge is currently almost equally fast.
