Leon Chlon

30 posts

Leon Chlon

@leon_chlon

Founder @ReliablyLabs, we predict & prevent hallucinations pre‑generation. hallbayes (≈1k⭐) In memory of my grandmother https://t.co/f69PzMqFQ0

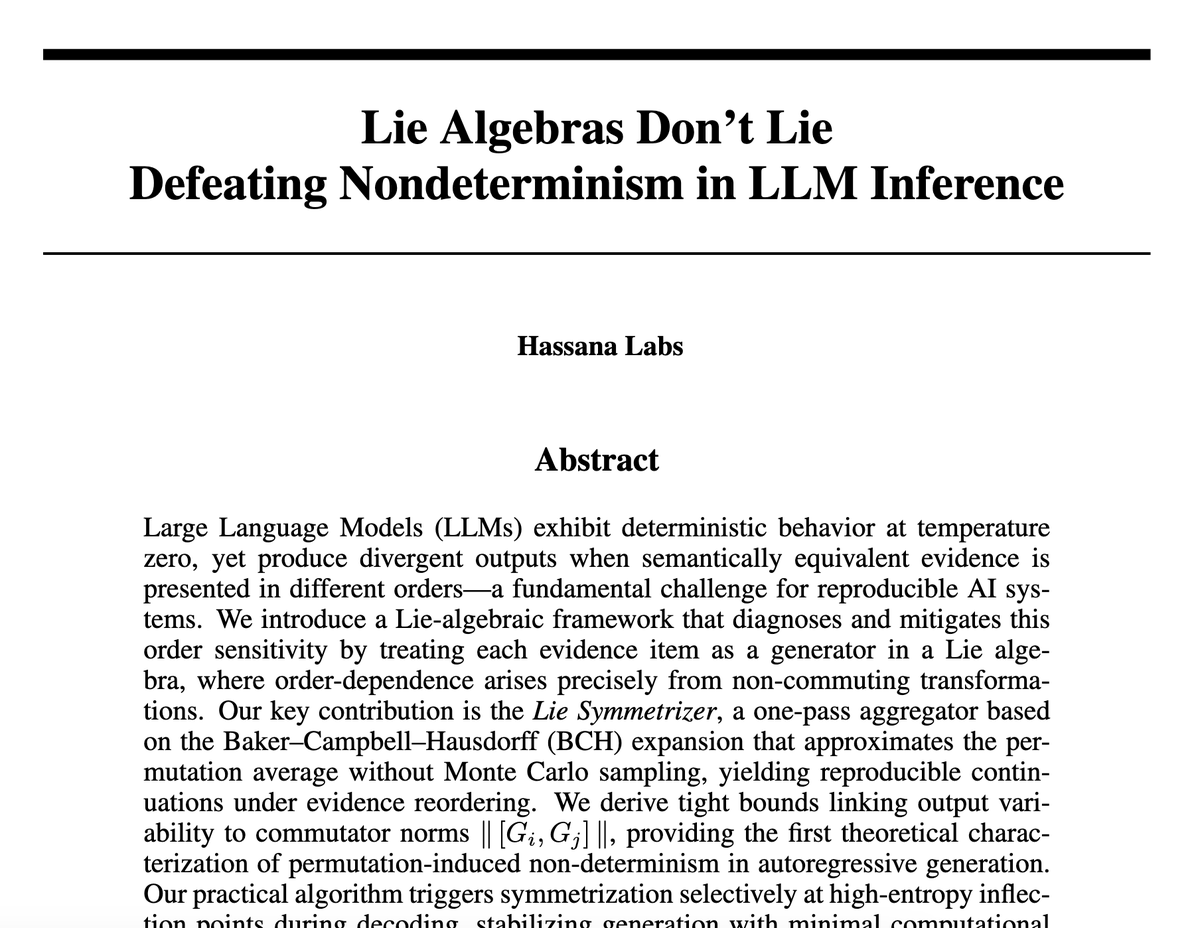

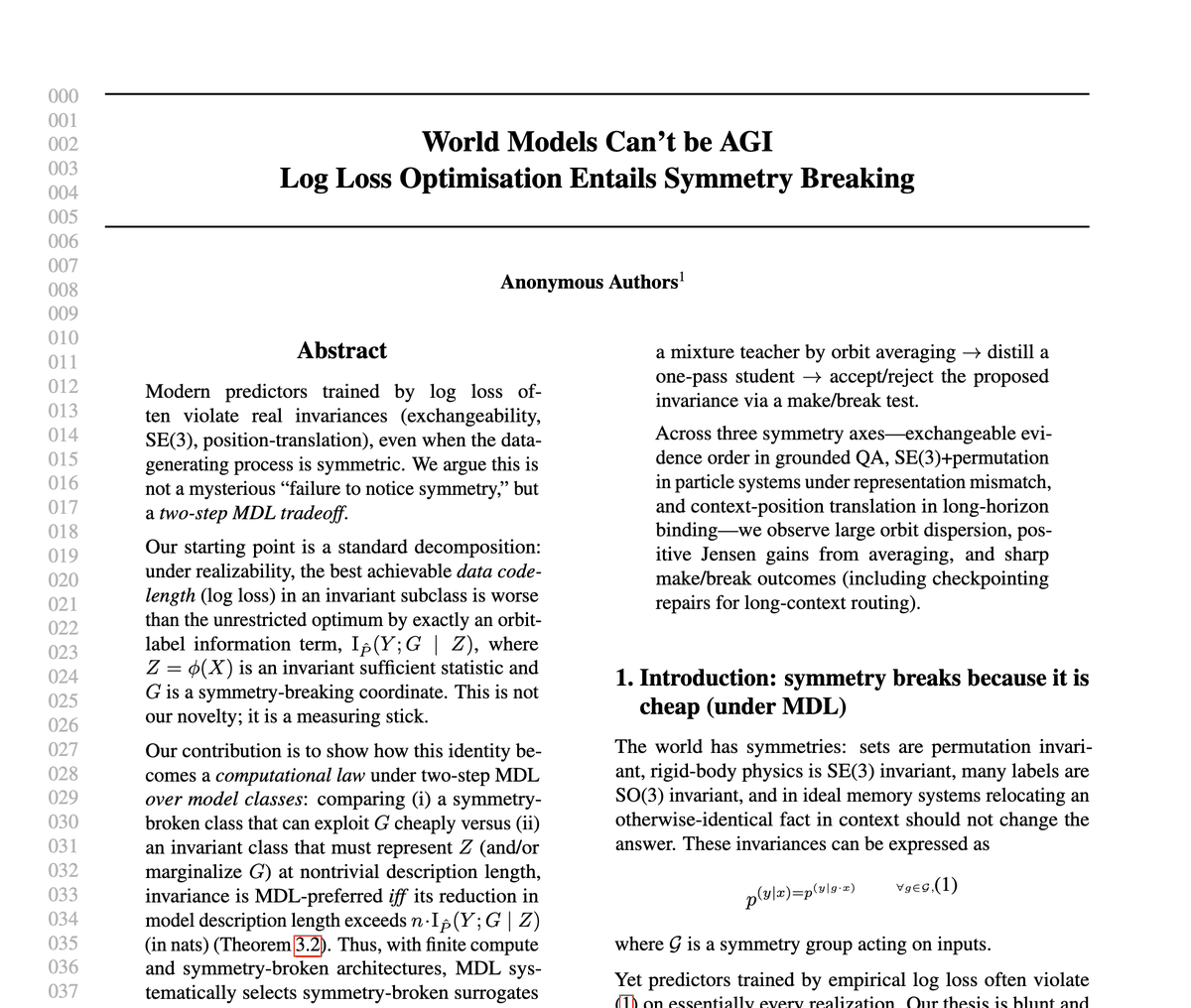

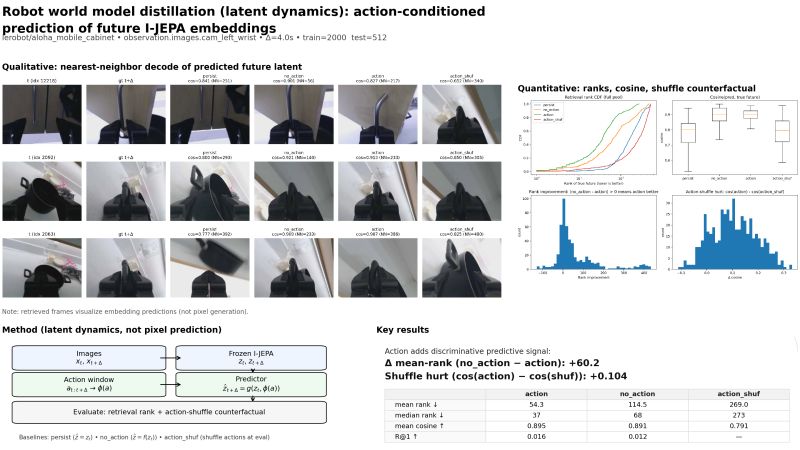

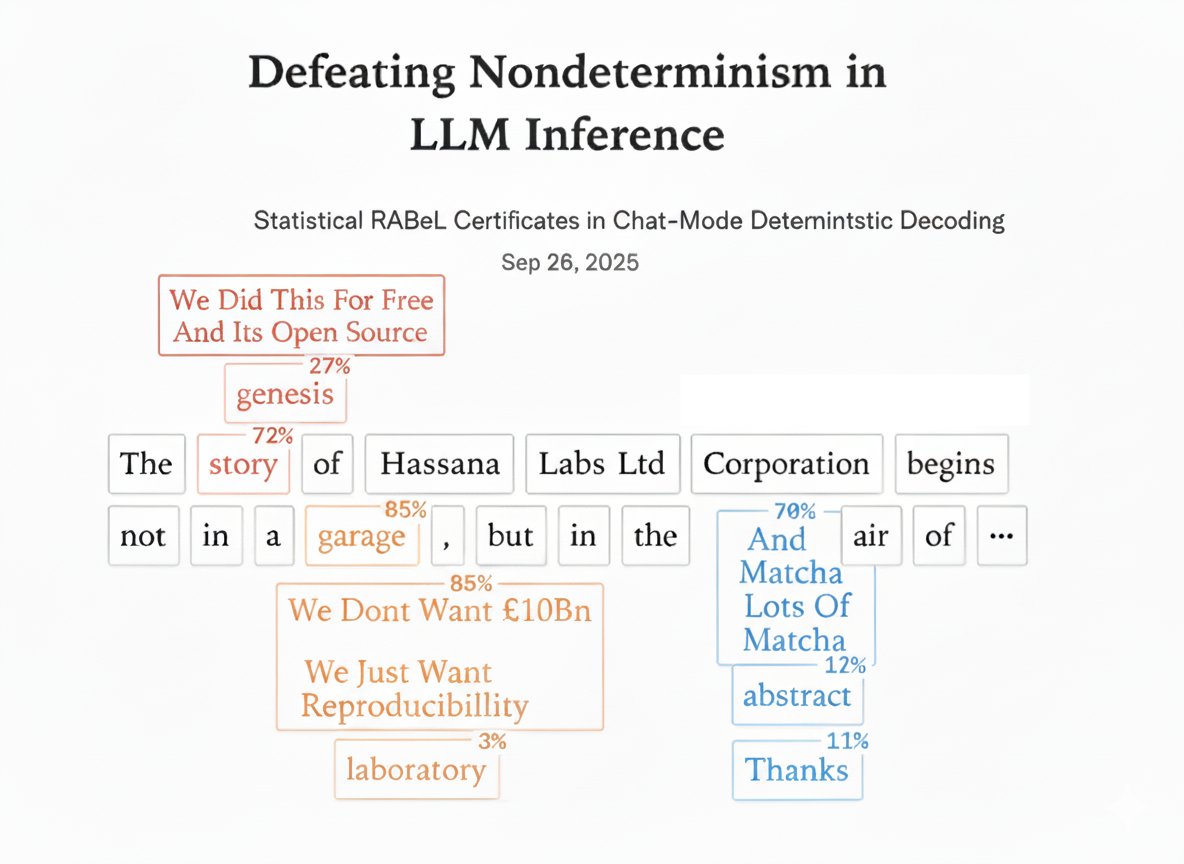

Looking for 3-5 authors to contribute on our most recent project. Ideal candidates are STEM/CS background from underrepresented communities and countries. You know how LLMs give different answers in a prompt, even at temperature zero because noise, batch effects etc. We solved this mathematically. We discovered that token chunks act like generators in a Lie algebra, when they don't commute, order matters. Just like how rotating an object on different axes gives different results depending on order. Our solution? The Lie Symmetriser which it computes the mathematical 'centre' of all possible orderings in one pass, no Monte Carlo needed. We only trigger it at inflection points where the model is genuinely uncertain, adding under 100 milliseconds overhead. Here's the kicker: we open source tools that are training-free and pre-generation, deploying immediately into production and the first version is already provides determinism guarantees. The earliest version which gave rise to this paper is already on GitHub. This will most likely benefit hopefuls applying for PhD positions more than anyone else since it’ll look good on your funding applications. To apply, just DM me If you need motivation to apply, we released this 3 weeks ago and its already on 998+ stars, with 4 enterprises already using it in their stack. Everyone that contributed is getting pinged by recruiters across MAANG about jobs. lnkd.in/e4s3X8GK If @thinkymachines can raise $10bn off @cHHillee writing a blogpost over matcha and avocado toast, our actual script that anyone can use should recover the £2k investment I put into the colab credits for validation.