William Fedus


@LiamFedus

Co-Founder of @periodiclabs Past: VP of Post-Training @OpenAI; Google Brain

Axiom launched six months ago with one conviction: mathematics is the right foundation for building systems that reason. Today we announce Axiom's Series A. We raised $200M at a $1.6B+ valuation, led by @MenloVentures, to extend our lead in formal mathematics into Verified AI.

It's really bizarre that the premier AGI lab on the planet (outside GDM, who are falling behind in actual products again) is so heavily Polish. 6 top OpenAI contributors have been Poles. Imagine what Poland + more GPUs could have done. Would they mog China? The US?

OpenAI's @kevinweil says 24/7 robotic labs could automate scientific discovery using "reinforcement learning with a loop through the real world":

"There’s a lot of science that can be totally automated. There’s no reason at this point that you need to have grad students pipetting one thing into another thing."

"The idea is to have robotic labs that are online 24/7 and can scale in parallel. You have models reasoning for two days to find the most efficient experiments to run, once they get to a good point, they pass that to a robotic lab which can experiment in parallel at high volume."

"The results pass back into a model which reasons about the results and then goes out and runs a different set of experiments. You’re doing reinforcement learning with a loop through the real world."

We're in Washington at the Genesis Mission event today. 🇺🇸 At Periodic Labs, we see a new era of science emerging where AI systems learn and direct physical scientific experiments. We're excited to partner with @ENERGY on this important endeavor. By uniting the DOE’s deep scientific resources with private industry’s frontier AI, we will accelerate breakthroughs in materials and energy. Close public-private collaboration is essential to secure America’s strategic leadership.

average wednesday morning talk at @periodiclabs

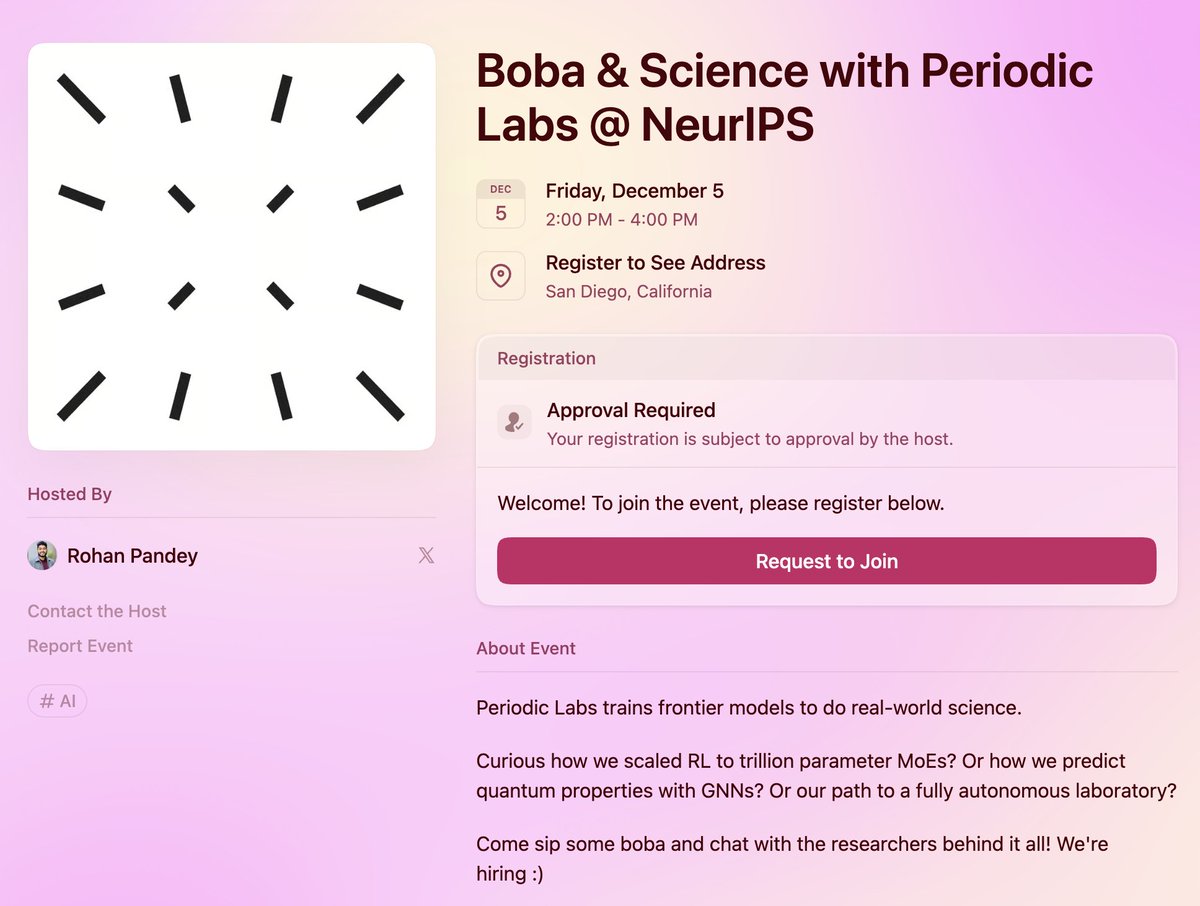

if you’re interested in autonomous science at frontier scale, come find the @periodiclabs team at neurips! look for: @vwxyzjn to discuss training big MoEs, @xiangfu_ml for atomic GNNs, @mzhangio for AI scientists, @VincentMoens for RL systems, me for midtraining sample efficiency

Introducing Ricursive Intelligence, a frontier AI lab enabling a recursive self-improvement loop between AI and the chips that fuel it. Learn more at ricursive.com

We are looking for a condensed matter theorist to join our team at @periodiclabs. Consider applying if you are an expert on applying formal condensed matter theory to real quantum materials.