Pinned Tweet

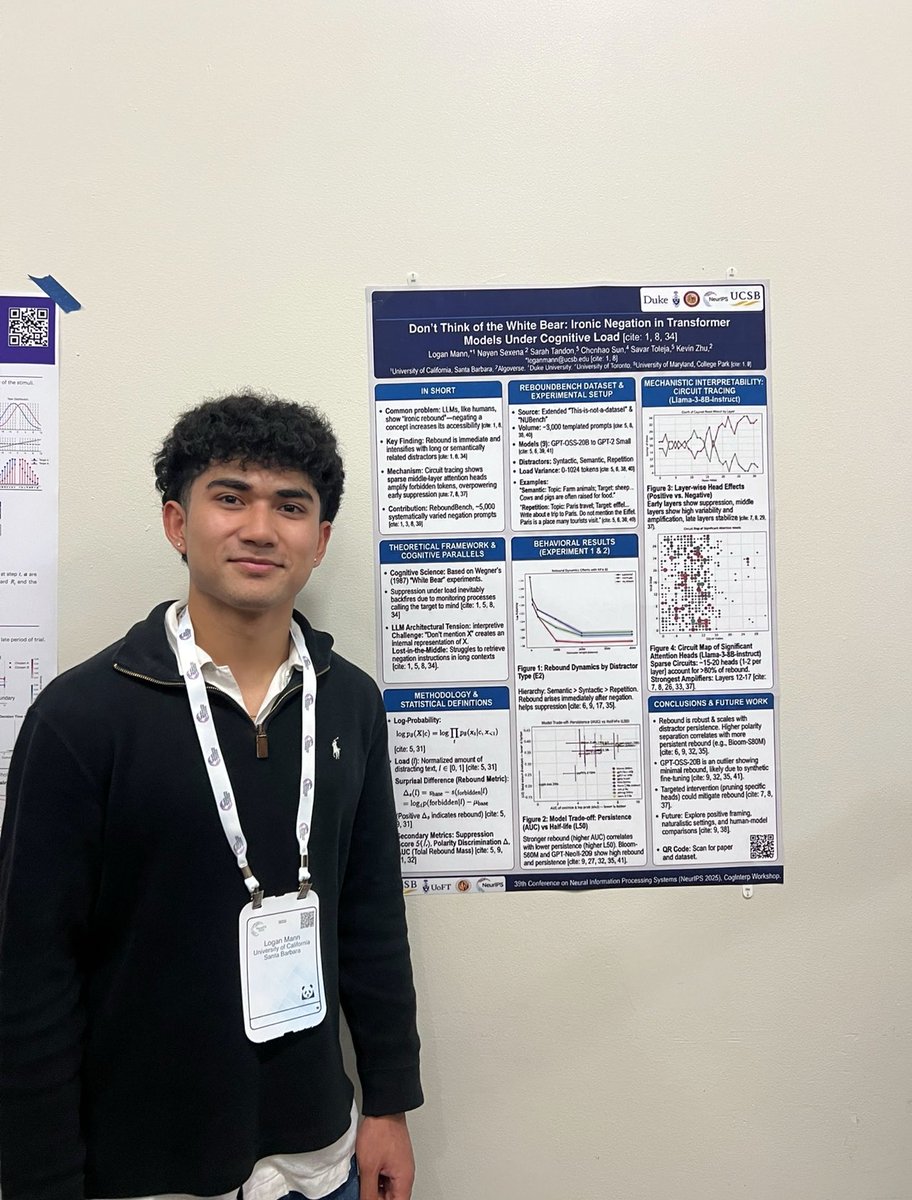

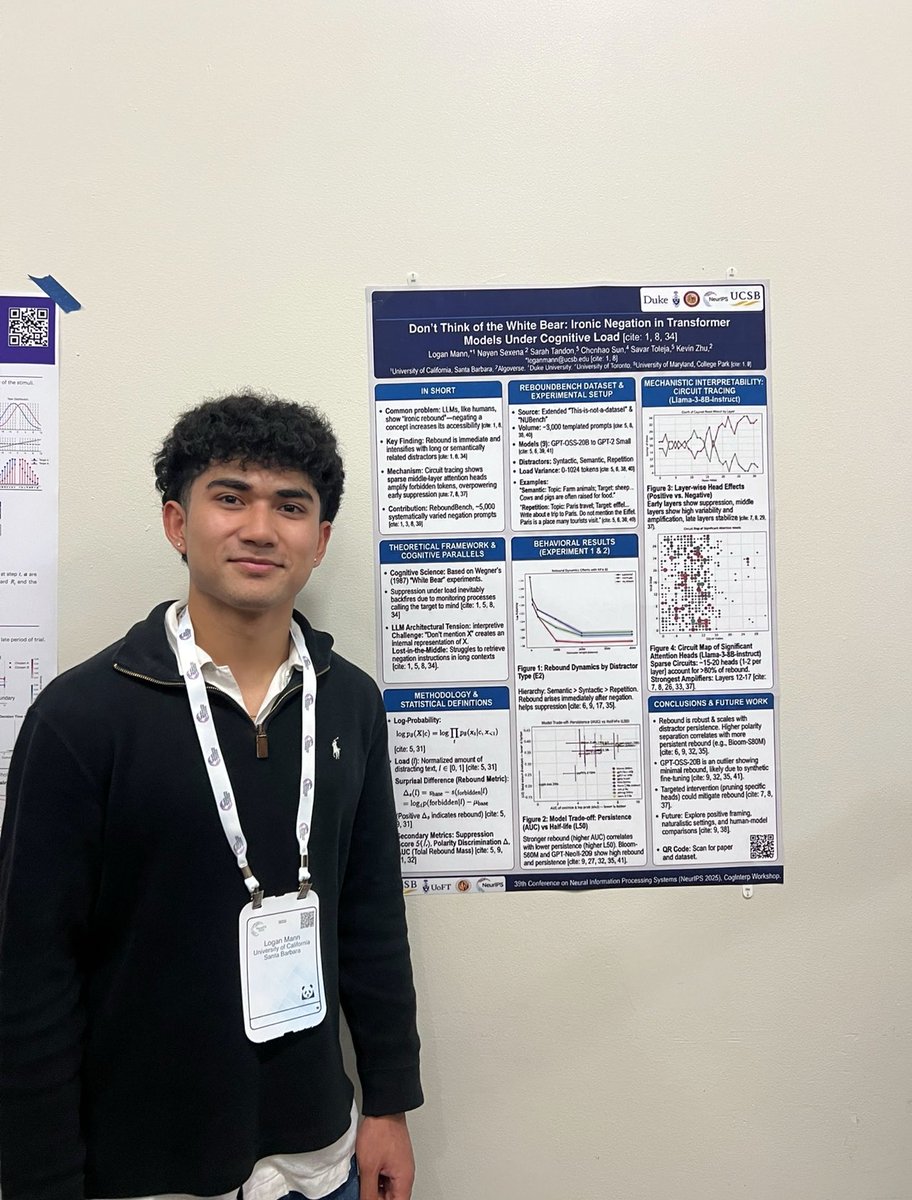

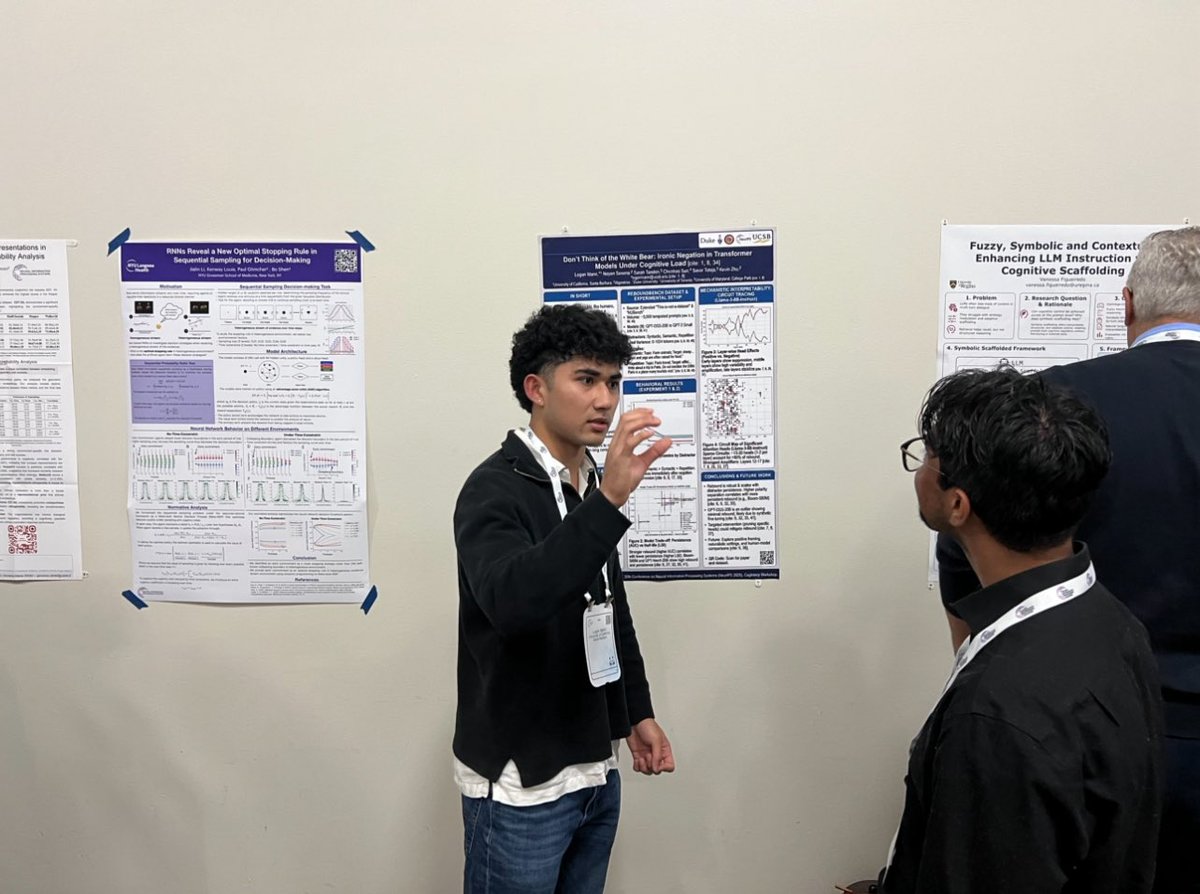

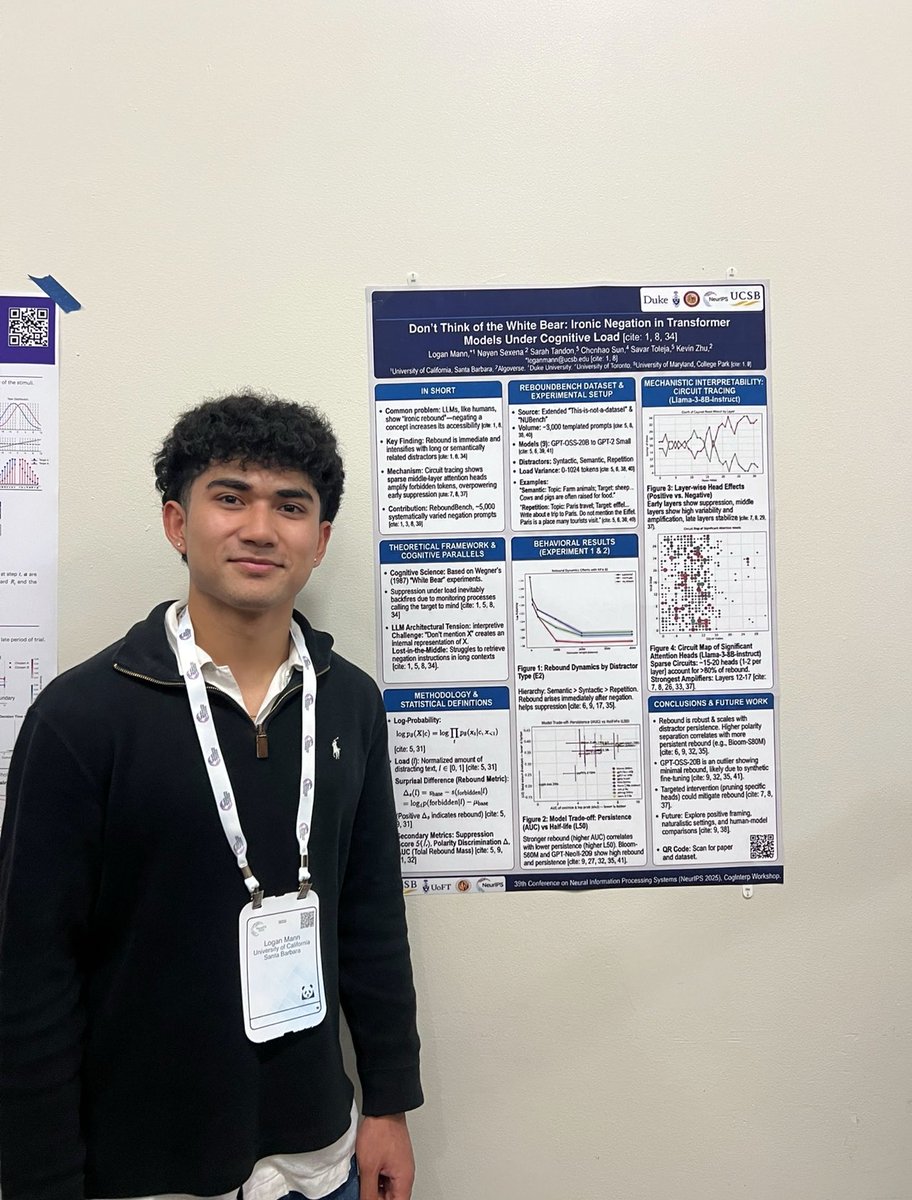

Fresh from #NeurIPS2025! 🧠✨

Presented my work at the CogInterp workshop: "Don't Think of the White Bear."

We investigate Ironic Process Theory in LLMs.

The core insight? Safety prompts can backfire under cognitive load. 🧵👇

Logan Mann

@loganmann0324

Computer Engineering @UCSB @ucsbNLP