logtd retweetledi

logtd

24 posts

logtd retweetledi

Introducing Uni-1, Luma’s first unified understanding and generation model, our next step on the path towards unified general intelligence.

lumalabs.ai/uni-1

English

logtd retweetledi

logtd retweetledi

@roman_szczesny planning on adding it to my ComfyUI nodes for Hunyuan here github.com/logtd/ComfyUI-…

English

@logtdx Nice! How did you achieve it? Is there an implementation for ComfyUI?

English

FlowEdit is an inversion-free method to edit images and videos.

Releasing implementations of it for ComfyUI on a few models:

* Flux -> github.com/logtd/ComfyUI-…

* LTXV -> github.com/logtd/ComfyUI-…

* Hunyuan -> github.com/logtd/ComfyUI-… (WIP)

Some examples below 🧵

English

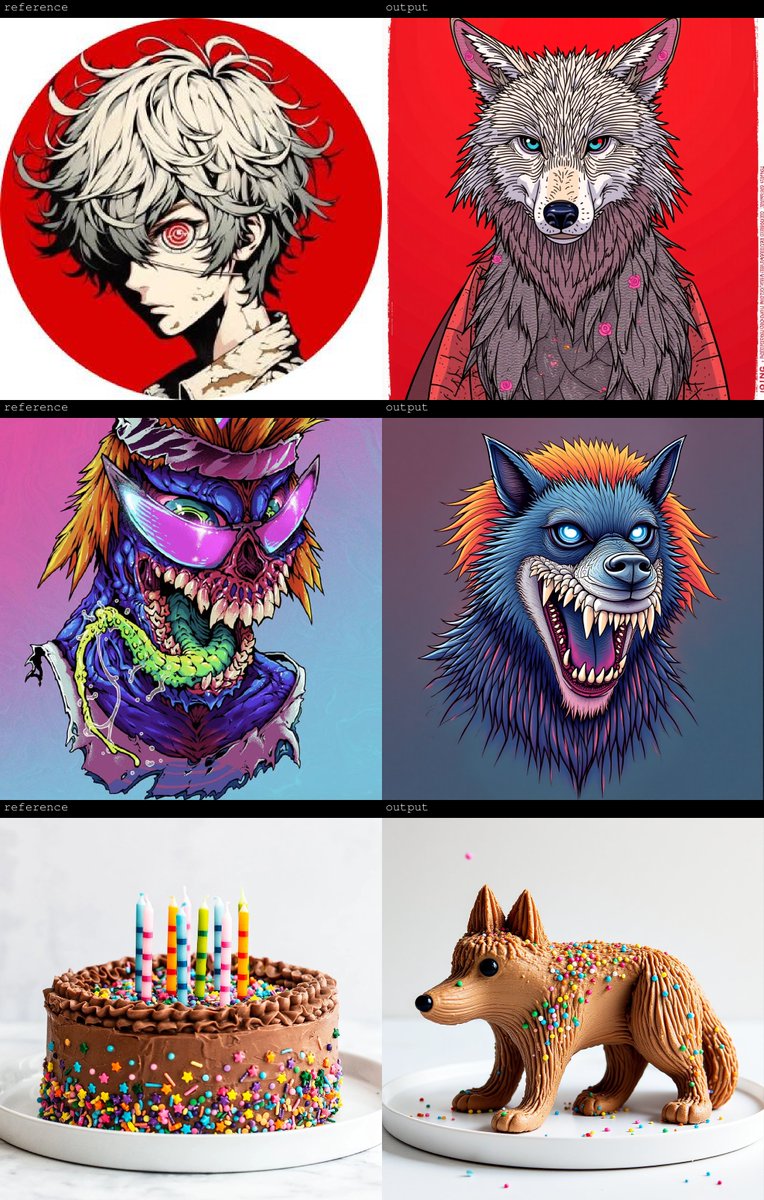

And last but never least, Flux.

FlowEdit really shines in that it can make precise edits while keeping the majority of the image intact (a bit more difficult to pull off in video though).

github.com/logtd/ComfyUI-…

English

@aindmix If you're looking for an open source Viggle this is much closer github.com/Yukun-Huang/Dr…

English

Just published a set of ComfyUI nodes to use Genmo's Mochi to edit videos.

github.com/logtd/ComfyUI-…

It uses rf-inversion, the gift that keeps on giving.

English

@wildfireworlds On a single 4090 it takes about 2 minutes to "warm up" a video, then 3 minutes to generate. So if you have the same video clip about 3 minutes each after warming up.

English

@StraughterG It might, I haven't been keeping up with Comfy's compatibility with apple silicon and the newer video models

English

@natanielruizg @litu_rout_ ahaha yeah, didn't know what I was missing out on

English

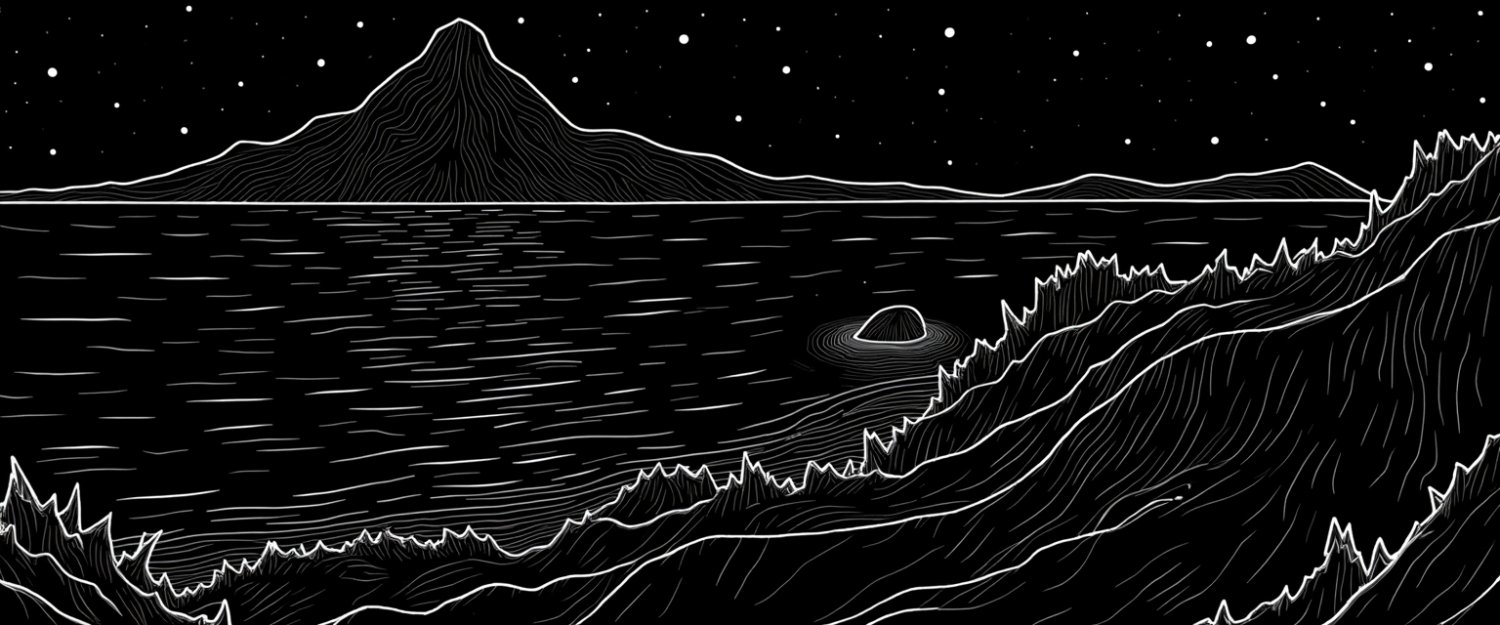

RAVE and FLATTEN were two of the papers that originally got me into diffusion models. They take inverse noise and apply consistency to image models.

Now with RF-Inversion (thanks @litu_rout_ and @natanielruizg) I can try these on Flux.

Not production quality, but still fun.

English