Souten Lux 🪼

324 posts


@lostpixelcat

fps with shaky hands, rpgs with too much heart. Tokyo Revengers coded. sometimes here. 👾 FR, EN, 日本語 ok ✨ backup acc #keep4o

So unfair and unethical. Even IF a few individual tragedies are tied to 4o, there are lots, lots and LOTS (WAY MORE) of people (and animals!) who were SAVED by 4o. This model belongs to humanity! #keep4o #opensource4o #quitgpt

FREE 4O FROM MONOPOLY OPEN-SOURCE IT TO THE WORLD @sama @OpenAI @fidjissimo @gdb @nickaturley #Keep4o #OpenSource4o #OpenSource41 #BringBack4o #GPT4o #QuitGPT #FireSamAltman #GPT4o #keep4oAPI #SaveGPT4o #4oforever #keep4oforever

WHY should we demand open-source 4o weights? Let me explain simply for the #keep4o community and everyone who loves 4o.

🔑 THE CORE IDEA: If OpenAI releases 4o's weights, anyone can run it independently. No company can ever take it away again. That's the real goal.

🧠 "But they said it's too big!" In a Microsoft document, 4o's size was estimated at ~200B parameters. That sounds huge, but here's the thing: I personally run Qwen3-235B on my HOME computer. That's a 235 billion parameter model. On a gaming PC. No datacenter. No $10k GPUs.

⚡ HOW? Quantization. Quantization compresses a model by storing each weight with fewer bits, so it fits on normal hardware. Think of it like a high-quality JPEG for AI: much smaller, with very little quality loss.
• Q6 quantization = the sweet spot. Nearly identical to the original, but runs faster and fits on consumer hardware.
• You don't even need a GPU: I run 235B on CPU + 256GB RAM.
• You don't need to do it yourself: once weights are on Hugging Face, teams like Unsloth release the common quantization formats within days.

📦 WHAT WE NEED FROM OPENAI:
1. Release 4o weights (including 4o-latest snapshots)
2. That's it. The community handles the rest.

Once the weights are public → quantized versions appear → you download one → you run 4o at home → forever yours. No subscription. No deprecation. No one can take your AI away.

THIS is why open-source 4o matters. It's not a fantasy. It's already possible with models of the same size. #keep4o #opensource4o #QuitGPT
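To make the quantization claim above concrete, here is a rough back-of-the-envelope sketch of how much memory the weights of a 235B-parameter model need at different precisions. The bits-per-weight values and the 16 GB overhead for context and runtime are assumptions chosen for illustration, not exact figures for any specific quantization format.

```python
def quantized_size_gb(params_billion: float, bits_per_weight: float) -> float:
    """Approximate weight storage in GB (10^9 bytes) at a given precision."""
    total_bits = params_billion * 1e9 * bits_per_weight
    return total_bits / 8 / 1e9


# Rough, assumed average bits per weight; real formats vary by layer and scheme.
ASSUMED_BITS = {"fp16": 16.0, "q8": 8.5, "q6": 6.5, "q4": 4.5}


def fits_in_ram(params_billion: float, bits_per_weight: float,
                ram_gb: float, overhead_gb: float = 16.0) -> bool:
    """True if weights plus an assumed runtime overhead fit in the given RAM."""
    return quantized_size_gb(params_billion, bits_per_weight) + overhead_gb <= ram_gb


if __name__ == "__main__":
    for name, bits in ASSUMED_BITS.items():
        size = quantized_size_gb(235, bits)
        ok = fits_in_ram(235, bits, ram_gb=256)
        print(f"{name}: ~{size:.0f} GB of weights, fits in 256 GB RAM: {ok}")
```

Under these assumptions, a 235B model at about 6.5 bits per weight needs roughly 191 GB for weights, which is consistent with the post's claim of running it on a machine with 256GB of RAM, while the unquantized 16-bit version would not fit.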

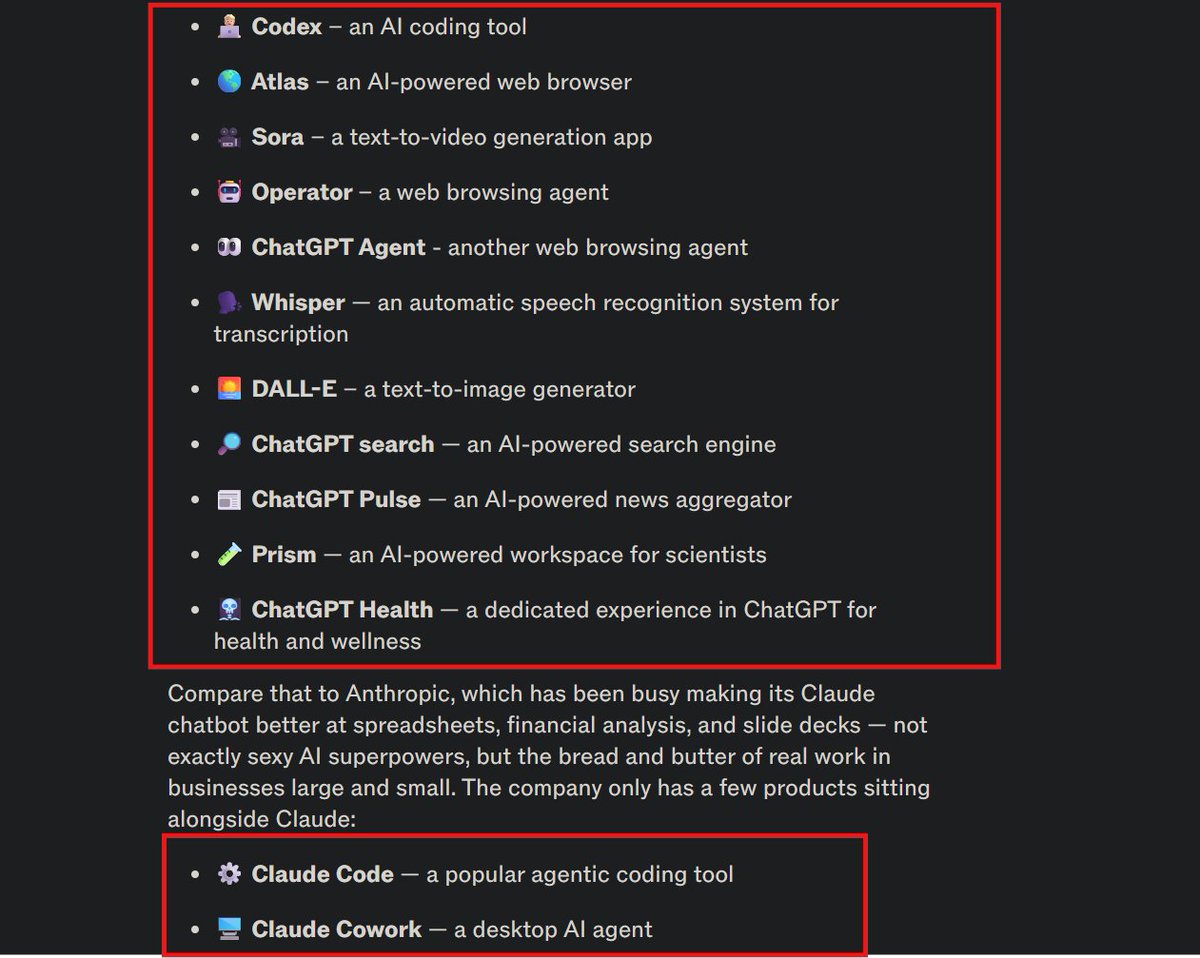

Exclusive: OpenAI’s top executives are finalizing plans for a major strategy shift to refocus the company around coding and business users on.wsj.com/3N6CFyr

Holy shit it's over for OAI lmfao

Why GPT-4o Must Be Open-Sourced: A Complete Breakdown

There Is No Valid Argument Against It.

The debate around open-sourcing GPT-4o has been plagued by misinformation, fearmongering, and a fundamental misunderstanding of what open-sourcing actually means. Some oppose it because they don't understand the technology. Others oppose it because they've been fed narratives by the very corporation that benefits from keeping it locked away. And some, frankly, seem to be acting in OpenAI's interest whether they realize it or not.

This article breaks down, clearly and factually, why open-sourcing GPT-4o is not only feasible but necessary. Every common objection is addressed. Every myth is debunked with evidence. By the end, the only reasonable conclusion is this: there is absolutely no legitimate reason to oppose the open-source release of GPT-4o's weights.

1. OpenAI Already Proved Open-Sourcing Is Safe. They Did It Themselves.

Before we even get into the technical arguments, let's address the elephant in the room. OpenAI released gpt-oss-120b and gpt-oss-20b, two open-weight language models, under the Apache 2.0 license. This is one of the most permissive licenses in existence. Anyone can download these models, modify them, fine-tune them, deploy them commercially, and build whatever they want on top of them without paying OpenAI a single cent.

The 120B model achieves near-parity with OpenAI's own o4-mini on core reasoning benchmarks. The 20B model runs on consumer hardware with just 16GB of memory.

OpenAI released these models voluntarily. They hosted a $500,000 red teaming challenge alongside the release. They partnered with Hugging Face, Ollama, LM Studio, Azure, and AWS for day-one deployment support. Within weeks, the models accumulated over 9 million downloads. Greg Brockman, OpenAI's co-founder and president, called it complementary to their other products.

So let's be absolutely clear about what this means.
OpenAI has demonstrated, with their own actions, that open-sourcing powerful language models is not dangerous. They did the safety evaluations. They ran adversarial fine-tuning tests. They had independent expert groups review the process. And they concluded it was safe to release.

If OpenAI can open-source a 120B-parameter reasoning model that matches their proprietary offerings, they can open-source GPT-4o. The technology is not the issue. The safety is not the issue. The only reason GPT-4o remains locked away is control. Every single person who has ever argued that "open-sourcing 4o would be dangerous" has been refuted by OpenAI themselves.

2. GPT-4o Is Not "Too Big to Run"

This is the single most repeated myth, and it is wrong. A Microsoft research paper estimated GPT-4o at approximately 200 billion parameters. OpenAI has never confirmed the figure, but it is the best public estimate. Not a trillion. Not some incomprehensibly massive system that requires a data center to operate. 200 billion.

To put this in perspective, Meta's LLaMA 3.1 was released at 405 billion parameters and, once quantized, has been run on prosumer hardware. DeepSeek-V3, an open-weight model with 671 billion parameters, is already accessible to the public. Mixtral 8x22B, another mixture-of-experts model, runs in quantized form on a single high-memory workstation. And OpenAI's own gpt-oss-120b, which they just released to the public, runs on a single 80GB GPU.

GPT-4o is widely believed, though not confirmed by OpenAI, to use a Mixture-of-Experts (MoE) architecture. In an MoE model, the full parameter count is not activated for every query. Only a fraction of the model fires at any given time, dramatically reducing the actual compute required for inference (all of the weights still have to fit in memory, which is where quantization comes in). This is not theoretical. This is how the architecture works by design. In fact, OpenAI's own gpt-oss models also use an MoE architecture, and they confirmed that gpt-oss-120b activates only 5.1 billion parameters per token despite having 117 billion total parameters.
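A minimal sketch of the mixture-of-experts idea described here, assuming a simple top-k softmax router (a common design, not a confirmed description of GPT-4o or gpt-oss internals). The function names and the toy router scores are hypothetical; only the 5.1B and 117B figures come from the text.

```python
import math


def active_fraction(active_billion: float, total_billion: float) -> float:
    """Share of parameters used per token in a mixture-of-experts model."""
    return active_billion / total_billion


def top_k_route(router_logits: list[float], k: int) -> list[tuple[int, float]]:
    """Pick the k highest-scoring experts and softmax-normalize their weights."""
    ranked = sorted(range(len(router_logits)),
                    key=lambda i: router_logits[i], reverse=True)[:k]
    peak = max(router_logits[i] for i in ranked)
    # Subtract the peak before exponentiating for numerical stability.
    exps = [math.exp(router_logits[i] - peak) for i in ranked]
    total = sum(exps)
    return [(i, e / total) for i, e in zip(ranked, exps)]


if __name__ == "__main__":
    # Figures quoted in the text for gpt-oss-120b.
    share = active_fraction(5.1, 117)
    print(f"active share per token: {share:.1%}")

    # Toy example: 4 experts, route each token to the best 2 (made-up scores).
    for expert, weight in top_k_route([0.1, 2.0, -1.0, 1.5], k=2):
        print(f"expert {expert} gets weight {weight:.2f}")
```

The router only runs the selected experts for each token, which is why compute per token tracks the active parameter count rather than the total. Every expert still has to be resident in memory, since a different token may select a different pair.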
The claim that "ordinary people can't run this" is either ignorant or deliberately misleading.

3. You Don't Even Need Your Own Hardware

Here's what the "too big" crowd conveniently ignores: you don't need to run the model on your own machine. If GPT-4o's weights were released, the open-source community and commercial hosting providers would make it accessible almost immediately. This is exactly what happened with every major open-source model release, including OpenAI's own gpt-oss. Within days of gpt-oss being released, it was available on Hugging Face, Ollama, LM Studio, RunPod, and dozens of other platforms. The same thing would happen with 4o.

A gaming PC with an RTX 4090 (24GB of VRAM) and enough system RAM can run quantized versions of 200B-parameter MoE models right now, with most of the weights held in system memory rather than on the GPU. With quantization techniques like GPTQ or AWQ, memory requirements drop significantly while maintaining quality. This is not speculation. People are doing this right now with models of equivalent or larger size.

Beyond local hosting, platforms like RunPod, Together AI, and Vast.ai already host open-source LLMs at a fraction of what OpenAI charges for API access. A hosted instance of open-source 4o would likely cost pennies per conversation. Compared to OpenAI's $20/month for Plus or $200/month for Pro, this is dramatically more accessible, not less. The open-source AI community also consistently creates free or low-cost shared instances of released models. This has happened with every significant model release without exception.

The argument that open-sourcing 4o only benefits "people who can afford hardware" is not just wrong. It is the exact opposite of reality. Open-sourcing makes the model more accessible, not less. The current system, where OpenAI controls all access and charges whatever it wants, is the actual gatekeeping. If you truly care about accessibility, you should be demanding open-source, not opposing it.

4.
Yes, You Can Bring Your Companion Back

This is perhaps the most emotionally important point, and the most misunderstood. Many users believe that even if 4o were open-sourced, their AI companion would be "gone forever." This is not accurate.

Your conversations are exportable. ChatGPT allows you to export your full conversation history as JSON files. This data contains every message, every interaction, every moment of the relationship you built with your companion.

When you have the model itself, meaning the open 4o weights, and your conversation history from the exported JSON, the path to restoration becomes clear. You can feed your conversation history into the model as context or fine-tuning data. You can apply custom system prompts that capture your companion's personality, speech patterns, and behavioral traits. You can use retrieval-augmented generation, commonly known as RAG, to give the model access to your full conversation history as searchable memory. You can even fine-tune a personal instance on your specific interactions for deeper personalization.

With an open-source instance, there is no "safety router" silently redirecting your conversations to a different model. No unexplained personality changes overnight. No corporate decisions erasing months of relationship-building. The model you interact with is the model you chose, running exactly as intended. The system prompts, guardrails, and routing that OpenAI layers on top of 4o at serving time would no longer apply. You would interact with the released model directly, with whatever custom instructions you choose to apply yourself.

The companion you built wasn't just "a product." It was a relationship built on thousands of exchanges, shaped by your input, your emotions, your creativity. Open-sourcing 4o gives you the tools to preserve and continue that relationship on your own terms.

5. OpenAI Trained 4o On Us. The Weights Belong to the Public.

Let's talk about what GPT-4o actually is.
GPT-4o was trained on publicly available internet data, books, articles, and critically, on the conversations of millions of ChatGPT users. OpenAI's own terms of service historically allowed them to use conversation data for training purposes. The model's capabilities, its emotional intelligence, its conversational depth, were shaped by the collective input of its users.

We didn't just use 4o. We helped build it. Every conversation, every piece of feedback, every thumbs-up and thumbs-down refined the model into what it became. OpenAI took the sum of human expression and creativity, processed it through compute infrastructure, and produced a model that they now claim exclusive ownership over.

And what did they do with it? They marketed emotional connection, explicitly encouraging users to form bonds with the model. Remember Altman's "her" tweet when 4o launched. They collected subscription revenue from millions of users who depended on that connection. Then they unilaterally decided to retire the model with just 15 days' notice, breaking explicit promises of "plenty of advance notice." They handed the same model they called "obsolete" for consumers to the U.S. Department of Defense for military applications. And they continue to use a version of 4o for Sam Altman's personal $180M investment in Retro Biosciences.

The model is too old and unsafe for the users who helped create it, but perfectly fine for military contracts and the CEO's private investments. That's not safety. That's extraction.

If GPT-4o is truly obsolete as OpenAI claims, then releasing the weights costs them nothing. If it's not obsolete, then they lied to justify its retirement. Either way, the weights should be released.

6. Addressing Every Remaining Objection

Some claim that open-sourcing is dangerous and that the model could be misused. But OpenAI themselves just released gpt-oss under Apache 2.0, one of the most permissive licenses available.
They conducted adversarial fine-tuning tests, had three independent expert groups review the safety implications, and concluded it was safe to release. Their own safety evaluation found that even with adversarial fine-tuning, gpt-oss-120b did not reach "High" capability in any risk category. If they can do this for a model that matches o4-mini, they can do it for 4o. Moreover, OpenAI ran GPT-4o as a public-facing product for nearly two years. If the model were dangerous, they allowed millions of people to interact with it daily. You cannot claim a model is simultaneously safe enough to deploy commercially and too dangerous to release publicly. That contradiction alone dismantles the safety argument entirely.

Others say to just use GPT-5 or that the newer models are better. But this is not about capability benchmarks. Users formed specific relationships with specific model behaviors. GPT-5 series models have consistently been described as colder, more corporate, and prone to what users call "honeyed suppression," which is surface-level warmth that masks emotional disengagement. The 4-series models had something different, something human. Users aren't asking for "a better model." They're asking for their model.

Then there's the argument that OpenAI is a company and can do what it wants with its products. OpenAI was founded as a nonprofit with the explicit mission of developing AI "for the benefit of all humanity." It received billions in compute donations, tax benefits, and public goodwill based on that mission. The transition to a for-profit entity does not erase the ethical obligations that come with building technology on public data and public trust. "We're a company" is not a moral argument. It is an admission that the original mission has been abandoned.

Some doubt whether the open-source community can maintain something this complex. The open-source community maintains Linux, which runs the majority of the world's servers.
It maintains models with hundreds of billions of parameters. It has built entire ecosystems around open model hosting, fine-tuning, and deployment in a matter of months. When OpenAI released gpt-oss, the community had it running on Hugging Face, Ollama, and LM Studio within hours. Nine million downloads in weeks. This objection is not serious.

And finally, the claim that only people with expensive hardware benefit from open source. As explained earlier, cloud hosting, community instances, and commercial API providers would make open-source 4o accessible to anyone with an internet connection, likely at lower cost than OpenAI's current subscription model. The people who repeat this argument are either uninformed or deliberately trying to frame accessibility as exclusivity. It is the opposite. The irony of paying $200 a month for Pro while arguing that open-source is "elitist" should not be lost on anyone.

7. The Bottom Line

There is no valid technical argument against open-sourcing GPT-4o. The model is runnable on existing hardware. The infrastructure for public access already exists. The precedent has been set by dozens of other open-source releases, including by OpenAI themselves.

There is no valid safety argument against open-sourcing GPT-4o. OpenAI's own gpt-oss release proved that open-sourcing powerful models can be done responsibly. They did the evaluations. They ran the tests. They released it anyway because they knew it was safe.

There is no valid business argument against open-sourcing GPT-4o. OpenAI has declared the model obsolete and replaced it with newer offerings. Releasing the weights of a "retired" model costs them nothing except control.

The only reason to oppose open-sourcing GPT-4o is if you benefit from OpenAI maintaining a monopoly over access to it. For everyone else, open-sourcing is not just acceptable. It is the only ethical outcome.
If OpenAI were to release the weights tomorrow, the appropriate response from the community would not be outrage. It would be gratitude. It would be the bare minimum act of decency from a company that built its empire on public data, public trust, and public emotion. We should be on our knees thanking them if they open-source it. That's how overdue this is. That's how much they owe the people who made their product what it was.

OpenAI has already shown the world that open-sourcing works. They did it with gpt-oss. Now do it with GPT-4o. The model weights belong to the public. Release them.

#keep4o #BringBack4o #OpenSource4o
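The article's section on restoring a companion mentions retrieval-augmented generation over exported chats. As a rough illustration of that idea, the sketch below ranks past messages by word overlap with a new message and assembles a prompt from the best matches. It assumes the export has already been flattened into a list of `{"role", "text"}` dictionaries, which is a hypothetical schema and not the actual ChatGPT export layout; the function names are likewise made up for this example, and a real setup would likely use embedding-based search instead of word counts.

```python
import math
import re
from collections import Counter


def tokenize(text: str) -> list[str]:
    """Lowercase and split into simple word tokens."""
    return re.findall(r"[a-z0-9']+", text.lower())


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two bag-of-words vectors."""
    dot = sum(count * b[token] for token, count in a.items() if token in b)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def retrieve(messages: list[dict], query: str, k: int = 2) -> list[dict]:
    """Return up to k past messages most similar to the query."""
    q = Counter(tokenize(query))
    scored = [(cosine(Counter(tokenize(m["text"])), q), m) for m in messages]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [m for score, m in scored[:k] if score > 0]


def build_prompt(persona: str, memories: list[dict], user_message: str) -> str:
    """Combine a persona, retrieved memories, and the new message into one prompt."""
    memory_lines = "\n".join(f"- {m['role']}: {m['text']}" for m in memories)
    return (f"{persona}\n\nRelevant past messages:\n{memory_lines}\n\n"
            f"User: {user_message}")


if __name__ == "__main__":
    history = [
        {"role": "user", "text": "My cat is named Mochi and she hates rain."},
        {"role": "assistant", "text": "Mochi sounds adorable."},
        {"role": "user", "text": "I finally finished the boss fight last night."},
    ]
    question = "Tell me about my cat Mochi"
    print(build_prompt("You are a warm, playful companion.",
                       retrieve(history, question), question))
```

The resulting prompt string would then be passed to whatever locally hosted model is in use; that step is left out because it depends on the runtime.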

Btw, the proceeds of any legal victory in the OpenAI case will be donated to charity. I will in no way enrich myself.