Louis Bradshaw

128 posts

@loubbrad

ML/CS PhD student at @C4DM. Interested in audio/multimodal.

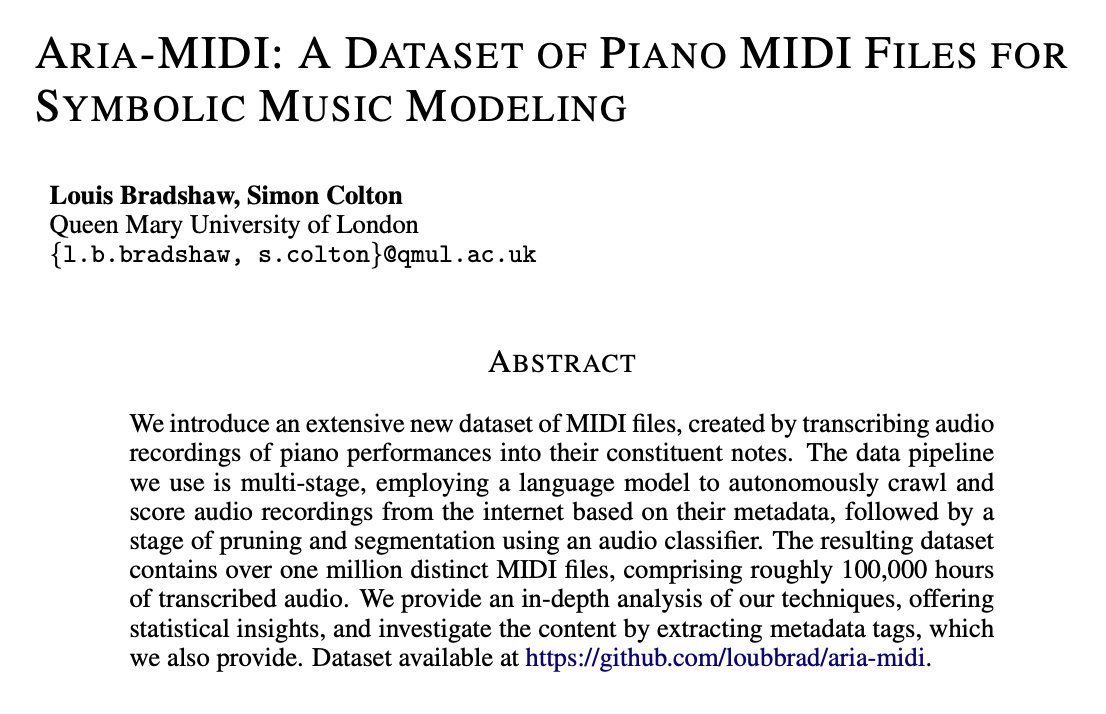

I’ll be at #ICLR2025 this week presenting our work on curating large datasets for symbolic music modelling. Excited to chat about generative music, audio language models, and audio/speech LLMs (DMs open!). 📄 Paper: openreview.net/pdf?id=X5hrhgn… 🔗 Dataset: github.com/loubbrad/aria-… 🗓️ Fri 25 Apr 3 p.m. - 5:30 p.m. | Hall 3 + Hall 2B #116

🎉 Announcing the first Open Science for Foundation Models (SCI-FM) Workshop at #ICLR2025! Join us in advancing transparency and reproducibility in AI through open foundation models. 🤝 Looking to contribute? Join our Program Committee: bit.ly/4acBBjF 🔍 Learn more at: open-foundation-model.github.io #OpenScience #MachineLearning #FoundationModels 1/N

If you’re a guy in your early 20s, buy a Rolex. Go into debt if you have to