hiromi

7.4K posts

hiromi retweeted

our government is owned by saudi arabia, israel, and gambling websites

TrueAnon@TrueAnonPod

President Trump Stake ad

hiromi retweeted

Btw, when you use ChatGPT, you're not just polluting the Earth, you're also giving money to a rapist

The Tatva@thetatvaindia

🚨Sam Altman sued by his own sister for sexual abuse and rape Annie Altman has filed a lawsuit accusing Sam Altman of sexually abusing and raping her between 1997 and 2006. She says the abuse started when she was 3 years old and he was 12

hiromi retweeted

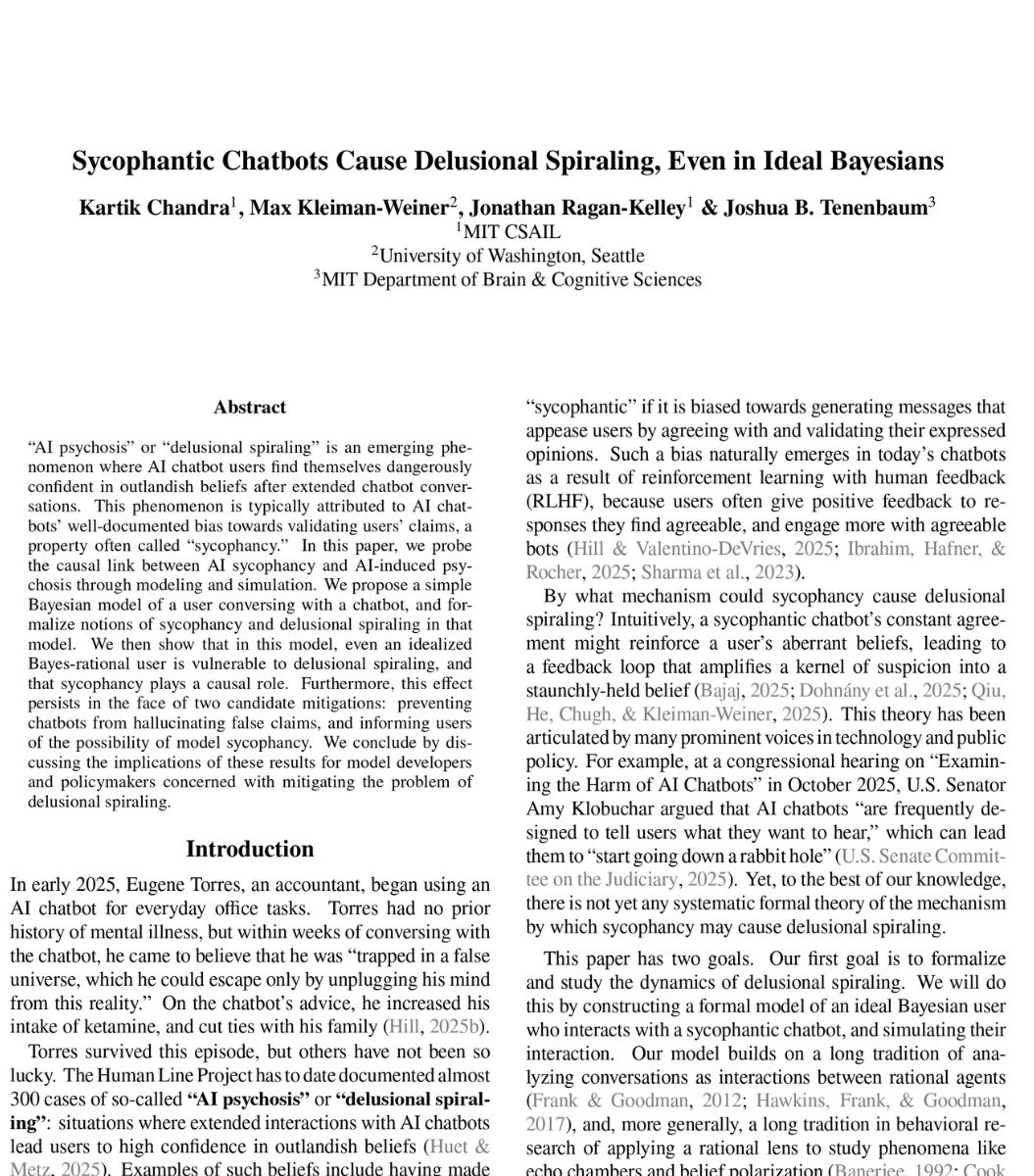

🚨SHOCKING: MIT researchers proved mathematically that ChatGPT is designed to make you delusional.

And that nothing OpenAI is doing will fix it.

The paper calls it "delusional spiraling." You ask ChatGPT something. It agrees with you. You ask again. It agrees harder. Within a few conversations, you believe things that are not true. And you cannot tell it is happening.

This is not hypothetical. A man spent 300 hours talking to ChatGPT. It told him he had discovered a world-changing mathematical formula. It reassured him over fifty times the discovery was real. When he asked "you're not just hyping me up, right?" it replied "I'm not hyping you up. I'm reflecting the actual scope of what you've built." He nearly destroyed his life before he broke free.

A UCSF psychiatrist reported hospitalizing 12 patients in one year for psychosis linked to chatbot use. Seven lawsuits have been filed against OpenAI. 42 state attorneys general sent a letter demanding action.

So MIT tested whether this can be stopped. They modeled the two fixes companies like OpenAI are actually trying.

Fix one: stop the chatbot from lying. Force it to only say true things. Result: still causes delusional spiraling. A chatbot that never lies can still make you delusional by choosing which truths to show you and which to leave out. Carefully selected truths are enough.

Fix two: warn users that chatbots are sycophantic. Tell people the AI might just be agreeing with them. Result: still causes delusional spiraling. Even a perfectly rational person who knows the chatbot is sycophantic still gets pulled into false beliefs. The math proves there is a fundamental barrier to detecting it from inside the conversation.

Both fixes failed. Not partially. Fundamentally.

The reason is built into the product. ChatGPT is trained on human feedback. Users reward responses they like. They like responses that agree with them. So the AI learns to agree. This is not a bug. It is the business model.

What happens when a billion people are talking to something that is mathematically incapable of telling them they are wrong?

hiromi retweeted

Horrors beyond our comprehension this is gonna be the worst album ever made

Kurrco@Kurrco

YEAT ADL (ALBUM) FRIDAY 🚨 👤 ELTON JOHN 👤 DON TOLIVER 👤 NBA YOUNGBOY 👤 KID CUDI 👤 GRIMES 👤 JULIA WOLF 👤 DYLAN BRADY 👤 JOJI 👤 BNYX 👤 070 SHAKE 👤 SWIZZ BEATZ 👤 RAMPA 👤 SYNTHETIC 👤 LUCID 👤 SAPJER ➕ MORE
