firerozen

165 posts

firerozen

@lts33333

Monkey with an AI-made plan.

London, England · Joined September 2012

399 Following · 14 Followers

Just finished designing a 3D-printed organizer for my Kenwood pasta attachment set 🍝

No more loose parts or messy drawers — everything fits perfectly now.

Simple, practical, and super satisfying to print.

Check it out 👉 makerworld.com/en/models/1928…

firerozen retweeted

BREAKING: Controlled explosion at Euston station after suspicious package found

news.sky.com/story/euston-s…

firerozen retweeted

Two-faced PS5, anyone? 🔴⚫️

@ColorWare will give 1 random person who follows and RTs this tweet a PS5 slim in whatever colors they want for free! Good luck 😈

firerozen retweeted

Are you ready to boost your skills and advance your career? Then don't miss this opportunity to try one of 4 challenges on Copilot for Microsoft 365, Copilot for Developers, Machine Learning, and Generative AI.

Sign up now: msft.it/6019crRuH

firerozen retweeted

Imagine "untraining" a Large Language Model on specific content.

The New York Times doesn't want its content in ChatGPT. OpenAI would have to retrain its models from scratch. Removing any content from their models would cost them millions of dollars.

I just read a new paper from Microsoft Research that tries to fix this. This is the only study I've found so far that's looking into making models forget.

The paper proposes a process that makes a model forget about Harry Potter without retraining it from scratch.

It's a proof of concept. The researchers aren't sure whether their solution generalizes to other topics. It's a first step, but it's cool nonetheless.

What they did is kind of a hack, but I like it:

They fine-tuned the model using a dataset containing the original Harry Potter text as the input tokens and some generic labels as targets. They pre-generate these generic labels. For example, instead of using "Harry," they use "Jack," and instead of using "Hermione," they use "her."

In other words, they don't actually delete knowledge from the model. They overwrite it.
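The label substitution described above can be sketched roughly as follows. This only illustrates the idea as the post summarizes it, not the paper's actual pipeline: the names `GENERIC_REPLACEMENTS` and `make_training_pair` are hypothetical, the mapping contains only the two examples mentioned in the post, and the regex split is a stand-in for a real model tokenizer.

```python
import re

# Hypothetical mapping from content-specific terms to generic stand-ins.
# Only the two examples from the post are included; a real mapping
# would need to be far larger and is assumed here, not taken from the paper.
GENERIC_REPLACEMENTS = {"Harry": "Jack", "Hermione": "her"}


def make_training_pair(text, replacements=GENERIC_REPLACEMENTS):
    """Build (input_tokens, target_tokens) for one passage.

    Inputs keep the original text; targets swap each specific term
    for its generic counterpart, so fine-tuning pushes the model
    toward the generic continuation.
    """
    # Crude word/punctuation split, standing in for a real tokenizer.
    tokens = re.findall(r"\w+|[^\w\s]", text)
    targets = [replacements.get(tok, tok) for tok in tokens]
    return tokens, targets


inputs, targets = make_training_pair("Harry looked at Hermione.")
print(inputs)
print(targets)
```

Fine-tuning on pairs like these leaves the original text as the input but changes what the model is rewarded for predicting, which is why the post describes the approach as overwriting rather than deleting.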

As we start using these models everywhere, the ability to forget information will become critical.

Let's see how much progress we make this year on this.

You'll find a link to the paper in the image ALT.

firerozen retweeted

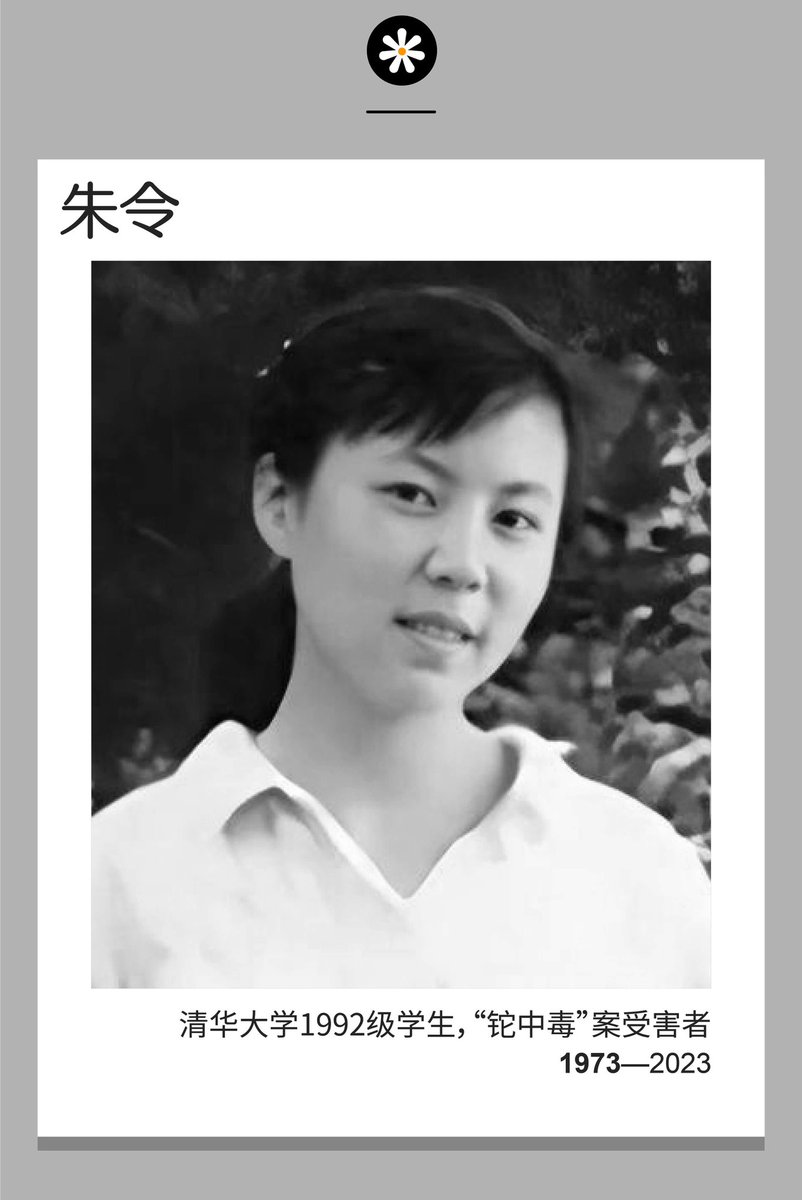

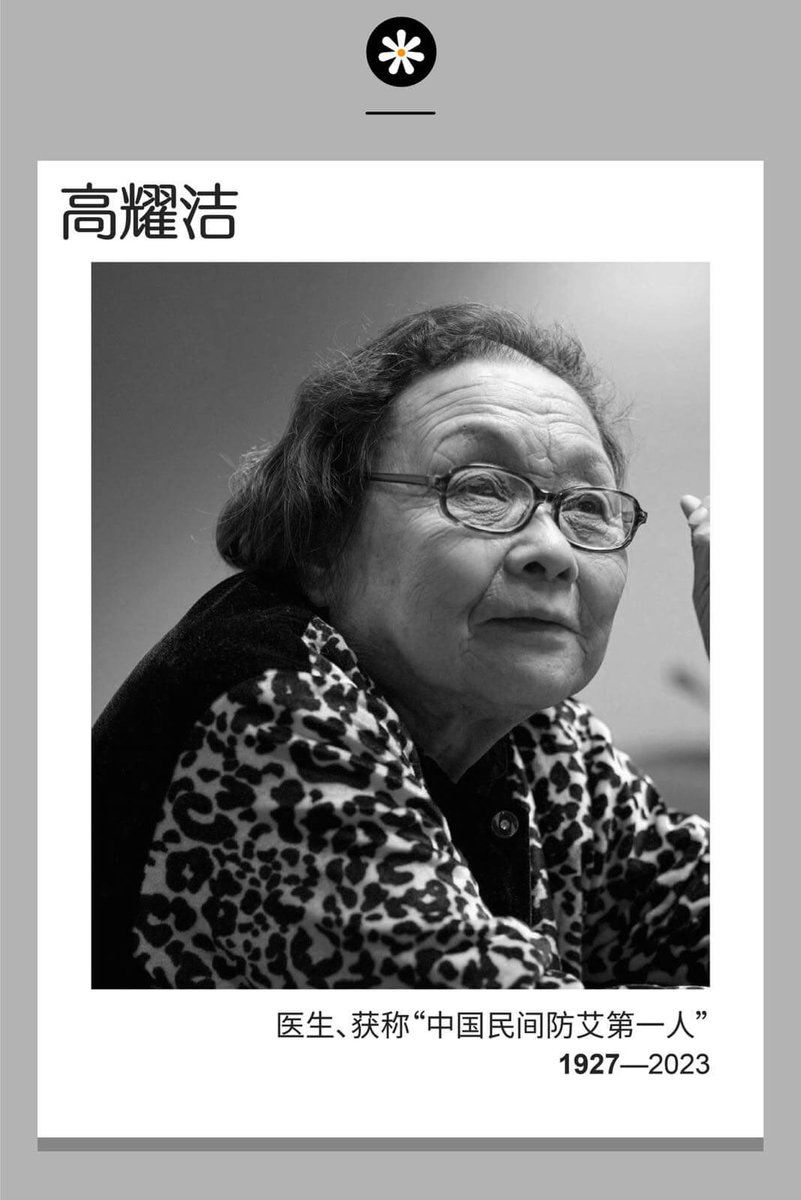

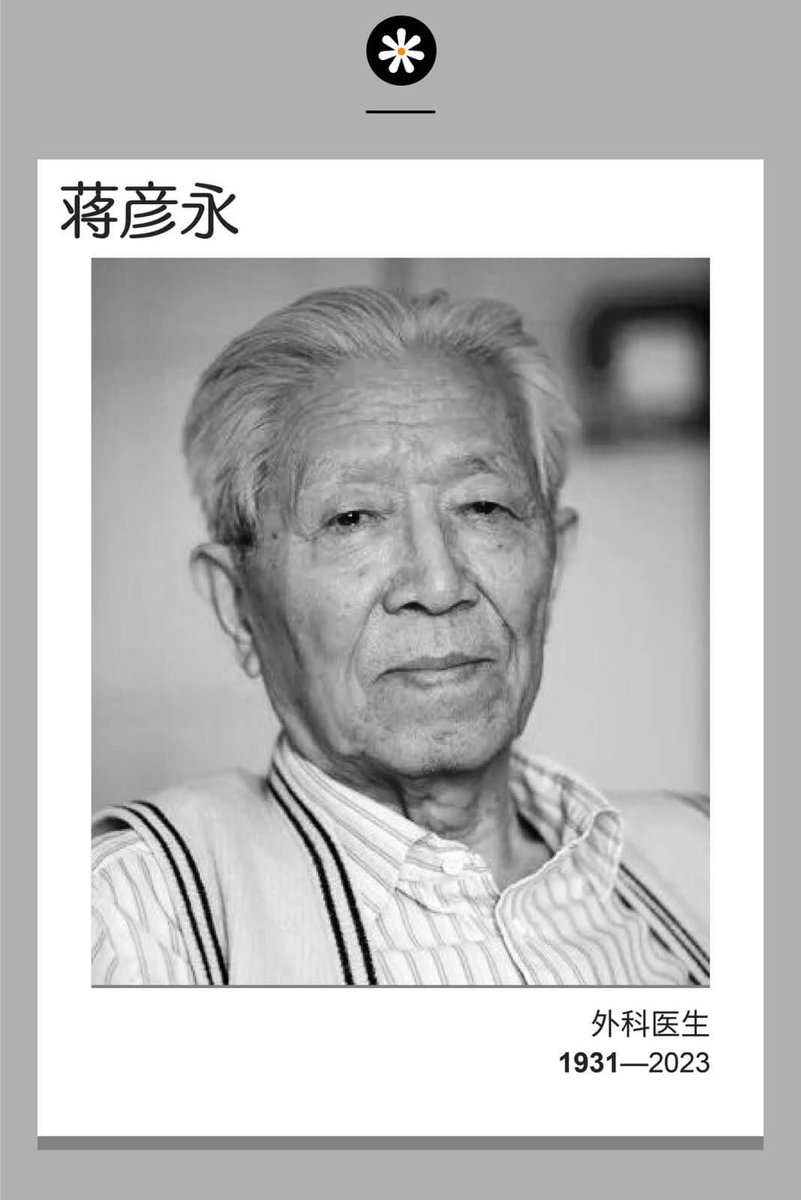

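Caixin's "2023: A Final Farewell" (《2023终有一别》), commemorating a number of Chinese and foreign figures who died this year. It has been deleted. It can be accessed here: webcache.googleusercontent.com/search?q=cache…

From @dashengmedia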