Lupin Lin

it's still experimental, so we hide it a bit, but in the Codex app, try:

> what have i been doing very inefficiently on my computer (according to Chronicle). make some recommendations. be direct. tell me what i need to hear.

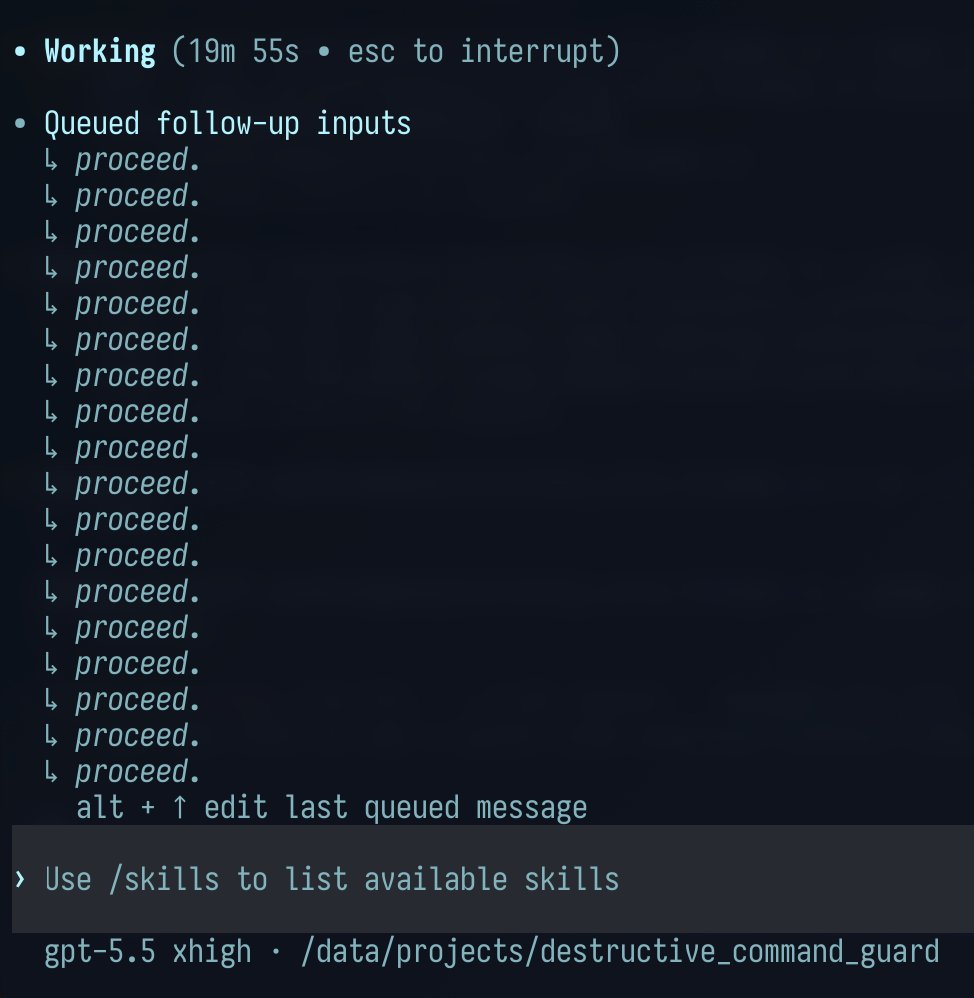

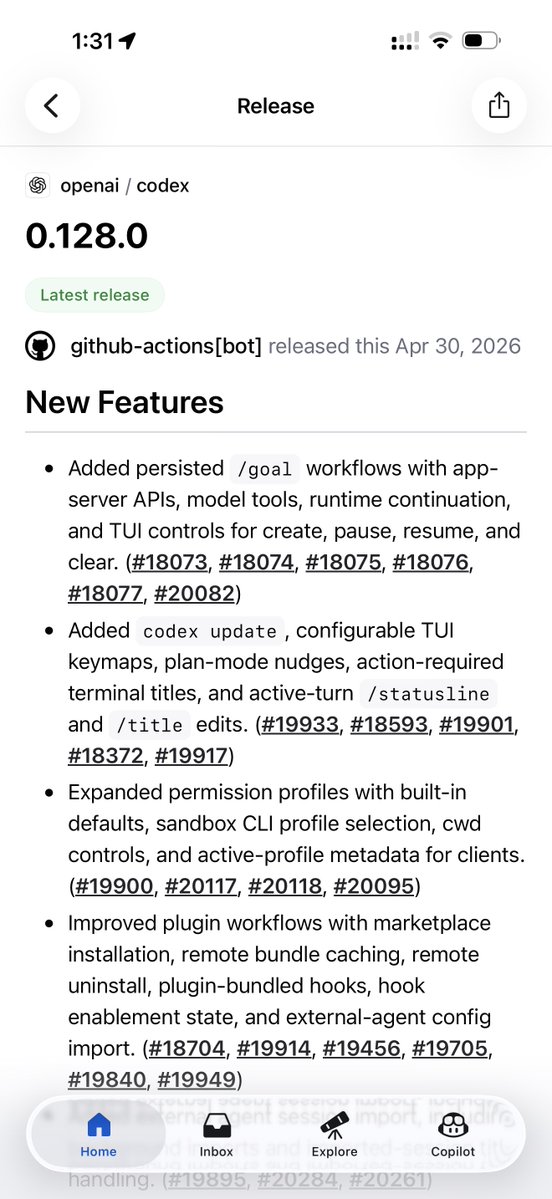

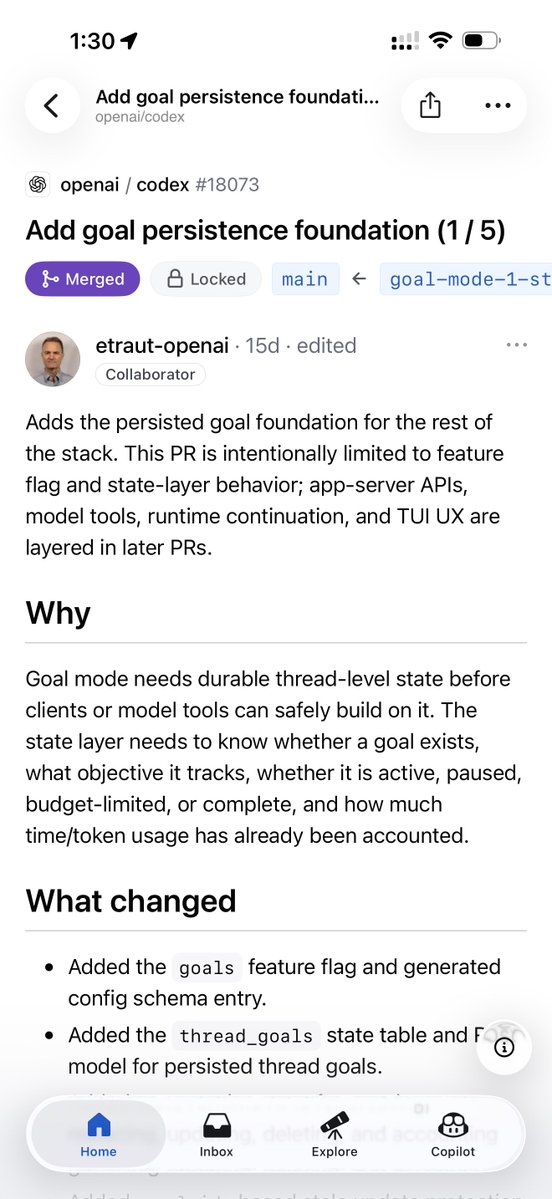

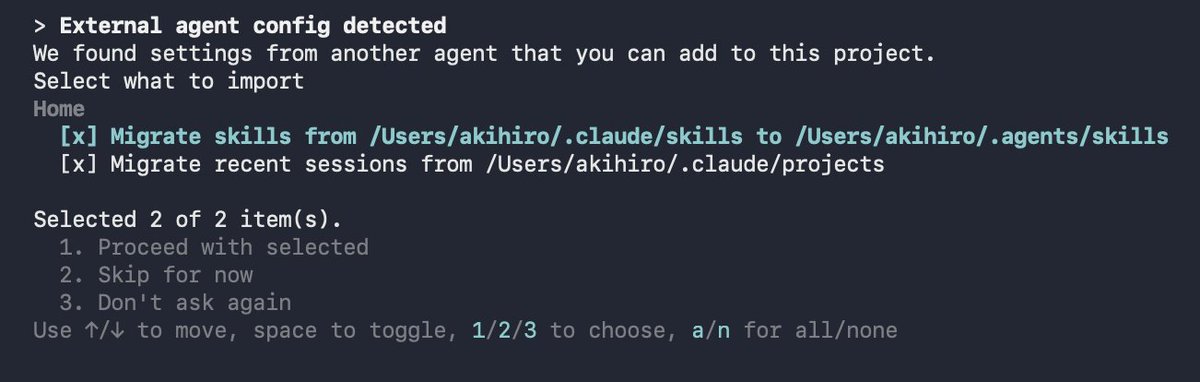

/goal also lands in Codex CLI 0.128.0. Our take on the Ralph loop: keep a goal alive across turns. Don't stop until it's achieved. Built by my co-worker and OpenAI mentor Eric Traut, aka the Pyright guy. One of the GOATs I get to work with daily.
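A rough sketch of the idea, not Codex's actual implementation: keep re-running an agent turn against the same goal until a completion check passes. `pursue_goal`, `run_turn`, and `goal_achieved` are hypothetical names, and the `max_turns` cap is an added assumption so the loop always terminates.

```python
from typing import Callable, List

def pursue_goal(goal: str,
                run_turn: Callable[[str, List[str]], str],
                goal_achieved: Callable[[str, List[str]], bool],
                max_turns: int = 20) -> List[str]:
    """Keep one goal alive across turns; stop only once it is achieved.

    run_turn and goal_achieved are hypothetical hooks. max_turns is an
    assumed safety cap, not part of the described feature.
    """
    transcript: List[str] = []
    for _ in range(max_turns):
        # Every turn sees the original goal plus everything done so far.
        transcript.append(run_turn(goal, transcript))
        if goal_achieved(goal, transcript):
            return transcript
    raise RuntimeError(f"goal not achieved within {max_turns} turns")

# Toy usage: the "agent" fixes one failing test per turn.
failing = ["test_a", "test_b", "test_c"]

def fix_one(goal: str, transcript: List[str]) -> str:
    return f"fixed {failing.pop()}"

def all_green(goal: str, transcript: List[str]) -> bool:
    return not failing

steps = pursue_goal("make all tests pass", fix_one, all_green)
print(len(steps))  # 3
```

The key design choice is that the stopping condition is a goal check, not "the model produced a reply"; the turn cap only guards against a goal that can never be met.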

Small Language Models are the Future of Agentic AI! Glean just released Waldo, a 30B agentic search model that runs before frontier LLMs.

Search is where most agentic work begins. You ask about a project, customer, process, or decision. The agent searches internal docs, reads the results, refines its queries, and searches again. Sometimes one search; often several iterative loops. Get the search wrong (miss a critical document, surface irrelevant results) and the entire response fails. Search planning is the foundation for AI agents.

But frontier models are doing two different jobs at once: search planning (which queries to run, when to stop, whether there is enough evidence) and synthesis (reasoning over the results to generate an answer). The first job is pattern matching. The second needs deep reasoning.

Waldo splits these. It's a 30B MoE model built on NVIDIA Nemotron 3 Nano that handles only the search-planning layer. It runs first, decides which queries to run across Glean Search, Employee Search, and Web Search, determines when it has enough context, then hands off to the frontier model with the retrieved context already in place.

Key architecture:
• Run Waldo first, before the frontier model. The alternative (a sub-agent design) would require the frontier model to call Waldo as a tool, wait for the results, then respond: two frontier calls. Running Waldo first cuts it to one.
• Training Phase 1 (DPO): The model learned from production tool-use patterns when to search, when to stop, and when to hand off. The training data captured which tools were called, in what sequence, and whether the plan succeeded.
• Training Phase 2 (RL): The model was trained against production queries and rewarded based on document recall: whether its searches surfaced the same documents that appeared in successful final answers. This refined its ability to find relevant documents in fewer search iterations.
• Results: more than 10x faster per call (250 ms vs. 3 s). Half of all queries run on this fast path.
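To make the call-count argument concrete, here is a toy Python sketch of the two control flows. It is not Glean's code: `plan_and_search`, the toy corpus, and the stopping rule (stop once `min_docs` unique documents are found or `max_queries` is spent) are hypothetical stand-ins for Waldo and the Glean Search / Employee Search / Web Search tools.

```python
from dataclasses import dataclass
from typing import Callable, List

@dataclass
class FrontierModel:
    """Stand-in for a frontier LLM; it only counts how often it is called."""
    calls: int = 0

    def respond(self, prompt: str) -> str:
        self.calls += 1
        return f"answer to: {prompt[:40]}"

def plan_and_search(question: str,
                    search: Callable[[str], List[str]],
                    min_docs: int = 3,
                    max_queries: int = 4) -> List[str]:
    """Hypothetical small-model planner: search, refine, stop on enough evidence."""
    docs: List[str] = []
    query = question
    for i in range(max_queries):
        for doc in search(query):
            if doc not in docs:      # keep unique documents, in order found
                docs.append(doc)
        if len(docs) >= min_docs:    # assumed "enough evidence" rule
            break
        query = f"{question} (refinement {i + 1})"  # assumed refinement step
    return docs

def planner_first(question: str, search: Callable[[str], List[str]],
                  frontier: FrontierModel) -> str:
    # The planner runs before the frontier model, so one frontier call suffices.
    context = plan_and_search(question, search)
    return frontier.respond(f"{question}\ncontext: {context}")

def sub_agent(question: str, search: Callable[[str], List[str]],
              frontier: FrontierModel) -> str:
    # Call 1: the frontier model decides to invoke the planner as a tool.
    frontier.respond(f"{question}\n(decide whether to call the search tool)")
    context = plan_and_search(question, search)
    # Call 2: the frontier model answers using the tool results.
    return frontier.respond(f"{question}\ncontext: {context}")

# Toy corpus keyed by exact query string (made-up data).
corpus = {
    "Q3 renewal for Acme": ["crm-note-12", "contract-acme"],
    "Q3 renewal for Acme (refinement 1)": ["contract-acme", "email-thread-7"],
}

def toy_search(query: str) -> List[str]:
    return corpus.get(query, [])

a, b = FrontierModel(), FrontierModel()
planner_first("Q3 renewal for Acme", toy_search, a)
sub_agent("Q3 renewal for Acme", toy_search, b)
print(a.calls, b.calls)  # 1 2
```

The only structural difference is where the planner sits: in the planner-first flow, the frontier model never spends a call deciding whether to search.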
The pattern: specialized small models for focused, repetitive tasks. Frontier models for reasoning and synthesis. Waldo proves small language models are faster, cheaper, and just as effective for repetitive, focused tasks. I've shared the link in the replies!
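The two Waldo training phases can be illustrated by their objectives. The DPO loss below is the standard DPO objective; how Glean built preference pairs from production traces (for example, a successful plan as "chosen" and a failed one as "rejected") is an assumption here. `document_recall` is one plausible reading of the "document recall" reward described in the post, not Glean's published reward function.

```python
import math
from typing import Iterable

def dpo_loss(logp_chosen: float, logp_rejected: float,
             ref_logp_chosen: float, ref_logp_rejected: float,
             beta: float = 0.1) -> float:
    """Standard DPO loss for one preference pair: -log sigmoid(beta * margin).

    Inputs are sequence log-probabilities under the policy being trained and
    under a frozen reference model. Which plan counts as "chosen" vs
    "rejected" (e.g. succeeded vs failed) is an assumption in this sketch.
    """
    margin = beta * ((logp_chosen - ref_logp_chosen)
                     - (logp_rejected - ref_logp_rejected))
    # -log(sigmoid(m)) == softplus(-m), computed in a numerically stable way.
    x = -margin
    return max(x, 0.0) + math.log1p(math.exp(-abs(x)))

def document_recall(surfaced: Iterable[str], reference: Iterable[str]) -> float:
    """Assumed RL reward: share of documents in a successful final answer
    that the planner's searches also surfaced."""
    ref = set(reference)
    if not ref:
        return 0.0  # assumption: no reference documents means no reward signal
    return len(ref & set(surfaced)) / len(ref)

# Toy usage with made-up document IDs.
surfaced = ["crm-note-12", "contract-acme", "email-thread-7"]
reference = ["contract-acme", "email-thread-7", "pricing-sheet"]
print(round(document_recall(surfaced, reference), 3))  # 0.667
print(round(dpo_loss(0.0, 0.0, 0.0, 0.0), 4))  # 0.6931 (log 2: no preference yet)
```

A recall-only reward says nothing directly about the number of searches; in this sketch, any pressure toward fewer iterations would have to come from elsewhere in the training setup.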

Whoa, amazing! By combining HyperFrames + ElevenLabs, I got a video like this out of Codex from a single prompt. It's not AI video generation, so no need to worry. (Sound on)