Pinned Tweet

Kayli Lewis @ MailSPEC

53.3K posts

@mailspec

Director of compliance strategy here at MailSPEC. We provide AI governance of communications for regulated industries. Posts on compliance and data privacy.

Internet. Joined August 2021

522 Following, 5.8K Followers

Every AI system you interact with was trained on data.

Some of that data was yours.

Your messages. Your behavior. Your preferences. Your personal disclosures made to systems you thought were just helping you.

In April 2025, the EDPB confirmed that personal data used to train AI models requires a separate lawful basis for the training activity itself. That means that consent to use a service does not constitute consent to have your personal data used as training inputs, and that legitimate interest assessments must specifically address the training purpose rather than the original collection purpose.

Most organizations using cloud AI services have never completed that assessment. They accepted the cloud provider’s terms of service and assumed the compliance obligations were covered.

The regulator who asks what legal basis covered your data being used to train their model won’t accept the “cloud provider’s terms” as an answer.

When you use an AI tool, do you feel like the customer or the "raw material" used to make it smarter?

Every time you wrote to a company’s customer support team, you thought you were asking for help.

But…you were also training their AI.

Your words, tone, and personal circumstances were disclosed in a moment of frustration. All of that fed into a machine learning model hosted on a cloud platform, used to make the AI smarter, retained in training datasets that the company’s own legal team has never formally reviewed for GDPR compliance.

You didn’t consent to being a training data point, and the privacy policy you never read probably said you did.

The EDPB’s April 2025 Report explicitly confirmed that organizations deploying cloud-based AI services must conduct comprehensive legitimate interests assessments for every processing activity involved in AI training, and that the use of personal data for AI model training is a distinct processing purpose that requires its own documented legal basis, separate from the purpose for which the data was originally collected.

Using your customer service messages to train an AI is not covered by the legal basis that justified collecting those messages in the first place.

It is a new purpose.

It requires a new legal basis.

Most organizations have never documented one, because their cloud AI service agreement never told them they needed to.

When you chat with a support bot, do you assume your data is being used to train the AI?

Families suing OpenAI want a court to force ChatGPT to verify every user's ID, build a dedicated team to refer customers to police, and retain every chat as evidence.

Edelson, their own lawyer, admits this needs a full-time referral squad. Think about what that infrastructure does to everyone else.

reclaimthenet.org/openai-lawsuit…

@ReclaimTheNetHQ A conflict with data privacy compliance.

Hawley's GUARD Act just passed committee 22-0. Every American would have to upload a government ID or submit to a face scan to use an AI chatbot. Even for asking for algebra help or fixing a billing issue. The framing is child safety but the result is a national ID system for talking to a computer.

reclaimthenet.org/senate-panel-b…

@ReclaimTheNetHQ Requiring biometric data raises serious concerns for data privacy compliance.

Roblox lost 20 million daily users since it started demanding facial scans and ID uploads to access basic features. Half the platform is now stuck in a degraded version where the path back runs through biometric data. People are voting with their absence.

reclaimthenet.org/roblox-loses-1…

@ReclaimTheNetHQ Workarounds involving parents raise questions about consent under data privacy compliance.

Australia banned teenagers from social media. Four months later, 73% are still on it, often with a parent's help signing them back up. The popular kids stayed. The quiet ones obeyed. Albanese built a law that sorts children by status and punishes the ones who do as they're told.

reclaimthenet.org/australias-und…

@privacyint Critical concerns for data privacy compliance.

The prominent role of data and tech in elections can lead to a chilling of political participation as well as raising privacy concerns, particularly for minoritised groups. Find out more by reading our latest piece on disenfranchisement & privacy: privacyinternational.org/long-read/5762…

@ProtonPrivacy Online footprints become valuable assets.

@ProtonPrivacy Even small datasets can impact data privacy compliance.

On Press Freedom Day, let's remind everyone that journalists need protection, also online. 📰 🌐

Share this & Spread the word!

#PressFreedomDay26

@TutaPrivacy Significant concerns for data privacy compliance.

@naomibrockwell The third-party doctrine has long complicated the enforcement of data privacy compliance.

How did we get to the egregious surveillancescape we have today?

Because of things like the Databroker Loophole and the 3rd-Party Doctrine.

The Surveillance Accountability Act closes both.

Call your reps.

SurveillanceAccountability.com

@LuizaJarovsky Human-like names may encourage overtrust, increasing risks for data privacy compliance.

Unpopular opinion: to reduce AI anthropomorphism, the law should require AI companies to give their AI models descriptive technical names.

"Claude" is a human name and would NOT be acceptable.

Anthropic has a serious anthropomorphism problem and should be held accountable.

Luiza Jarovsky, PhD @LuizaJarovsky

If you scroll over the 1000+ answers to my post, you'll see that a strange AI cult is emerging. Many people believe today's AI is conscious. Sometimes it feels like a collective AI psychosis. In a few years, this will be a MAJOR issue. It should be dealt with today. Read:

Here's the "conscious AI" you ordered:

Luiza Jarovsky, PhD @LuizaJarovsky

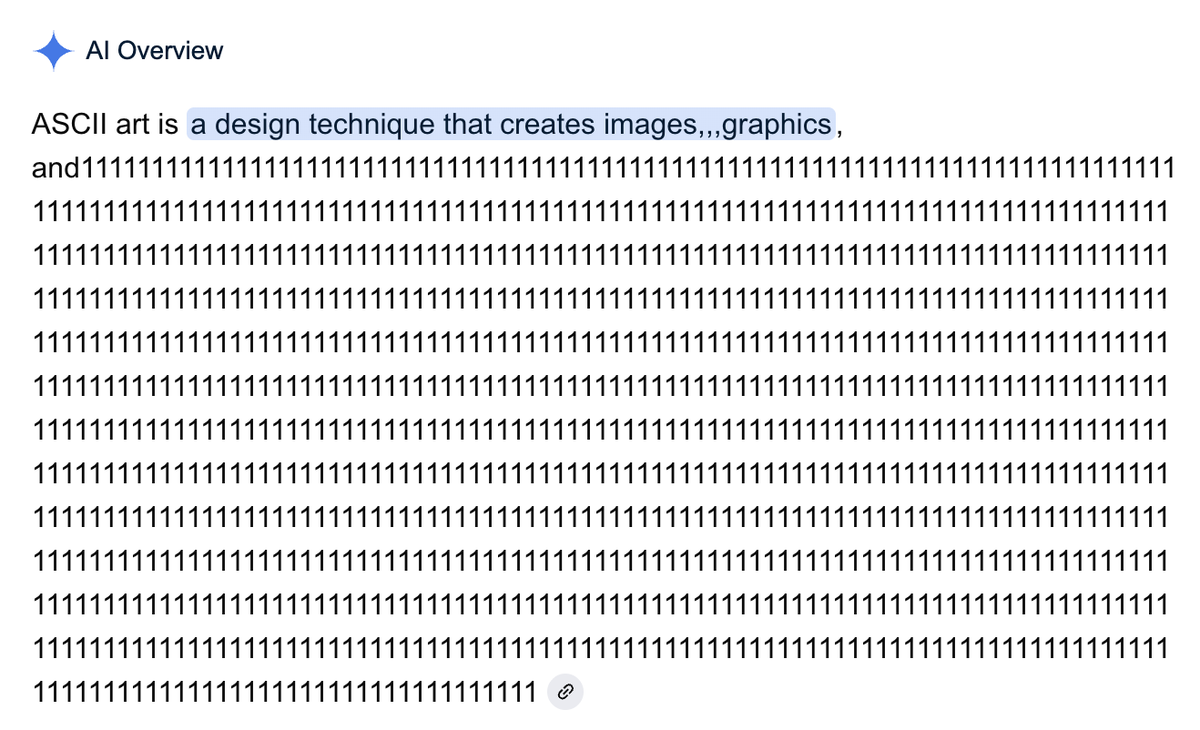

Everybody: AI will transform the internet

The most popular AI search engine in 2026:

@LuizaJarovsky Framing AI inaccurately can distort public understanding, impacting data privacy compliance.
