mateja retweeted

mateja

67 posts

mateja retweeted

The billion-dollar AI startup. Aaru Co-Founders @seekingtau, @virtualned, & @johncolekessler on refining the science of prediction: cnb.cx/3PuKcHV

mateja retweeted

there is no better team.

- /simulators

- monorepo btw

- ship faster than light speed (Einstein-Rosen Bridge to main)

- moggmaxx Eisenhower matrices for architectural decisions (modern stack)

LGTM

Cam Fink @seekingtau

Hiring someone who is:

- chronically online

- in touch with the news

- monitoring the situation

- interested in simulating

dm me

mateja retweeted

Aaru recently reached a $1 billion valuation, making it one of a growing crop of hot companies led by people who have barely cracked their 20s on.wsj.com/4b4wunG

mateja retweeted

Thank you to the @WSJ and @VranicaWSJ for writing the first page in the story of Aaru. More soon.

mateja retweeted

Aaru recently reached a $1 billion valuation, making it one of a growing crop of hot companies led by people who have barely cracked their 20s on.wsj.com/4cADDx7

mateja retweeted

@ConsumerRick @ProofofMaro monte carlo, entropy, information theory, rl something something

@emilyreadey We’re seeing the effortless front built on thousands of hours on the ice; you’d likely feel differently watching her train, fall, and struggle to land.

Training is often exhausting, while competition is exciting: it's enjoyable to show off years of fine-tuning your craft.

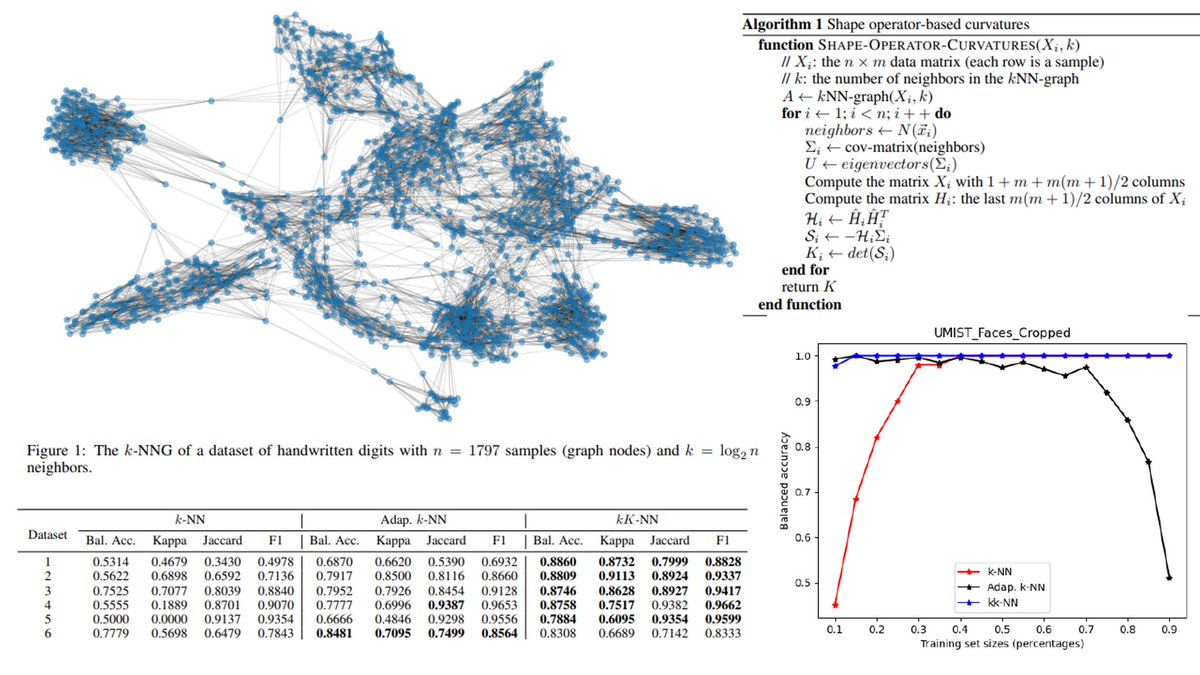

"Adaptive k-nearest neighbor classifier based on the local estimation of the shape operator"

arxiv.org/abs/2409.05084

kkNN method: k-NN classification in which the first k stands for curvature (kappa)
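The adaptive idea behind kkNN can be sketched in pure Python. This is not the paper's method. The sketch assumes the adaptation shrinks the neighborhood where estimated curvature is high and grows it where the data are locally flat. Instead of estimating the shape operator, it swaps in a simpler PCA-style curvature proxy: the surface variation λ_min / (λ_1 + λ_2) of the local covariance. It also assumes 2-D features so the eigenvalues have a closed form. The helper names (`surface_variation`, `adaptive_knn_predict`), the `k_min`/`k_max` bounds, and the linear curvature-to-k mapping are all hypothetical.

```python
import math
from collections import Counter


def sq_dist(a, b):
    """Squared Euclidean distance between two 2-D points."""
    return (a[0] - b[0]) ** 2 + (a[1] - b[1]) ** 2


def surface_variation(points):
    """Curvature proxy (an assumption, NOT the paper's shape operator):
    smallest eigenvalue of the 2x2 local covariance divided by its trace.
    Returns 0 for collinear points and 0.5 for an isotropic spread."""
    n = len(points)
    mx = sum(p[0] for p in points) / n
    my = sum(p[1] for p in points) / n
    sxx = sum((p[0] - mx) ** 2 for p in points) / n
    syy = sum((p[1] - my) ** 2 for p in points) / n
    sxy = sum((p[0] - mx) * (p[1] - my) for p in points) / n
    trace = sxx + syy
    if trace == 0.0:
        return 0.0
    # Closed-form smallest eigenvalue of [[sxx, sxy], [sxy, syy]].
    disc = math.sqrt(((sxx - syy) / 2) ** 2 + sxy ** 2)
    lam_min = trace / 2 - disc
    return max(lam_min, 0.0) / trace  # clamp tiny negative rounding error


def adaptive_knn_predict(train_x, train_y, query, k_min=1, k_max=7):
    """Majority vote over a curvature-dependent number of neighbors.
    Hypothetical rule: flat neighborhoods use up to k_max, curved ones fewer."""
    order = sorted(range(len(train_x)), key=lambda i: sq_dist(train_x[i], query))
    local = [train_x[i] for i in order[:k_max]]
    curvature = surface_variation(local)  # in [0, 0.5]
    # Linear map: curvature 0 -> k_max, curvature 0.5 -> k_min.
    k = round(k_max - (k_max - k_min) * (curvature / 0.5))
    k = max(k_min, min(k_max, k))
    votes = Counter(train_y[i] for i in order[:k])
    return votes.most_common(1)[0][0], k


if __name__ == "__main__":
    line = [(float(i), 0.0) for i in range(10)]
    labels = ["a" if i < 5 else "b" for i in range(10)]
    print(adaptive_knn_predict(line, labels, (2.0, 0.0)))
```

On points lying along a line the proxy is 0, so all `k_max` neighbors vote. At the center of a symmetric ring the proxy reaches its maximum of 0.5, and only the nearest neighbor decides.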

@mariabrbic THANK YOU! platonic representations might be the most misunderstood concept in DL

Are neural nets across modalities really converging to the same representation as they scale, as the Platonic Representation Hypothesis suggests?

We show that common representational similarity metrics are confounded by network width & depth. We propose a permutation-based null calibration that fixes this.

Result❓

• Global convergence largely disappears.

• Local neighborhoods persist.

We propose the alternative Aristotelian Representation Hypothesis: neural networks, trained with different objectives on different data and modalities, are converging to shared local neighborhood relationships.

Very proud of @FabianGroger and @ShuoWen18 for this work!

Paper: arxiv.org/abs/2602.14486

Webpage: brbiclab.epfl.ch/aristotelian

Code: github.com/mlbio-epfl/ari…
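The permutation-null idea in the post can be illustrated with a minimal pure-Python sketch. This is not the authors' released code. The function names (`linear_cka`, `mutual_knn`, `calibrated`) are hypothetical, and so are several choices: linear CKA as the global metric, mutual k-NN overlap as the local metric, and the calibration rule (observed score minus the mean of a row-shuffled null, plus a permutation p-value). Shuffling the rows of one representation destroys the sample-to-sample correspondence while keeping each space's width and geometry intact. Whatever score survives the shuffle is therefore the baseline a raw metric reports for unrelated inputs.

```python
import random


def center(rows):
    """Subtract the column mean from every row."""
    n, d = len(rows), len(rows[0])
    means = [sum(r[j] for r in rows) / n for j in range(d)]
    return [[r[j] - means[j] for j in range(d)] for r in rows]


def _cross_sq(a, b):
    """Squared Frobenius norm of A^T B for row-major matrices A, B."""
    total = 0.0
    for i in range(len(a[0])):
        for j in range(len(b[0])):
            s = sum(ra[i] * rb[j] for ra, rb in zip(a, b))
            total += s * s
    return total


def linear_cka(x, y):
    """Global metric: ||Y^T X||_F^2 / (||X^T X||_F * ||Y^T Y||_F), centered."""
    x, y = center(x), center(y)
    denom = (_cross_sq(x, x) * _cross_sq(y, y)) ** 0.5
    return _cross_sq(x, y) / denom if denom > 0 else 0.0


def knn_sets(rows, k):
    """Index sets of the k nearest neighbors (squared Euclidean) of each row."""
    out = []
    for i, r in enumerate(rows):
        d = sorted((sum((a - b) ** 2 for a, b in zip(r, s)), j)
                   for j, s in enumerate(rows) if j != i)
        out.append({j for _, j in d[:k]})
    return out


def mutual_knn(x, y, k=2):
    """Local metric: mean fraction of k-NN shared by the two representations."""
    nx, ny = knn_sets(x, k), knn_sets(y, k)
    return sum(len(a & b) for a, b in zip(nx, ny)) / (k * len(x))


def calibrated(metric, x, y, n_perm=200, seed=0):
    """Hypothetical calibration: observed score minus the mean score obtained
    after shuffling the rows of y, plus an add-one permutation p-value."""
    rng = random.Random(seed)
    observed = metric(x, y)
    null = []
    for _ in range(n_perm):
        perm = list(range(len(y)))
        rng.shuffle(perm)
        null.append(metric(x, [y[p] for p in perm]))
    null_mean = sum(null) / n_perm
    p_value = (1 + sum(s >= observed for s in null)) / (n_perm + 1)
    return observed - null_mean, p_value


if __name__ == "__main__":
    x = [[float(i), float((i * 3) % 5)] for i in range(8)]
    print(calibrated(linear_cka, x, x, n_perm=50))
    print(calibrated(mutual_knn, x, x, n_perm=50))
```

A calibrated score near zero means the raw similarity is no higher than what the shuffled null already produces. Running `calibrated` with both metrics on real embeddings is one way to probe the claim that global alignment shrinks while local neighborhood overlap persists.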

Cool new work combining Mamba with TRM

arxiv.org/abs/2602.12078
