Marco Bagatella

@mar_baga

ETH/CLS PhD candidate, interested in reinforcement learning and pizza making.

Excited to share that our paper "Optimistic Task Inference for Behavior Foundation Models" was accepted for ICLR 2026. BFMs are great at zero-shot RL, but task inference requires a dataset with reward labels. Our method OpTI-BFM offers an online alternative. (1/5)

Training LLMs with verifiable rewards uses a 1-bit signal per generated response, which hides why the model failed. Today, we introduce a simple algorithm that lets the model learn from any rich feedback by turning it into dense supervision. (1/n)

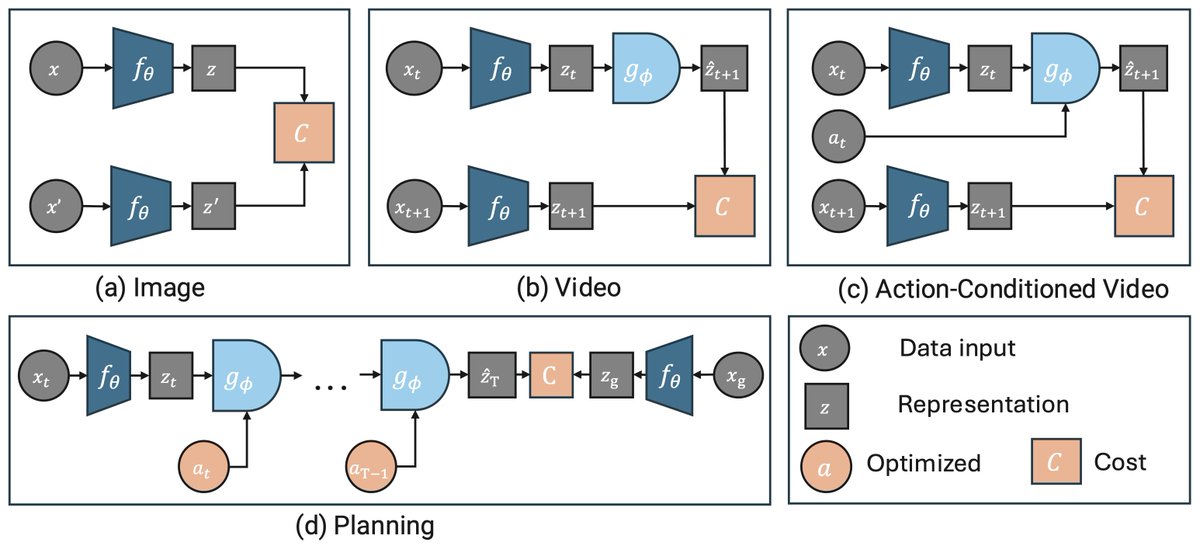

🚨 New reinforcement learning algorithms 🚨 Excited to announce MaxInfoRL, a class of model-free RL algorithms that solve complex continuous control tasks (including vision-based ones!) by steering exploration towards informative transitions. Details in the thread 👇