Marc Benedí

135 posts

@marcbenedi

PhD Candidate @ TU Munich Visual Computing & Artificial Intelligence Group w/ Matthias Niessner. Previously CS @ UPC - FIB

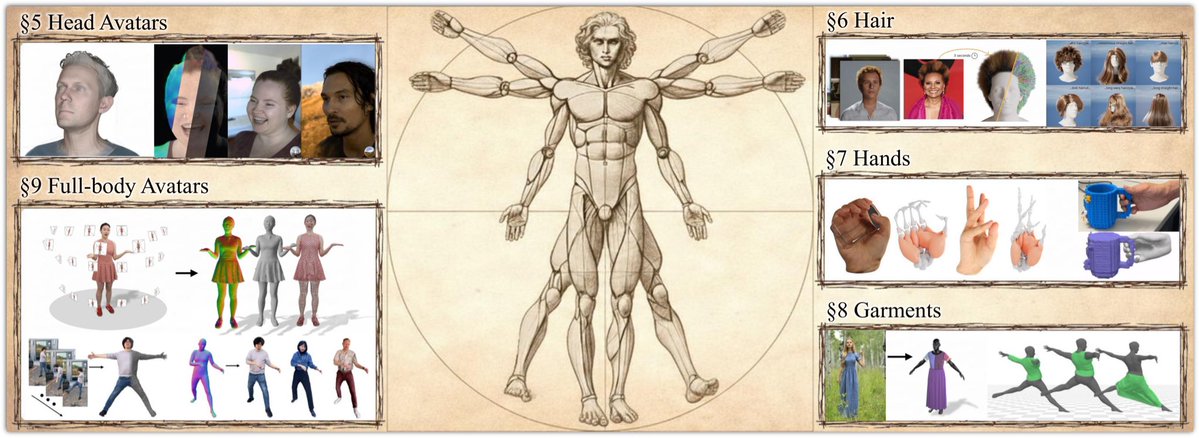

📢📢 𝐀𝐯𝐚𝐭𝟑𝐫 📢📢

Avat3r creates high-quality 3D head avatars from just a few input images in a single forward pass with a new dynamic 3DGS reconstruction model.

Video: youtu.be/P3zNVx15gYs

Project: tobias-kirschstein.github.io/avat3r

Our core idea is to make Gaussian Reconstruction Models animatable. We find that a simple cross-attention to an expression code sequence is already sufficient to model complex facial expressions.

We then incorporate position maps from DUSt3R and feature maps from Sapiens to facilitate the prediction task. While DUSt3R's position maps act as a pixel-aligned initialization for the Gaussians' positions, the Sapiens feature maps help the cross-view transformer match corresponding image tokens across the 4 input images.

One major challenge in creating a 3D head avatar from smartphone images is inconsistent facial expressions, which occur when the subject cannot remain perfectly static during the capture. We eliminate this static requirement by simply showing our model input images with different facial expressions during training. This makes the model robust to inconsistent input images at inference time.

Finally, we show that although the model was trained with 4 input images, one can still create a 3D head avatar when only a single image is available. To achieve this, we employ a pre-trained 3D GAN to lift the single image to 3D and then render the 4 input images for our model. This allows us to create 3D head avatars from single images, including highly out-of-distribution examples like AI-generated faces, paintings, or statues.

Great work by @TobiasKirschst1 from his internship at Meta with Javier Romero, @ASevastopolsky, and @psyth91
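For readers unfamiliar with the mechanism, here is a minimal sketch of cross-attention from image tokens to an expression code sequence. It is a generic single-head illustration, not Avat3r's implementation: the function names, the dimensions, the random weights, and the residual connection are all assumptions made for the example.

```python
import math
import random


def matmul(a, b):
    """Multiply two matrices given as lists of rows."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]


def softmax(row):
    """Numerically stable softmax over one list of scores."""
    m = max(row)
    exps = [math.exp(v - m) for v in row]
    total = sum(exps)
    return [e / total for e in exps]


def cross_attention(tokens, expr_codes, w_q, w_k, w_v):
    """Single-head cross-attention (hypothetical helper).

    Queries come from the image tokens; keys and values come from the
    expression code sequence, so every token can read expression information.
    """
    q = matmul(tokens, w_q)      # (n_tokens, d_model)
    k = matmul(expr_codes, w_k)  # (seq_len, d_model)
    v = matmul(expr_codes, w_v)  # (seq_len, d_model)
    scale = 1.0 / math.sqrt(len(q[0]))
    k_t = [list(col) for col in zip(*k)]  # transpose to (d_model, seq_len)
    scores = matmul(q, k_t)               # (n_tokens, seq_len)
    weights = [softmax([s * scale for s in row]) for row in scores]
    attended = matmul(weights, v)         # (n_tokens, d_model)
    # Residual connection (an assumption): add the attended values to the tokens.
    out = [[t + a for t, a in zip(t_row, a_row)]
           for t_row, a_row in zip(tokens, attended)]
    return out, weights


def random_matrix(rows, cols, rng):
    """Random Gaussian matrix standing in for learned weights or features."""
    return [[rng.gauss(0.0, 1.0) for _ in range(cols)] for _ in range(rows)]


# Illustrative sizes only; none of these values come from the post.
rng = random.Random(0)
d_model, d_expr, n_tokens, seq_len = 8, 4, 6, 3
tokens = random_matrix(n_tokens, d_model, rng)     # image tokens
expr_codes = random_matrix(seq_len, d_expr, rng)   # expression code sequence
w_q = random_matrix(d_model, d_model, rng)
w_k = random_matrix(d_expr, d_model, rng)
w_v = random_matrix(d_expr, d_model, rng)

out, weights = cross_attention(tokens, expr_codes, w_q, w_k, w_v)
```

Each row of `weights` is a distribution over the expression codes, and `out` keeps the shape of `tokens`, so a layer like this can be dropped between existing transformer blocks without changing the rest of the model.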

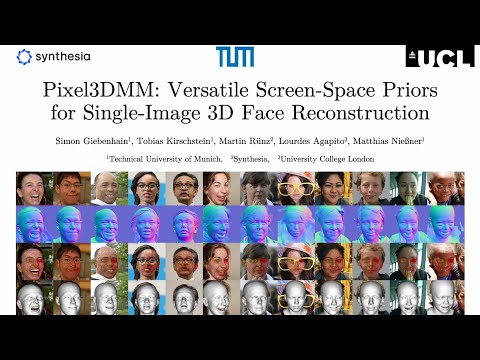

📢Announcing our 3D head avatar benchmark📢

Two tasks with hidden test sets:
- Dynamic Novel View Synthesis on Heads
- Monocular FLAME-driven Head Avatar Reconstruction

Our goal is to make research on 3D head avatars more comparable and ultimately increase the realism of digital humans.

The benchmark studies distinct phenomena of 3D head avatar creation, such as extreme facial expressions, slow-motion captures of shaking long hair, or complicated light reflection and refraction patterns of glasses.

The two benchmark tasks assess two core desiderata of 3D avatars: while the novel view synthesis challenge focuses on the best possible rendering quality of complex moving scenes, the avatar animation challenge is concerned with how well a driving signal is translated into an avatar.

Evaluations are lightweight and consist of diverse video recordings from the popular NeRSemble dataset with a hidden test set. Participation in the benchmark is therefore straightforward and requires only 5 reconstructions per task.

Leaderboard and benchmark submission: kaldir.vc.in.tum.de/nersemble_benc…

Benchmark data access and toolkit: github.com/tobias-kirschs…

Great work by @TobiasKirschst1 @SGiebenhain