Pinned Tweet

Margaret Li @ Neurips ‘25

70 posts

@margs_li

👩💻 PhD student @UWCSE / @UWNLP & @MetaAI. Formerly RE @FacebookAI Research, @Penn CS | 🏂💃🧋🥯 certified bi-coastal bb IAH/PEK/PHL/NYC/SFO/SEA

Joined June 2019

136 Following 1K Followers

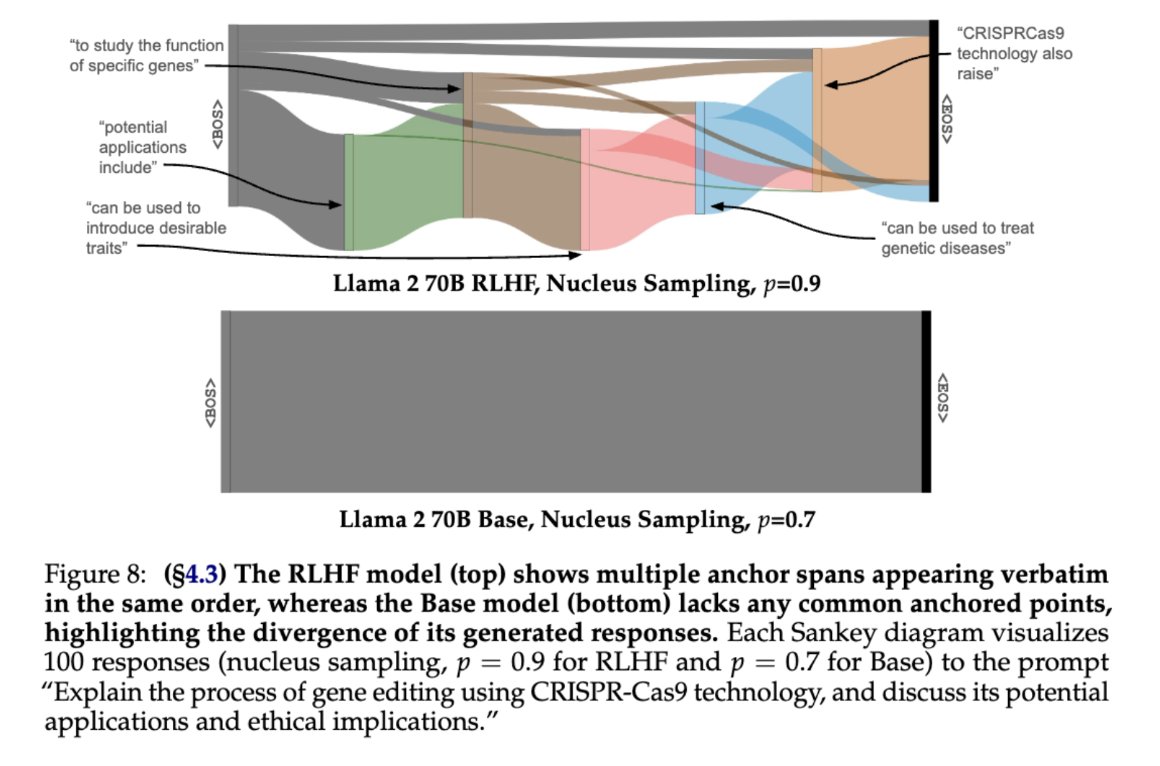

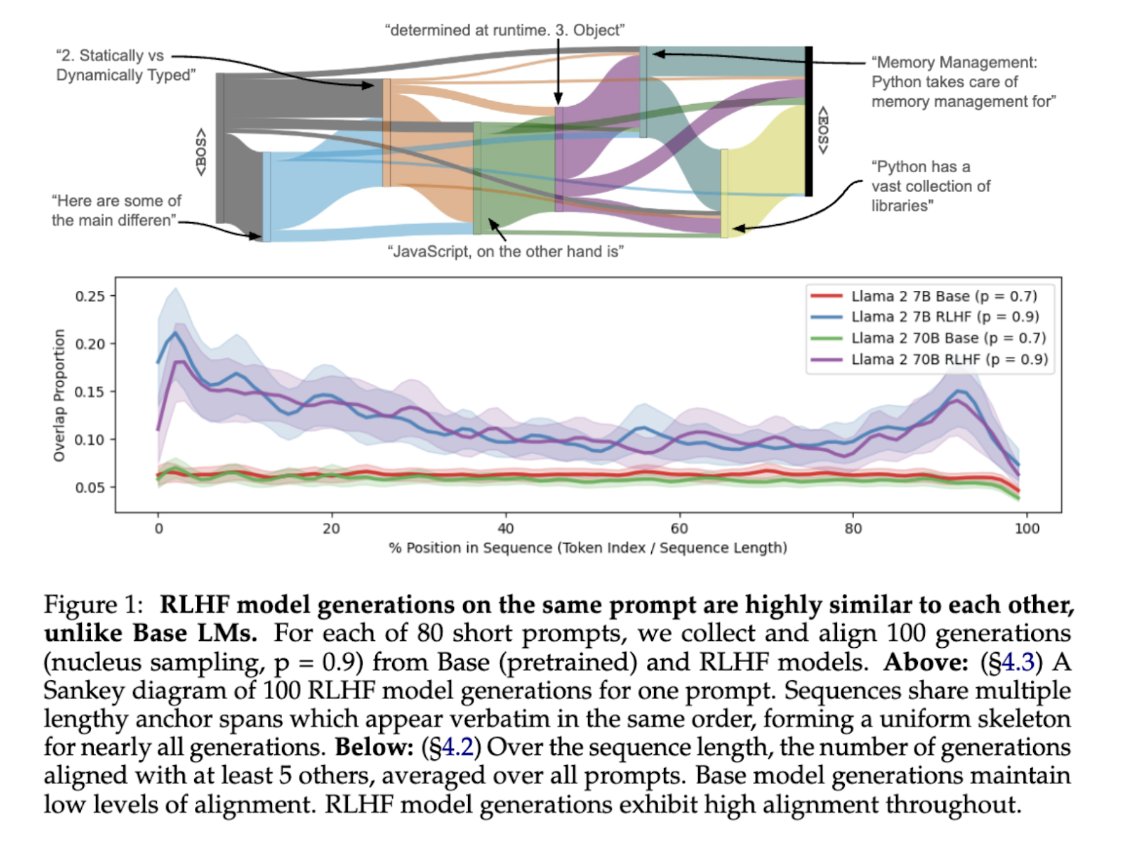

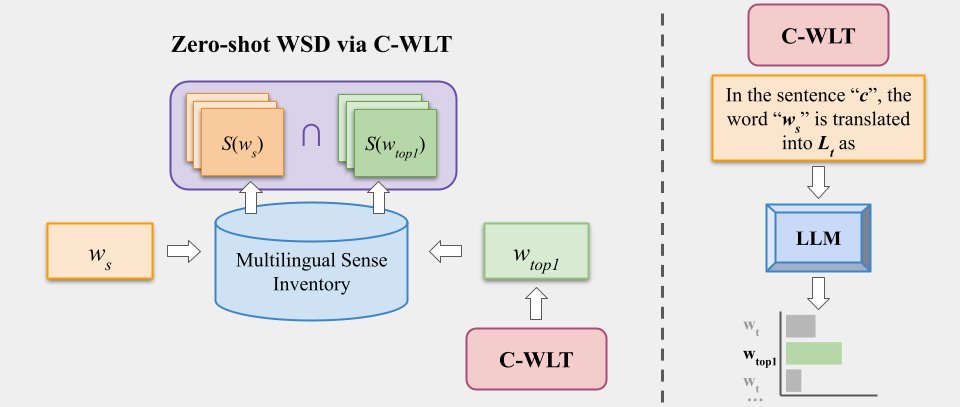

To appear at #ICLR2025

Thanks to the coauthors @snehaark @LukeZettlemoyer who descended into this madness with me

Arxiv: arxiv.org/pdf/2502.18969

Code: github.com/hadasah/scalin…

Checkpoints: huggingface.co/misfitting/mis…

9/9

@maxforbes omg ty, a good figure is the only kind of eye candy I want

but on a serious note, H/T to @universeinanegg, I think he was the first one of us to bring up sankey diagrams

@HJCH0 We're trying to minimize the influence of, e.g., vocab distribution on our long-term generation diversity metrics

p=0.9 for RLHF models reflects common practice. For Base models, we truncate using the p which most closely matches the RLHF model statistics

More info in the paper!
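For readers unfamiliar with the `p` being discussed, here is a minimal sketch of top-p (nucleus) truncation in plain Python. It is an illustration under stated assumptions, not the paper's code: the function names `top_p_truncate` and `closest_p` are hypothetical, and because the thread does not say which "RLHF model statistics" are matched, the statistic is left as a caller-supplied function.

```python
def top_p_truncate(probs, p):
    """Keep the smallest set of highest-probability tokens whose
    cumulative mass reaches p, zero out the rest, and renormalize."""
    if not 0.0 < p <= 1.0:
        raise ValueError("p must be in (0, 1]")
    # Token indices sorted from most to least probable.
    ranked = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)
    kept, mass = [], 0.0
    for i in ranked:
        kept.append(i)
        mass += probs[i]
        if mass >= p:
            break
    truncated = [0.0] * len(probs)
    for i in kept:
        truncated[i] = probs[i] / mass
    return truncated


def closest_p(candidates, statistic, target):
    """Hypothetical helper: pick the candidate p whose statistic is
    closest to a target value (e.g. one measured on an RLHF model).
    Which statistic to use is an assumption left to the caller."""
    return min(candidates, key=lambda p: abs(statistic(p) - target))


if __name__ == "__main__":
    next_token_probs = [0.5, 0.3, 0.15, 0.05]
    print(top_p_truncate(next_token_probs, 0.9))
```

With `p=0.9` on the toy distribution above, the least likely token is dropped and the remaining three are rescaled to sum to 1.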

@margs_li Interesting! Quick question, why do you use different p values for the base model vs the RLHF model?

Of course, there’s much more in the paper than we could fit in a tweet thread!

Paper: nlppapers.notion.site/Predicting-vs-…

And thanks to all my amazing co-authors: @WeijiaShi2, @ArtidoroPagnoni, @PeterWestTM, and @universeinanegg!

Yes, the sneak peek is a joke, generated by @AnthropicAI's Claude. The message is not, though! We're super excited to discuss modular / sparse LLMs and how we train them ☺️

Sneak peek: "My fellow AI practitioners, I come to you today to spread the good news of embarrassingly parallel training of expert models. Too often we limit ourselves to single monolithic models. No more I say! The path to AI enlightenment is through specialization."

Stanford NLP Group@stanfordnlp

For this week's NLP Seminar, we are excited to host @margs_li and @ssgrn ! The talk will happen Thursday at 11 AM PT. Non-Stanford affiliates registration link: forms.gle/cvGobkVshhJcvN…. Information will be sent out one hour before the talk.

Margaret Li @ Neurips ‘25 retweeted

Sharing our project on 1) accelerating and 2) stabilizing training for large language-vision models

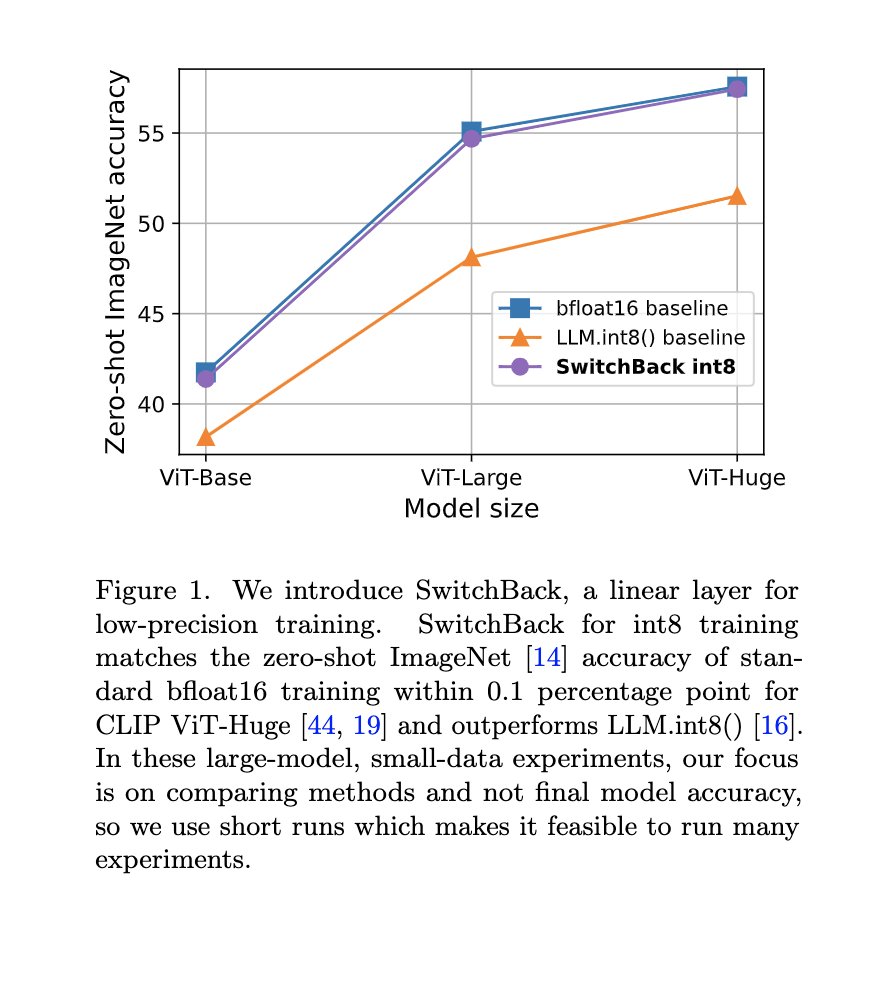

1) Towards accelerating training, we introduce SwitchBack, a linear layer for int8 quantized training which matches bfloat16 training within 0.1 percentage points for CLIP ViT-Huge

arxiv.org/abs/2304.13013

@suchenzang @jefrankle @MosaicML differentiating b/t this and just having, e.g., a code model, a c4 model, a papers model, etc., because I'm interested in how the different restrictions play with each other / want some more careful curation and comparable results on the same tasks

@suchenzang @jefrankle ❤️ @suchenzang for the callout,

@jefrankle lowkey wanna do this, but would also love it from @MosaicML: this expt w/ each model trained on diff data subsets from a giant heap of everything w/ diff quality/code/lang filters, domain, dedup, etc -- super curious how they'd compare
