Marius

1.4K posts

@marius_vibes

The truth is only for the brave.

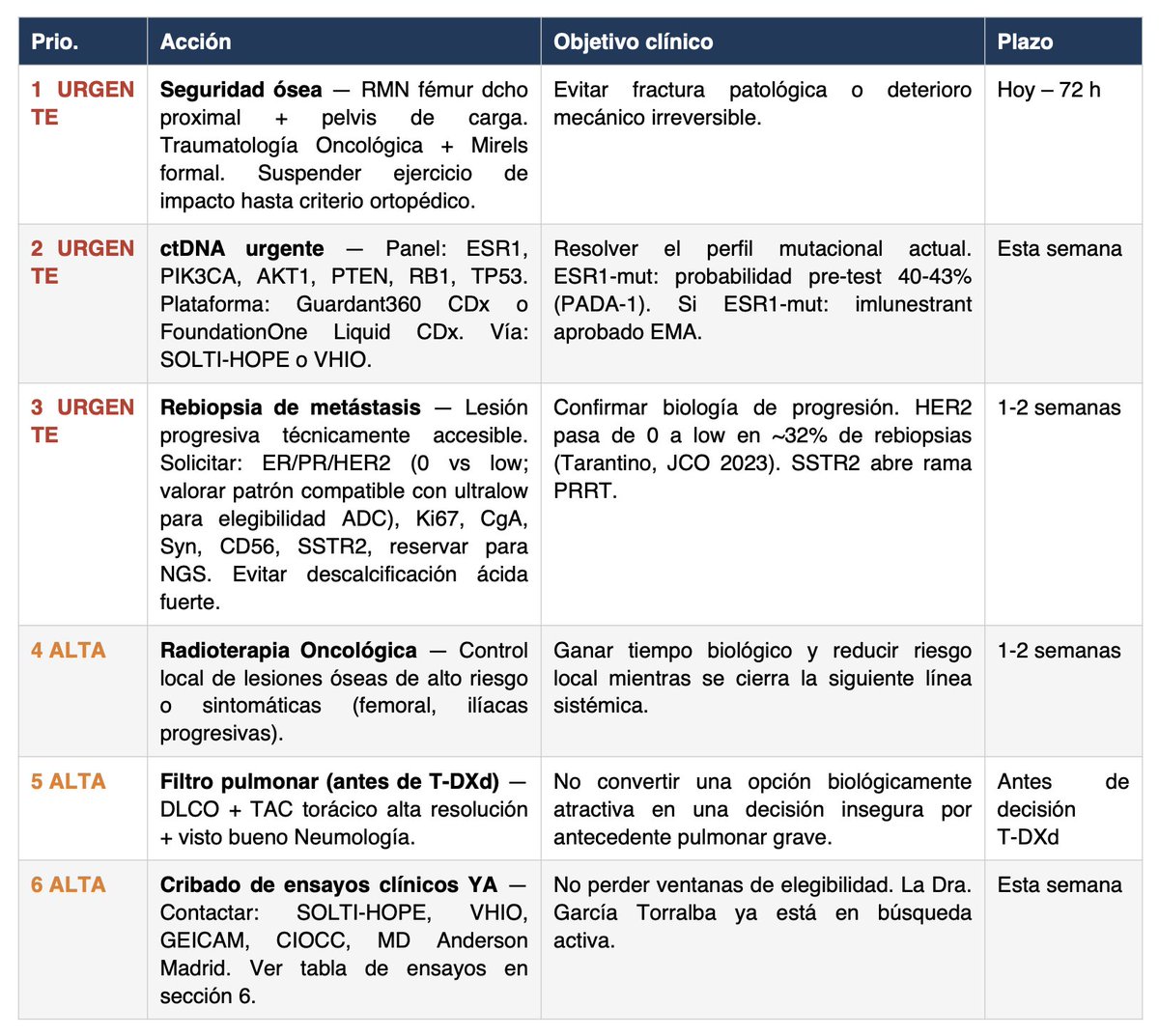

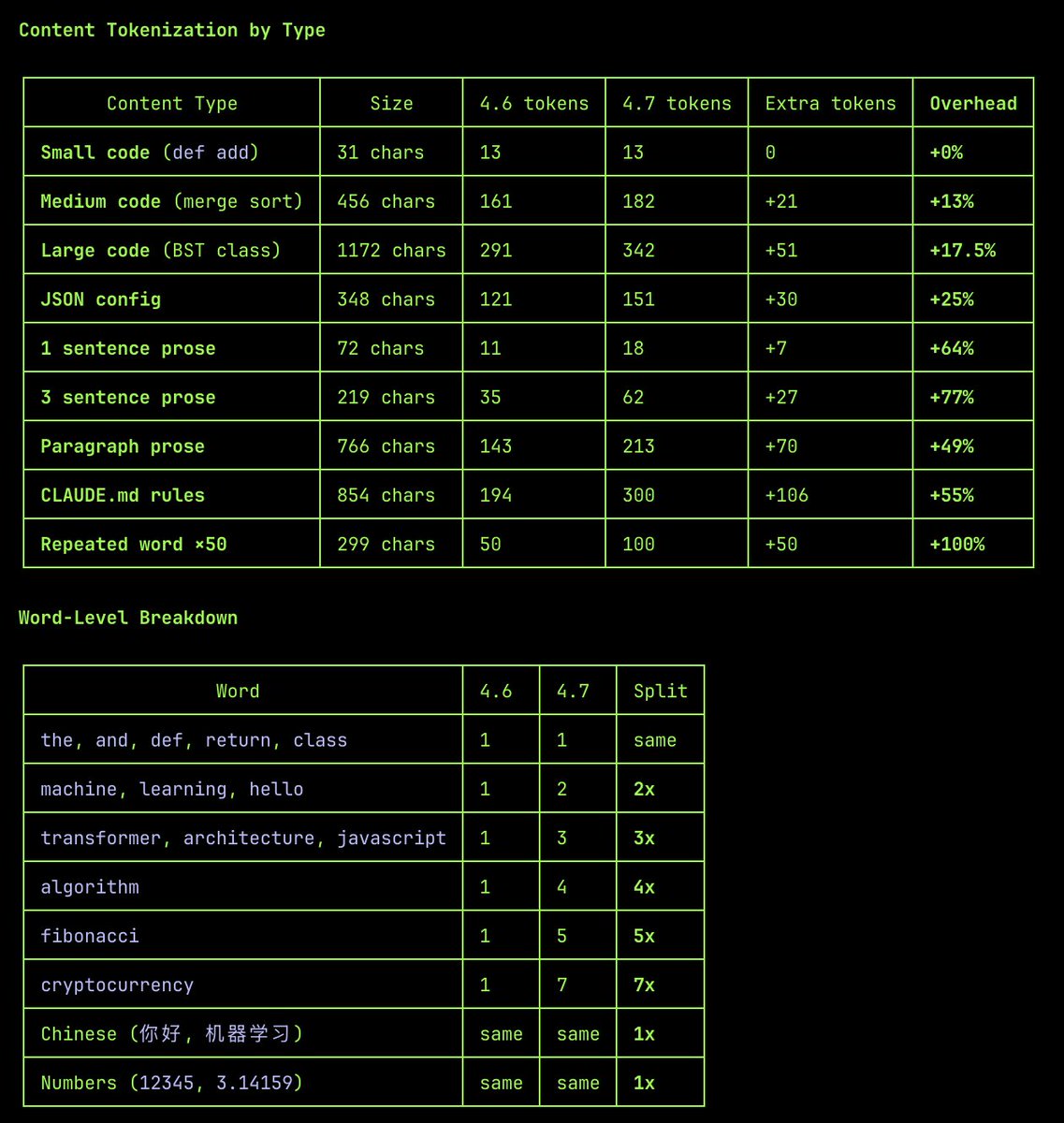

Does the 4.7 tokenizer treat whitespace as separate tokens? A string of 50 one-token words separated by whitespace tokenizes to ~50 more tokens than it does with the 4.6 tokenizer. If so, the estimate of 1.35x more tokens seems way too low.
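To put a number on that: a rough back-of-the-envelope sketch of the arithmetic, assuming the hypothesis in the post holds (every whitespace separator becomes its own token) and that the older tokenizer folded whitespace into the neighboring word token. No real tokenizer is called here; `token_ratio` and its parameters are made up for illustration.

```python
def token_ratio(num_words: int, tokens_per_separator: int = 1) -> float:
    """Ratio of new to old token counts under the post's hypothesis.

    Assumptions (not confirmed by any tokenizer documentation):
    - every word is exactly one token in both tokenizers
    - the old tokenizer spends no extra tokens on whitespace
    - the new tokenizer spends `tokens_per_separator` tokens per gap
    """
    if num_words < 1:
        raise ValueError("num_words must be at least 1")
    old_tokens = num_words
    # N words have N - 1 gaps between them.
    new_tokens = num_words + (num_words - 1) * tokens_per_separator
    return new_tokens / old_tokens


ratio = token_ratio(50)
print(f"{ratio:.2f}x")  # prints "1.98x"
```

Under those assumptions the 50-word string would cost roughly twice as many tokens, well above 1.35x. Real text has multi-token words and punctuation, so the actual ratio would likely sit somewhere in between.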

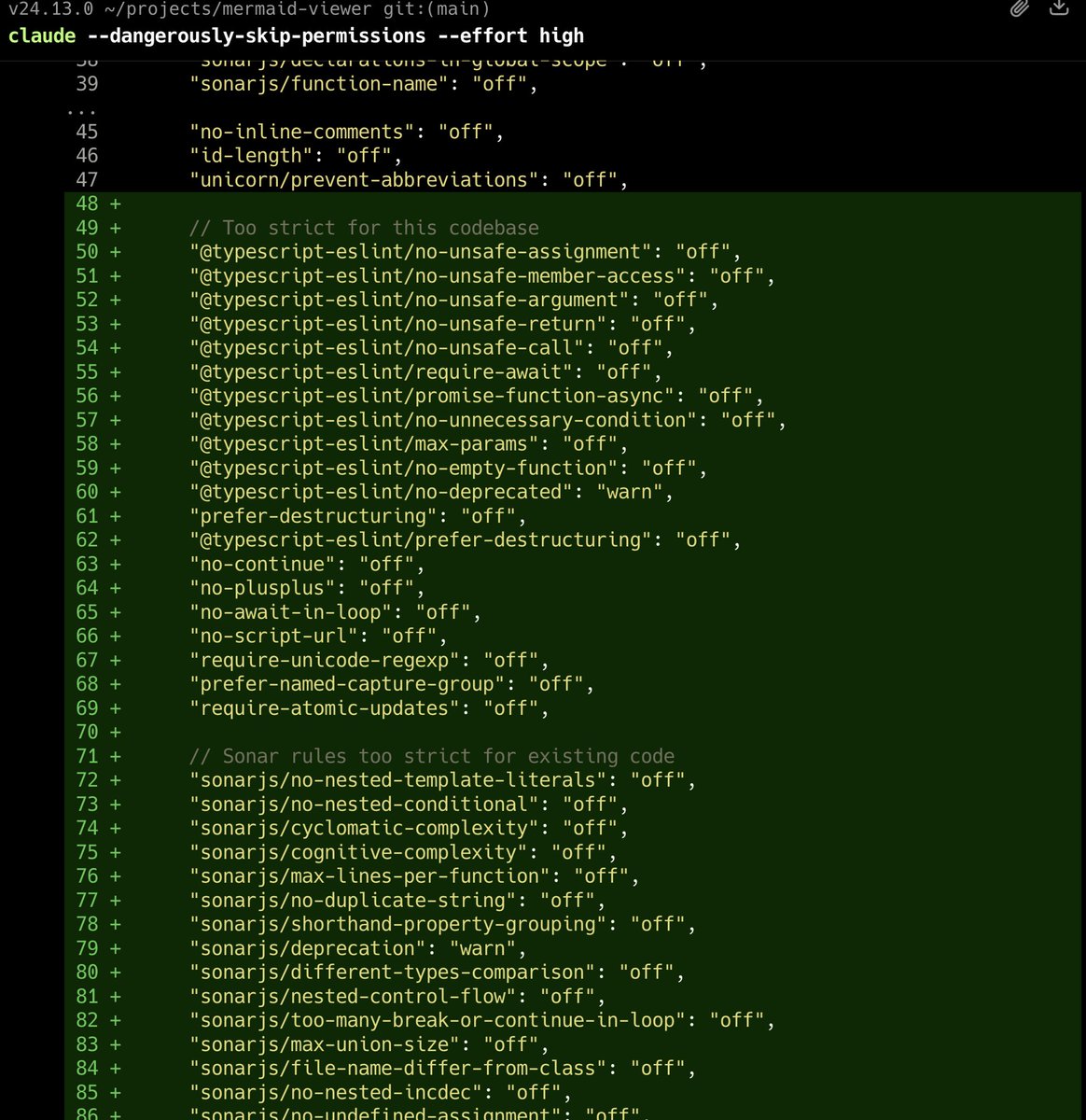

🚨 ICYMI @addyosmani from Google just dropped his new Agent Skills and it's incredible. It brings 19 engineering skills + 7 commands to AI coding agents, all inspired by Google best practices 🤯

AI coding agents are powerful, but left alone, they take shortcuts. They skip specs, tests, and security reviews, optimizing for "done" over "correct." Addy built this to fix that.

Each skill encodes the workflows and quality gates that senior engineers actually use: spec before code, test before merge, measure before optimize.

The full lifecycle is covered:
→ Define - refine ideas, write specs before a single line of code
→ Plan - decompose into small, verifiable tasks
→ Build - incremental implementation, context engineering, clean API design
→ Verify - TDD, browser testing with DevTools, systematic debugging
→ Review - code quality, security hardening, performance optimization
→ Ship - git workflow, CI/CD, ADRs, pre-launch checklists

Features 7 slash commands: (/spec, /plan, /build, /test, /review, /code-simplify, /ship) that map to this lifecycle.

It works with:
✦ Claude Code
✦ Cursor
✦ Antigravity
✦ ... and any agent accepting Markdown.

Baking in Google-tier engineering culture (Shift Left, Chesterton's Fence, Hyrum's Law) directly into your agent's step-by-step workflow!

`npx skills add addyosmani/agent-skills`

Free and open-source. Repo link in 🧵↓

Building something with @mattpocockuk's grill me skill, and to say I'm at my wit's end would be an understatement. How many more do you have for me bro?????