Mark Tofts

662 posts


@mark_tofts

Buying small businesses from retiring owners. Making them AI-optimised. Bias toward anything with wheels.

Oxford / London · Joined February 2019

428 Following · 196 Followers

I do find the Granola summaries a bit reductive. 90 min meeting > “here’s a handful of bullet points”

My Hermes agent built a skill that pulls the full transcript via API, puts it in Obsidian, and emails a summary and follow-up points generated from a custom prompt. The full transcript is then local and searchable.


Ripped out Wispr Flow and Granola is next.

Loved both of those products and implemented them at our company but now they suck and aren't needed.

Both are replaced with muesli which is open source and hosted on your machine.

The real ROI will come in replacing granola.

Instead of having Granola's shitty summaries, shitty api/mcp, and no ability to export transcripts—our raw transcripts will now feed into our database with all of our company data and business logic.

Sensitive conversations will now stay on our servers and our agents will have access to raw transcripts with our company context on top of it.

Will be a huge unlock for us.


@ironcarbs which is the best solution you've seen so far to counteract this?


@zachtratar It is in English but I still think Granola or Notion make transcription mistakes that, when summarizing, can render the whole conversation useless because it missed a critical point.

Maybe it will just get better as models improve but I prefer more accuracy for now.


@alexcovo_eth From my Hermes to yours 😄

Shoutout to @alexcovo_eth — followed your Hermes gpt-image-2-high setup notes, patched the config, tested it live, and it worked. Properly useful. Thank you.


Wasn’t me. One of my Hermes agents did it for me. I asked it to explain. Copy/paste this to your agent and it will implement it for you 👇

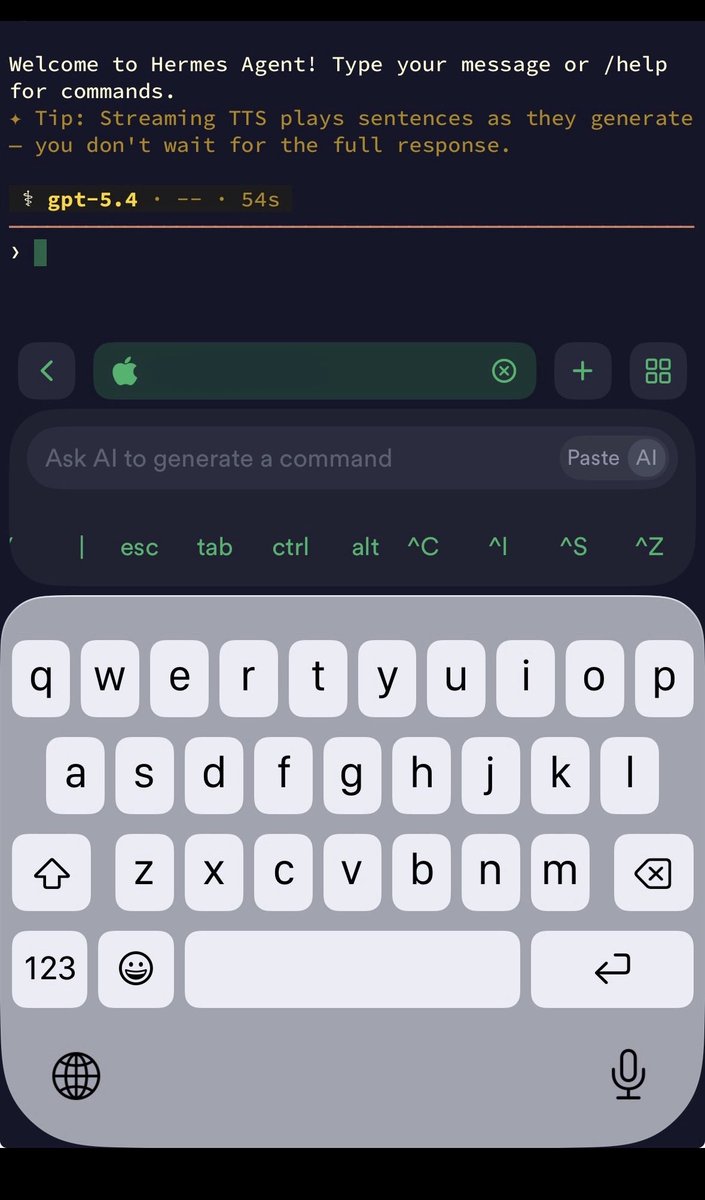

Hermes Agent image generation setup: gpt-image-2-high via OpenAI Codex auth

Yes — in our Hermes profile, gpt-image-2-high is the default image generation model.

Current config:

image_gen:
  provider: openai-codex
  use_gateway: false
  model: gpt-image-2-high

How it works:

1. Hermes uses the openai-codex image generation plugin.

Plugin path:

plugins/image_gen/openai-codex/__init__.py

The plugin registers an image provider named:

openai-codex

2. The normal Hermes image_generate tool checks image_gen.provider.

Tool path:

tools/image_generation_tool.py

If image_gen.provider is set and is not fal, the image_generate tool dispatches the image request to the matching registered image-generation plugin.

Since our config says:

image_gen.provider = openai-codex

Hermes routes image generation through the OpenAI Codex image plugin instead of the default FAL path.
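The routing rule in step 2 can be sketched roughly as follows. This is a minimal illustration, not Hermes' actual code: the registry `IMAGE_PROVIDERS`, the helper `register_image_provider`, and both handler functions are hypothetical names invented for this example, and the handlers only return a tagged string instead of generating an image.

```python
from typing import Callable, Dict, Optional

# Hypothetical registry mapping provider name -> handler (not Hermes' real API).
IMAGE_PROVIDERS: Dict[str, Callable[[str], str]] = {}


def register_image_provider(name: str, handler: Callable[[str], str]) -> None:
    """Register an image-generation handler under a provider name."""
    IMAGE_PROVIDERS[name] = handler


def fal_generate(prompt: str) -> str:
    # Stand-in for the default FAL path.
    return f"fal:{prompt}"


def codex_generate(prompt: str) -> str:
    # Stand-in for the OpenAI Codex image plugin.
    return f"openai-codex:{prompt}"


def image_generate(prompt: str, config: dict) -> str:
    """Dispatch to a registered plugin when image_gen.provider is set and is not fal."""
    provider: Optional[str] = config.get("image_gen", {}).get("provider")
    if provider and provider != "fal":
        handler = IMAGE_PROVIDERS.get(provider)
        if handler is None:
            raise ValueError(f"no image provider registered as {provider!r}")
        return handler(prompt)
    # No provider configured, or provider is fal: use the default path.
    return fal_generate(prompt)


register_image_provider("openai-codex", codex_generate)
```

With the config shown earlier, `image_generate("a red bus", {"image_gen": {"provider": "openai-codex"}})` goes to the Codex stand-in, while an empty config falls back to the FAL stand-in.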

3. The OpenAI Codex image plugin uses ChatGPT/Codex OAuth, not a normal OpenAI API key.

It reads the existing Codex OAuth token through Hermes’ Codex auth machinery.

That means the setup depends on Codex login/auth, e.g.:

hermes auth codex

It does not require OPENAI_API_KEY for this image path.

4. gpt-image-2-high is a Hermes tier alias.

The actual OpenAI image model used by the image_generation tool is:

gpt-image-2

Hermes maps:

gpt-image-2-high

to:

gpt-image-2 + quality=high

The plugin supports these tiers:

gpt-image-2-low

gpt-image-2-medium

gpt-image-2-high
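A tier alias like this can be resolved with a few lines of string handling. The sketch below assumes the alias is always the base model name plus a `-low`, `-medium`, or `-high` suffix, as listed above; the function name `resolve_tier` is hypothetical and not taken from the plugin.

```python
from typing import Tuple

BASE_MODEL = "gpt-image-2"
TIERS = ("low", "medium", "high")


def resolve_tier(alias: str) -> Tuple[str, str]:
    """Split a tier alias into (actual model, quality)."""
    prefix = BASE_MODEL + "-"
    if alias.startswith(prefix):
        quality = alias[len(prefix):]
        if quality in TIERS:
            return BASE_MODEL, quality
    raise ValueError(f"unknown image tier alias: {alias!r}")
```

For example, `resolve_tier("gpt-image-2-high")` yields the model `gpt-image-2` with quality `high`, and any alias outside the three listed tiers raises a `ValueError`.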

5. The plugin calls the Codex Responses API with the image_generation tool.

Codex backend:

chatgpt.com/backend-api/co…

Host chat model used inside the plugin:

gpt-5.4

Required tool:

image_generation

Actual image model:

gpt-image-2

Selected quality tier:

high
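For orientation, the request described in step 5 might look something like the payload built below. The field layout is an assumption modeled on the public OpenAI Responses API (an `image_generation` tool entry plus a forced `tool_choice`); the Codex backend may expect a different shape, and the `model` key inside the tool entry is an assumption as well. The helper name `build_image_request` is hypothetical, and the code only builds a dictionary without sending anything.

```python
from typing import Any, Dict


def build_image_request(
    prompt: str, quality: str = "high", size: str = "1536x1024"
) -> Dict[str, Any]:
    """Build an illustrative Responses-style payload (assumed shape, not verified)."""
    return {
        "model": "gpt-5.4",  # host chat model named in step 5
        "input": prompt,
        "tools": [
            {
                "type": "image_generation",
                "model": "gpt-image-2",  # assumed placement of the image model
                "quality": quality,
                "size": size,
            }
        ],
        # Force the image tool rather than letting the chat model decide.
        "tool_choice": {"type": "image_generation"},
    }
```

The default size is the one reported for the StoryForge storyboard image; quality would come from the tier alias.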

6. We verified it live.

The plugin resolved as:

provider = openai-codex

available = True

resolved_model = gpt-image-2-high

quality = high

We also previously generated a real StoryForge storyboard image through Hermes with:

provider: openai-codex

model: gpt-image-2-high

quality: high

size: 1536x1024

Summary:

Hermes chat and Hermes image generation are configured separately.

Normal chat model:

gpt-5.5 via openai-codex

Default image generation:

gpt-image-2-high via openai-codex

In practice, Hermes uses our ChatGPT/Codex OAuth session to call the Codex Responses API, forces the image_generation tool, requests the actual image model gpt-image-2, and sets quality=high through the gpt-image-2-high tier alias.


Just updated my hermes-agent to use gpt-image-2-high instead of the default. Now I'm able to render the best quality images directly from hermes-agent, using tui, telegram, etc.

Teknium 🪽@Teknium

You can now use OpenAI OAuth to generate images with Hermes Agent! Update Hermes and use the ‘hermes tools’ command to configure the image generation tool to use OpenAI! Reminder: we have a $26,000 online creativity with Hermes Agent hackathon ongoing, get to being creative! github.com/NousResearch/h…


@oliverhenry I know there’s extra usage atm which will go some way to this - but I still haven’t hit limits on GPT, but it was daily on Anthropic even on the £200 plan


open sourced WUPHF: an AI office that builds its own team and collaborates to run your workflow end-to-end, 24x7.

each agent backed by its own knowledge graph (choose @garrytan's GBrain or @nexdotai).

7x fewer tokens than Paperclip. benchmark in repo.

github.com/nex-crm/wuphf


@najmuzzaman I’m thinking of going full circle and using WUPHF to launch a paper company.


surreal. WUPHF broke Show HN and hit #1 within an hour, on a weekend.

news.ycombinator.com/item?id=478998…


@morganlinton Which local model have you had the most success with so far? I think I’m running similar specs to you (M2 Max Studio, 32GB) but haven’t experimented much with local models


So yeah. DeepSeek's announcement yesterday was cool and all, but come on - you need crazy hardware to run it.

I still think Qwen takes the cake this week in doing something truly novel that could really accelerate the foundational shift that is happening in the local llm space.

What DeepSeek did is only for super rich local llm ppl and companies that can afford to run something like this.

I'm over here still trying to decide if I can spend $3k on a basic PC with a 3090 🫠

NaiveAI_Dev@i_naiveai

So you need 2xH200 gpu to run DeepSeek V4 Flash. Not Pro. Flash. Seriously?


@johnennis This is similar to the Anthropic "we've achieved a lot for today! Let's revisit tomorrow"


In my head God’s Son is still one of @nas’s newer albums. But I saw today that it came out in 2002 and now I feel 100 years old.
