Maruth Labs

@maruthlabs

Smaller model, endless possibilities. AI research lab focused on scalable, efficient solutions. Creators of Madhuram, a device-first language model. #AI

Hiring: Research Intern @ MaruthLabs

We are looking for a Research Intern to join us for a 3-month internship focused on pushing the boundaries of high-performance Small Language Models (SLMs).

The Role:

• Research & Experimentation: You will be given access to 0.5x H100 GPU compute to test and iterate on your own research ideas.

• Scaling Up: Upon reaching your research milestones, you will be granted access to an 8x H100 node for a full-scale training run.

• Integration: Successful experiments and optimizations will be integrated directly into our core model training pipelines.

Requirements:

• Strong proficiency in Python and a deep understanding of Transformer architectures.

• A research-oriented mindset with an interest in SLMs, efficiency, and context-length expansion.

• Degree is not a barrier: We value proof of work, GitHub contributions, and technical curiosity over formal credentials.

Details:

• Stipend: ₹15,000 per month.

• Duration: 3 Months (Extendable).

• Location: Remote.

How to Apply:

Interested candidates should send their CV and a brief outline of a research idea they would like to explore on an H100 to contact@maruthlabs.com.

#MaruthLabs #LLM #Research #Hiring #MachineLearning #SLM

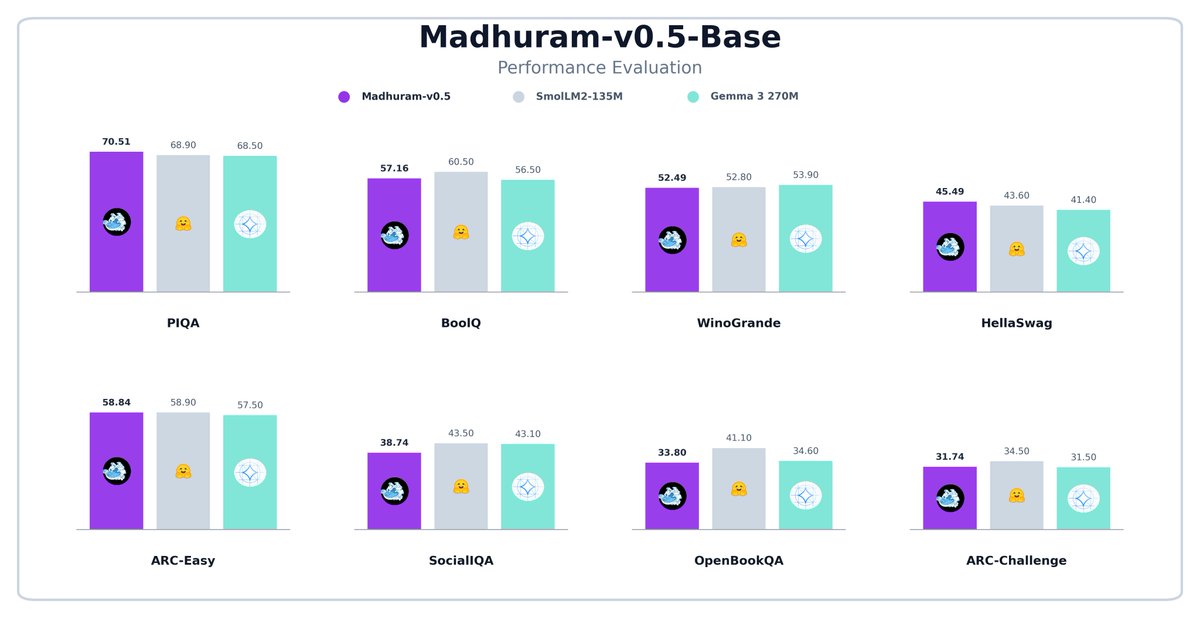

We’ve been working on a small 150M-parameter model called Madhuram-v0.5. It’s designed to be fine-tuned for specific tasks, and we're quite happy with how it holds up. Here is a comparison of Madhuram-v0.5-Base with other models of similar size.