Max argus

9 posts

Max argus retweeted

Are you sure your training data is actually synced?

The egocentric camera sees a hand grasping an orange, but the wrist cam shows nothing and the tactile sensor reads zero contact.

Your policy is learning from broken data and doesn't even know it.

In Physical AI, multi-modal sync is everything.

→ Egocentric: 30 fps

→ Wrist: 30 fps

→ Tactile: 100 Hz

Different devices, different clocks, slightly different rates. The drift starts small. Barely noticeable frame by frame. But over a 4-minute episode, that tiny difference compounds into seconds of misalignment. And you had no way to even check.
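
To make the compounding concrete, here is a back-of-the-envelope sketch. It assumes the simplest possible model, a constant rate mismatch between two clocks (purely linear drift); real clocks can also wander over time. It plugs in the 7.5 s of drift over a 4-minute (240 s) episode quoted in this thread. The helper names are illustrative only.

```python
def accumulated_drift_s(elapsed_s: float, rate_error_ppm: float) -> float:
    """Offset in seconds after elapsed_s, assuming one clock runs
    rate_error_ppm parts per million fast relative to the other
    (constant rate mismatch, i.e. purely linear drift)."""
    return elapsed_s * rate_error_ppm * 1e-6


def implied_rate_error_ppm(drift_s: float, elapsed_s: float) -> float:
    """Constant rate mismatch (ppm) that would produce drift_s over elapsed_s."""
    return drift_s / elapsed_s * 1e6


# 7.5 s of drift over a 240 s episode implies a large, constant mismatch...
ppm = implied_rate_error_ppm(7.5, 240.0)
print(f"implied rate error: {ppm:.0f} ppm ({ppm / 1e4:.3f}%)")
# ...that still only adds about a millisecond per 30 fps frame.
print(f"drift per 30 fps frame: {accumulated_drift_s(1 / 30, ppm) * 1e3:.2f} ms")
```

Under this linear model the mismatch works out to 31250 ppm (3.125%), or roughly 1.04 ms per 30 fps frame: easy to miss frame by frame, yet it adds up to 7.5 s across the 7200 frames of a 4-minute episode.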

Until now.

We built the Sync Quality Dashboard. One score tells you whether your data is clean. Then go deeper: clock offset, drift rate, jitter, frame drops, and per-stream correction, all visible and all measurable.
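
The dashboard's internals aren't described here. As a rough illustration of what metrics like these commonly mean, the sketch below assumes that, for one stream, you have device-clock and reference-clock timestamps of the same events (for example, a shared sync pulse). It fits a linear clock model t_ref ≈ slope · t_dev + offset by least squares and reports offset, drift rate in ppm, interval jitter, and an estimated frame-drop count. The function name `sync_metrics`, the 1.5× gap threshold, and the jitter definition are assumptions, not the dashboard's method.

```python
import numpy as np


def sync_metrics(t_dev, t_ref, nominal_hz):
    """Hypothetical per-stream sync metrics (not the dashboard's implementation).

    t_dev: event timestamps on the device's own clock, in seconds
    t_ref: timestamps of the same events on the reference clock, in seconds
    nominal_hz: the stream's nominal sample rate (e.g. 30 for a 30 fps camera)
    """
    t_dev = np.asarray(t_dev, dtype=float)
    t_ref = np.asarray(t_ref, dtype=float)

    # Linear clock model: t_ref ~= slope * t_dev + offset (least-squares fit).
    slope, offset = np.polyfit(t_dev, t_ref, 1)
    # Positive ppm means the device clock runs slow relative to the reference.
    drift_ppm = (slope - 1.0) * 1e6

    period = 1.0 / nominal_hz
    intervals = np.diff(t_dev)
    is_gap = intervals > 1.5 * period  # assumed threshold for a dropped frame

    # Jitter: spread of the normal (non-gap) inter-sample intervals.
    jitter_s = float(np.std(intervals[~is_gap]))
    # A gap of about k nominal periods hides roughly k - 1 missing samples.
    dropped = int(np.sum(np.round(intervals[is_gap] / period) - 1))

    return {
        "offset_s": float(offset),
        "drift_ppm": float(drift_ppm),
        "jitter_s": jitter_s,
        "frame_drops": dropped,
    }


# Synthetic 30 fps stream: 10 s of frames with frames 100 and 101 missing,
# related to the reference clock by t_ref = 1.001 * t_dev + 0.25
# (a 1000 ppm rate difference plus a 0.25 s offset).
t_dev = np.delete(np.arange(300) / 30.0, [100, 101])
t_ref = 1.001 * t_dev + 0.25
print(sync_metrics(t_dev, t_ref, nominal_hz=30))
```

On this synthetic stream it should report 2 frame drops, a drift of about 1000 ppm, an offset of about 0.25 s, and near-zero jitter. A single linear fit is the simplest choice; long or temperature-sensitive recordings may call for a piecewise or smoothed clock model instead.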

In one 4-minute episode, accumulated clock drift reached 7.5 seconds by the end of the recording. After correction, it was 9.0 ms. That's the difference between "roughly aligned" and "actually aligned."
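
Once a stream's clock model is known, correction amounts to mapping its timestamps onto the reference clock before matching samples across streams. The sketch below assumes a linear model (a slope and offset, e.g. from a least-squares fit of paired timestamps) and pairs each 30 fps camera frame with the nearest 100 Hz tactile sample. It illustrates the general technique only; it is not the pipeline behind the 9.0 ms figure, and the helper names and synthetic numbers are hypothetical.

```python
import numpy as np


def to_reference_clock(t_dev, slope, offset):
    """Map device-clock timestamps onto the reference clock (linear model)."""
    return slope * np.asarray(t_dev, dtype=float) + offset


def nearest_indices(t_query, t_stream):
    """Index of the sample in sorted t_stream (len >= 2) closest to each query."""
    t_query = np.asarray(t_query, dtype=float)
    t_stream = np.asarray(t_stream, dtype=float)
    idx = np.searchsorted(t_stream, t_query)  # first sample >= query
    idx = np.clip(idx, 1, len(t_stream) - 1)
    left, right = t_stream[idx - 1], t_stream[idx]
    # Step back one sample when the left neighbour is strictly closer.
    return np.where(t_query - left < right - t_query, idx - 1, idx)


# Synthetic example: tactile sampled at 100 Hz on its own clock, which relates
# to the reference (camera) clock by t_ref = 1.001 * t_dev + 0.25.
tactile_dev = np.arange(1000) / 100.0
camera_ref = 0.5 + np.arange(200) / 30.0  # 30 fps frames on the reference clock

tactile_ref = to_reference_clock(tactile_dev, slope=1.001, offset=0.25)

# Matching on raw device timestamps pairs each frame with the wrong sample...
raw = nearest_indices(camera_ref, tactile_dev)
# ...matching after correction pairs it with the sample actually closest in time.
fixed = nearest_indices(camera_ref, tactile_ref)

raw_err = np.abs(tactile_ref[raw] - camera_ref).max()
fixed_err = np.abs(tactile_ref[fixed] - camera_ref).max()
print(f"worst-case misalignment: raw {raw_err * 1e3:.1f} ms, corrected {fixed_err * 1e3:.1f} ms")
```

Note that matching on raw device timestamps still finds a sample within a few milliseconds *on the device clock*, so nothing looks wrong locally; the true error (over 200 ms here) only shows up against a clock model. After correction, every frame is paired with a tactile sample within half a tactile period (about 5 ms).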

Visually confirm vision-to-vision and vision-to-tactile alignment frame by frame. No more "trust me, the data is fine."

We don't just collect multi-modal demos. We ship a quality assurance layer so you can verify every episode before it touches your model.

All data in @LeRobotHF format. Ready to train. Verified in sync.

Stop guessing. Start verifying.


Max argus retweeted

The MolmoSpaces-Bench leaderboard is now live! Test your generalist policies to see how they compare across tasks and environments. Feel free to reach out if you need help setting it up.

molmospaces.allen.ai/leaderboard


Max argus retweeted

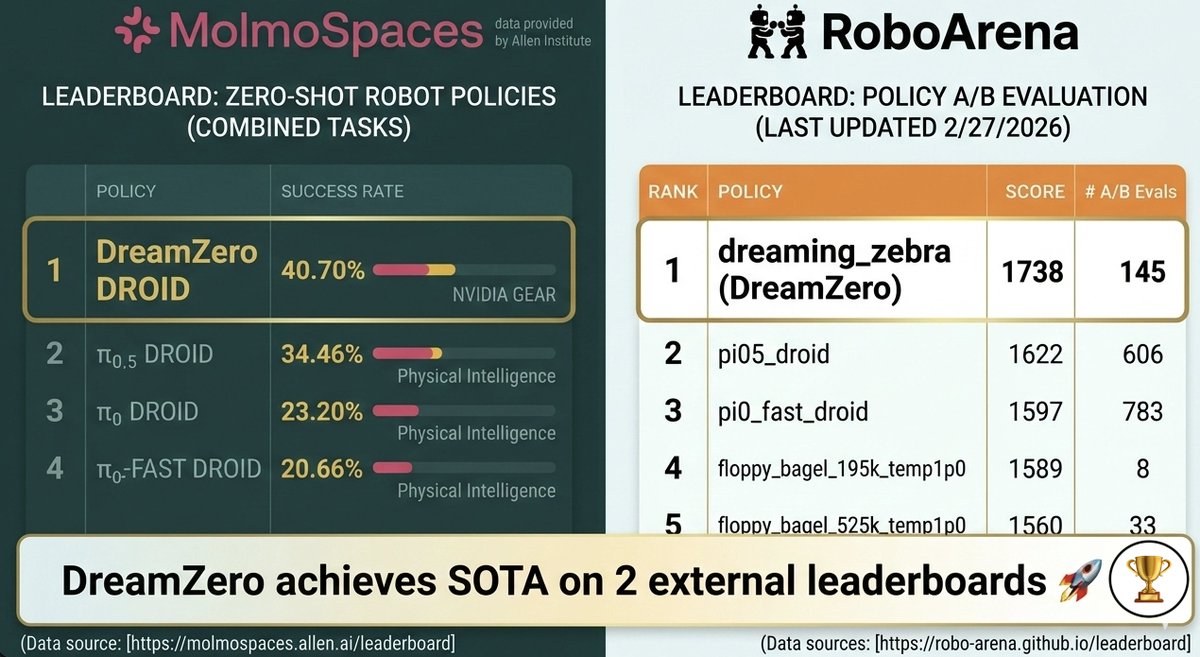

**DreamZero is #1 on both MolmoSpaces and RoboArena** 🏆

**What makes this notable:** DreamZero-DROID is trained *from scratch* using only the DROID dataset, with no pretraining on large-scale robot data, unlike competing VLAs. This demonstrates the strength of video-model backbones for generalist robot policies (VAMs/WAMs).

More broadly, training *only* on real data and evaluating on (1) transparent, distributed benchmarks like **RoboArena** or (2) scalable sim benchmarks like **MolmoSpaces** is an exciting step toward fairer and more reproducible evaluation of generalist policies, one the community can hill-climb together to measure progress.

Special thanks to the Ai2 MolmoSpaces team (@notmahi @omarrayyann @YejinKim4 Max Argus) and the RoboArena team (@pranav_atreya) for helping with the setup and running these evaluations! Special shout-out to @youliangtan @NadunRanawakaA @chuning_zhu, who led these efforts from the GEAR side :)

+ We also release our DreamZero-AgiBot checkpoint & post-training code to enable very efficient few-shot adaptation. Post-train on just ~30 minutes of play data for your specific robot, and see the robot perform basic language following and pick-and-place 🤗 (see the YAM experiments in our paper for details).

++ We also provide the entire codebase & preprocessed dataset to replicate the DreamZero-DROID checkpoint.

🌐 dreamzero0.github.io

💻 github.com/dreamzero0/dre…

RoboArena: robo-arena.github.io/leaderboard

MolmoSpaces: molmospaces.allen.ai/leaderboard

Max argus retweeted

MolmoSpaces provides singular scale and diversity. We built a benchmark that puts that scale to use.

MolmoSpaces-Bench evaluates policies zero-shot across thousands of previously unseen environments under systematic variation, providing insights that go beyond a single success-rate percentage.

More below:

Ai2 @allen_ai

Introducing MolmoSpaces, a large-scale, fully open platform + benchmark for embodied AI research. 🤖 230k+ indoor scenes, 130k+ object models, & 42M annotated robotic grasps—all in one ecosystem.

Max argus retweeted

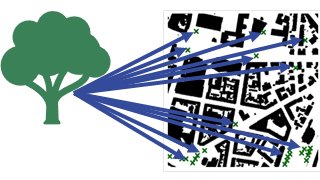

Excited to present “Climate-sensitive Urban Planning through Optimization of Tree Placements” w/ Ferdinand Briegel, Max Argus, @envmet & @ThomasBrox at the #NeurIPS2023 @ClimateChangeAI workshop. TL;DR: we optimize urban tree locations to improve outdoor human thermal comfort.
