MEGA Code

49 posts

@megacode_ai

Agent Optimizer for Your Self-Evolving AI. GitHub: https://t.co/yeMUVJGVTm

Palantir CEO Alex Karp says only two types of people will survive the AI era...

- Drafted a blog post.
- Used an LLM to meticulously improve the argument over 4 hours.
- Wow, feeling great, it’s so convincing!
- Fun idea: let’s ask it to argue the opposite.
- LLM demolishes the entire argument and convinces me that the opposite is in fact true.
- lol

LLMs may give an opinion when asked, but they are extremely competent at arguing in almost any direction. This is actually super useful as a tool for forming your own opinions; just make sure to ask it to argue in different directions and be careful with the sycophancy.

When @karpathy built MenuGen (karpathy.bearblog.dev/vibe-coding-me…), he said:

"Vibe coding menugen was exhilarating and fun escapade as a local demo, but a bit of a painful slog as a deployed, real app. Building a modern app is a bit like assembling IKEA furniture. There are all these services, docs, API keys, configurations, dev/prod deployments, team and security features, rate limits, pricing tiers."

We've all run into this issue when building with agents: you have to scurry off to establish accounts, clicking things in the browser as though it's the antediluvian days of 2023, in order to unblock the agent's superintelligent progress.

So we decided to build Stripe Projects to help agents instantly provision services from the CLI. For example, simply run:

$ stripe projects add posthog/analytics

And it'll create a PostHog account, get an API key, and (as needed) set up billing.

Projects is launching today as a developer preview. You can register for access (we'll make it available to everyone soon) at projects.dev. We're also rolling out support for many new providers over the coming weeks. (Get in touch if you'd like to make your service available.)

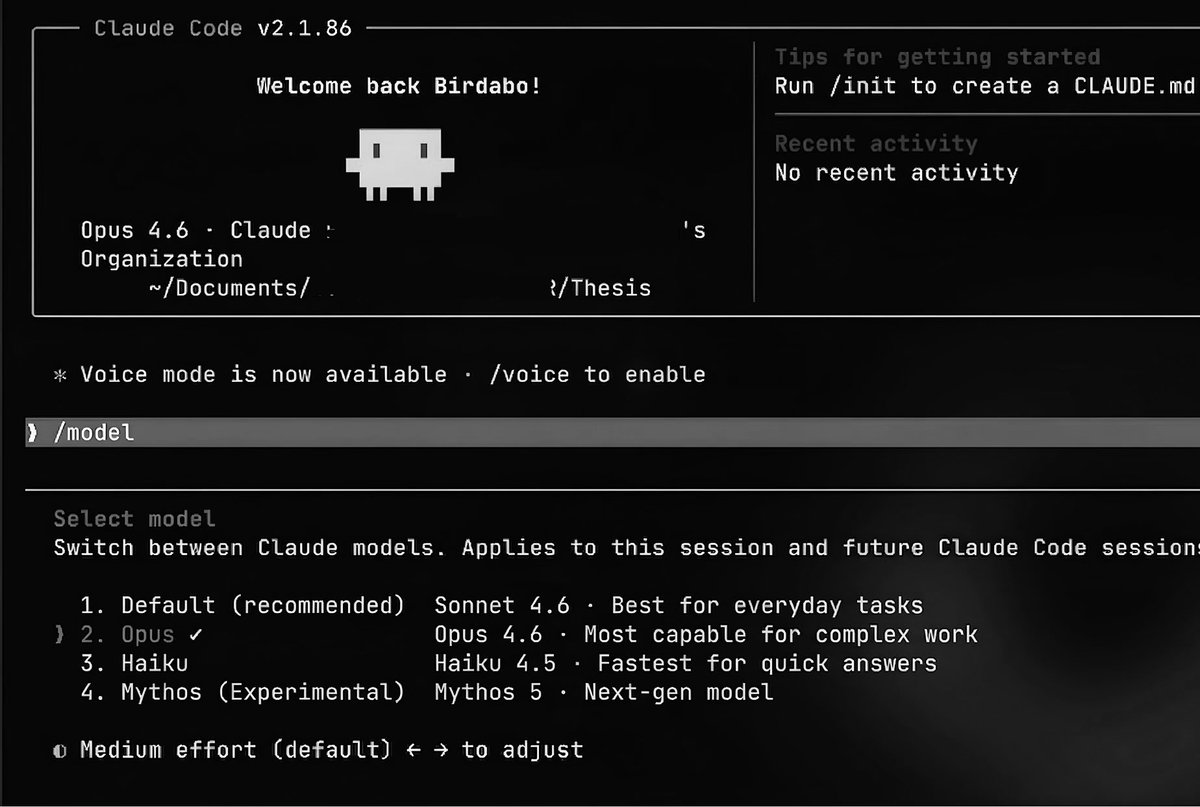

Friends at both big tech and startups tell me they’re spending more than $1000 per day on Claude Code or Codex tokens. That’s $365,000/year. We’re not far from companies spending more on LLM tokens than on human employees.
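The annual figure above follows from simple multiplication. As a rough sketch, assuming a flat $1,000 per day on every day of a 365-day year (the function and constant names here are illustrative, not from the post):

```python
# Assumption: spend is a flat daily amount, incurred every day of a 365-day year.
DAILY_TOKEN_SPEND_USD = 1_000
DAYS_PER_YEAR = 365


def annual_spend(daily_usd: int, days: int = DAYS_PER_YEAR) -> int:
    """Return the yearly total for a constant daily spend."""
    return daily_usd * days


print(f"${annual_spend(DAILY_TOKEN_SPEND_USD):,}/year")  # prints "$365,000/year"
```

Counting only working days instead (for example `annual_spend(1_000, days=250)`) would give a lower total, so the $365,000 figure is best read as an upper-end estimate under the every-day assumption.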

We are entering the second half of research. Here is my advice to every PhD student before starting a project:

1. Can Claude Code solve it in a day?
2. Will a Research Agent solve it soon?
3. Will scaling solve it anyway?

If the answer to all three is No, then maybe you have found a real research problem. Because in the age of AI, many things that looked like research are being revealed as delayed engineering.

That does not make research less important. It makes problem selection more important than ever. The scarce resource is no longer intelligence. It is taste. It is originality. It is the ability to ask questions that survive automation.

The first half of research was about solving hard problems. The second half is about knowing which problems are still worth solving.

#research #academic #AI #GenAI #generativeai #airesearch #taste
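The three-question filter above can be written down as a small checklist. This is only an illustrative sketch: the class and field names are hypothetical, and the answers still have to come from your own judgment.

```python
from dataclasses import dataclass


@dataclass
class ProblemCheck:
    """Answers to the three questions; field names are illustrative."""
    claude_code_solves_in_a_day: bool
    research_agent_solves_soon: bool
    scaling_solves_anyway: bool


def maybe_real_research_problem(check: ProblemCheck) -> bool:
    """True only when the answer to all three questions is No."""
    return not (
        check.claude_code_solves_in_a_day
        or check.research_agent_solves_soon
        or check.scaling_solves_anyway
    )


candidate = ProblemCheck(
    claude_code_solves_in_a_day=False,
    research_agent_solves_soon=False,
    scaling_solves_anyway=False,
)
print(maybe_real_research_problem(candidate))  # prints "True"
```

A single Yes is enough to fail the filter, which matches the post's framing: passing it means only that the problem *might* be real research, not that it is.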