MiBakh

1.1K posts

MiBakh retweeted

Wondering how we give life to our robots?

Just released a blog post on how we bring our robotics ideas from initial sketch to functional product in a matter of days!

Fable Engineering #4 — From Sketch to Product in Days, not Weeks open.substack.com/pub/fableengin…

In a world where software interfaces are being vibe-coded, fast hardware execution is key to compressing iteration loops.

#Robotics #Startup #AI

MiBakh retweeted

I also wanted to take a second to reiterate the 75% price drop on embeddings. This is actually pretty crazy. You used to be able to embed the whole internet for ~$50M; now it is down to ~$12.5M.

twitter.com/BorisMPower/st…

Boris Power @BorisMPower

Some more napkin math - size of the Internet is ~10^11 pages of text*, this would cost (only?) $50M to embed. Who wants to take on Google?
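The napkin math in these two posts can be checked directly. This sketch uses only the figures quoted there (~10^11 pages, ~$50M, a 75% price cut); the per-page costs are derived from those figures, not taken from any published price list.

```python
PAGES = 10**11        # ~10^11 pages of text (Boris Power's estimate)
OLD_TOTAL_USD = 50e6  # ~$50M to embed all of them at the old price
PRICE_DROP = 0.75     # the 75% price cut

cost_per_page_old = OLD_TOTAL_USD / PAGES     # implied dollars per page before the cut
new_total = OLD_TOTAL_USD * (1 - PRICE_DROP)  # same corpus after the cut
cost_per_page_new = new_total / PAGES         # implied dollars per page after the cut

print(f"old: ${cost_per_page_old:.4f}/page, total ${OLD_TOTAL_USD / 1e6:.1f}M")
print(f"new: ${cost_per_page_new:.6f}/page, total ${new_total / 1e6:.1f}M")
```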

MiBakh retweeted

One of the biggest open questions with AI is its impact on software business models over time. What seems to be under-appreciated is how AI can enable significant TAM (total addressable market) expansion across a large number of categories when software delivers outcomes rather than just enabling existing work.

Right now the dominant business model in SaaS is a per-seat model, which inevitably means that the total number of seats you can sell is limited to the number of employees in the organization who are relevant to your particular software. Legal software is roughly capped by the size of the legal team, audit software by the size of the audit team, and so on. The implication is that the customer generally *already* has to have not only a need for your solution, but also the existing headcount to become users of your software. Incidentally, this is often why so many SaaS products go after horizontal productivity categories: doing so maximizes the number of potential users you can reach in an organization.

AI flips this on its head, especially with the power of AI agents, and you get a new form of “outcome-as-a-service”. When AI is actually doing the work within the software itself, you're no longer constrained by the number of employees in the organization who can use the software. The software quite literally brings the work along with it and delivers a particular business outcome. The full potential of this is clearly not yet understood, as it represents a massive transformation of software as an industry.

When you are no longer constrained by the size of a team or department using your software, markets are no longer arbitrarily capped in size. In this new era, software that powers a legal workflow actually brings the equivalent of legal knowledge work along with it, and software for audits brings the equivalent of audit work with it. All of a sudden, small businesses, under-resourced teams in large enterprises, and entirely new geographies begin to open up as markets. AI will enable otherwise niche categories of software to become much larger, and already large categories to become even bigger.

This transformation is similar to what we've seen in other markets where an innovation expanded a market well beyond its original demand. For instance, most investors and economists would have assumed that Uber's or Lyft's market was the size of the existing taxi market, when in fact it turned out to be orders of magnitude larger once the shape of the product changed to make the offering easier to consume. We’ve seen this effect time and time again in SaaS, cloud computing, a variety of mobile categories, and more.

We’re only in the earliest stages of figuring out what this all means for the future of the software business model. Clearly, entirely new monetization variables will need to emerge when customers pay software vendors for outcomes as opposed to just the software itself. But inevitably, when you remove the dominant constraint of enterprise software (the number of seats), TAM expansion will follow.

MiBakh retweeted

👀 Have you explored Agentc yet?

Agentc is a ChatGPT-like tool built on The Graph’s Uniswap data! For the next 2 weeks, interact with AI & The Graph using natural language... & compete for bounties!

Let’s see what you can do 📊

🔗 agentc.xyz

MiBakh retweeted

🚨 BREAKING:

Open-source robotics program - Le Robot.

@huggingface x @pollenrobotics! 🤝

This is further proof that the 'humanoid race' is not slowing down and more big players are joining.

Check out the first project from Hugging Face's open-source robotics program, "Le Robot," in collaboration with Pollen Robotics! 🎉

ℹ️ A few facts about the French humanoid robot:

→ Reachy2 is a humanoid robot designed by Pollen Robotics, trained by Hugging Face to do various household chores and interact safely with humans and dogs.

→ The robot was initially teleoperated by a human wearing a virtual reality headset, who controlled it through various tasks.

→ A machine learning algorithm then studied 50 videos of the teleoperation sessions, learning how to do the tasks on its own and guiding Reachy2 to do them.

→ The dataset and model used for the demo are open-sourced on Hugging Face, and available for anyone to use.
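The training step described in these bullets is a form of learning from demonstrations, often called behavior cloning: fit a policy that maps what the robot observes to what the human operator did. Below is a deliberately tiny, hypothetical illustration: a 1-D linear policy fit by least squares to synthetic (observation, action) pairs. It is not the model, data, or code used in the Reachy2 demo, which is far richer.

```python
from typing import Callable, List, Tuple


def fit_linear_policy(demos: List[Tuple[float, float]]) -> Callable[[float], float]:
    """Behavior cloning in miniature: least-squares fit of action = w * obs + b."""
    n = len(demos)
    mean_obs = sum(o for o, _ in demos) / n
    mean_act = sum(a for _, a in demos) / n
    cov = sum((o - mean_obs) * (a - mean_act) for o, a in demos)
    var = sum((o - mean_obs) ** 2 for o, _ in demos)
    w = cov / var                 # slope that best reproduces the operator's actions
    b = mean_act - w * mean_obs   # offset of the fitted policy
    return lambda obs: w * obs + b


# Hypothetical teleoperation log: the operator's command was 0.5 * observation + 0.1.
demos = [(o / 10, 0.5 * (o / 10) + 0.1) for o in range(10)]
policy = fit_linear_policy(demos)
print(round(policy(1.0), 3))  # 0.6, an observation outside the demos
```

The same supervised recipe scales up by swapping the linear map for a neural network and the scalar observation for camera frames and joint states.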

❓ Question: How will open-source robotics AI change the game for robotics development?

Credits: @RemiCadene

~~~

♻️ RT to help 1 robot find a new workplace.

MiBakh retweeted

📽️ New 4-hour (lol) video lecture on YouTube:

"Let’s reproduce GPT-2 (124M)"

youtu.be/l8pRSuU81PU

The video ended up so long because it is... comprehensive: we start with an empty file and end up with a GPT-2 (124M) model:

- first we build the GPT-2 network

- then we optimize it to train very fast

- then we set up the training run optimization and hyperparameters by referencing the GPT-2 and GPT-3 papers

- then we bring up model evaluation, and

- then cross our fingers and go to sleep.

In the morning we look through the results and enjoy amusing model generations. Our "overnight" run even gets very close to the GPT-3 (124M) model. This video builds on the Zero To Hero series and at times references previous videos. You could also see this video as building my nanoGPT repo, which by the end is about 90% similar.
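For readers wondering where the "124M" comes from, here is a parameter count for GPT-2 small. It assumes the standard GPT-2 small configuration (vocabulary 50257, context 1024, 12 layers, width 768, 4x MLP expansion, biases everywhere except the output head) and tied `wte`/`lm_head` weights, the topic of the "parameter sharing wte and lm_head" chapter. The function name is illustrative.

```python
def gpt2_param_count(vocab=50257, n_ctx=1024, n_layer=12, d=768, tied=True):
    """Count trainable parameters of a GPT-2-style decoder (weights + biases)."""
    wte = vocab * d                 # token embedding table
    wpe = n_ctx * d                 # learned positional embeddings
    ln = 2 * d                      # one LayerNorm: weight + bias
    attn_qkv = d * 3 * d + 3 * d    # fused q, k, v projection
    attn_proj = d * d + d           # attention output projection
    mlp_fc = d * 4 * d + 4 * d      # MLP expansion to 4*d
    mlp_proj = 4 * d * d + d        # MLP projection back to d
    block = 2 * ln + attn_qkv + attn_proj + mlp_fc + mlp_proj
    total = wte + wpe + n_layer * block + ln  # final ln adds the closing LayerNorm (ln_f)
    if not tied:
        total += vocab * d          # a separate lm_head matrix (no bias)
    return total


print(gpt2_param_count())  # 124439808
```

Without weight tying the same model would carry another 50257 x 768 ≈ 38.6M parameters, which is why sharing `wte` and `lm_head` is worth a chapter.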

GitHub. The associated GitHub repo contains the full commit history, so you can step through all of the code changes in the video one by one.

github.com/karpathy/build…

Chapters.

At a high level, Section 1 builds up the network (a lot of this might be review), Section 2 makes the training fast, Section 3 sets up the run, and Section 4 covers the results. In more detail:

00:00:00 intro: Let’s reproduce GPT-2 (124M)

00:03:39 exploring the GPT-2 (124M) OpenAI checkpoint

00:13:47 SECTION 1: implementing the GPT-2 nn.Module

00:28:08 loading the huggingface/GPT-2 parameters

00:31:00 implementing the forward pass to get logits

00:33:31 sampling init, prefix tokens, tokenization

00:37:02 sampling loop

00:41:47 sample, auto-detect the device

00:45:50 let’s train: data batches (B,T) → logits (B,T,C)

00:52:53 cross entropy loss

00:56:42 optimization loop: overfit a single batch

01:02:00 data loader lite

01:06:14 parameter sharing wte and lm_head

01:13:47 model initialization: std 0.02, residual init

01:22:18 SECTION 2: Let’s make it fast. GPUs, mixed precision, 1000ms

01:28:14 Tensor Cores, timing the code, TF32 precision, 333ms

01:39:38 float16, gradient scalers, bfloat16, 300ms

01:48:15 torch.compile, Python overhead, kernel fusion, 130ms

02:00:18 flash attention, 96ms

02:06:54 nice/ugly numbers. vocab size 50257 → 50304, 93ms

02:14:55 SECTION 3: hyperparameters, AdamW, gradient clipping

02:21:06 learning rate scheduler: warmup + cosine decay

02:26:21 batch size schedule, weight decay, FusedAdamW, 90ms

02:34:09 gradient accumulation

02:46:52 distributed data parallel (DDP)

03:10:21 datasets used in GPT-2, GPT-3, FineWeb (EDU)

03:23:10 validation data split, validation loss, sampling revive

03:28:23 evaluation: HellaSwag, starting the run

03:43:05 SECTION 4: results in the morning! GPT-2, GPT-3 repro

03:56:21 shoutout to llm.c, equivalent but faster code in raw C/CUDA

03:59:39 summary, phew, build-nanogpt github repo
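Three of the chapter topics reduce to a few lines of arithmetic: the warmup + cosine-decay learning-rate schedule, rounding the vocabulary size up to a "nice" number, and the number of gradient-accumulation micro-steps. This is a sketch in plain Python; the defaults (max_lr=6e-4, min_lr=6e-5, the warmup/max step counts, a 2**19-token batch with B=16, T=1024) are illustrative assumptions, not necessarily the values used in the video.

```python
import math


def lr_at(step, max_lr=6e-4, min_lr=6e-5, warmup_steps=10, max_steps=50):
    """Linear warmup, then cosine decay from max_lr down to min_lr."""
    if step < warmup_steps:                       # 1) linear warmup
        return max_lr * (step + 1) / warmup_steps
    if step > max_steps:                          # 3) hold at the floor afterwards
        return min_lr
    ratio = (step - warmup_steps) / (max_steps - warmup_steps)  # goes 0 -> 1
    coeff = 0.5 * (1.0 + math.cos(math.pi * ratio))             # goes 1 -> 0
    return min_lr + coeff * (max_lr - min_lr)     # 2) cosine decay


def pad_vocab(n, multiple=64):
    """Round n up to the next multiple, e.g. 50257 -> 50304 for multiple=64."""
    return ((n + multiple - 1) // multiple) * multiple


def grad_accum_steps(total_batch_tokens, B, T, world_size=1):
    """Micro-steps per optimizer step so that B*T*world_size tokens sum to the full batch."""
    tokens_per_micro_step = B * T * world_size
    assert total_batch_tokens % tokens_per_micro_step == 0, "batch must divide evenly"
    return total_batch_tokens // tokens_per_micro_step


print(pad_vocab(50257))                   # 50304
print(grad_accum_steps(2**19, 16, 1024))  # 32
```

The padded vocabulary rows correspond to token IDs the tokenizer never emits, so they cost a little memory in exchange for kernel-friendly matrix shapes.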

MiBakh retweeted

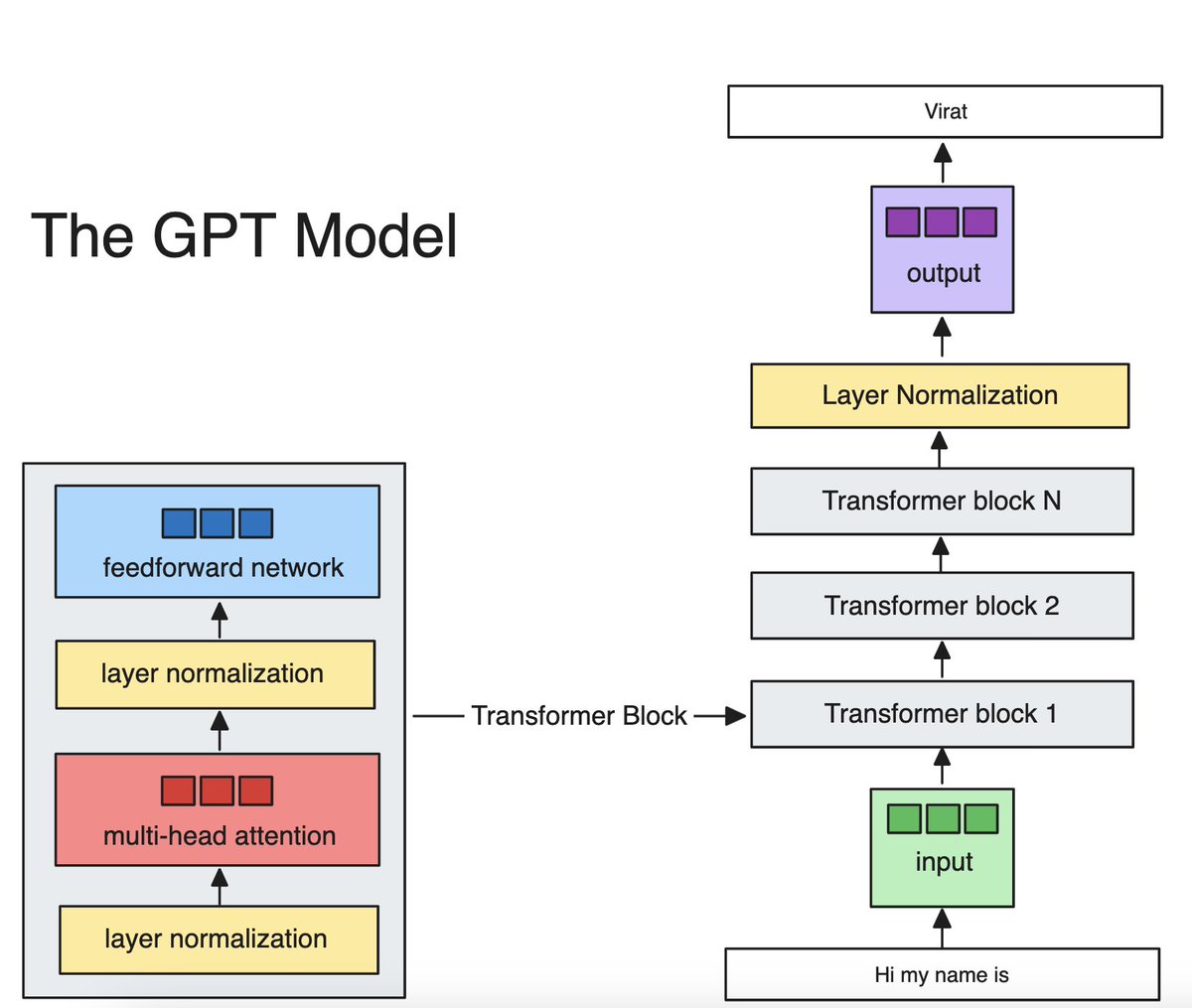

I finally understand how GPT generates text.

Really helps to code it from scratch in Python.

There are 5 components:

• token embeddings

• positional embeddings

• transformer blocks

• layer normalization

• output head

It sounds complex, but grokking GPT is simple.

Token embeddings turn input tokens into vectors that capture semantic meaning.

Positional embeddings encode positions of input tokens. This tells GPT "where" each token is in the input text.

Transformer blocks are the processing powerhouse. This is where attention happens and where most of the model's learned parameters live.

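Layer normalization rescales each token's activations to a stable range (zero mean and unit variance, followed by a learned scale and shift), which improves training stability.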

The output head projects the final features onto the vocabulary to produce a next-token prediction.
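To make the five components concrete, here is a toy, pure-Python forward pass under heavy simplifying assumptions: random untrained weights, hypothetical tiny sizes, transformer blocks replaced by the identity, layer normalization without its learned scale and shift, and an output head that reuses the token-embedding matrix (weight tying, as GPT-2 does). It shows only the data flow, not a working language model.

```python
import math
import random


def layer_norm(x, eps=1e-5):
    """Normalize one vector to zero mean and unit variance (learned gain/bias omitted)."""
    mean = sum(x) / len(x)
    var = sum((v - mean) ** 2 for v in x) / len(x)
    return [(v - mean) / math.sqrt(var + eps) for v in x]


def next_token(token_ids, wte, wpe):
    # 1) token embeddings + 2) positional embeddings, summed at each position
    h = [[t + p for t, p in zip(wte[tok], wpe[pos])] for pos, tok in enumerate(token_ids)]
    # 3) transformer blocks would transform h here; identity in this sketch
    # 4) final layer normalization
    h = [layer_norm(vec) for vec in h]
    # 5) output head: dot the last position with every token embedding -> logits
    last = h[-1]
    logits = [sum(a * b for a, b in zip(last, row)) for row in wte]
    return max(range(len(logits)), key=lambda i: logits[i])  # greedy choice


random.seed(0)
VOCAB, CTX, D = 10, 8, 4  # hypothetical toy sizes
wte = [[random.gauss(0, 0.02) for _ in range(D)] for _ in range(VOCAB)]
wpe = [[random.gauss(0, 0.02) for _ in range(D)] for _ in range(CTX)]
print(next_token([1, 5, 3], wte, wpe))  # some id in range(VOCAB); arbitrary, since untrained
```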

And that's it!

I recommend running the code in the attached image and then implementing it step-by-step on your own.

Happy learning.

MiBakh retweeted

Check out the inaugural issue of the International Journal of Artificial Intelligence and Robotics Research (IJAIRR)!

Articles on #GenerativeAI, #RoboticPlans, #LanguageRecognition, and #NeuralNetwork training.

Click to access articles.

MiBakh retweeted

Let's build the `LLM OS`, inspired by the great @karpathy

Can LLMs be the CPU of a new operating system and solve problems using:

💻 software 1.0 tools

🌎 internet browsing

📕 knowledge retrieval

🤖 communication with other LLMs

Code: git.new/llm-os
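A minimal sketch of the "LLM as CPU" idea: the model chooses a tool and an argument, and the runtime dispatches the call and returns the result. Everything here is hypothetical: the tool names, the tiny knowledge store, and especially `stub_llm_plan`, a keyword router standing in for a real LLM call. It is not the code at git.new/llm-os.

```python
from typing import Callable, Dict, Tuple


def calculator(arg: str) -> str:
    """A 'software 1.0' tool: add whitespace-separated numbers."""
    return str(sum(float(x) for x in arg.split()))


KNOWLEDGE = {"llm os": "An LLM acting as the CPU that coordinates tools."}


def retrieve(arg: str) -> str:
    """A toy knowledge-retrieval tool backed by an in-memory dict."""
    return KNOWLEDGE.get(arg.lower(), "no match")


TOOLS: Dict[str, Callable[[str], str]] = {"calculator": calculator, "retrieve": retrieve}


def stub_llm_plan(request: str) -> Tuple[str, str]:
    """Stand-in for an LLM call that returns (tool_name, argument)."""
    if request.startswith("sum "):
        return "calculator", request[len("sum "):]
    return "retrieve", request


def run(request: str) -> str:
    tool_name, arg = stub_llm_plan(request)  # the "CPU" decides what to execute
    return TOOLS[tool_name](arg)             # the runtime executes the chosen tool


print(run("sum 2 3 4"))  # 9.0
print(run("LLM OS"))
```

In a real system the planner would be a model call returning structured output, and browsing or calls to other LLMs would simply be more entries in `TOOLS`.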

MiBakh retweeted

You can make an unlimited amount of money. Do the following:

Solve a big problem that the client sees as necessary and urgent. Design your entire conversation and content around addressing this.

Here are 12 steps to follow.

Step 1: Who is your client? Describe them. Most get stuck here because they can’t commit to a single dream client avatar. Less is more.

Step 2: What do they want?

Step 3: Why do they want it?

Step 4: What’s the obstacle standing in their way?

Step 5: In an ideal world, what is their dream outcome?

Step 6: What are they afraid of? What negative outcomes are they trying to avoid?

Step 7: What have they tried in the past that hasn’t worked? What gives them pause to try again?

Step 8: How would they measure success?

Step 9: What impact would this have on their life and business?

Step 10: What percentage of the value created feels fair to spend?

Step 11: Are there any reasons preventing them from moving forward with someone like you?

Step 12: What do they want to do next?

MiBakh retweeted

Yes!

A compute kernel that lets anyone build a configurable #SmartAgent, progressively enhanced (and personalized) through loose coupling with #OpenAPI-compliant #APIs.

That’s all we need, at #Web-scale.

#TheInevitable #AI #GenAI #LLMs

The Technology Brother @thetechbrother

OpenAI CEO Sam Altman on the key to having a world where we have reasoning agents interfacing with the world -- a shared interface: