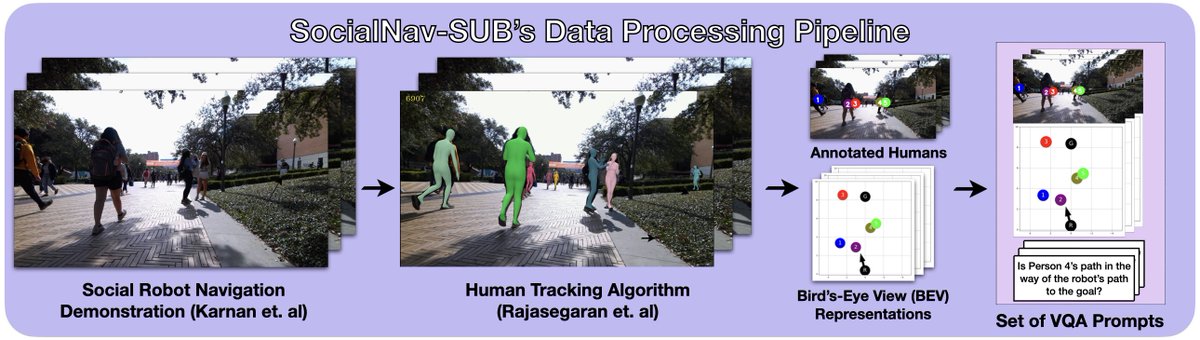

[1/8] New social navigation paper + benchmark: SocialNav-SUB 🚶🤖 Recent work puts VLMs on robots for navigation, but can they really interpret scenes and extract key details for social navigation? 🔎 larg.github.io/socialnav-sub