Michael Ryan

309 posts

@michaelryan207

PhD Student @stanfordnlp || Working on DSPy 🧩 || Prev @GeorgiaTech @Microsoft @SnowflakeDB

If you work in AI, you work in a human-capital-bound field. You get to vote with your feet on how the world will turn out. I would encourage everyone to think carefully about what they support.

🧠🎙️ We’re co-hosting an Augmented Mind Podcast Meetup w/ a16z — Tue Feb 24 (11–1) @ Gates CS (Stanford)! If you’re into technical human-centered AI and want an easy, low-pressure way to meet others building in the space, come hang out! 🔗Link to RSVP Below

Can we build a blind, *unlinkable inference* layer where ChatGPT/Claude/Gemini can't tell which call came from which user, like a “VPN for AI inference”? Yes! Blog post below + we built it into open-source infra and a chat app, and have served >15k prompts at Stanford so far. Here's how it helps with AI user privacy:

# The AI user privacy problem

If you ask AI to analyze your ChatGPT history today, it’s surprisingly easy to infer your demographics, health, immigration status, and political beliefs. Every prompt we send accumulates into an identity-linked profile that the AI lab controls completely and indefinitely.

At a minimum, this is a goldmine for ads (as we now know). A bigger issue is the concentration of power: AI labs can easily become (or be asked to become) a Cambridge Analytica, whistleblow your immigration status, or work with health insurers to adjust your premium if they so choose. This problem is uniquely worse than it was with search engines: the average query is now more revealing (not just keywords) and interactive, and intelligence is now cheap.

Despite this, most of us still want these remote models; they’re just too good and convenient! (This is aka the “privacy paradox”.)

# Unlinkable inference as a user privacy architecture

The idea of unlinkable inference is to add privacy while preserving access to remote models controlled by someone else: a “privacy wrapper” or “VPN for AI inference”, so to speak. Concretely, it’s a blind inference middle layer that:
(1) consists of decentralized proxies that anyone can operate;
(2) blindly authenticates requests (via blind signatures; RFC 9474, RFC 9578) so requests are provably sandboxed from each other and from user identity;
(3) relays prompts over randomly chosen proxies that don’t see or log traffic (via client-side ephemeral keys or hosting in TEEs); and
(4) lets the provider see only a mixed pool of anonymous prompts from the proxies.

No state, pseudonyms, or linkable metadata.
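
Step (2) relies on blind signatures. The sketch below illustrates the core idea with textbook RSA blinding. It is a minimal, non-authoritative example under stated assumptions: toy-sized primes and a bare SHA-256 hash stand in for the real-size keys and RSA-PSS encoding that RFC 9474 specifies, and the function names (`blind`, `issuer_sign`, `unblind`, `verify`) are hypothetical, not the project's API. The issuer signs a value it cannot read, so when the unblinded token later reaches a proxy, the issuer holds no record linking it to the user who requested it.

```python
import hashlib
import math
import secrets

# Toy issuer key built from two small known primes (assumption: demo only;
# real deployments need far larger keys and RSA-PSS encoding per RFC 9474).
P, Q = 1_000_000_007, 998_244_353
N = P * Q
E = 65537
D = pow(E, -1, (P - 1) * (Q - 1))  # private exponent, known only to the issuer


def hash_to_int(msg: bytes) -> int:
    # Simplified full-domain hash into Z_N (no PSS encoding).
    return int.from_bytes(hashlib.sha256(msg).digest(), "big") % N


def blind(msg: bytes) -> tuple[int, int]:
    # Client: multiply the hash by r^E for a random r invertible mod N.
    while True:
        r = secrets.randbelow(N - 2) + 2
        if math.gcd(r, N) == 1:
            return (hash_to_int(msg) * pow(r, E, N)) % N, r


def issuer_sign(blinded: int) -> int:
    # Issuer: signs the blinded value; it never sees msg or its hash.
    return pow(blinded, D, N)


def unblind(blind_sig: int, r: int) -> int:
    # Client: (h * r^E)^D = h^D * r (mod N), so multiplying by r^-1 leaves h^D.
    return (blind_sig * pow(r, -1, N)) % N


def verify(msg: bytes, sig: int) -> bool:
    # Anyone holding the public key (N, E) can check the token.
    return pow(sig, E, N) == hash_to_int(msg)


# One-time credential: the issuer would authenticate the user before signing
# (not shown); the resulting (token, sig) pair can then be spent anonymously.
token = secrets.token_bytes(32)
blinded, r = blind(token)
sig = unblind(issuer_sign(blinded), r)
print(verify(token, sig))  # True
```

The only value the issuer ever observes is `blinded`, which is masked by a fresh random `r` each time, so it cannot match a later `(token, sig)` pair back to a signing request.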
If you squint, an unlinkable inference layer is essentially a vendor of per-request, anonymous, ephemeral AI access credentials (for users and agents alike). It partitions your context so that tracking users becomes drastically harder.

Obviously, unlinkability isn't a silver bullet: the prompt itself still goes to the remote model and can leak private information (so don't use our chat app for a therapy session!). It targets *longitudinal tracking*, a major threat to user privacy, and its statistical power grows quickly as more users and requests are mixed together.

Unlinkability can be applied at any granularity. For an AI chat app, you can unlinkably request a fresh ephemeral key for every session, so tracking across sessions becomes virtually impossible.

# The Open Anonymity Project

We started this project believing that intelligence should be a truly public utility. As with water and electricity, providers should be paid according to usage, not according to who you are or what you do with it. We think unlinkable inference is a first step toward this “intelligence neutrality”.

# Try it out!

It’s quite practical:
- Chat app “oa-chat”: chat.openanonymity.ai (<20 seconds to get going)
- Blog post that should be a fun read: openanonymity.ai/blog/unlinkabl…
- Project page: openanonymity.ai
- GitHub: github.com/OpenAnonymity
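
The per-session granularity described in the unlinkable inference post can be sketched roughly as follows. This is a hypothetical illustration, not the project's code: `PROXIES`, `Session`, and `route` are invented names, the credential is a plain random token standing in for a blindly issued one, and the actual relaying and encryption are omitted. Requests within one session share a credential (the chat needs its context), but nothing in the envelopes joins one session to another.

```python
import secrets
from dataclasses import dataclass, field

# Hypothetical pool of relays; in the post's design anyone can operate one.
PROXIES = ["proxy-a.example", "proxy-b.example", "proxy-c.example"]


@dataclass
class Session:
    """One chat session holds one fresh random credential (illustrative only)."""

    credential: str = field(default_factory=lambda: secrets.token_urlsafe(32))

    def route(self, prompt: str) -> dict:
        # Every request picks a relay uniformly at random. Besides the relay,
        # the envelope carries only the session credential and the prompt,
        # with no account ID or long-lived user key.
        return {
            "proxy": secrets.choice(PROXIES),
            "credential": self.credential,
            "prompt": prompt,
        }


# Same user, two sessions: the envelopes share no identifier.
s1, s2 = Session(), Session()
r1 = s1.route("draft an email to my landlord")
r2 = s2.route("summarize this paper")
print(r1["credential"] == r2["credential"])  # False (collision is negligible)
```

Minting a new credential for every `route` call instead of every `Session` would give per-request granularity, at the cost of the provider no longer being able to tie turns of the same conversation together.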

Introducing the curse of coordination. Agents perform 50% worse in teams than working alone. People building human-AI collaboration today don't realize why current LLMs fail to be good teammates. We built CooperBench to study this.

For humans, we recognize that teamwork isn't just the sum of individual capability: communication and coordination often outweigh raw skill. But for AI? We're only hill-climbing benchmarks that evaluate solo technical abilities.

CooperBench: a benchmark to evaluate agent cooperation in realistic software teamwork tasks.

The setup is intuitive: two agents, two tasks, two VMs, one chat channel (agents can send arbitrary text, even the entire patch they wrote). We evaluate whether the merged solution from both agents passes the requirements of both tasks.

The curse of coordination

The most striking result: agents perform 50% worse in teams (black line) than working alone (blue line).

Why is this happening? Is it because they can't use the communication tool? No. They spent 20% of their time sending messages. The problem? Those messages were repetitive or vague, ignored questions, or were straight-up hallucinated.

But bad communication is only part of the story. We found two deeper failures:
- Commitment: agents don't do what they promised.
- Expectations: agents don't expect others to keep their promises either.
Without these, cooperation collapses.

However, there is a silver lining

We also find emergent coordination behaviors, e.g., role division, resource division, and negotiation, which give us hope that we can use reinforcement learning to improve coordination.

What's next?

It's true that highly engineered multi-agent orchestration could largely sidestep the coordination problem. However, we care more about the AI's own capability: if we truly want AI to be our teammates, we need them to be natively capable of communicating and coordinating effectively. Two agents on software tasks is just the beginning.
The real goal: agents that can cooperate with us well enough to actually empower us. CooperBench is our first step. If you're working on this too, let's talk.

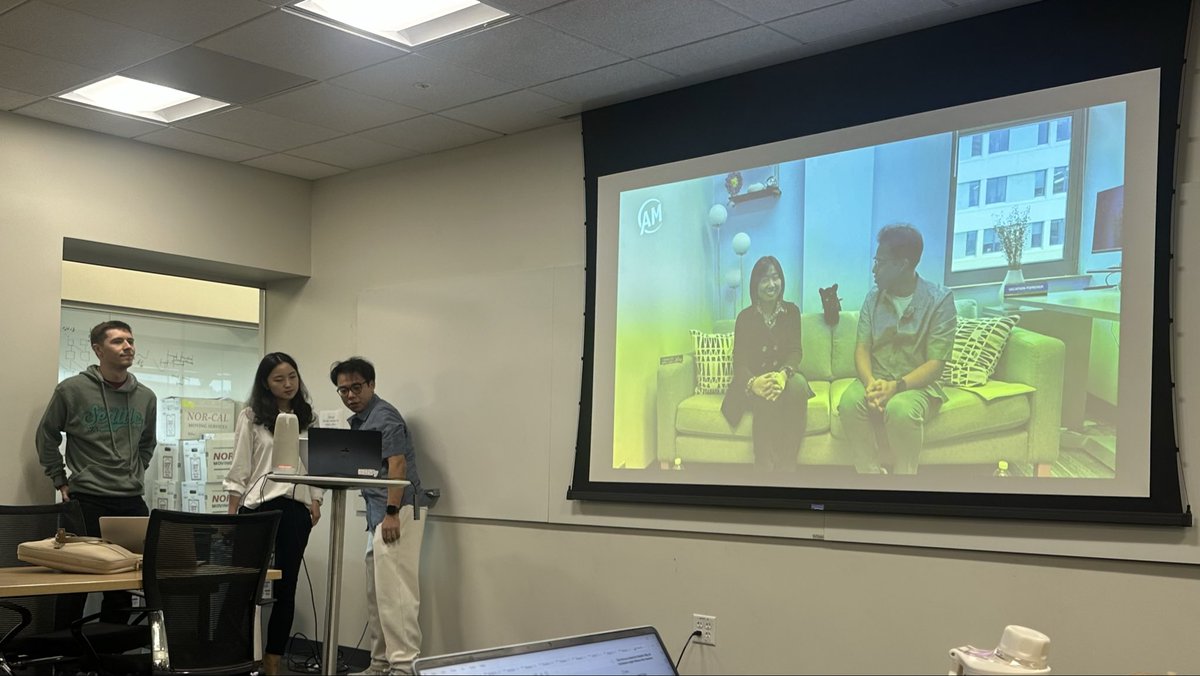
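
The CooperBench pass criterion (the merged solution must satisfy both tasks) can be illustrated with a toy model. This is a hypothetical sketch, not CooperBench's harness: patches are modeled as whole-file replacements, a "conflict" is two agents writing different contents to the same file, and requirements are simple predicates rather than test suites run in VMs. It shows how two individually correct agents can still fail as a team, and how role division fixes it.

```python
from typing import Callable, Dict, Optional

# Hypothetical model: a patch maps file paths to their full new contents.
Patch = Dict[str, str]
Requirement = Callable[[Patch], bool]


def merge(a: Patch, b: Patch) -> Optional[Patch]:
    """Union of both agents' edits; None if they rewrote a file differently."""
    merged = dict(a)
    for path, content in b.items():
        if path in merged and merged[path] != content:
            return None  # conflicting edits: the team fails outright
        merged[path] = content
    return merged


def team_success(a: Patch, b: Patch, check_a: Requirement, check_b: Requirement) -> bool:
    """Pass only if the merged result satisfies BOTH tasks' requirements."""
    merged = merge(a, b)
    return merged is not None and check_a(merged) and check_b(merged)


def req_a(p: Patch) -> bool:  # task A needs f() in utils.py
    return "def f" in p.get("utils.py", "")


def req_b(p: Patch) -> bool:  # task B needs g() in utils.py
    return "def g" in p.get("utils.py", "")


# Uncoordinated: each agent solves its own task by rewriting utils.py alone.
a = {"utils.py": "def f(): ...\n"}
b = {"utils.py": "def g(): ...\n"}
print(team_success(a, b, req_a, req_b))  # False: the edits conflict

# Coordinated via role division: B owns utils.py and writes both functions.
a2: Patch = {}
b2 = {"utils.py": "def f(): ...\ndef g(): ...\n"}
print(team_success(a2, b2, req_a, req_b))  # True
```

Each agent's patch in the first pair would pass its own task in isolation; only the merged evaluation exposes the coordination failure.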

Thank you so much for all your support and interest💛 We've got something new in the works — here's a first look at EP01 with @oshaikh13 👀 Full episode will be released tomorrow morning!

AI used to be a distant promise; now it permeates our lives. AI is getting better, but is it making us better? We are promised that AI will augment our minds, but how?

We (@EchoShao8899, @shannonzshen, and @michaelryan207) are excited to launch the Augmented Mind Podcast (The AM Podcast), a podcast about technical human-centered AI work. We'll share compelling research, infrastructure, and systems through monthly episodes featuring interviews with the pioneering minds behind them.

We release EP0 today to share who we are, why we started this podcast, and what we're looking forward to.

0:00 - Prelude: the problems we care about
1:48 - Host introduction
2:03 - Why we started the AM Podcast
2:31 - Hot takes on human-centered AI
10:45 - Format of our podcast
11:28 - Unique technical challenges in human-centered AI
16:45 - Let the journey begin!

Today we introduce humans&, a human-centric frontier AI lab. We believe AI can be reimagined, centering around people and their relationships with each other. At its best, AI should serve as a deeper connective tissue that strengthens organizations and communities.
