mindmodel

@mindmodel

Freelance C# / .NET web architect and developer, specializing in clean, simple, performant code. Looking for new projects. Check my website for demos, etc.

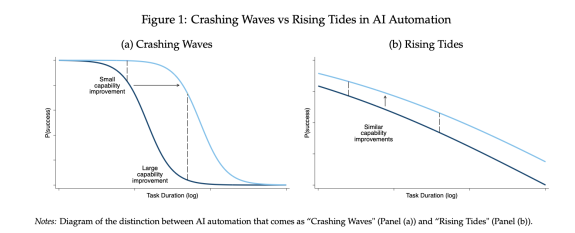

Marc Andreessen: Software isn't precious anymore. In this new world, high quality software is infinitely available. "We've always lived in a world in which software is this precious thing that you have to think about very carefully." "It was really hard to generate good software, and there was only a small number of people who could do it." "Those days are just over." "If you need new software to do X, Y, or Z, you're just going to wave your hand and get it." "Things that used to be hard, or even seem like an insurmountable mountain to get through, all of a sudden, I think, become very easy." @pmarca with @latentspacepod

realized the door i'm running in front of every day is anthropic's office. let's see attendance on a saturday

"Using coding agents well is taking every inch of my 25 years of experience as a software engineer."

Simon Willison (@simonw) is one of the most prolific independent software engineers and most trusted voices on how AI is changing the craft of building software. He co-created Django, coined the term "prompt injection," and popularized the terms "agentic engineering" and "AI slop."

In our in-depth conversation, we discuss:
🔸 Why November 2025 was an inflection point
🔸 The "dark factory" pattern
🔸 Why mid-career engineers (not juniors) are the most at risk right now
🔸 Three agentic engineering patterns he uses daily: red/green TDD, thin templates, hoarding
🔸 Why he writes 95% of his code from his phone while walking the dog
🔸 Why he thinks we're headed for an AI Challenger disaster
🔸 How a pelican riding a bicycle became the unofficial benchmark for AI model quality

Listen now 👇 youtu.be/wc8FBhQtdsA