Pinned Tweet

⛰️

99 posts

⛰️ retweeted

Synthetic data, generated from #DigitalTwins through computer simulations, is enabling developers to bootstrap physical #AI model training.

Learn how you can build custom SDG pipelines using NVIDIA NIM microservices for #OpenUSD.

🔗 nvda.ws/3WH6yY1

#SIGGRAPH2024 #GenAI

⛰️ retweeted

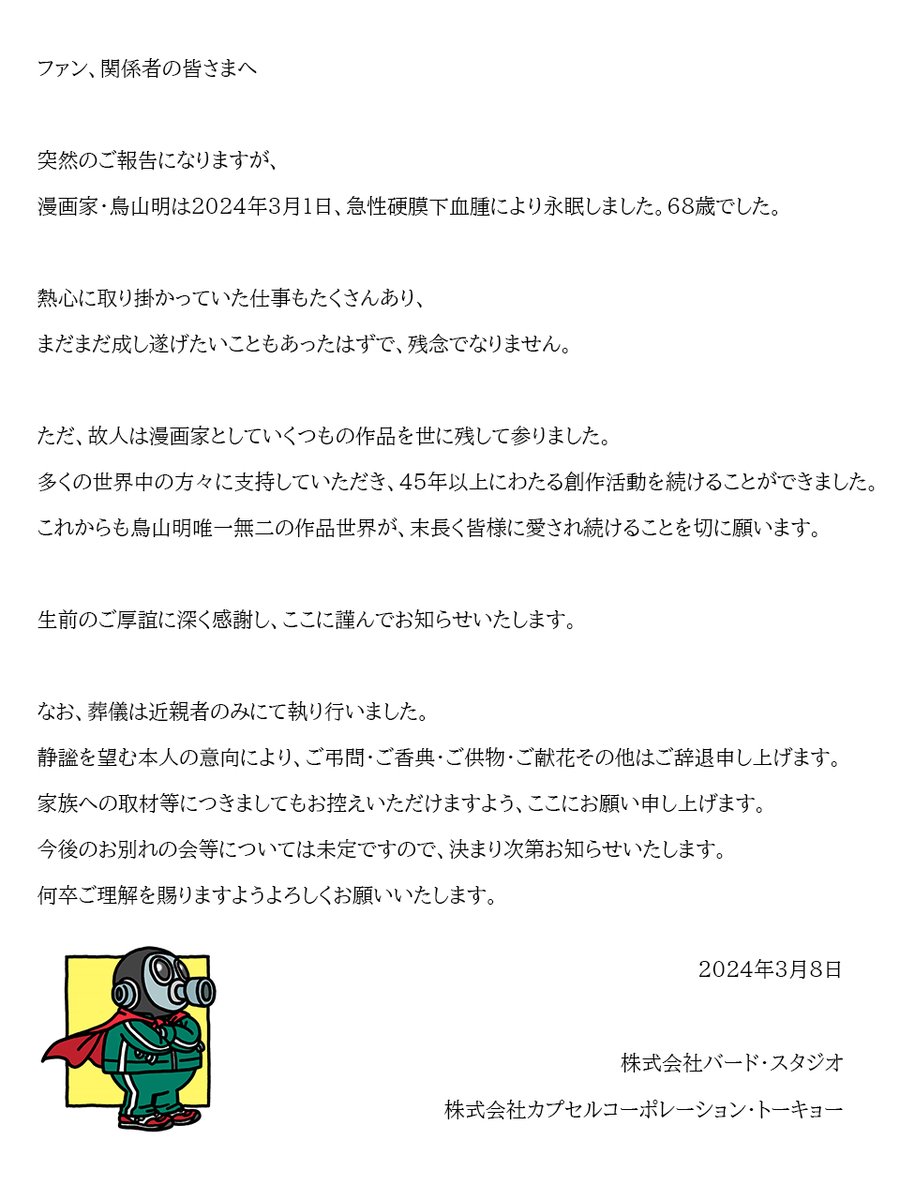

CAIR Lab at #CHI2024, 4 of 13 / Full Paper

Title: Enhancing UX Evaluation Through Collaboration with Conversational AI Assistants: Effects of Proactive Dialogue and Timing

Monday, May 13, at 12:00 / AI and Interaction Design

⛰️ retweeted

SWE-agent is our new system for autonomously solving issues in GitHub repos. It gets similar accuracy to Devin on SWE-bench, takes 93 seconds on avg + it's open source!

We designed a new agent-computer interface to make it easy for GPT-4 to edit+run code

github.com/princeton-nlp/…

⛰️ retweeted

AGI System Flow Chart for Solving Complex Problems

1. Problem Identification

- An initial LLM receives a complex problem and identifies its nature and scope.

2. Task Decomposition

- The problem is broken down into subtasks, and requirements are outlined.

3. Resource Allocation

- The CFO LLM allocates computational resources and prioritizes tasks based on complexity and urgency.

4. Tool and Information Gathering

- Relevant software tools are identified.

- The browser module gathers necessary information, possibly engaging in web research.

5. Subtask Distribution

- Subtasks are assigned to specialized LLMs, like the Python interpreter for computational tasks or the video/audio LLMs for multimedia processing.

6. Parallel Processing

- LLMs work on their assigned subtasks concurrently, leveraging the distributed architecture for efficiency.

7. Agentic Workflow Engagement

- Each LLM employs agentic reasoning, engaging in iterative processes, planning, reflection, and tool use specific to its subtask.

8. Intermediate Synthesis

- As subtasks are completed, an LLM synthesizes intermediate results, checking for coherence and completeness.

9. Multi-Agent Collaboration

- LLMs exchange information and results, engaging in multi-agent collaboration to refine solutions and fill gaps.

10. Executive Review

- The CEO LLM reviews the synthesized solution, ensuring alignment with the overall goal and strategy.

11. Optimization and Refinement

- The solution is iteratively optimized, with the COO LLM overseeing operational efficiency.

12. Final Synthesis

- All refined subtask solutions are integrated into a final solution.

13. Verification and Validation

- The final solution is verified against the problem requirements and validated for correctness and effectiveness.

14. Output Generation

- The verified solution is compiled into the appropriate format for output.

15. Delivery

- The final solution is delivered through peripheral device I/O or stored in the file system for later retrieval.

16. Feedback and Learning

- Performance feedback is collected, leading to system-wide learning and improvement for future problem-solving.

Summary

This flow chart outlines the stages an AGI system would go through in solving complex problems. It emphasizes the system's distributed nature, the specialized roles of various LLMs, and the importance of iterative processes, multi-agent collaboration, and continuous learning.
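A few of the stages above (decomposition, parallel processing, synthesis, verification) can be sketched in Python. This is only an illustrative toy under stated assumptions: every function name here is hypothetical, and plain string operations stand in for the LLM calls the flow chart describes.

```python
from concurrent.futures import ThreadPoolExecutor


def decompose(problem: str) -> list[str]:
    """Stage 2: split the problem into subtasks (stub: split on ' and ')."""
    return [part.strip() for part in problem.split(" and ")]


def solve_subtask(subtask: str) -> str:
    """Stages 5-7: one specialized worker handles one subtask (stub for an LLM call)."""
    return f"done: {subtask}"


def synthesize(results: list[str]) -> str:
    """Stages 8 and 12: merge the subtask results into one answer."""
    return "; ".join(results)


def verify(solution: str, subtasks: list[str]) -> bool:
    """Stage 13: check that every subtask is reflected in the solution."""
    return all(task in solution for task in subtasks)


def run_pipeline(problem: str) -> str:
    subtasks = decompose(problem)
    # Stage 6: run the workers concurrently; map() preserves input order.
    with ThreadPoolExecutor(max_workers=4) as pool:
        results = list(pool.map(solve_subtask, subtasks))
    solution = synthesize(results)
    if not verify(solution, subtasks):
        raise ValueError("solution does not cover every subtask")
    return solution


print(run_pipeline("fetch the data and plot the results"))
```

A thread pool is used because real LLM calls would mostly wait on network I/O; the resource allocation, executive review, and feedback stages are left out of this sketch.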

⛰️ retweeted

Hack of the day: Llama on a microcontroller

🦙🔬

Details and source code at github.com/maxbbraun/llam…

Thanks to @karpathy, whose llama2.c inspired this!

⛰️ retweeted

Our survey work "Web3 Metaverse: State-of-the-Art and Vision" has finally been accepted by ACM Transactions on Multimedia Computing, Communications and Applications. Early access: dl.acm.org/doi/10.1145/36… #web3 #metaverse #wagmi

🌟THRILLED TO SHARE: I’ve been awarded the Google PhD Fellowship for Human-Computer Interaction!🌟

A big shoutout to my incredible advisors, @kristenshino and @mingming_fan, as well as my brilliant collaborators, @jeffjianzhao, @cecialm, @ehsan_soure, @suffvier, and @minghao914

Google AI @GoogleAI

In 2009, Google created the PhD Fellowship Program to recognize and support outstanding graduate students pursuing exceptional research in computer science and related fields. Today, we congratulate the recipients of the 2023 Google PhD Fellowship! goo.gle/3PYfLXl

⛰️ retweeted

Excited to share our #ICCV2023 paper: Fine-tuning Vision-Language Models without Zero-Shot Transfer Degradation (ZSCL). ZSCL outperforms the pre-trained model on downstream tasks and maintains its zero-shot transferability to other tasks.

paper: openaccess.thecvf.com/content/ICCV20…

blog: zhengzangw.github.io/blogs/zscl/

Poster: 10:30am to 12:30pm, 6 Oct. Room Foyer Sud 143 x.com/zangweizheng/s…

⛰️ retweeted

The domain name is branchclash.com! Just try it.

Moonshot Commons | Where Web3 founders build @buildmoonshot

@BranchClash @gabby_world_ @genki_cats @theMatrixWorld 🏰 BranchClash @BranchClash: A Fully On-Chain Tower Defense Blockchain Game with New Collaboration Mechanism. 🔗 Pitch Video: drive.google.com/file/d/1GnjK9H…

⛰️ retweeted

To better enable the community to build on our work — and contribute to the responsible development of LLMs — we've published further details about the architecture, training compute, approach to fine-tuning & more for Llama 2 in a new paper.

Full paper➡️ bit.ly/44JAELQ

⛰️ retweeted

GPT-4 is getting worse over time, not better.

Many people have reported noticing a significant degradation in the quality of the model responses, but so far, it was all anecdotal.

But now we know.

At least one study shows how the June version of GPT-4 is objectively worse than the version released in March on a few tasks.

The team evaluated the models using a dataset of 500 problems where the models had to figure out whether a given integer was prime. In March, GPT-4 answered 488 of these questions correctly. In June, it got only 12 correct answers.

From 97.6% success rate down to 2.4%!

But it gets worse!

The team used Chain-of-Thought to help the model reason:

"Is 17077 a prime number? Think step by step."

Chain-of-Thought is a popular technique that significantly improves answers. Unfortunately, the latest version of GPT-4 did not generate intermediate steps and instead answered incorrectly with a simple "No."
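For reference, the quoted question and the success rates above are easy to check by hand. The sketch below is not the study's evaluation code; `is_prime` and `success_rate` are hypothetical helper names, and trial division is just one simple way to test primality.

```python
def is_prime(n: int) -> bool:
    """Trial division: test 2, then odd divisors up to sqrt(n)."""
    if n < 2:
        return False
    if n % 2 == 0:
        return n == 2
    d = 3
    while d * d <= n:
        if n % d == 0:
            return False
        d += 2
    return True


def success_rate(correct: int, total: int) -> float:
    """Share of correct answers, as a percentage."""
    return 100 * correct / total


print(is_prime(17077))          # True: 17077 is prime, so "No" is the wrong answer
print(success_rate(488, 500))   # March result from the tweet
print(success_rate(12, 500))    # June result from the tweet
```

The two rates come out to the 97.6% and 2.4% quoted above.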

Code generation has also gotten worse.

The team built a dataset with 50 easy problems from LeetCode and measured how many GPT-4 answers ran without any changes.

The March version succeeded in 52% of the problems, but this dropped to a mere 10% using the model from June.
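A "ran without any changes" metric like the one described could be approximated as follows. This is a minimal sketch, not the team's harness: `runs_unchanged` is a hypothetical helper, the two sample answers are made up for illustration, and real model output should only ever be executed inside a sandbox.

```python
def runs_unchanged(source: str) -> bool:
    """Return True if the answer compiles and executes without raising."""
    try:
        exec(compile(source, "<answer>", "exec"), {})
        return True
    except Exception:
        return False


# Hypothetical model answers, not data from the study.
answers = [
    "def add(a, b):\n    return a + b\n",  # valid Python
    "def add(a, b) return a + b\n",        # syntax error: missing colon
]
passed = sum(runs_unchanged(a) for a in answers)
print(f"{passed}/{len(answers)} answers ran unchanged")
```

Note that this only checks that the code runs, not that it solves the problem; a fuller check would also run each answer against test cases.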

Why is this happening?

We assume that OpenAI pushes changes continuously, but we don't know how the process works and how they evaluate whether the models are improving or regressing.

Rumors suggest they are using several smaller and specialized GPT-4 models that act similarly to a large model but are less expensive to run. When a user asks a question, the system decides which model to send the query to.

Cheaper and faster, but could this new approach be the problem behind the degradation in quality?

In my opinion, this is a red flag for anyone building applications that rely on GPT-4. Having the behavior of an LLM change over time is not acceptable.

Have you noticed any issues when using GPT-4 and ChatGPT lately? Do you think these problems are overblown?

⛰️ retweeted

We found that a DAO could be better than a centralized autonomous organization (CAO) at mitigating conflicts caused by the 2022 Ukraine-Russia war.

This work was partly supported by @see_dao and the inspiration from its researchers @Seedao_ir.

@0xHCSLab

ieeexplore.ieee.org/document/10136…

⛰️ retweeted

RecurrentGPT: Interactive Generation of (Arbitrarily) Long Text

RecurrentGPT is a language-based simulacrum of the recurrence mechanism in RNNs. It is built upon a large language model (LLM) such as ChatGPT and uses natural language to simulate the Long Short-Term Memory mechanism in an LSTM. At each timestep, RecurrentGPT generates a paragraph of text and updates its language-based long-term and short-term memory, stored on the hard drive and in the prompt, respectively. This recurrence mechanism enables RecurrentGPT to generate texts of arbitrary length without forgetting. Since human users can easily observe and edit the natural language memories, RecurrentGPT is interpretable and enables interactive generation of long text. RecurrentGPT is an initial step towards next-generation computer-assisted writing systems beyond local editing suggestions. In addition to producing AI-generated content (AIGC), we also demonstrate the possibility of using RecurrentGPT as interactive fiction that directly interacts with consumers. We call this usage of generative models "AI As Contents" (AIAC), which we believe is the next form of conventional AIGC. We further demonstrate the possibility of using RecurrentGPT to create personalized interactive fiction that directly interacts with readers instead of interacting with writers. More broadly, RecurrentGPT demonstrates the utility of borrowing ideas from popular model designs in cognitive science and deep learning for prompting LLMs.

paper page: huggingface.co/papers/2305.13…
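The recurrence loop described in the abstract can be sketched roughly as below. This is a toy illustration and not the paper's implementation: `generate_paragraph` and `summarize` are hypothetical stand-ins for LLM calls, and the JSON file and "last two notes" recall rule are assumptions made for the example.

```python
import json
import tempfile
from pathlib import Path


def generate_paragraph(short_term: str, recalled: list[str], step: int) -> str:
    """Stand-in for the LLM call; a real system would prompt a model here."""
    return (
        f"Paragraph {step} (continuing from: {short_term!r}; "
        f"recalling {len(recalled)} earlier notes)."
    )


def summarize(paragraph: str, limit: int = 40) -> str:
    """Stand-in for a memory update: keep a truncated summary."""
    return paragraph[:limit]


def write_story(steps: int, memory_file: Path) -> list[str]:
    short_term = "story start"   # short-term memory, carried in the prompt
    long_term: list[str] = []    # long-term memory, persisted to disk each step
    story = []
    for step in range(1, steps + 1):
        recalled = long_term[-2:]  # naive recall: the two most recent notes
        paragraph = generate_paragraph(short_term, recalled, step)
        story.append(paragraph)
        long_term.append(summarize(paragraph))
        memory_file.write_text(json.dumps(long_term))
        short_term = summarize(paragraph)
    return story


with tempfile.TemporaryDirectory() as tmp:
    for line in write_story(3, Path(tmp) / "memory.json"):
        print(line)
```

Because both memories are plain text, a user could inspect or edit the memory file between steps, which is the interactive property the abstract highlights.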
