Mithril

106 posts

@mithrilcompute

The AI omnicloud

Computer use models shouldn't learn from screenshots. We built a new foundation model that learns from video like humans do. FDM-1 can construct a gear in Blender, find software bugs, and even drive a real car through San Francisco using arrow keys.

We nearly named it MBTI (Mithril Batch Tokens Inference) at the last minute so we could make Myers-Briggs puns (although maybe that's more of a LinkedIn joke). Wrt "intelligence too cheap to meter", INTJ: I Need Trust in Jevons*. If you're a pun enjoyer, comment or retweet with your MBTI/Myers-Briggs type and a pun, and we'll give 5 random people free tokens (500M/day) for 5 days! Some good ones we've heard: I Need Flops Pronto, I Need Tokens Please.

*Jevons paradox: the idea that if you make something 2x cheaper, demand will rise by more than 2x, increasing aggregate spend.
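For the pun-averse, the Jevons footnote comes down to simple arithmetic. Here is a minimal sketch; the prices and demand figures are made-up illustrative numbers, not Mithril pricing, and `aggregate_spend` is a hypothetical helper name.

```python
def aggregate_spend(price_per_m_tokens: float, demand_m_tokens: float) -> float:
    """Total spend = unit price x quantity demanded (illustrative units)."""
    return price_per_m_tokens * demand_m_tokens


# Hypothetical baseline: $1.00 per M tokens, 100 M tokens demanded.
baseline = aggregate_spend(1.00, 100)

# Price halves. If demand exactly doubles, spend is unchanged.
break_even = aggregate_spend(0.50, 200)

# If demand rises by more than 2x (here 2.5x), spend goes up: the Jevons case.
jevons_case = aggregate_spend(0.50, 250)

print(baseline, break_even, jevons_case)
```

With a 2x price cut, anything beyond a 2x rise in demand pushes total spend above the baseline.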

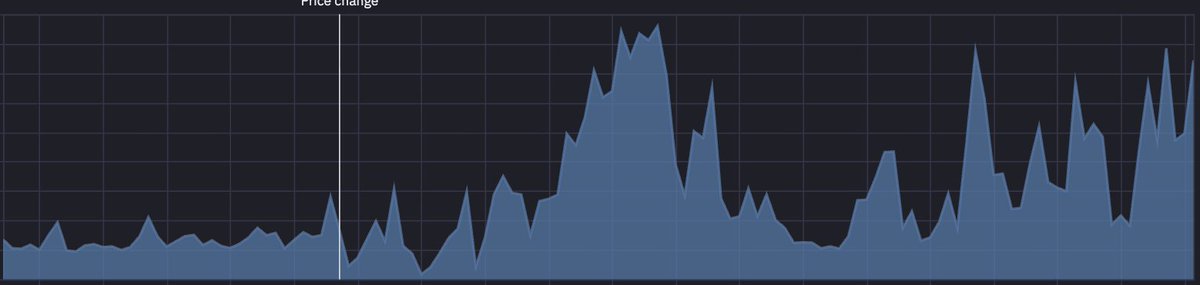

Introducing Mithril Batch Inference (MBI): a plug-and-play API built for massive-scale inference workloads! MBI leverages Mithril's global and dynamic omnicloud pool to push the frontier on:

🚀 Throughput: process 500M tokens/day (higher on request) across models from OpenAI, Llama, DeepSeek, Qwen, or custom models.

💰 Savings: the price per M tokens is low enough that we're considering integrating @stripe's bridge network for fractions-of-a-penny "micro-transactions". We're closing in on the goal of "intelligence too cheap to meter".

MBI is perfect for eval pipelines, embeddings & labeling, synthetic data generation, and more.
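To put the 500M tokens/day figure in perspective, here is a rough back-of-the-envelope sketch. It assumes the quota is spread evenly over 24 hours, which the announcement does not state, and `days_to_process` is a hypothetical helper rather than part of any Mithril API.

```python
import math

TOKENS_PER_DAY = 500_000_000      # default quota quoted in the announcement
SECONDS_PER_DAY = 24 * 60 * 60    # 86,400

# Average sustained rate if the quota were used evenly across the day.
tokens_per_second = TOKENS_PER_DAY / SECONDS_PER_DAY


def days_to_process(total_tokens: int, tokens_per_day: int = TOKENS_PER_DAY) -> int:
    """Whole days needed to push a job of total_tokens through the daily quota."""
    return math.ceil(total_tokens / tokens_per_day)


print(f"{tokens_per_second:.0f} tokens/s on average")
print(days_to_process(1_200_000_000), "days for a 1.2B-token job")
```

Under those assumptions the default quota works out to roughly 5,800 tokens per second sustained, and a 1.2B-token labeling or eval job would take three days.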