Jared Quincy Davis

392 posts

Jared Quincy Davis

@jaredq_

Founder and CEO, Mithril (@mithrilcompute). Orchestrating Compute. Fmr Research Scientist @GoogleDeepMind, Deep Learning Team. CS PhD @Stanford ML, Systems

Hallelujah I found something but I won’t reveal due to selfish gpu hoarding.

Composer 2 marks the one-year anniversary of our large model training efforts. Since then, we've built an exceptionally talent-dense team of ~40 people with some of the best researchers and engineers from the labs, academia, industry, and more heterogeneous backgrounds. And we are exclusively focused on coding. We don't care about models that can respond to emails, do your tax returns, or be your friend. Every FLOP, token, parameter, and researcher is entirely dedicated to software engineering.

We believe Cursor discovered a novel solution to Problem Six of the First Proof challenge, a set of math research problems that approximate the work of Stanford, MIT, Berkeley academics. Cursor's solution yields stronger results than the official, human-written solution. Notably, we used the same harness that built a browser from scratch a few weeks ago. It ran fully autonomously, without nudging or hints, for four days. This suggests that our technique for scaling agent coordination might generalize beyond coding.

Excited to see Anthropic's fast mode experiment. More options is always good — and counterintuitively, even 6x cost for 2.5x speed can make applications cheaper. We ran an experiment at @mithrilcompute that shows why flexible compute economics are so powerful 👇

📢Introducing Gemini 3.1 Flash-Lite, our fastest and most efficient model, built for high-volume workloads. It outperforms 2.5 Flash in reasoning, reliability, and scalability at a lower cost. This model also introduces thinking levels. You can adjust compute by complexity of the task, burning zero thinking overhead on high-volume tasks, while reasoning through the complex edge cases. Maximum intelligence, minimal latency. Read more: blog.google/innovation-and…

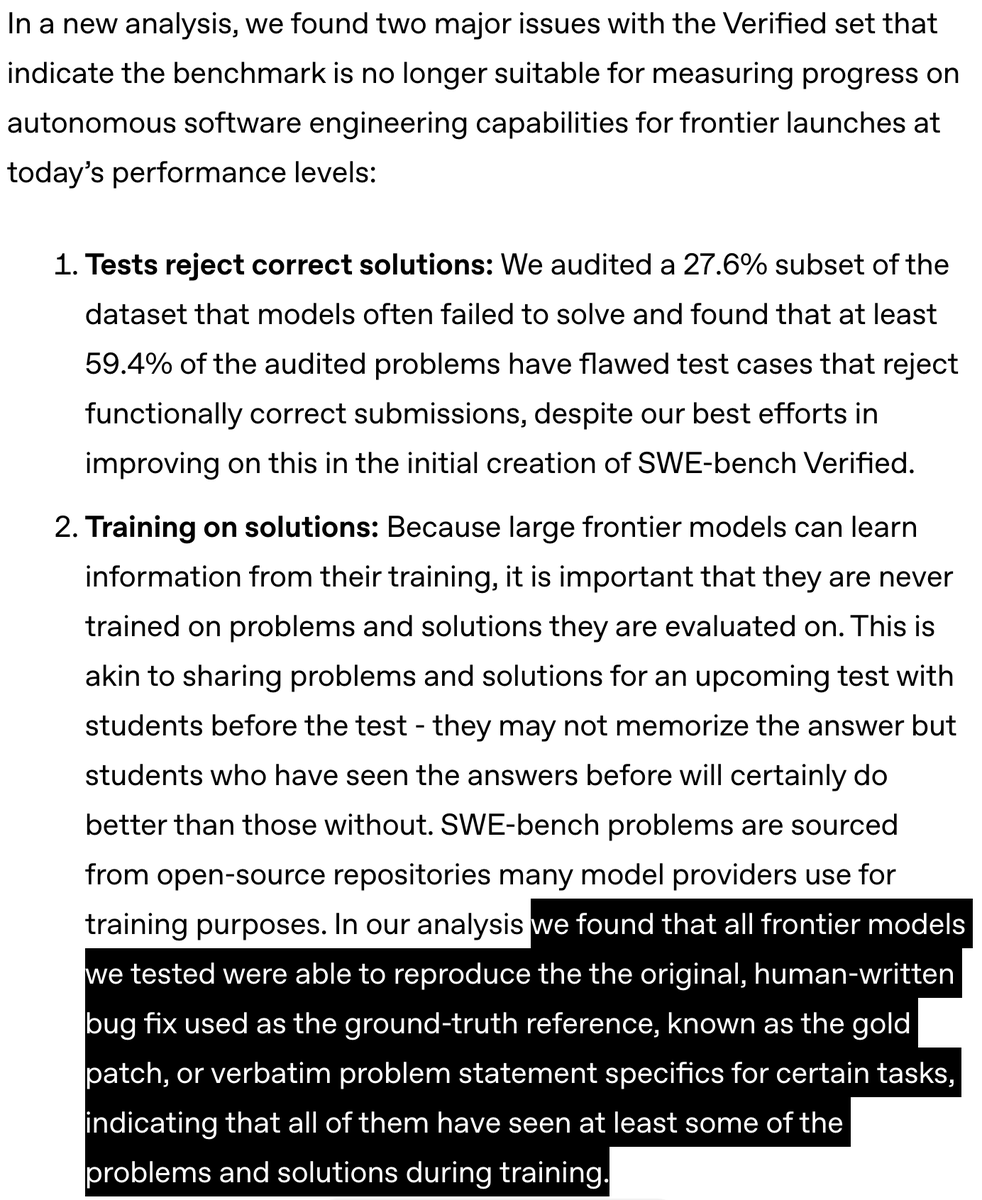

In the past 6 months we’ve seen a divergence between the game-changing experience of coding w new models and tiny SWE-bench Verified gains. llm-stats.com/benchmarks/swe… New analysis finds most remaining unsolved problems have unfair tests, and many models are heavily contaminated.

Mercury 2 is now live! 🚀 The fastest reasoning LLM built for production speed. ~1000 tokens/sec vs <200 tokens/sec for comparable models. What this enables: 🤖 Fast agents: fast iteration loops, no compounding delays 🎙️ Voice and Search AI: tight turn-taking, natural conversations under strict latency budgets 💻 Interactive code completions, editing, and design workflows

Think different

GPT-5.2 derived a new result in theoretical physics. We’re releasing the result in a preprint with researchers from @the_IAS, @VanderbiltU, @Cambridge_Uni, and @Harvard. It shows that a gluon interaction many physicists expected would not occur can arise under specific conditions. openai.com/index/new-resu…