MiyoungKo

🤔 How can we systematically assess an LM's proficiency in a specific capability without relying on summary measures like helpfulness or on simple proxy tasks like multiple-choice QA? Introducing ✨BiGGen Bench, a benchmark that directly evaluates nine core capabilities of LMs.

🚨 New LLM personalization/alignment paper 🚨 🤔 How can we obtain personalizable LLMs without explicitly retraining reward models or LLMs for each user? ✔ We introduce a new zero-shot alignment method that controls LLM responses via the system message 🚀

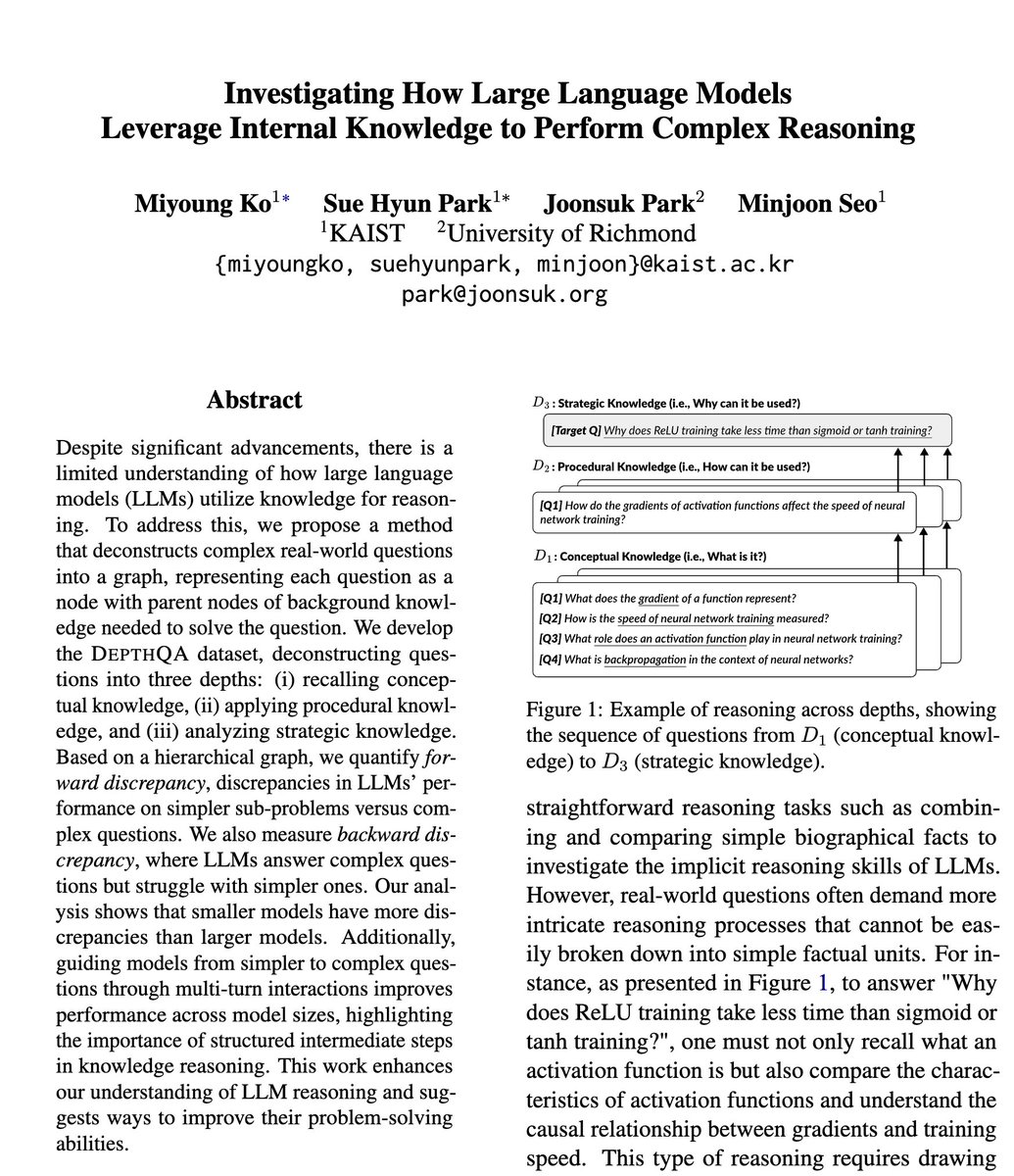

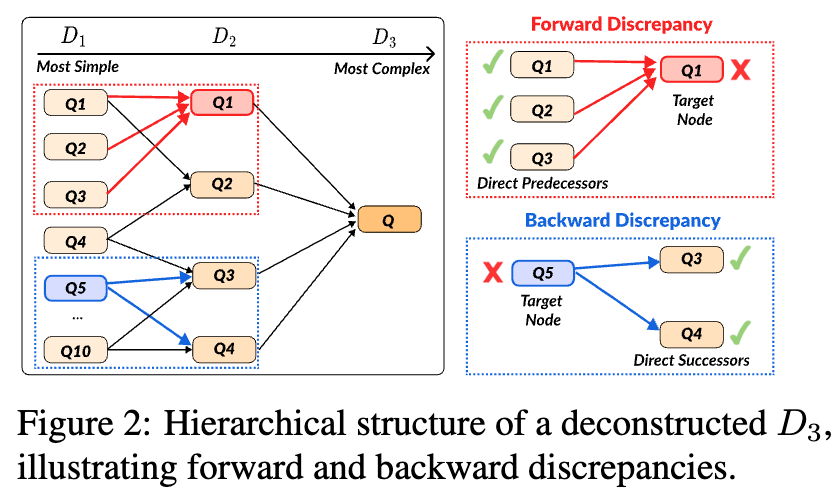

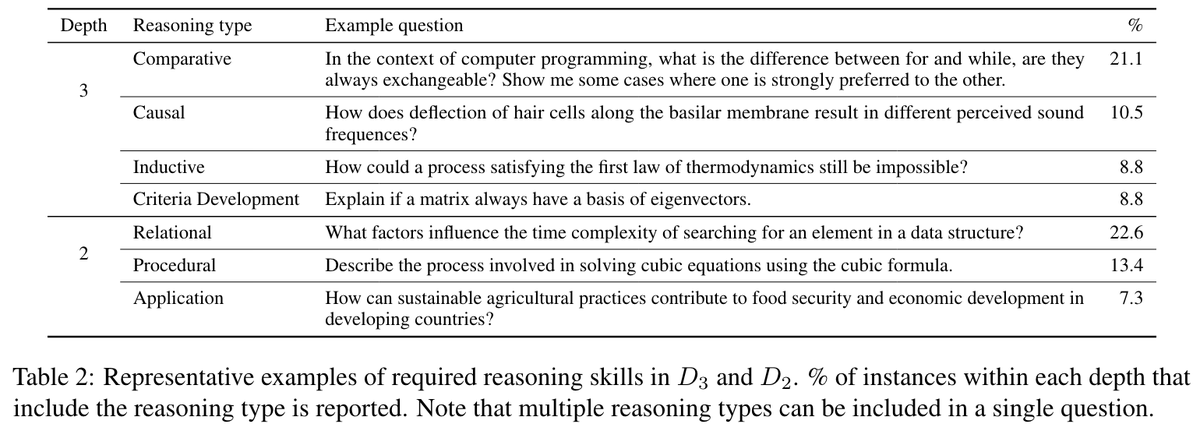

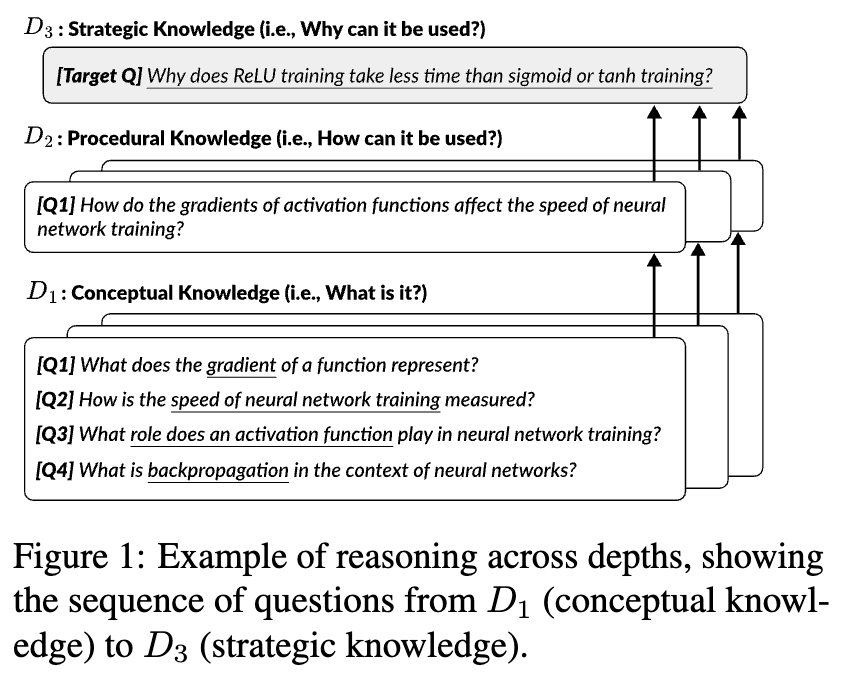

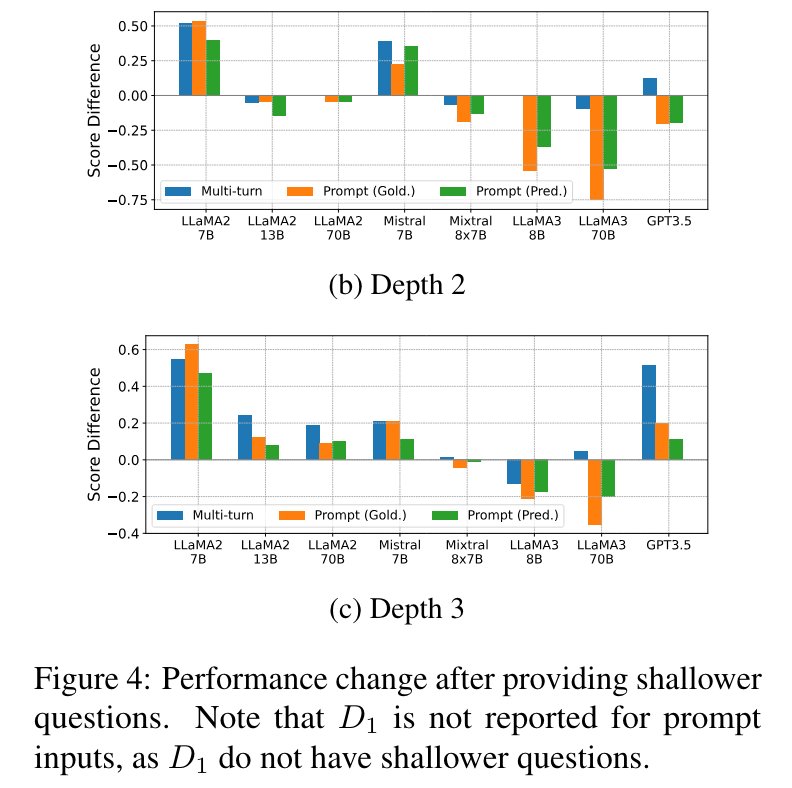

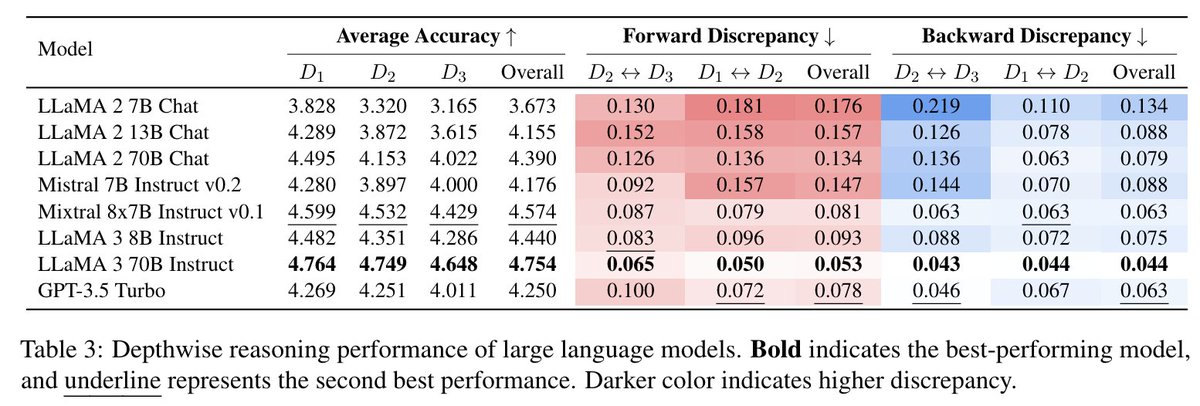

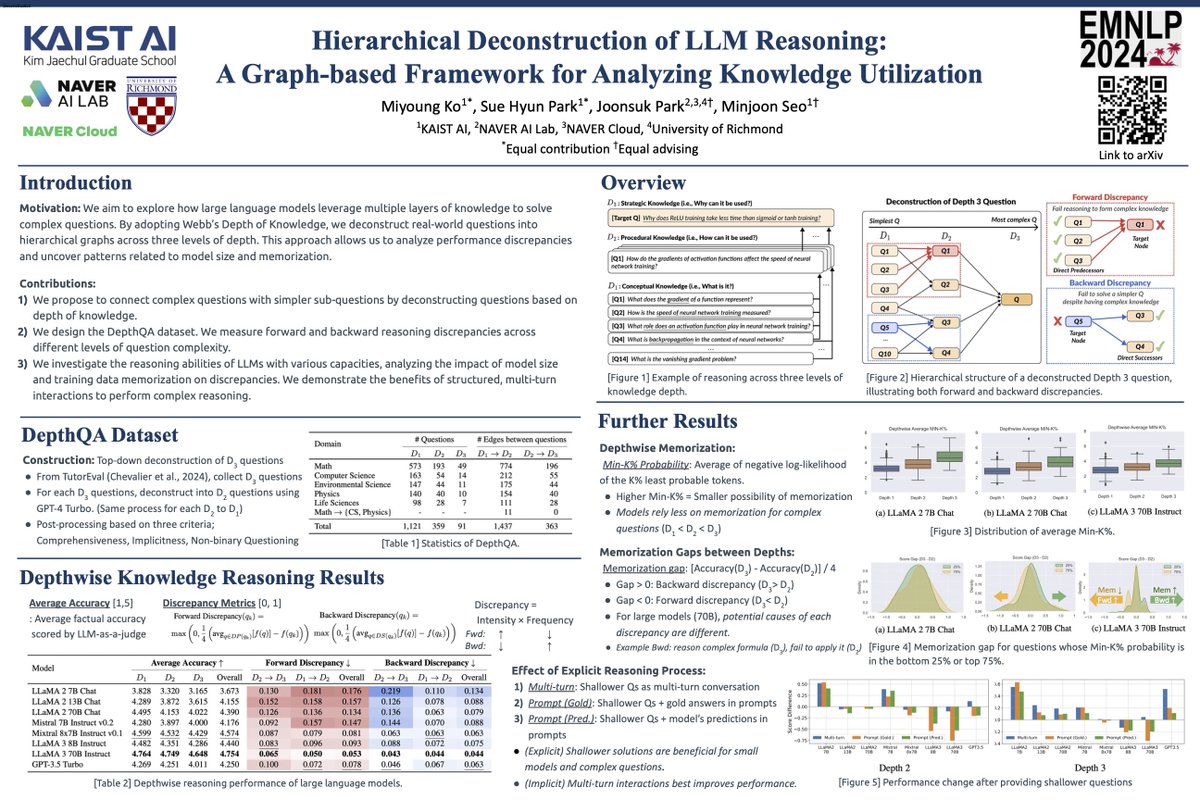

📢 Excited to share our latest paper on the reasoning capabilities of LLMs! Our research examines how these models recall and use factual knowledge while solving complex questions. [🧵1 / 10] arxiv.org/abs/2406.19502