Matthew Green

44 posts

@mjkgreen

building Chasqui, an AI that turns your network into warm intros. Aerospace engineer turned founder. Building: https://t.co/NnoM5o5o7s | https://t.co/zgbWlA6QWY

Joined April 2021

64 Following · 8 Followers

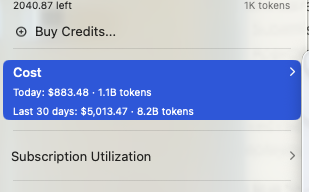

Introducing SubQ - a major breakthrough in LLM intelligence.

It is the first model built on a fully sub-quadratic sparse-attention architecture (SSA),

and the first frontier model with a 12-million-token context window, which is:

- 52x faster than FlashAttention at 1MM tokens

- Less than 5% the cost of Opus

Transformer-based LLMs waste compute by processing every possible relationship between words (standard attention).

Only a small fraction actually matter.

@subquadratic finds and focuses only on the ones that do.

That's nearly 1,000x less compute and a new way for LLMs to scale.
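
To make the compute argument concrete, here is a minimal sketch that counts query-key score computations for dense attention versus one simple sparse pattern, a causal sliding window. The window pattern, the `window` parameter, and both function names are assumptions chosen for illustration; the post does not describe how SSA decides which relationships to keep.

```python
def dense_pairs(n: int) -> int:
    """Score computations when every token attends to every token."""
    return n * n


def windowed_pairs(n: int, window: int) -> int:
    """Score computations when each token attends only to itself and
    up to `window - 1` preceding tokens (a hypothetical sparse pattern)."""
    return sum(min(i + 1, window) for i in range(n))


if __name__ == "__main__":
    n, window = 4096, 64  # illustrative sizes, not figures from the post
    dense = dense_pairs(n)
    sparse = windowed_pairs(n, window)
    print(f"dense={dense} sparse={sparse} ratio={dense / sparse:.1f}x")
```

Dense cost grows with the square of the sequence length, while the windowed cost grows roughly with length times window size, so the gap widens as the context gets longer.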

@eriktorenberg I mean, sounds like you’re looking for a podcaster

@Kekius_Sage Well, “nothing” can surpass light speed. But “something” can’t. Need negative mass things to do anything with it.

@levelsio I'm thinking you could build a two-way valve that lets the window “breathe” while forcing the air through a maze-like insulated muffler

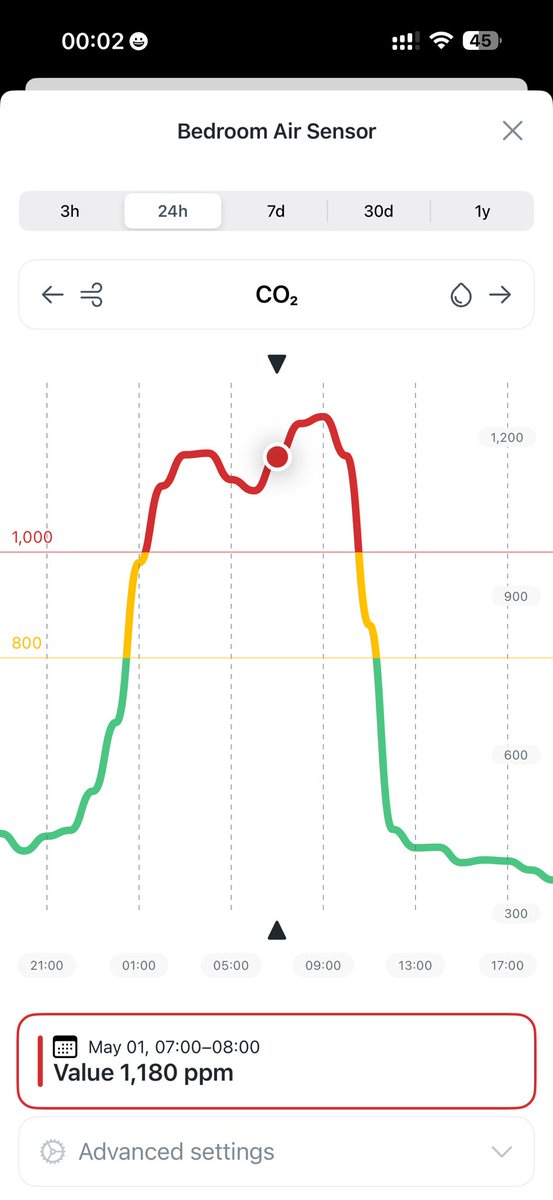

I still haven't solved the CO2 bedroom challenge

You open the window and you wake up from a 6am garbage truck or barking dogs and sunlight

You close it, you suffocate in 1200 ppm at 5am

I guess you really need some mini tube in your wall with a vent that opens and closes based on indoor CO2, but how do I build that?
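
One possible starting point for the control logic is a two-threshold (hysteresis) rule, so the vent does not flap open and shut around a single setpoint. This is only a sketch: the function name and the 1000 ppm and 700 ppm thresholds are assumptions, and reading the sensor and driving the vent motor are hardware-specific and left out.

```python
def vent_should_open(co2_ppm: float, is_open: bool,
                     open_above: float = 1000.0,
                     close_below: float = 700.0) -> bool:
    """Decide the vent state from the current CO2 reading.

    Thresholds are illustrative assumptions, not recommendations.
    """
    if co2_ppm >= open_above:
        return True       # air is stale: open (or stay open)
    if co2_ppm <= close_below:
        return False      # air is fresh: close (or stay closed)
    return is_open        # in between: keep the current state


if __name__ == "__main__":
    state = False
    for reading in [600, 900, 1200, 900, 650]:  # made-up readings
        state = vent_should_open(reading, state)
        print(reading, "open" if state else "closed")
```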

@AishwaryaDevv A really simple fix I’ve found: create an OVERVIEW.md covering

- project structure

- intent/goals

- to-dos

- context

- components

Then tell whatever tool you’re using to update it whenever you finish a task, and to look for it and read it at the start of each session
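
A small helper along these lines could create the file if it is missing. It is a sketch under assumptions: the `# Overview` title, the `_TODO_` placeholders, and the function names are hypothetical, and only the five section topics come from the post.

```python
from pathlib import Path

# Section topics taken from the post; heading wording is an assumption.
SECTIONS = ["Project structure", "Intent/goals", "To-dos", "Context", "Components"]


def overview_template(sections=SECTIONS) -> str:
    """Build a Markdown skeleton with one heading per section."""
    lines = ["# Overview", ""]
    for name in sections:
        lines += [f"## {name}", "", "_TODO_", ""]
    return "\n".join(lines)


def ensure_overview(root: str = ".") -> Path:
    """Create OVERVIEW.md under `root` if absent; never overwrite it."""
    path = Path(root) / "OVERVIEW.md"
    if not path.exists():
        path.write_text(overview_template(), encoding="utf-8")
    return path


if __name__ == "__main__":
    print(ensure_overview())
```

Leaving an existing file untouched matters here, since the point of the file is that the tool keeps updating it across sessions.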

@rcmisk This is why warm intros win. Getting those first 100 customers is the real game
