Pinned Tweet

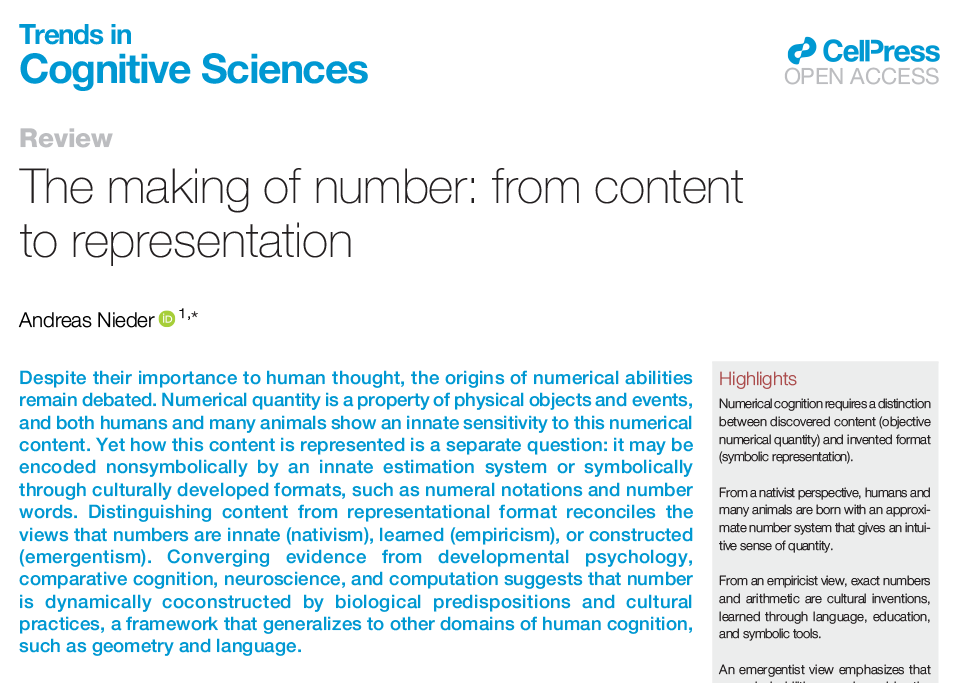

🚨New paper🚨 about accented speech perception: doi.org/10.1016/j.band… by the brilliant (MSc student at the time!) Amir Ghooch Kanloo, accompanied by myself, @KazuyaSLA and @AdamTTierney, from fun times at @audioneurolab @bbkpsychology 🧵1/5
