Miguel Liu-Schiaffini retweeted

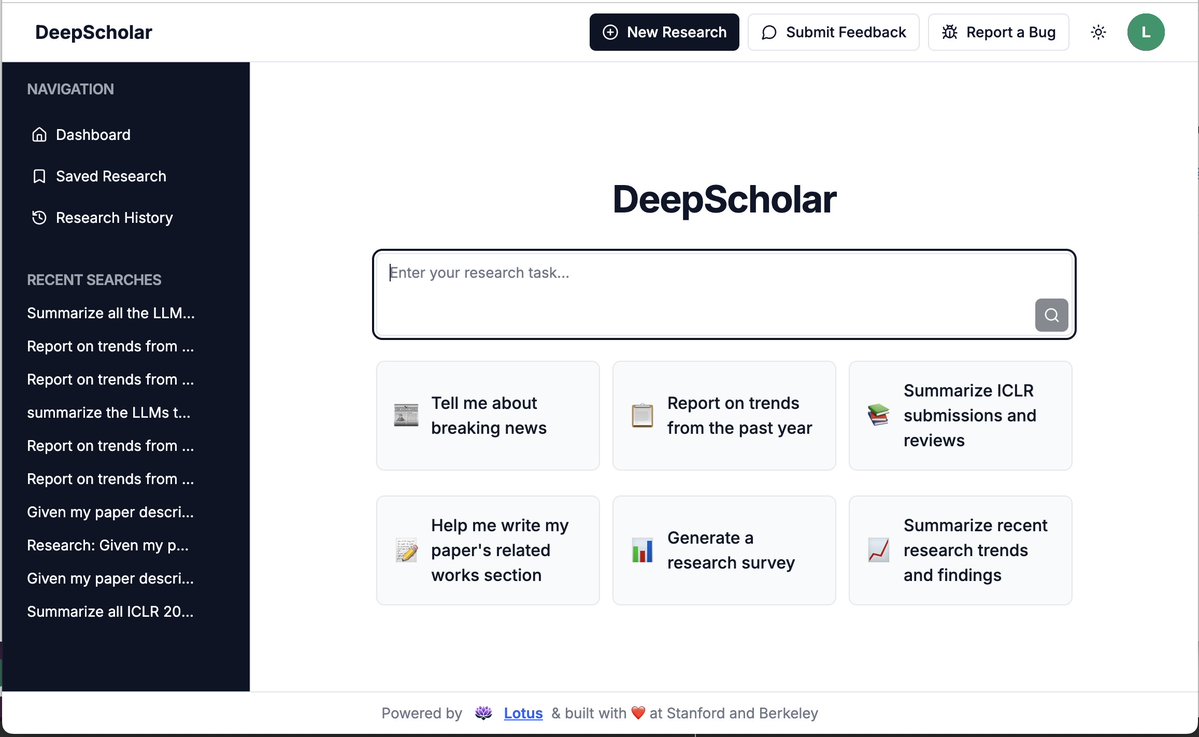

🚀 Thrilled to launch DeepScholar, an openly-accessible DeepResearch system we've been building at Berkeley & Stanford.

DeepScholar efficiently processes hundreds of articles and demonstrates strong long-form research synthesis capabilities, competitive with OpenAI's Deep Research, while running up to 2x faster!

Try it out: deep-scholar.vercel.app
