Kamyar Azizzadenesheli

@Azizzadenesheli

Project Prometheus, x-Staff @nvidia, x-prof at @Purdue #MachineLearning, #ArtificialIntelligence #NeuralOperators #AI+#Science

DeepInfra has raised $107M in Series B funding 🚀 AI is moving from training to production-scale deployment, and inference is becoming the system constraint. DeepInfra was built for this shift: scaling high-throughput inference for open-source and agent-driven workloads. Grateful to our investors and partners, co-led by @500GlobalVC and @gharik

Congratulations to Zongyi Li (@ZongyiLiCaltech), incoming Assistant Professor of Computer Science, who was announced as a 2025 AI2050 Early Career Fellow yesterday! The AI2050 program funds researchers pursuing projects to help AI create immense benefits for humanity by 2050:

Excited to share our recently published paper in @WileyGlobal on "Ocean Emulation With Fourier Neural Operators: Double Gyre" agupubs.onlinelibrary.wiley.com/doi/10.1029/20… We used Fourier Neural Operators to build the first high-resolution weather model, FourCastNet. Since they work so well for atmospheric emulation, a natural progression is to extend them to ocean simulations. We propose learning the dynamics of a simplified ocean simulation using Fourier neural operators. We are able to generate long forecasts using trained Fourier neural operators, and find that on short-term forecasts they are more accurate than climatology or persistence and approach the accuracy of the physics-based model. On long-term forecasts, the neural operators can still predict future scenarios with realistic physics like propagating waves and meandering currents. This is impressive because no physics is explicitly programmed into the neural operators. Physics is learned from data. @Azizzadenesheli

Thank you @BBCNews for featuring our work using AI to overcome turbulence bbc.com/news/articles/…

Our 500+ page AI4Science paper is finally published: Artificial Intelligence for Science in Quantum, Atomistic, and Continuum Systems. Foundations and Trends® in Machine Learning, Vol. 18, No. 4, 385–912, 2025 nowpublishers.com/article/Detail…

Neural Operators – Deep learning at any resolution
Extending neural networks to function spaces: While many phenomena are inherently described by functions, neural networks define vector-to-vector mappings that rely on a fixed discretization of the input and output. Neural Operators instead define learnable function-to-function mappings that guarantee consistent predictions across different discretizations of the input and output functions. By respecting the functional nature of the data, neural operators can achieve improved performance and generalization.
Translating the success of deep learning to operator learning: Careful engineering of neural architectures has been a key factor in deep learning’s success. Translating these architectures to neural operators is crucial for operator learning to enjoy the same empirical optimizations.
Key principles for constructing Neural Operators:
* Recipes for converting popular architectures (CNNs, GNNs, transformers, etc.) into Neural Operators
* Guidance for practitioners
arxiv.org/abs/2506.10973 github.com/neuraloperator
@julberner @mliuschi @JeanKossaifi Valentin Duruisseaux Boris Bonev @Azizzadenesheli @caltech
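
To make "consistent predictions across different discretizations" concrete, here is a minimal sketch of a single Fourier-type layer in NumPy. It is an illustration only, not the API of the neuraloperator library and not the construction from the paper: the function names `spectral_conv_1d` and `sample_input`, the random weights, the test function, and the choice of 8 retained modes are all assumptions made for this example. The same weights are applied to one periodic function sampled on a 64-point and a 128-point grid.

```python
import numpy as np

def spectral_conv_1d(u, weights):
    """Toy Fourier-space linear map on samples of a periodic function (hypothetical helper).

    u       : real array of n samples on a uniform grid over [0, 1)
    weights : complex array, one weight per retained Fourier mode
    """
    n = u.shape[-1]
    modes = weights.shape[0]
    if modes > n // 2:
        raise ValueError("grid too coarse for the requested number of modes")
    # norm="forward" divides by n, so the coefficients approximate the
    # Fourier series coefficients of the function independently of n.
    u_hat = np.fft.rfft(u, norm="forward")
    out_hat = np.zeros_like(u_hat)
    # The weights live in frequency space, not on grid points.
    out_hat[:modes] = weights * u_hat[:modes]
    # Evaluate the resulting function back on the same n-point grid.
    return np.fft.irfft(out_hat, n=n, norm="forward")

def sample_input(n):
    """Sample an assumed band-limited test function on n uniform grid points."""
    x = np.arange(n) / n
    return np.sin(2 * np.pi * x) + 0.5 * np.cos(2 * np.pi * 3 * x)

rng = np.random.default_rng(0)
modes = 8  # assumed number of retained modes
weights = rng.standard_normal(modes) + 1j * rng.standard_normal(modes)

v_coarse = spectral_conv_1d(sample_input(64), weights)
v_fine = spectral_conv_1d(sample_input(128), weights)

# Every second point of the fine grid coincides with a coarse grid point.
max_gap = float(np.max(np.abs(v_fine[::2] - v_coarse)))
print(f"max difference on shared grid points: {max_gap:.2e}")
```

For this band-limited input the two outputs agree on the shared grid points up to floating-point error, because the layer is parameterized by Fourier modes rather than by grid indices. A full neural operator would add nonlinearities, channel mixing, and trained weights, and for general inputs the agreement holds only approximately, improving as the grids are refined.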

📢ChebNet is back, with long-range abilities on graphs! 🎉 We revive ChebNet for long-range tasks, uncover instability in polynomial filters, and propose Stable-ChebNet, a non-dissipative dynamical system with controlled, stable info propagation 🚀 📄: arxiv.org/abs/2506.07624

Excited to introduce our latest work, Guided Diffusion Sampling on Function Spaces (FunDPS) (arxiv.org/abs/2505.17004) - a discretization-agnostic generative framework for solving PDE-based forward and inverse problems.
Diffusion-based posterior sampling on function spaces: Our model recovers full-field PDE solutions, coefficient functions, and boundary conditions from severely sparse (just 3%) measurements, yielding SOTA performance in both speed and accuracy.
Multi-resolution operator learning pipeline: FunDPS leverages Gaussian Random Field priors and neural operator architectures, enabling multi-resolution training and inference, reducing training time by 25% and inference time by 50%.
Infinite-dimensional Tweedie’s Formula: We extend Tweedie’s formula to infinite-dimensional Banach spaces, forming the rigorous theoretical foundation for posterior mean estimation.
Results: We achieve an average 32% accuracy improvement and 4x fewer sampling steps compared to previous SOTA approaches across five challenging PDE tasks. Plus, our multi-resolution inference pipeline accelerates computations by up to 25x!
Paper (arxiv.org/abs/2505.17004). Code (github.com/neuraloperator…), based on our earlier workshop paper (ml4physicalsciences.github.io/2024/files/Neu…).
@jiacheny7, @AbbasMammadov11, @julberner, @gavinkerrigan, Jong Chul Ye, @Azizzadenesheli
#DiffusionModels #InverseProblems #PDE #MachineLearning #NeuralOperators #AI4Science
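
For readers unfamiliar with Tweedie's formula, the classical finite-dimensional statement is given below. This is the standard textbook version for additive Gaussian noise, not the Banach-space extension the post describes; the symbols $x$, $y$, $\sigma$, $\varepsilon$, and $p$ are notation chosen for this note and do not come from the paper.

$$
y = x + \sigma \varepsilon, \quad \varepsilon \sim \mathcal{N}(0, I_d)
\quad \Longrightarrow \quad
\mathbb{E}[x \mid y] = y + \sigma^2 \, \nabla_y \log p(y)
$$

Here $p(y)$ is the marginal density of the noisy observation $y$. The posterior mean of the clean signal is the noisy observation plus a correction along the score $\nabla_y \log p(y)$, which is why an estimate of the score can be turned into a posterior mean estimate in diffusion-based samplers.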

Adam is similar to many algorithms, but cannot be effectively replaced by any simpler variant in LMs. The community is starting to get the recipe right, but what is the secret sauce? @gowerrobert and I found that it has to do with the beta parameters and variational inference. arxiv.org/pdf/2505.21829

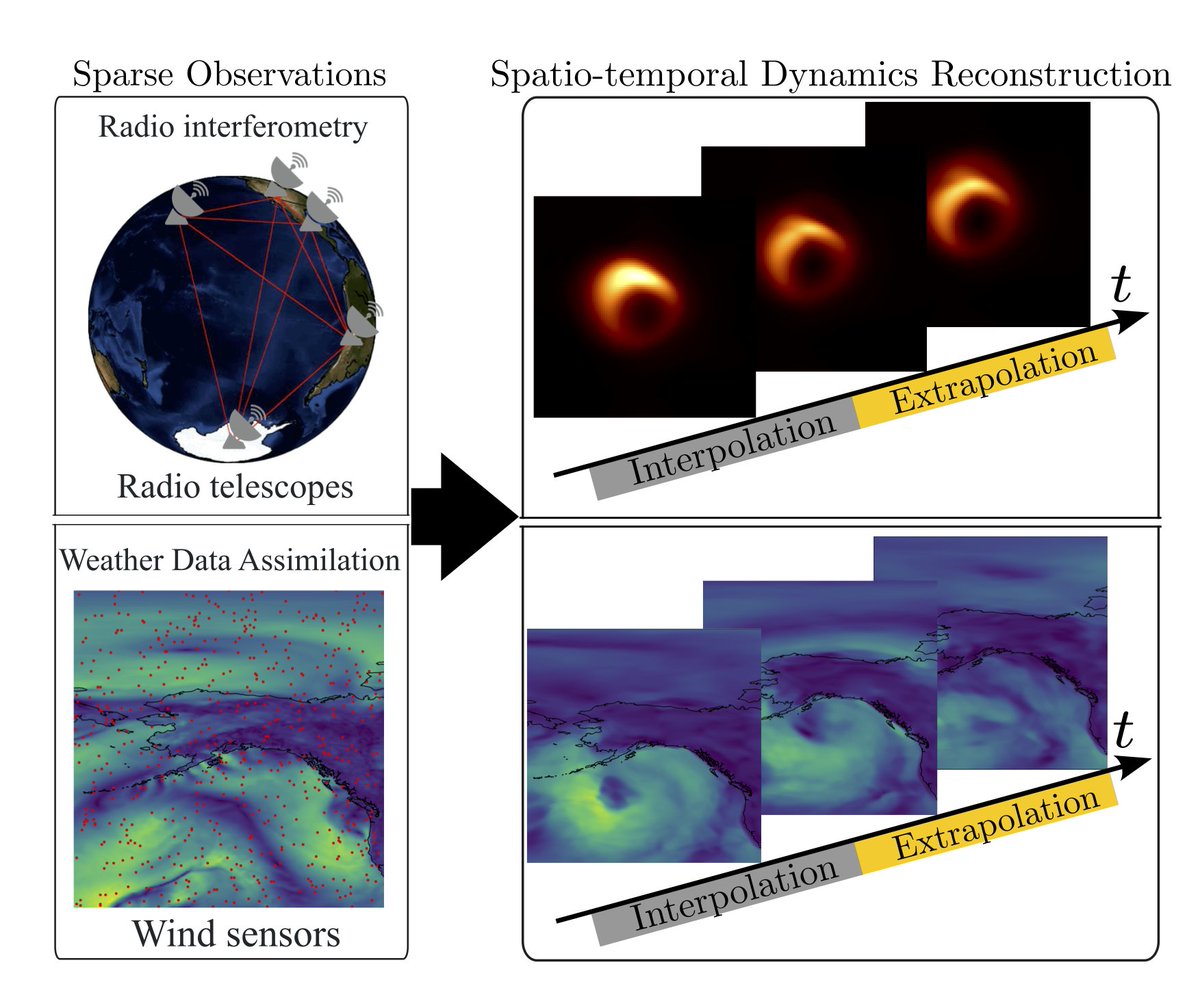

We have released VARS-fUSI: Variable sampling for fast and efficient functional ultrasound imaging (fUSI) using neural operators. The first deep learning fUSI method to allow for different sampling durations and rates during training and inference. biorxiv.org/content/10.110… 1/