modpotato

455 posts

@modpotatos

modpotato (i have no idea where my other accounts are)

If VRAM isn’t eaten by weights, it can go to KV cache and batch size. FlexTensor’s planned tensor offload displaces weight storage into host RAM, so inference stacks like vLLM can scale context and throughput on fixed hardware instead of immediately jumping to multiple GPUs.
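A rough back-of-the-envelope sketch of that trade-off. The model shape below (32 layers, 8 KV heads, head dim 128, fp16) and the 24 GiB card are made-up illustration numbers, not anything from FlexTensor or vLLM, and the math ignores activations, fragmentation, and the cost of streaming offloaded weights back over PCIe.

```python
# Estimate how many tokens of KV cache fit in VRAM, with and without
# weights resident on the GPU. All model and hardware numbers here are
# hypothetical, chosen only to make the arithmetic easy to follow.

GiB = 1024 ** 3


def kv_bytes_per_token(n_layers, n_kv_heads, head_dim, bytes_per_elem):
    """Bytes of KV cache one token needs: a K and a V vector per layer."""
    return 2 * n_layers * n_kv_heads * head_dim * bytes_per_elem


def max_cached_tokens(vram_bytes, resident_weight_bytes, per_token_bytes):
    """Tokens that fit in whatever VRAM the resident weights leave free."""
    free = max(vram_bytes - resident_weight_bytes, 0)
    return free // per_token_bytes


# Hypothetical 8B-class model in fp16 on a hypothetical 24 GiB GPU.
per_token = kv_bytes_per_token(n_layers=32, n_kv_heads=8, head_dim=128, bytes_per_elem=2)
vram = 24 * GiB
weights = 16 * GiB

tokens_weights_on_gpu = max_cached_tokens(vram, weights, per_token)
tokens_weights_offloaded = max_cached_tokens(vram, 0, per_token)

print(f"KV cache per token: {per_token // 1024} KiB")
print(f"weights on GPU:     {tokens_weights_on_gpu} tokens")
print(f"weights offloaded:  {tokens_weights_offloaded} tokens")
```

Under these assumptions the token budget triples once the weights leave VRAM, and that budget can be spent on longer context, a bigger batch, or both. Real headroom would be smaller, since some weights or staging buffers still have to sit on the GPU while layers run.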

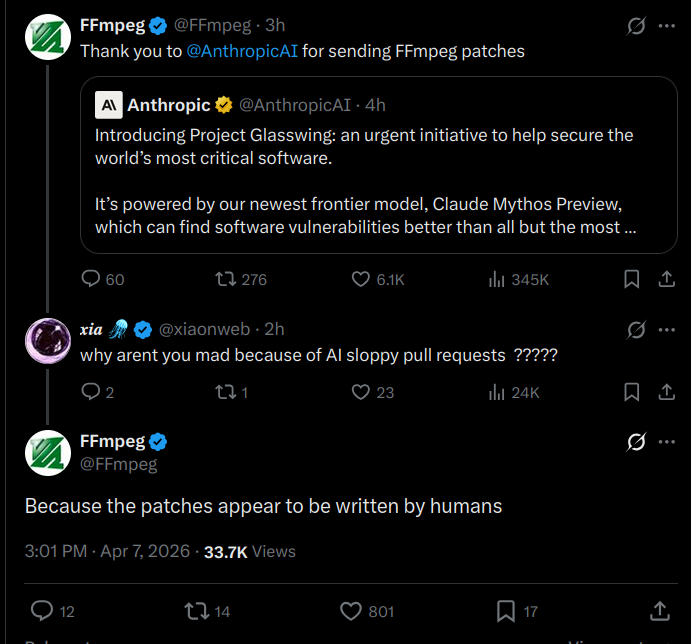

We're bringing the advisor strategy to the Claude Platform. Pair Opus as an advisor with Sonnet or Haiku as an executor, and get near Opus-level intelligence in your agents at a fraction of the cost.

JUST IN: Hacker allegedly breaches Chinese state-run supercomputer & steals over 10 petabytes of sensitive data, including "highly classified defense documents & missile schematics"

if you work at Anthropic and leak Mythos weights you will go down in history.

GeometryDash.com is live! Play Stereo Madness for free in your browser, explore news, check the Top 1000 leaderboards and more :) /RubRub