Jean Olivier

70 posts

Jean Olivier reposted

Qwen 3.6 Plus is an incredible model. Well done to the @Alibaba_Qwen team. It blows GPT-5.4-Codex out of the water for agentic tasks / @openclaw, is 3X faster, and is currently offered free through @OpenRouter.

openrouter.ai/qwen/qwen3.6-p…


Jean Olivier reposted

Today I had the worst experience I’ve ever had with any LLM. It was GLM-5 on the ZAI coding plan.

The model repeatedly stopped mid-task, completely ignored all of my explicit instructions, and refused to follow even the simplest requests. When I specifically asked it to commit its work, it instead spawned four separate agents across the codebase, generated a report, and never once acknowledged or mentioned a prompt issue I had been crystal clear about.

This kind of behavior is unacceptable and frankly disgusting. I understand models can be quantized, but delivering something this broken to paying customers is not acceptable.

It feels like a straight-up scam.

As soon as my current billing period ends, I will be cancelling my subscription.

@Zai_org


@llama_index I was comparing PDF parsing libraries exactly 2 hours ago. Tech updates keep stacking up 🤦🏼


We've spent years building LlamaParse into the most accurate document parser for production AI. Along the way, we learned a lot about what fast, lightweight parsing actually looks like under the hood.

Today, we're open-sourcing a lightweight core of that tech as LiteParse 🦙

It's a CLI + TS-native library for layout-aware text parsing from PDFs, Office docs, and images. Local, zero Python dependencies, and built specifically for agents and LLM pipelines. Think of it as our way of giving the community a solid starting point for document parsing:

npm i -g @llamaindex/liteparse

lit parse anything.pdf

- preserves spatial layout (columns, tables, alignment)

- built-in local OCR, or bring your own server

- screenshots for multimodal LLMs

- handles PDFs, office docs, images

Blog: llamaindex.ai/blog/liteparse…

Repo: github.com/run-llama/lite…
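For anyone wiring this into a pipeline, here is a minimal sketch that shells out to the `lit parse` command quoted above. The helper names `build_lit_command` and `parse_document` are hypothetical, and the sketch assumes the CLI writes its parsed text to stdout, which the post does not confirm.

```python
import shutil
import subprocess


def build_lit_command(path: str) -> list[str]:
    """Build the argv for the `lit parse <file>` command quoted in the post."""
    return ["lit", "parse", path]


def parse_document(path: str) -> str:
    """Run `lit parse` on a file and return whatever it prints to stdout.

    Assumption: the CLI emits its result on stdout; check the repo docs
    before relying on this.
    """
    if shutil.which("lit") is None:
        raise RuntimeError(
            "`lit` not found on PATH; install it with: npm i -g @llamaindex/liteparse"
        )
    result = subprocess.run(
        build_lit_command(path),
        capture_output=True,  # collect stdout/stderr instead of printing them
        text=True,            # decode bytes to str
        check=True,           # raise CalledProcessError on a non-zero exit code
    )
    return result.stdout
```

Calling `parse_document("anything.pdf")` would then return the CLI's output as a string, or raise if the tool is missing or exits with an error.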


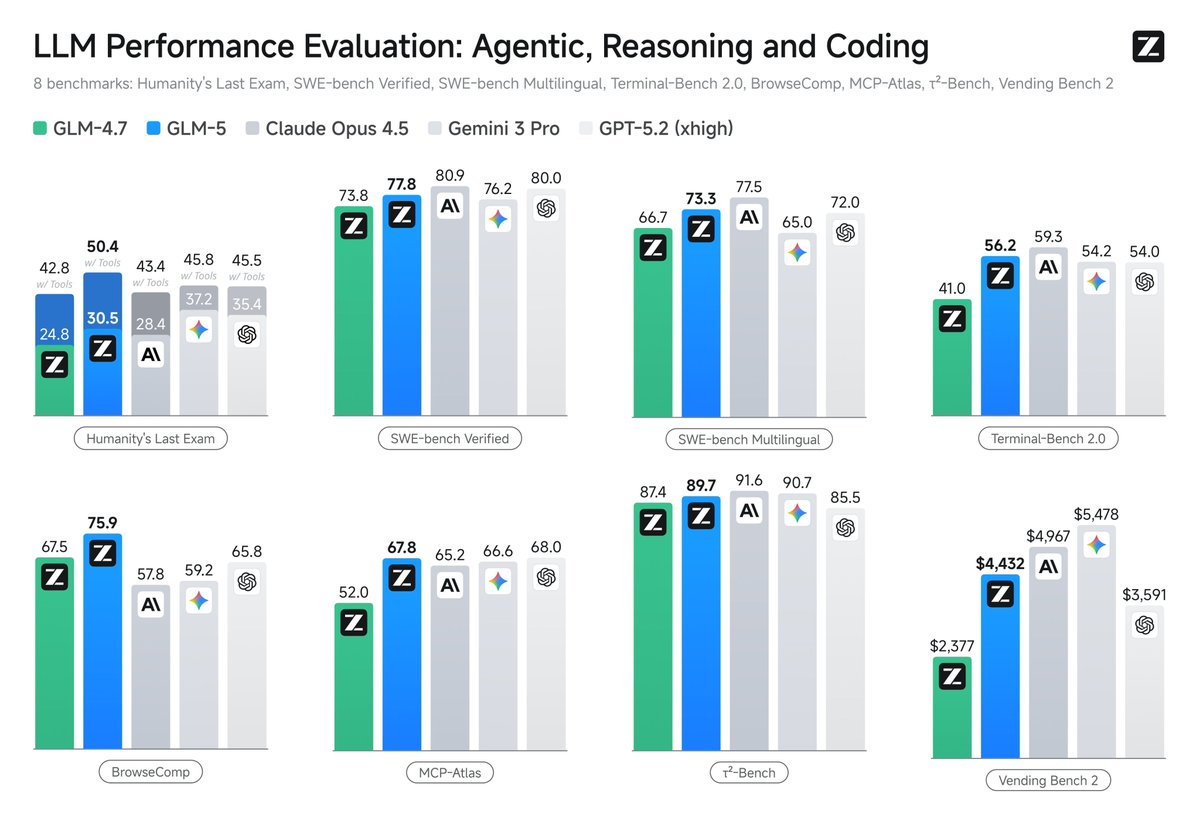

Xiaomi has released MiMo-V2-Pro, which scores 49 on the Artificial Analysis Intelligence Index, placing it between Kimi K2.5 and GLM-5

@Xiaomi's MiMo-V2-Pro is a new reasoning model and a significant upgrade over their prior open weights release, MiMo-V2-Flash (309B total / 15B active, MIT license), which scores 41 on the Intelligence Index. Xiaomi has not yet released the weights of this model and it is currently only available via Xiaomi's first-party API.

Key takeaways:

➤ MiMo-V2-Pro scores 49 on the Artificial Analysis Intelligence Index behind GLM-5 (Reasoning, 50). It is ahead of Kimi K2.5 (Reasoning, 47) and Qwen3.5 397B A17B (Reasoning, 45). On the overall leaderboard, it places #10, just behind GPT-5.2 Codex (xhigh, 49) and ahead of Grok 4.20 Beta (Reasoning, 48)

➤ Leading Elo of 1426 on GDPval-AA (Agentic Real-World Work Tasks) among peer models: On GDPval-AA, MiMo-V2-Pro places ahead of GLM-5 (Reasoning, 1406), Kimi K2.5 (Reasoning, 1283), and Qwen3.5 397B A17B (Reasoning, 1209). GPT-5.4 (xhigh) and Claude Sonnet 4.6 (Adaptive Reasoning, max effort) have Elos of 1667 and 1633, respectively

➤ Competitive AA-Omniscience Index driven by low hallucination: MiMo-V2-Pro scores +5, ahead of GLM-5 (Reasoning, +2), Kimi K2.5 (Reasoning, -8), and Qwen3.5 397B A17B (Reasoning, -30). For context, Claude Opus 4.6 (Adaptive Reasoning, max effort, +14) and Gemini 3.1 Pro Preview (+33) remain ahead

➤ MiMo-V2-Pro is more token efficient than peers. It used 77M output tokens to run the Artificial Analysis Intelligence Index, significantly less than GLM-5 (Reasoning, 109M) and Kimi K2.5 (Reasoning, 89M)

➤ MiMo-V2-Pro costs $348 to run the Artificial Analysis Intelligence Index at $1/$3 per 1M input/output tokens. This is less expensive than GLM-5, while scoring only 1 point lower on the Intelligence Index. For comparison, GPT-5.2 (xhigh) cost $2,304 and Claude Opus 4.6 (Adaptive Reasoning, max effort) cost $2,486

Key model information:

➤ Context window: 1M tokens

➤ Pricing: $1/$3 per 1M input/output tokens for 256K-token input, and $2/$6 per 1M input/output tokens for 1M-token input

➤ Availability: Xiaomi first-party API only

➤ Modality: Text input and output only (no multimodality)
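As a rough sanity check on the figures above, the quoted $348 can be split into output and input cost. This is a sketch under stated assumptions: every request is billed at the $1/$3 tier, the 77M output tokens are all billed at the output rate, and no caching or other discounts apply. The function name `split_cost` is hypothetical, and the implied input-token count is an inference, not a number from the post.

```python
# Figures quoted in the post.
OUTPUT_TOKENS_M = 77       # output tokens used to run the index, in millions
TOTAL_COST_USD = 348       # reported cost to run the index
INPUT_PRICE_PER_M = 1.0    # USD per 1M input tokens (256K-input tier)
OUTPUT_PRICE_PER_M = 3.0   # USD per 1M output tokens (256K-input tier)


def split_cost(total_usd, output_tokens_m, input_price, output_price):
    """Split a total bill into output cost, input cost, and implied input tokens.

    Assumes a single flat price tier for every request (an assumption,
    not something the post states).
    """
    output_cost = output_tokens_m * output_price
    input_cost = total_usd - output_cost
    implied_input_tokens_m = input_cost / input_price
    return output_cost, input_cost, implied_input_tokens_m


output_cost, input_cost, implied_input_m = split_cost(
    TOTAL_COST_USD, OUTPUT_TOKENS_M, INPUT_PRICE_PER_M, OUTPUT_PRICE_PER_M
)
print(f"output cost: ${output_cost:.0f}")
print(f"input cost:  ${input_cost:.0f}")
print(f"implied input tokens: {implied_input_m:.0f}M")
```

Under these assumptions, output tokens account for $231 of the $348, leaving $117 for input.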


Jean Olivier reposted

@Chrisondesk @askOkara @Zai_org They just can’t. Even with a cap on users and their smaller user base, they struggle to handle the load. Don’t expect too much.


@moncrolio I've used it on Mac CPU and Linux CPU, and it seems to work fine, just slow.


Jean Olivier reposted

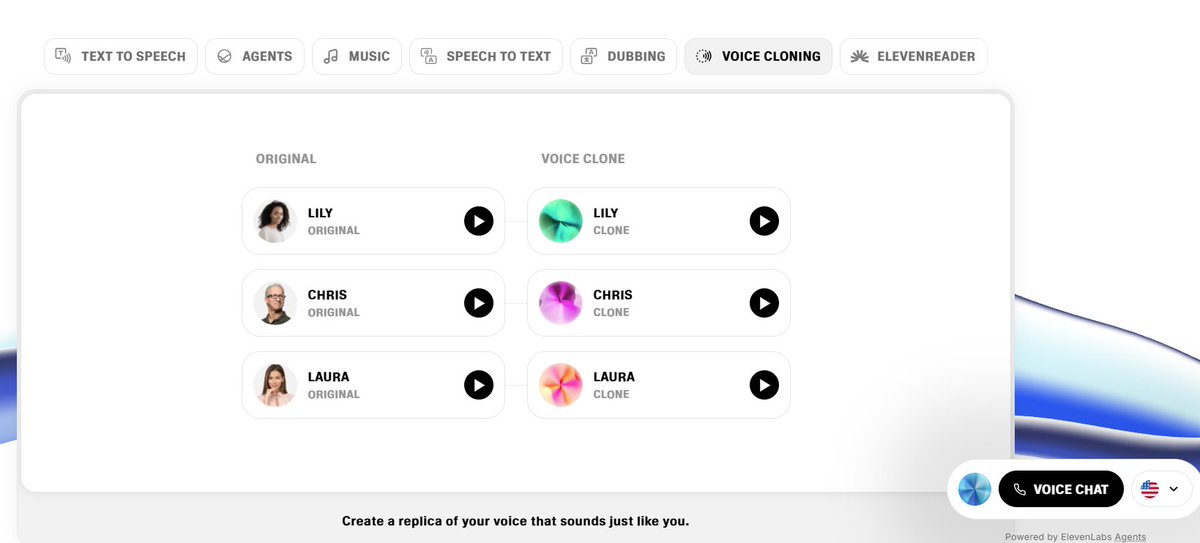

First: Ditch generic voices! Don't grab "Adam" from @elevenlabs; it screams low-effort. Build a custom persona instead. Prompt like: "Confident 30s female, warm British accent, witty podcast host vibe." Own your sonic brand!


Jean Olivier reposted

I learn more from this article than from four hours of psychology class each week. How does @thedankoe write with such profound insight? It makes me wonder if wisdom like this can truly come from books alone.

x.com/thedankoe/stat…

DAN KOE @thedankoe


Jean Olivier reposted