Pinned Tweet

mark webster 🪸

20.2K posts

@motiondesign_01

It's all language. Instagram : https://t.co/IIpTabbKUZ Newsletter : https://t.co/fb8PpAlgym

FRANCE Joined February 2008

2.4K Following 7.3K Followers

Thinking on Generative Art

When @_nonfigurativ_ references Schmidt's argument that generative art is “a system with relatively simple rules that gives rise to complexity through emergence,” he’s drawing an important line between emergence and randomness. Randomness just produces variation. Emergence produces structure. In other words, the complexity we see is not arbitrary but the result of clear constraints unfolding over time. We think this idea matters because it changes what we are looking at in his work, and why. We are no longer consuming an image as a finished aesthetic object. We are witnessing a system at work, with open vulnerabilities. The artwork becomes evidence of relationships, rules, and decisions rather than a surface created for immediate visual impact.

In a moment when AI systems generate outputs instantly, endlessly, and often opaquely, this distinction becomes critical for generative art and for the digital technologies that govern our lives. Emergent generative art slows things down and makes process perceptible. It trains us to see how complexity arises instead of accepting results as given. We feel this has cultural weight today. It pushes back against a passive relationship to technology and directs attention towards structure, constraint, and agency. Instead of just being impressed by what machines can produce and being lulled by techno-optimism, we are invited to think about systems through "poetic structures." Art as a space of reflection and vulnerability.
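
One way to see the line between emergence and randomness is an elementary cellular automaton. The sketch below is our own illustration, not something taken from Schmidt or @_nonfigurativ_: the choice of Rule 90, the grid size, and every function name are assumptions made for the example. The first grid grows from a single cell under one fixed rule; the second is plain coin flips.

```python
import random


def step(cells, rule):
    """Apply an elementary cellular automaton rule once (edges wrap around)."""
    n = len(cells)
    out = []
    for i in range(n):
        left, mid, right = cells[(i - 1) % n], cells[i], cells[(i + 1) % n]
        index = (left << 2) | (mid << 1) | right  # neighbourhood as a number 0..7
        out.append((rule >> index) & 1)           # read that bit of the rule number
    return out


def emergent_rows(width=31, steps=15, rule=90):
    """Rows grown from one live cell by a fixed rule (assumed: Rule 90)."""
    row = [0] * width
    row[width // 2] = 1
    rows = [row]
    for _ in range(steps):
        row = step(row, rule)
        rows.append(row)
    return rows


def random_rows(width=31, steps=15, seed=0):
    """Rows of independent coin flips: variation with no rule linking them."""
    rng = random.Random(seed)
    return [[rng.randint(0, 1) for _ in range(width)] for _ in range(steps + 1)]


def render(rows):
    """Draw live cells as '#' and empty cells as '.'."""
    return "\n".join("".join("#" if c else "." for c in row) for row in rows)


if __name__ == "__main__":
    print(render(emergent_rows()))
    print()
    print(render(random_rows()))
```

Run as a script, the first grid shows a nested triangular pattern that follows entirely from the rule, while the second shows only noise. Every row of the first grid can be traced back to the one before it, which is the sense in which the process stays perceptible.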

Train Cycle V (Cycle 089)

For those not familiar with this process: the main electricity poles are the main chord progression rhythm. The small railway boxes and poles are the higher-register piano. The arpeggio synth is the railway track. The woodwind and strings do the boundaries in the fields, the bridge, and the larger foliage.

I took a train from Paris to Milan recently and shot a lot of train footage. This is some footage from somewhere between Turin and Milan, if I remember correctly. There was lots of low-lying farmland with a lot of water. I’m looking forward to doing more train cycles; they’re among my favourites to do at the moment.

Finding patterns in nature, then extending my favourites into longer pieces.

-

#composer #strings #neoclassical #recording #train cycles nature music composition test aleoteric touchdesigner ableton spectrogram chance rhythm cars maxmsp puredata datadriven max4live producer art installation digitalart videoart natureinspired motorway nature filmscore

@Erz_Sol . . . found at the sea, at Madame La Langouste Bleue's :––°

@quasimondo I'm reading more along the lines of consensus being the mechanism for decision making, implying agreement amongst a community of people. It is constantly shifting, as you rightly mention. The word, however, lacks any understanding of the real mechanisms that impose decisions.

@motiondesign_01 Sounds like you did not read the full essay yet (understandably). I see consensus as a time-aggregating process rather than current agreement, and the artist as one agent in a network rather than the sole accountable one.

@quasimondo Sure, I get it. I'm simply shifting the focus from object to subject. Consensus is a weak word, do you not think? We don't all agree on what 'counts as Art'. The artist however is unmistakably accountable.

@motiondesign_01 No disagreement here. What I am attempting to do here is to explain how the consensus that decides what counts as art actually forms, and what happens to it when the conditions that produce the consensus change.

mark webster 🪸 retweeted

@memoakten @paolon Great quote from Dennett.

I'm more for the poet than the scribe with this.

I have thorough general_guidelines.md as well as multiple project_specs.md

Clearly not communicating everything, clearly enough.

(But even when I do, it goes & does smth that is almost exactly, but not perfectly exactly what I told it not to do).

Competence without comprehension - as Dennett would say.

mark webster 🪸 retweeted

I've been programming computers for 40 years.

Writing code is my poetry.

I write code to say what I want to say, to make computers do what I want them to do.

But also, deep down, I love the beauty, the elegance of a finely sculpted logic. Minimal, concise, precise. Carved in pursuit of the perfect form, as simple as it needs to be, but no simpler. Information flowing gracefully through, gently stroking the contours.

Now I can make computers do what I want them to do without writing any code, and it's blowing my mind. I absolutely love it ...

... until I look at the code. And then my heart stops.

What is this revolting, idiotic mess of excruciatingly over-engineered, pathetically brittle filth? This crime against sanity and all that is pure and good?

Yes, the computer appears to do what I asked. But my heart weeps blood. The poetry, the poetry is dead. Replaced by a Michael Bay Avengers Disney-remake multiverse-reboot mashup sludge-pit of rat’s-nest codeslop.

memo akten @memoakten

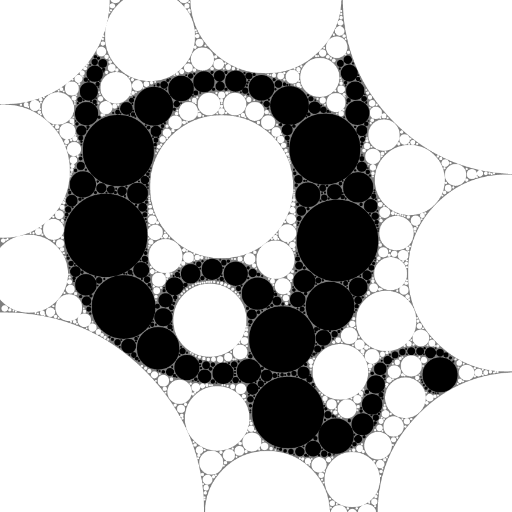

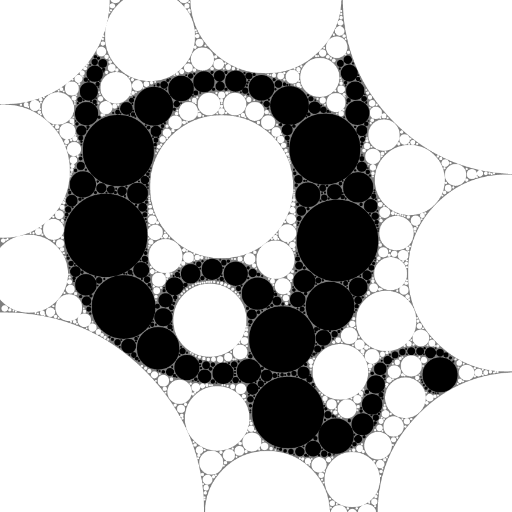

@presstube This is how I learnt how to program, and "All about computers" in general, when I was 10 years old. (Some of you might remember these images, I used to show them in talks a decade ago or so).

mark webster 🪸 retweeted

@quasimondo Ok. I'm gonna wrap up this one.

“the machine doesn’t think, the machine makes us think”. Mohr, Manfred

mark webster 🪸 retweeted

@DlSPUTED Keep writing too! Appreciate your current thoughts on coding today :––]

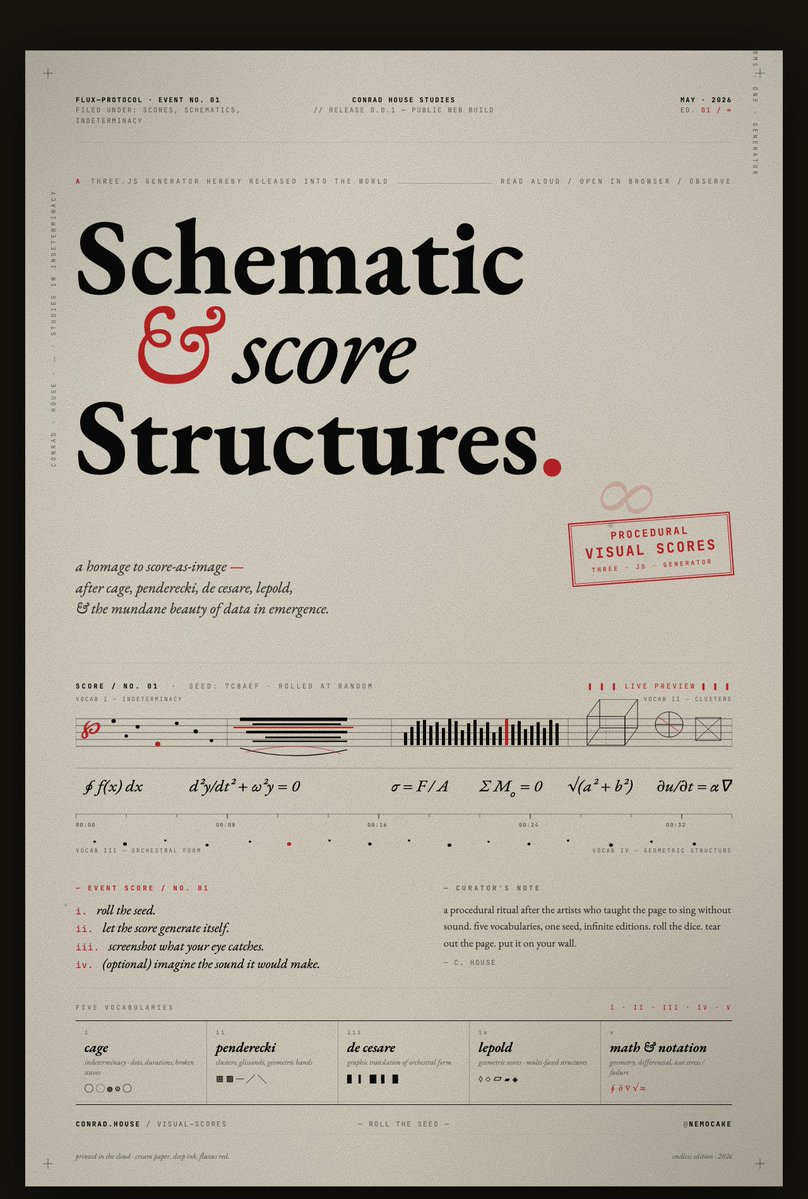

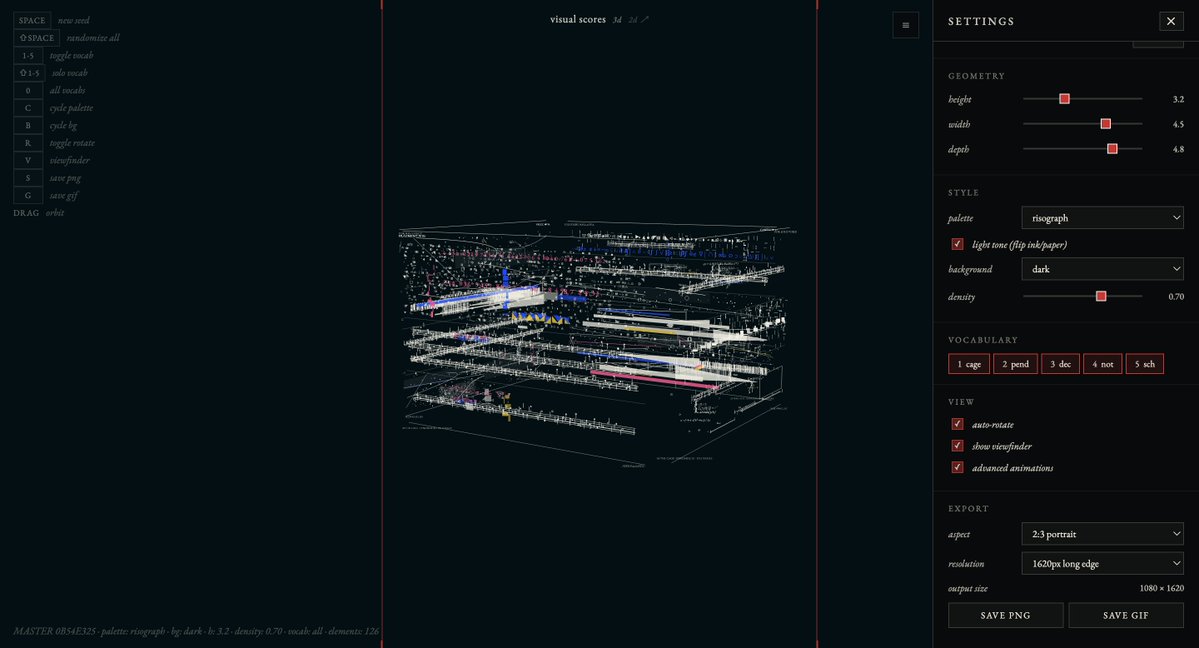

If anyone would like to create their own Schematic Scores, I've put the generator on my site to play with.

would love to see what anyone creates : ) feel free to share outputs.

link below.

conrad house @DlSPUTED

in motion
