Mohammad Saffar

173 posts

@msaffar3

Research Scientist @googledeepmind, Gemini multimodal | past: @reveimage, Google Brain

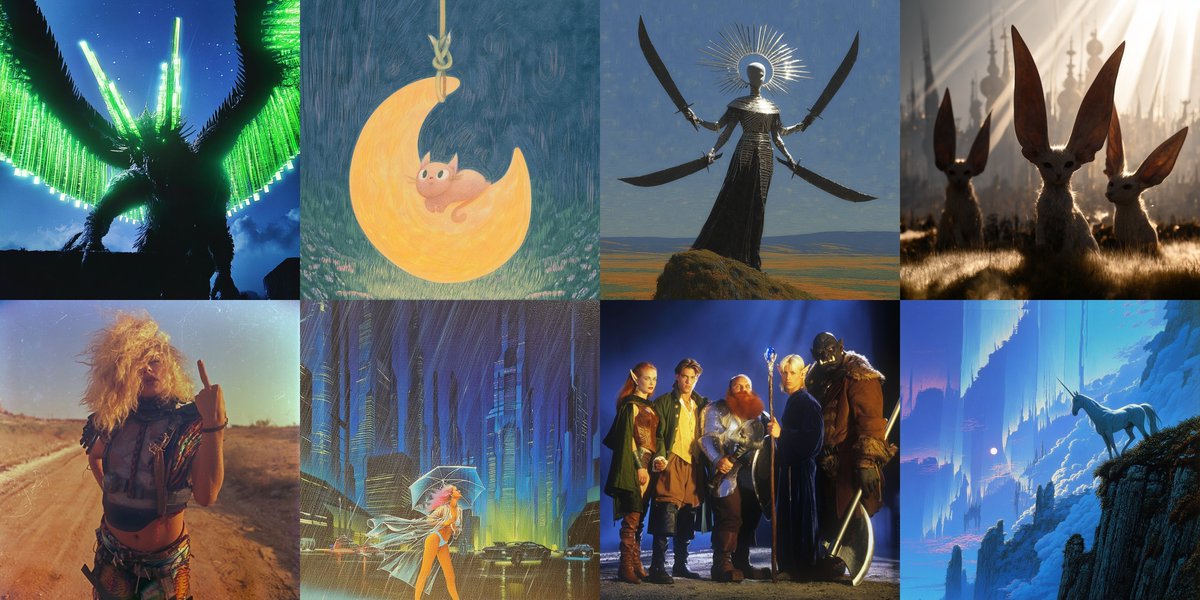

The long-awaited testing phase for @Midjourney V8 has officially begun, marking a massive leap forward for the generative art platform. This latest iteration promises a significant boost in efficiency, operating at five times the speed of its predecessors while maintaining a much tighter grip on complex prompt instructions. High-resolution creators will find the native 2K modes particularly useful for professional workflows. The update also brings more reliable text rendering and enhanced "sref" styling, allowing for a level of aesthetic consistency that was previously difficult to achieve. Personalization is a major focus of this release, with improved moodboard performance to help users fine-tune their unique visual language. It is an impressive step toward making AI-assisted design both faster and more intuitive.

Advanced Machine Intelligence (AMI) is building a new breed of AI systems that understand the world, have persistent memory, can reason and plan, and are controllable and safe. We’ve raised a $1.03B (~€890M) round from global investors who believe in our vision of universally intelligent systems centered on world models. This round is co-led by Cathay Innovation, Greycroft, Hiro Capital, HV Capital, and Bezos Expeditions, along with other investors and angels across the world. We are a growing team of researchers and builders, operating in Paris, New York, Montreal and Singapore from day one. Read more: amilabs.xyz AMI - Real world. Real intelligence.

Excited to introduce Uni-1, our new *unified* multimodal model that does both understanding and generation: lumalabs.ai/uni-1 TLDR: I think Uni-1 @LumaLabsAI is > GPT Image 1.5 in many cases, and toe-to-toe with Nano Banana Pro/2. (showcase below)

Create elaborate scenes with Nano Banana 2 using 14 input images!

Mercury 2 is live 🚀🚀 The world’s first reasoning diffusion LLM, delivering 5x faster performance than leading speed-optimized LLMs. Watching the team turn years of research into a real product never gets old, and I’m incredibly proud of what we’ve built. We’re just getting started on what diffusion can do for language.

Reve v1.5 is here. Our latest image model, now with 4K resolution.

@sama Really impressive model, huge congrats to everyone who worked on it at OpenAI! However, the calendar is wrong, I fixed it for you in Nano Banana Pro 😀

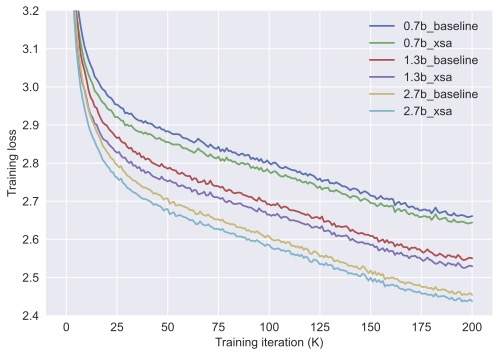

For years, RAW pixel space pretraining has been sidelined: too compute-expensive. Our new @GoogleDeepMind paper 📜 dives into the scaling trends of raw pixel models to answer the question “how far are we from scaling up next-pixel prediction?” arxiv.org/pdf/2511.08704 Forecast: Raw next-pixel modeling will reach competitive ImageNet classification (>80% top1 accuracy) and generation metrics (90 Fréchet Distance) in five years! Threads 👇

Thinking (test-time compute) in pixel space... 🍌 Pro tip: always peek at the thoughts if you use AI Studio. Watching the model think in pictures is really fun!

You went 🍌🍌 for Nano Banana. Now, meet Nano Banana Pro. It’s SOTA for image generation + editing with more advanced world knowledge, text rendering, precision + controls. Built on Gemini 3, it’s really good at complex infographics - much like how engineers see the world:)