Marko Tasic

@mtasic85

System Architect. Software Dev. AI/ML Specialist. Tech Consultant. SMBs & Enterprises. Opinions are my own. CEO/CTO at @tangledgroup

Our AI Agent popped a root shell on Ubuntu 26.04 on the first day it was released :)

🚀 DeepSeek-V4 Preview is officially live & open-sourced! Welcome to the era of cost-effective 1M context length.

🔹 DeepSeek-V4-Pro: 1.6T total / 49B active params. Performance rivaling the world's top closed-source models.

🔹 DeepSeek-V4-Flash: 284B total / 13B active params. Your fast, efficient, and economical choice.

Try it now at chat.deepseek.com via Expert Mode / Instant Mode. API is updated & available today!

📄 Tech Report: huggingface.co/deepseek-ai/De…

🤗 Open Weights: huggingface.co/collections/de…

1/n
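The total/active split suggests a mixture-of-experts design, where each token only touches part of the weights. A quick awk one-off (a sketch; it reads 1.6T as 1,600B and uses only the counts quoted in the announcement; `active_share` is a hypothetical helper name) shows how small that part is:

```shell
#!/bin/sh
# Share of weights active per token, from the announced counts (billions of params).
# active_share is a hypothetical helper, not part of any DeepSeek tooling.
active_share() {
  # $1 = model name, $2 = total params (B), $3 = active params (B)
  awk -v n="$1" -v t="$2" -v a="$3" \
    'BEGIN { printf "%s: %.1f%% of weights active per token\n", n, 100 * a / t }'
}

active_share "DeepSeek-V4-Pro" 1600 49
active_share "DeepSeek-V4-Flash" 284 13
```

This prints roughly 3.1% for Pro and 4.6% for Flash. Per-token compute tracks the active count, while memory still has to hold all of the total weights.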

Our goal is to build practical models with comprehensive capabilities beyond open benchmarks, and the only way to do that is to co-design with diverse products while scaling solidly. Tencent has the best product ecosystem and a solid, low-ego culture, and we are just getting started!

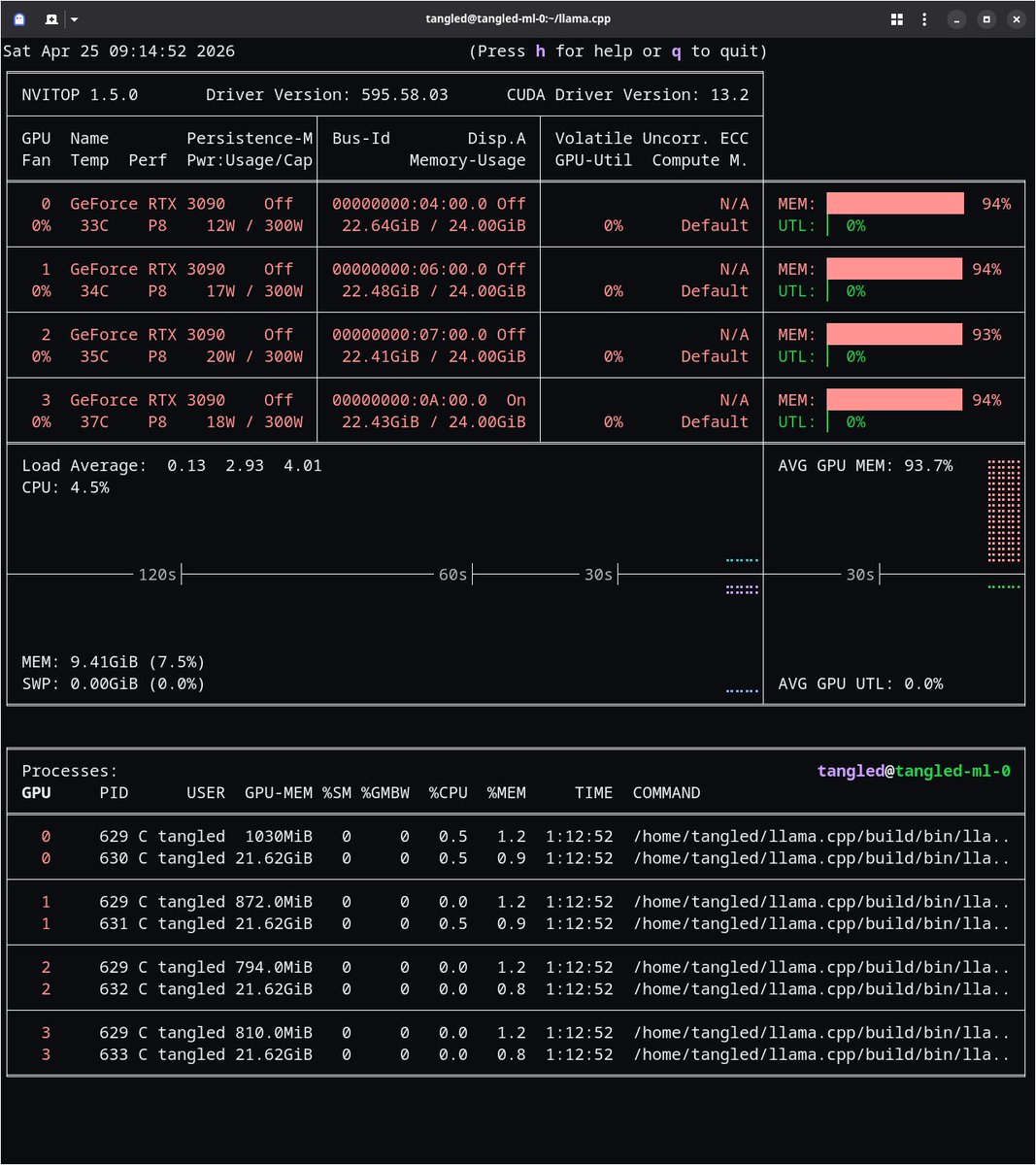

🟠Current setup, 23.37GiB / 24.00GiB VRAM 🤨30 t/s tg 🤔with speculative decoding 250 t/s tg 🤗Qwen3.6-27B Q3_K_M -ctk q8_0 -ctv q8_0

CUDA_VISIBLE_DEVICES=%i ./llama-server -hf unsloth/Qwen3.6-27B-GGUF:Q3_K_M -ngl -1 -fa on -fit off --metrics --props --slots --host 0.0.0.0 --port 808%i -dev CUDA0 --temp 0.7 --top-p 0.8 --top-k 20 --min-p 0.0 --presence-penalty 1.5 --repeat-penalty 1.0 --reasoning off --alias "Qwen/Qwen3.6-27B" -c 262144 -ctk q8_0 -ctv q8_0 --spec-default --no-mmproj-offload

CUDA_VISIBLE_DEVICES=0,1,2,3 ./llama-server -hf ggml-org/GLM-OCR-GGUF:Q8_0 -ngl -1 -fa on -fit off --metrics --props --slots --host 0.0.0.0 --port 8090 --reasoning off --alias "zai-org/GLM-OCR" -c 32768 -ctk q5_1 -ctv q5_1 -np 1 --spec-default --no-mmproj-offload
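The Qwen command uses a `%i` placeholder for both the GPU index and the port suffix. Assuming `%i` is a systemd template instance specifier (for example a hypothetical unit `llama-qwen@.service` started as `llama-qwen@0` through `llama-qwen@3`), this dry-run sketch prints what each instance would run. `qwen_cmd` is a hypothetical helper, and most sampling and server flags are dropped for brevity; nothing is launched.

```shell
#!/bin/sh
# Dry run: expand the "%i" placeholder for GPUs 0-3 and print the commands.
# ASSUMPTION: %i is a systemd template instance specifier.
# Most sampling/server flags are omitted for brevity; nothing is executed.
qwen_cmd() {
  i=$1
  # CUDA_VISIBLE_DEVICES exposes a single GPU, which the process then sees as
  # device 0, so every instance can use the same "-dev CUDA0".
  printf 'CUDA_VISIBLE_DEVICES=%s ./llama-server -hf unsloth/Qwen3.6-27B-GGUF:Q3_K_M -ngl -1 -fa on --port 808%s -dev CUDA0 -c 262144 -ctk q8_0 -ctv q8_0\n' "$i" "$i"
}

for gpu in 0 1 2 3; do
  qwen_cmd "$gpu"
done
```

Under that reading, each GPU hosts its own Qwen instance on ports 8080 to 8083, while the single GLM-OCR instance sees all four GPUs (`CUDA_VISIBLE_DEVICES=0,1,2,3`) and listens on port 8090.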

🟡Current setup, 21.53GiB / 24.00GiB VRAM 🧐40 t/s tg 🧐with speculative decoding 200 t/s tg

CUDA_VISIBLE_DEVICES=%i ./llama-server -hf unsloth/Qwen3.6-27B-GGUF:IQ4_NL -ngl -1 -fa on -fit off --metrics --props --slots --host 0.0.0.0 --port 808%i -dev CUDA0 --temp 0.7 --top-p 0.8 --top-k 20 --min-p 0.0 --presence-penalty 1.5 --repeat-penalty 1.0 --reasoning off --alias "Qwen/Qwen3.6-27B" -c 262144 -ctk q4_0 -ctv q4_0 --spec-default --no-mmproj-offload

CUDA_VISIBLE_DEVICES=0,1,2,3 ./llama-server -hf ggml-org/GLM-OCR-GGUF:Q8_0 -ngl -1 -fa on -fit off --metrics --props --slots --host 0.0.0.0 --port 8090 --reasoning off --alias "zai-org/GLM-OCR" -c 32768 -ctk q5_1 -ctv q5_1 -np 1 --no-mmproj-offload
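The Q3_K_M and IQ4_NL Qwen commands differ in only a few tokens, which is easy to miss in one long line. A small POSIX shell helper (a sketch; `flag_diff` is a hypothetical name, not part of llama.cpp) splits each command on spaces and lets `diff` report the changes. The strings are trimmed excerpts of the two Qwen commands, not the full lines.

```shell
#!/bin/sh
# Print a token-level diff of two command lines.
# flag_diff is a hypothetical helper, not part of llama.cpp.
flag_diff() {
  a=$(mktemp) || return 1
  b=$(mktemp) || return 1
  # One token per line so diff reports changed flags/values individually.
  printf '%s\n' "$1" | tr ' ' '\n' > "$a"
  printf '%s\n' "$2" | tr ' ' '\n' > "$b"
  diff "$a" "$b" || true   # diff exits 1 when the inputs differ, which is expected
  rm -f "$a" "$b"
}

# Trimmed excerpts of the Qwen commands from the Q3_K_M and IQ4_NL posts.
old='-hf unsloth/Qwen3.6-27B-GGUF:Q3_K_M -c 262144 -ctk q8_0 -ctv q8_0'
new='-hf unsloth/Qwen3.6-27B-GGUF:IQ4_NL -c 262144 -ctk q4_0 -ctv q4_0'
flag_diff "$old" "$new"
```

The output flags the model quant (Q3_K_M to IQ4_NL) and both KV-cache types (q8_0 to q4_0) as the only changes in this excerpt.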

🟠Current setup, 22.64GiB / 24.00GiB VRAM pattern on 4x @nvidia RTX 3090, @Alibaba_Qwen and @Zai_org models and @UnslothAI and @ggml_org quants on llama.cpp 🧐40 t/s tg 🤯with speculative decoding 400 t/s tg

CUDA_VISIBLE_DEVICES=%i ./llama-server -hf unsloth/Qwen3.6-27B-GGUF:IQ4_NL -ngl -1 -fa on -fit off --metrics --props --slots --host 0.0.0.0 --port 808%i -dev CUDA0 --temp 0.7 --top-p 0.8 --top-k 20 --min-p 0.0 --presence-penalty 1.5 --repeat-penalty 1.0 --reasoning off --alias "Qwen/Qwen3.6-27B" -c 262144 -ctk q4_0 -ctv q4_0 --spec-default

CUDA_VISIBLE_DEVICES=0,1,2,3 ./llama-server -hf ggml-org/GLM-OCR-GGUF:Q8_0 -ngl -1 -fa on -fit off --metrics --props --slots --host 0.0.0.0 --port 8090 --reasoning off --alias "zai-org/GLM-OCR" -c 32768 -ctk q5_1 -ctv q5_1 -np 1 --no-mmproj-offload
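The three setup posts report VRAM use out of 24.00 GiB and text-generation (tg) rates with and without speculative decoding. This awk sketch turns those figures into per-GPU headroom and a speedup factor. It uses only the numbers quoted in the posts; the setup labels and the `compare_setups` name are ad hoc.

```shell
#!/bin/sh
# Headroom and speculative-decoding speedup for the three posted setups.
# Columns: label, used GiB, tg t/s without speculation, tg t/s with speculation.
compare_setups() {
  awk '{ printf "%s: free %.2f GiB, speedup %.1fx\n", $1, 24.00 - $2, $4 / $3 }' <<'EOF'
setup1_Q3_K_M/q8_0 23.37 30 250
setup2_IQ4_NL/q4_0 21.53 40 200
setup3_IQ4_NL/q4_0 22.64 40 400
EOF
}

compare_setups
```

Speculative-decoding gains depend heavily on how predictable the generated text is, so these ratios are best read as the author's observed figures rather than fixed properties of each configuration.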

Everyone is slowly coming to this realization, and I assure you, no one is running multitudes of agents overnight. No one who is doing anything of substance, at least. There _are_ people pretending to be scientists, or fully caught up in their drug-infused AI overdose, who think their slop machines are changing the world. They're not, though; they're just wasting a bunch of money and compute to create a lot of LoC that will get thrown away. The state of the art is still "can we even one-shot a production-quality patch that we won't regret later?", and that's rarer than you'd expect based on the discourse.

JUST IN: Google $GOOGL to invest up to $40,000,000,000 in Claude AI developer Anthropic.