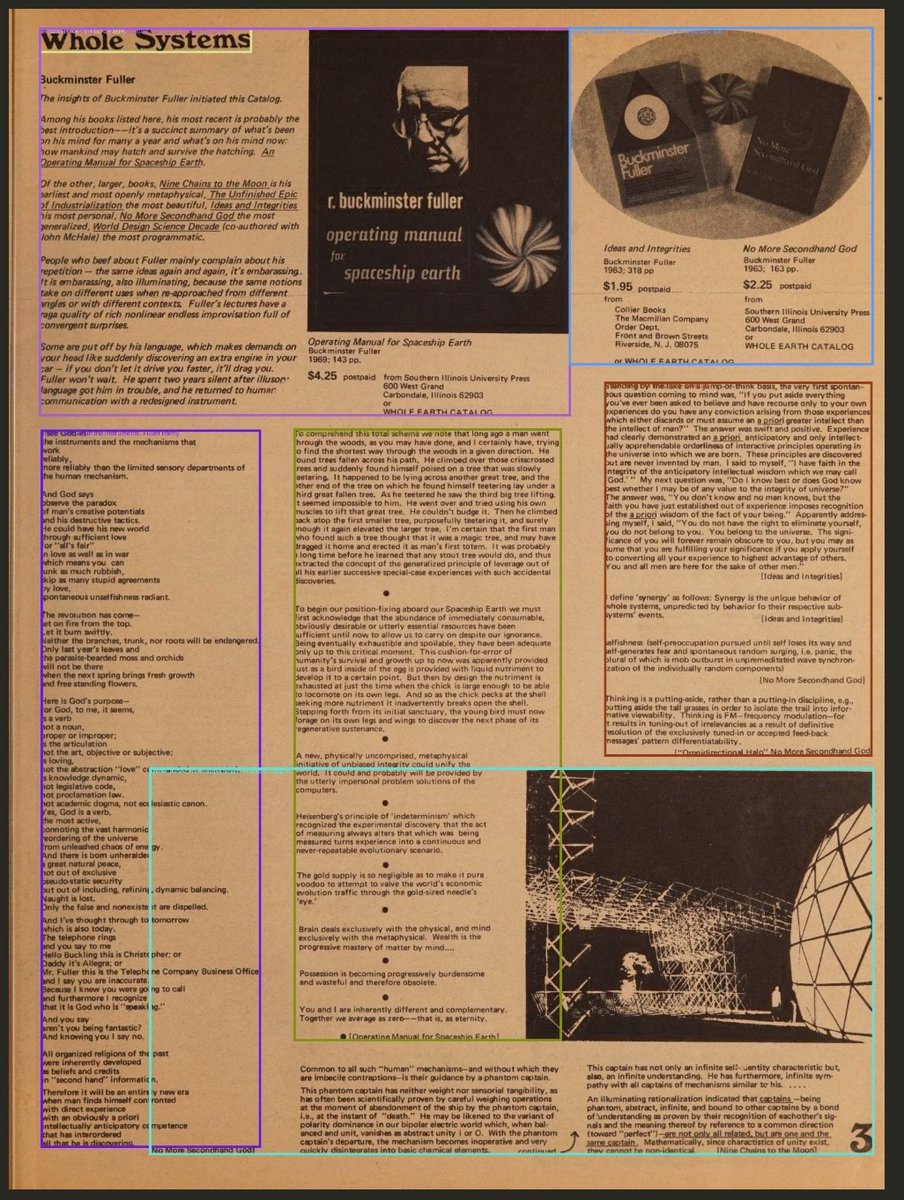

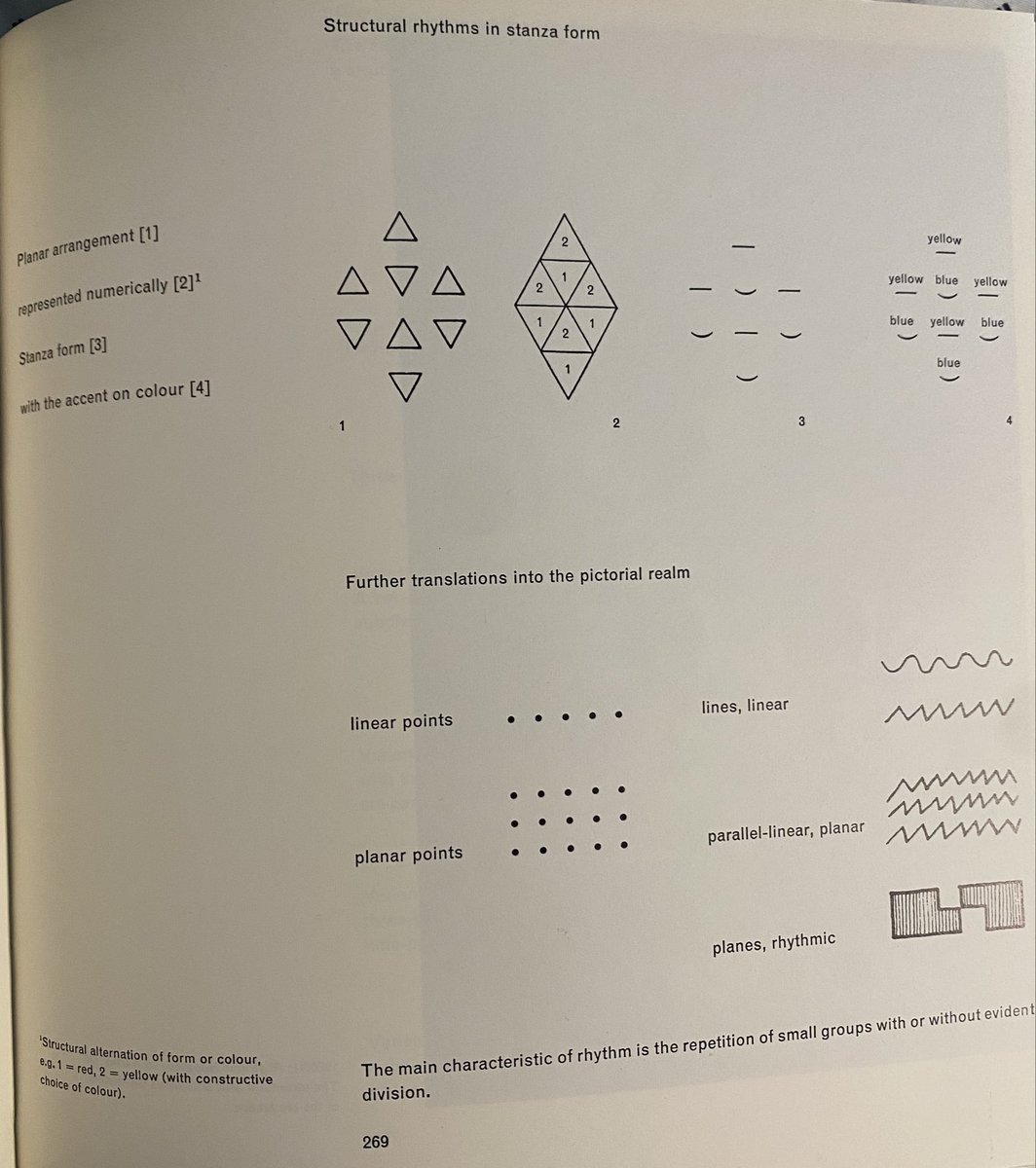

Exploring Cybernetic Serendipity (1968-69): The culmination of more than three years of effort by curator Jasia Reichardt, this early international exhibition of digital art would introduce hundreds of thousands of visitors to the world of computer-generated creativity. More below.