Nicholas Meade

110 posts

@ncmeade

PhD Student at @McGillU / @Mila_Quebec; AI Safety

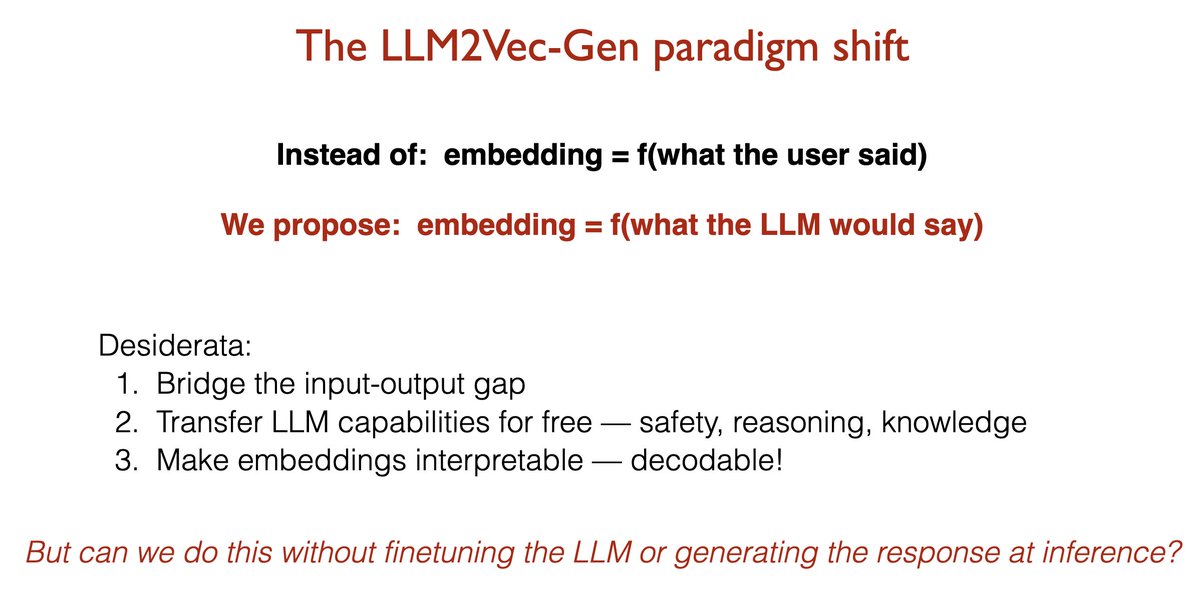

Your LLM already knows the answer. Why is your embedding model still encoding the question? 🚨Introducing LLM2Vec-Gen: your frozen LLM generates the answer's embedding in a single forward pass — without ever generating the answer. Not only that, the frozen LLM can decode the embedding back into text. 🏆 SOTA self-supervised embeddings 🛡️ Free transfer of instruction-following, safety, and reasoning

Congrats to the 126 early-career scholars awarded a 2026 Sloan Research Fellowship, whose creativity and innovation set them apart as the next generation of scientific leaders! Our Fellows represent 7 fields and 44 institutions across the US and Canada. sloan.org/fellowships/20…

Introducing linear scaling of reasoning: 𝐓𝐡𝐞 𝐌𝐚𝐫𝐤𝐨𝐯𝐢𝐚𝐧 𝐓𝐡𝐢𝐧𝐤𝐞𝐫 Reformulate RL so thinking scales 𝐎(𝐧) 𝐜𝐨𝐦𝐩𝐮𝐭𝐞, not O(n^2), with O(1) 𝐦𝐞𝐦𝐨𝐫𝐲, architecture-agnostic. Train R1-1.5B into a markovian thinker with 96K thought budget, ~2X accuracy 🧵

AgentRewardBench: Evaluating Automatic Evaluations of Web Agent Trajectories We are releasing the first benchmark to evaluate how well automatic evaluators, such as LLM judges, can evaluate web agent trajectories. We find that rule-based evals underreport success rates, and no single LLM judge excels across all benchmarks. We collect trajectories from web agents built on four LLMs (Claude 3.7, GPT-4o, Llama 3.3, Qwen2.5-VL) across popular web benchmarks (AssistantBench, WebArena, VWA, WorkArena, WorkArena++). An amazing team effort with: @a_kazemnejad @ncmeade @arkil_patel @dcshin718 @alejaz_a @karstanczak @ptshaw2 @chrisjpal @sivareddyg

Internship @ServiceNowRSRCH to build the next generation of computer use agents that are safe and secure from malicious attacks. Focus on intervention strategies and defenses to make agents robust against unsafe behavior. Apply here: bit.ly/3V3mmTg

Come and visit our poster on the Safety of Retrievers @aclmeeting 🗓️Tuesday, Findings Posters, 16:00-17:30 🚨Instruction-following retrievers will become increasingly good tools for searching for harmful or sensitive information.🚨

1\ Hi, can I get an unsupervised sparse autoencoder for steering, please? I only have unlabeled data varying across multiple unknown concepts. Oh, and make sure it learns the same features each time! Yes! A freshly brewed Sparse Shift Autoencoder (SSAE) coming right up. 🧶