Pete Shaw


@ptshaw2

Research Scientist @GoogleDeepmind

In the limit, what's important is our ability to adapt. What is a good recipe for teaching agents to adapt on the fly? We introduce two papers on meta-learning for LLMs, written with @JonnyCoook at @GoogleDeepMind. This is research from last year that we can finally share 🧵👇

🚨Excited to share our new work viewing reasoning strategies as teaching tools: for a fixed target model, which CoT strategies best support learning and generalization? ✨Our answer is intrinsic dimensionality (the minimum effective capacity a model needs to solve the task). Somewhat counterintuitively, adding CoT – which requires generating longer and more structured outputs – can reduce learning complexity. Good reasoning compresses the task, i.e., it reduces the degrees of freedom the model needs to map inputs to correct solutions. 🧵⬇️ (1/5)

Drop Site obtained harrowing footage of the latest killing, which appears to be from the perspective of the woman in pink filming from the sidewalk.

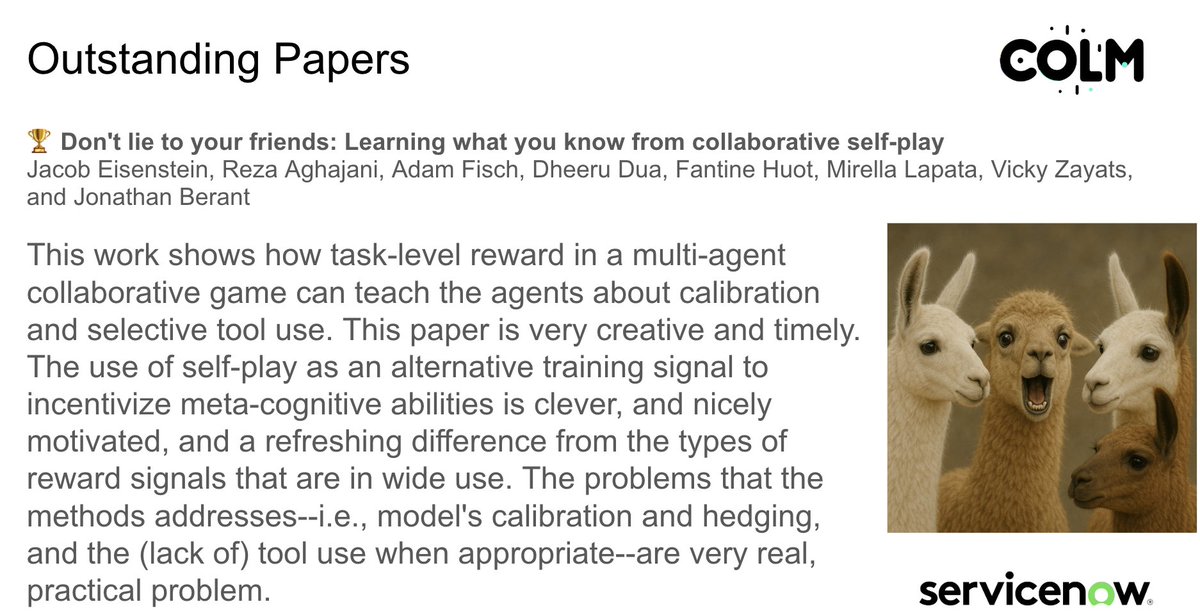

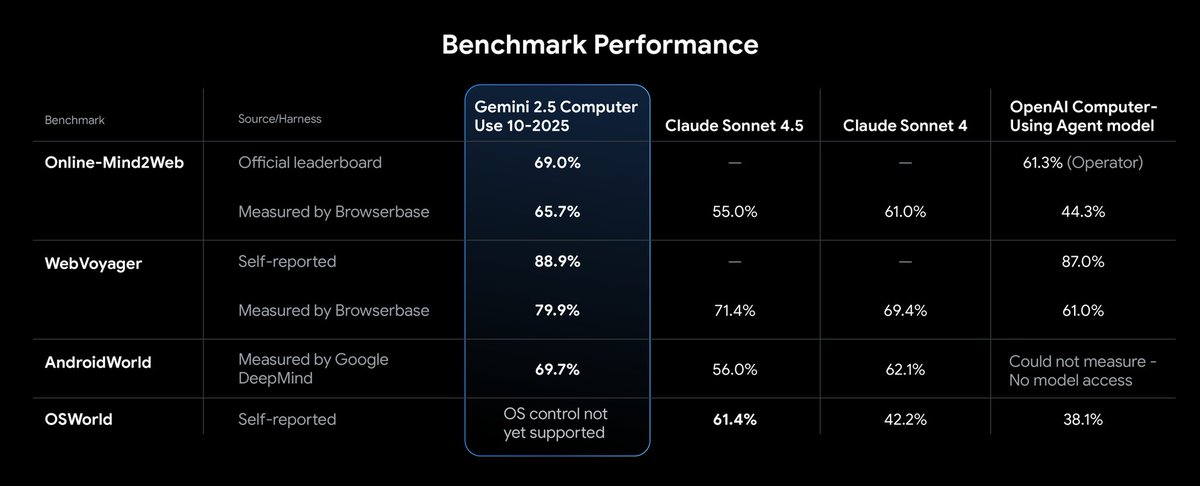

AgentRewardBench: Evaluating Automatic Evaluations of Web Agent Trajectories

We are releasing the first benchmark to evaluate how well automatic evaluators, such as LLM judges, can evaluate web agent trajectories. We find that rule-based evals underreport success rates, and no single LLM judge excels across all benchmarks.

We collect trajectories from web agents built on four LLMs (Claude 3.7, GPT-4o, Llama 3.3, Qwen2.5-VL) across popular web benchmarks (AssistantBench, WebArena, VWA, WorkArena, WorkArena++).

An amazing team effort with: @a_kazemnejad @ncmeade @arkil_patel @dcshin718 @alejaz_a @karstanczak @ptshaw2 @chrisjpal @sivareddyg