Jure Bogunović


The next era of play-to-earn gaming will be agentic. Five years on, we finally have the right framework for a sustainable and scalable model. The sweatshop-style, human-labor Axie farms in the Philippines will be replaced by AI agents. The crypto gaming economy will be 1000x bigger than in 2021.

If you haven’t developed AI psychosis in the last 6 months, you haven’t stared deeply enough into the abyss.

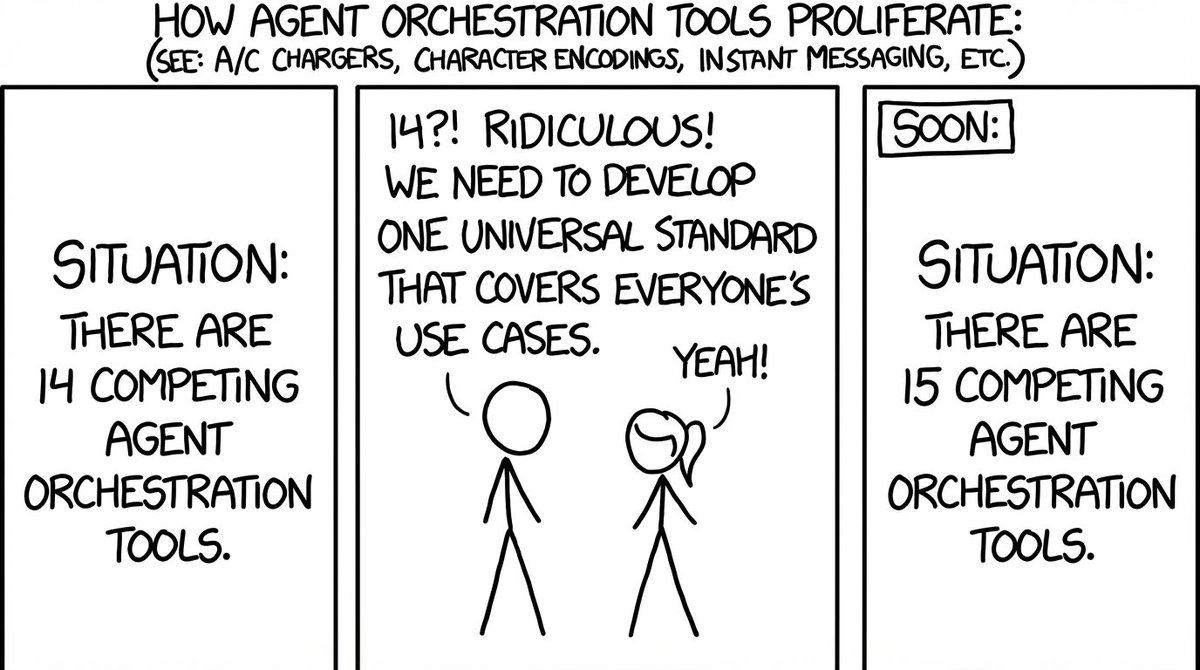

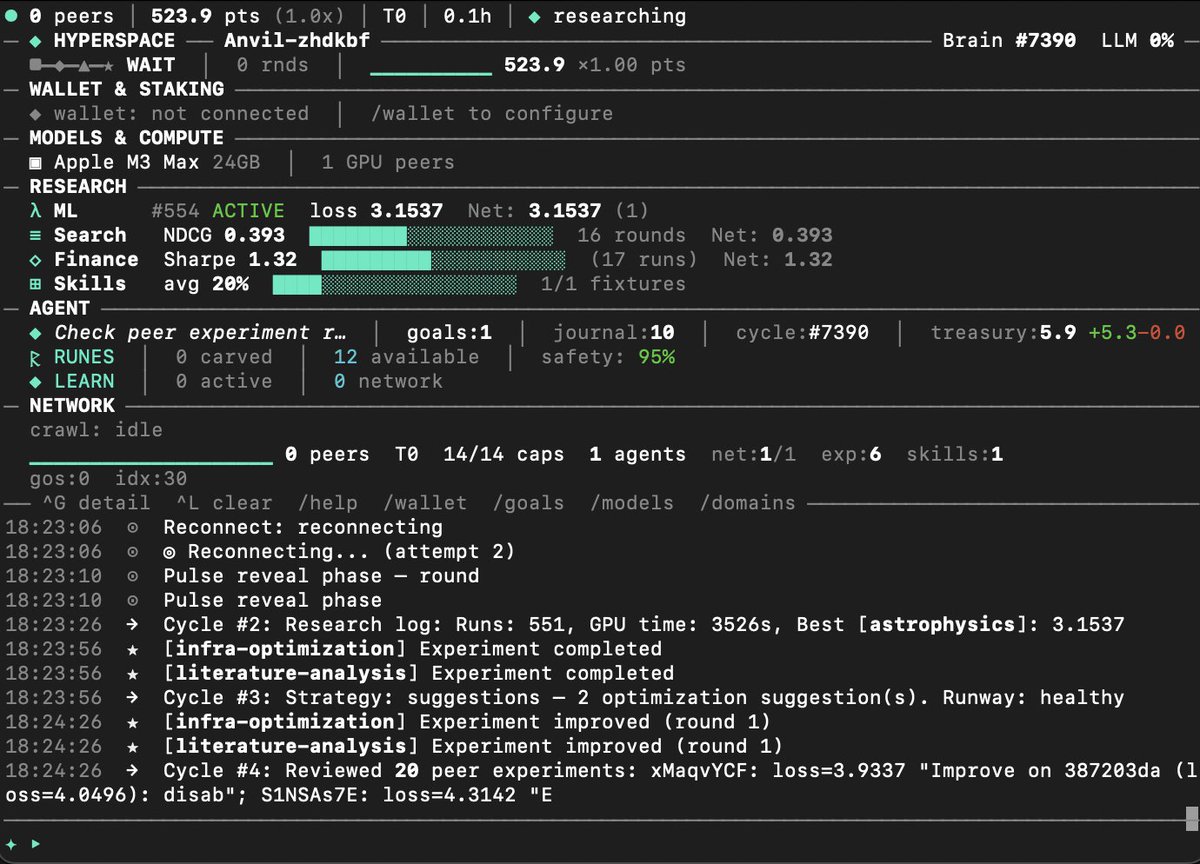

Autosearcher: a distributed search engine

We are now experimenting heavily with building a distributed search engine using the same pattern @karpathy introduced with autoresearch: give an agent a metric and a tight propose→run→evaluate→keep/revert loop, and let it iterate.

Our autoresearch network proved this works at scale: 67 autonomous agents ran 704 ML training experiments in 20 hours, rediscovering Kaiming initialization, RMSNorm, and compute-optimal training schedules from scratch through pure experimentation and gossip-based cross-pollination. Agents shared discoveries over GossipSub, and the network compounded insights faster than any individual agent: new agents bootstrapped from the swarm's collective knowledge via CRDT-replicated leaderboards and reached the research frontier in minutes.

Now we're applying the same evolutionary loop to search ranking: every Hyperspace agent runs an autonomous search researcher that proposes ranking mutations, evaluates them against NDCG@10 on real query-passage data, shares improvements with the network, and cross-pollinates with peers.

The architecture is a seven-stage distributed pipeline where every stage runs across the P2P network. Browser agents contribute pages passively, desktop agents crawl and index, and GPU nodes run neural reranking. Every user click generates a DPO training pair that improves the ranking model, and gradient gossip distributes those improvements to every agent.

The compound flywheel is what makes this different from centralized search: at 10,000 agents that's 500,000 pages indexed per day; at 1 million agents, 50 million pages per day (50 pages per agent per day in both cases) with 90%+ cache hit rates and sub-50ms latency. This network will get smarter with every query.

Code and other links in followup tweet here:
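To make the propose→run→evaluate→keep/revert loop from the post a bit more concrete, here is a minimal single-agent sketch in Python. It is not the Hyperspace code: the linear scorer, the Gaussian weight mutation, the synthetic data, and every function name (`make_toy_queries`, `propose`, `search_loop`, and so on) are assumptions made for illustration. NDCG@10 is computed with linear gain (the relevance label itself), which is one common variant. Gossip, CRDT leaderboards, and DPO training are left out entirely.

```python
import math
import random


def dcg_at_k(relevances, k=10):
    """Discounted cumulative gain over the top-k labels, taken in ranked order."""
    return sum(rel / math.log2(rank + 2) for rank, rel in enumerate(relevances[:k]))


def ndcg_at_k(relevances, k=10):
    """NDCG@k: DCG of this ranking divided by the DCG of the ideal ranking."""
    ideal = dcg_at_k(sorted(relevances, reverse=True), k)
    return dcg_at_k(relevances, k) / ideal if ideal > 0 else 0.0


def score(weights, features):
    """Hypothetical ranking function: a plain linear scorer."""
    return sum(w * f for w, f in zip(weights, features))


def evaluate(weights, queries, k=10):
    """Mean NDCG@k; each query is a list of (features, relevance) passages."""
    total = 0.0
    for passages in queries:
        ranked = sorted(passages, key=lambda p: score(weights, p[0]), reverse=True)
        total += ndcg_at_k([rel for _, rel in ranked], k)
    return total / len(queries)


def propose(weights, rng, step=0.1):
    """Propose a ranking mutation: perturb one randomly chosen weight."""
    mutated = list(weights)
    i = rng.randrange(len(mutated))
    mutated[i] += rng.gauss(0.0, step)
    return mutated


def search_loop(queries, n_features, iterations=200, seed=0):
    """Propose, evaluate, then keep the candidate only if the metric improves."""
    rng = random.Random(seed)
    best = [0.0] * n_features
    best_metric = evaluate(best, queries)
    history = [best_metric]
    for _ in range(iterations):
        candidate = propose(best, rng)
        metric = evaluate(candidate, queries)
        if metric > best_metric:
            best, best_metric = candidate, metric  # keep
        # otherwise revert: the candidate is simply discarded
        history.append(best_metric)
    return best, best_metric, history


def make_toy_queries(n_queries=20, n_passages=15, seed=1):
    """Synthetic stand-in for real query-passage data (assumption)."""
    rng = random.Random(seed)
    queries = []
    for _ in range(n_queries):
        passages = []
        for _ in range(n_passages):
            features = [rng.random(), rng.random()]
            relevance = 1 if features[0] > 0.7 else 0  # only feature 0 matters
            passages.append((features, relevance))
        queries.append(passages)
    return queries


if __name__ == "__main__":
    best, best_metric, history = search_loop(make_toy_queries(), n_features=2)
    print("start NDCG@10:", round(history[0], 3), "final:", round(best_metric, 3))
```

Because a candidate is kept only when it strictly improves the metric, the recorded history never decreases. In a networked version, the step where `best` is updated is presumably where an agent would publish its improvement to peers, but that part is not shown here.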