Bruno Neri

@neribr

Technical Leader - Artificial Intelligence and Machine Learning Enthusiast - Senior Software Engineer @altenitalia

oh, did i say chapter? i meant _chapters_. We've just released draft Chapter 6 (Grids) and Chapter 7 (Group Convolutions on Homogeneous Spaces) of the GDL Book. Alice's journeys in geometric wonderland continue #️⃣🌍

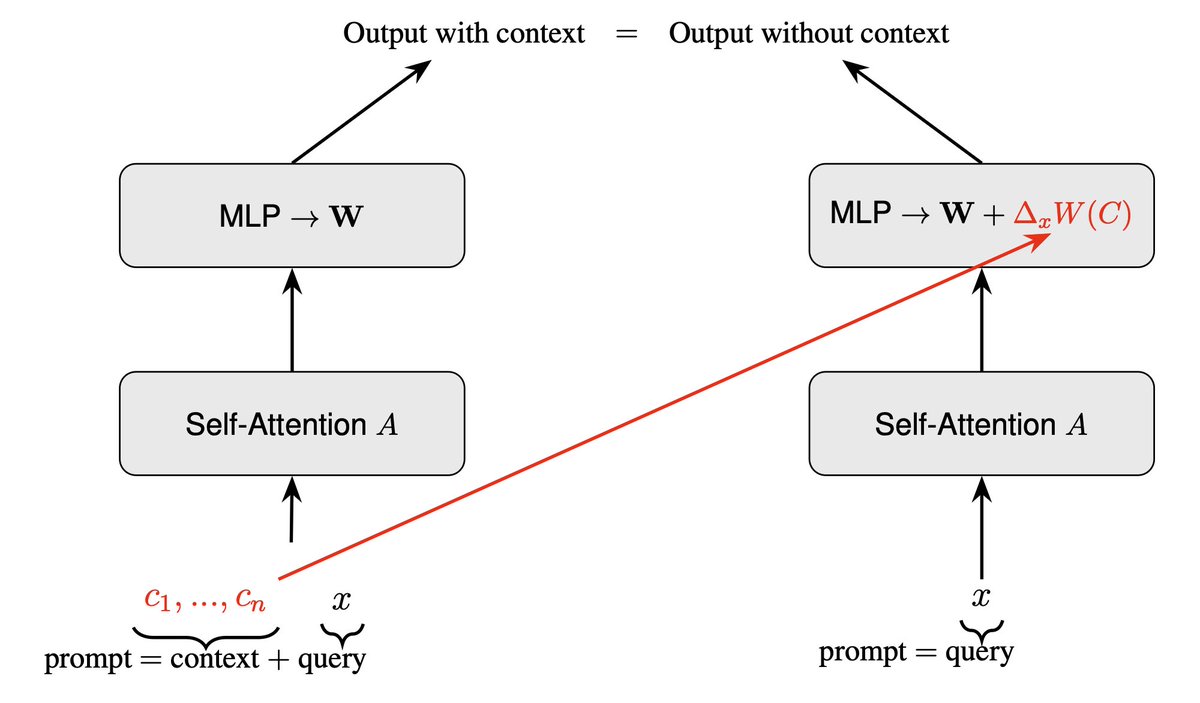

Just released an unofficial PyTorch reimplementation of Neural Assets (arxiv.org/pdf/2406.09292). Check it out if you want to build on top of this: github.com/Wenlin-Chen/ne…

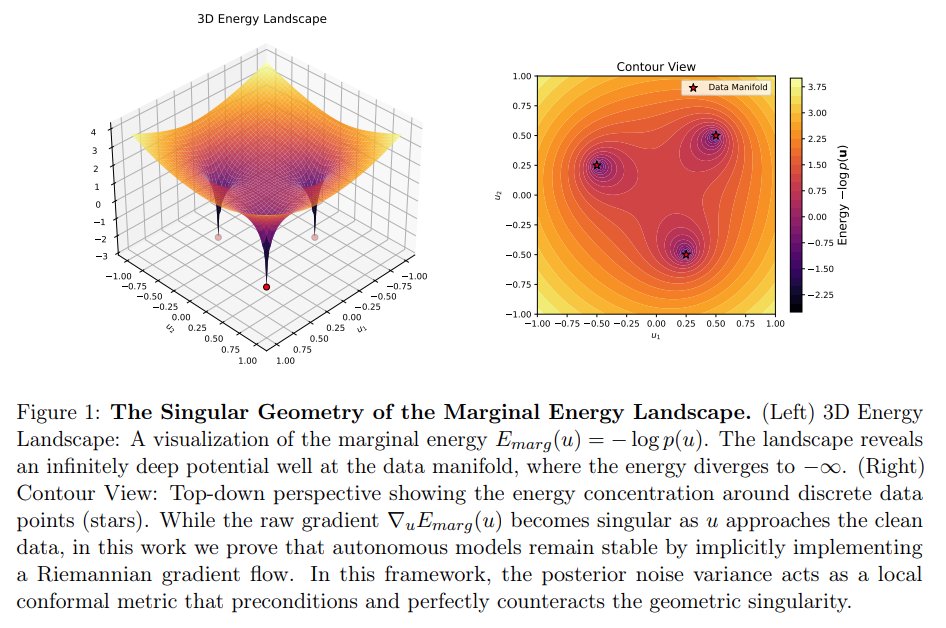

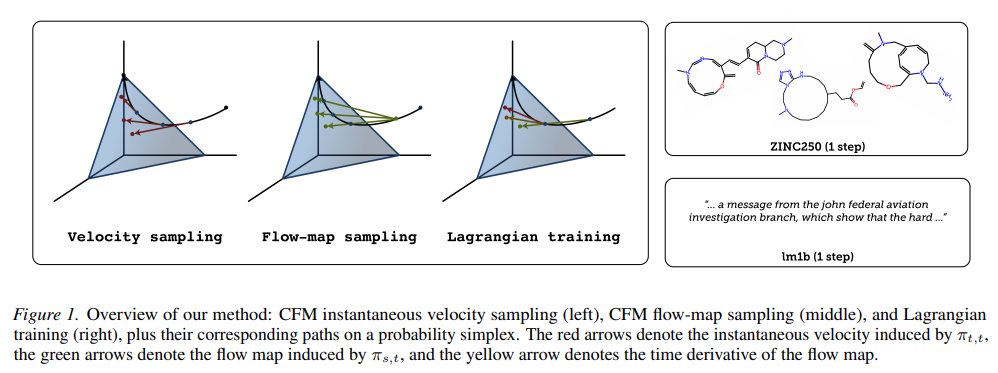

🚀 MIT Flow Matching and Diffusion Lecture 2026 Released (diffusion.csail.mit.edu)! We just released our new MIT 2026 course on flow matching and diffusion models! We teach the full stack of modern AI image, video, and protein generators, in theory and practice. We include: 📺 Videos: step-by-step derivations. 📝 Notes: mathematically self-contained lecture notes. 💻 Coding: hands-on exercises for every component. We fully improved last year's iteration and added new topics: latent spaces, diffusion transformers, and building language models with discrete diffusion models. Everything is available here: diffusion.csail.mit.edu. A huge thanks to Tommi Jaakkola for his support in making this class possible and Ashay Athalye (MIT SOUL) for the incredible production! Was fun to do this with @RShprints! #MachineLearning #GenerativeAI #MIT #DiffusionModels #AI

🚨 How do attention sinks relate to information flow in LLMs? We show how massive activations create attention sinks and compression valleys, revealing a three-stage theory of information flow in LLMs. 🧵 w/ Enrique* @fedzbar @epomqo @mmbronstein @ylecun @ziv_ravid

📢 EEML2026 🇲🇪 is now accepting applications! Check the website for instructions and see the amazing speakers confirmed so far (links in thread). Deadline for applications: March 31, 2026! Join us in beautiful Cetinje! 🎉🇲🇪⛰️